Official statement

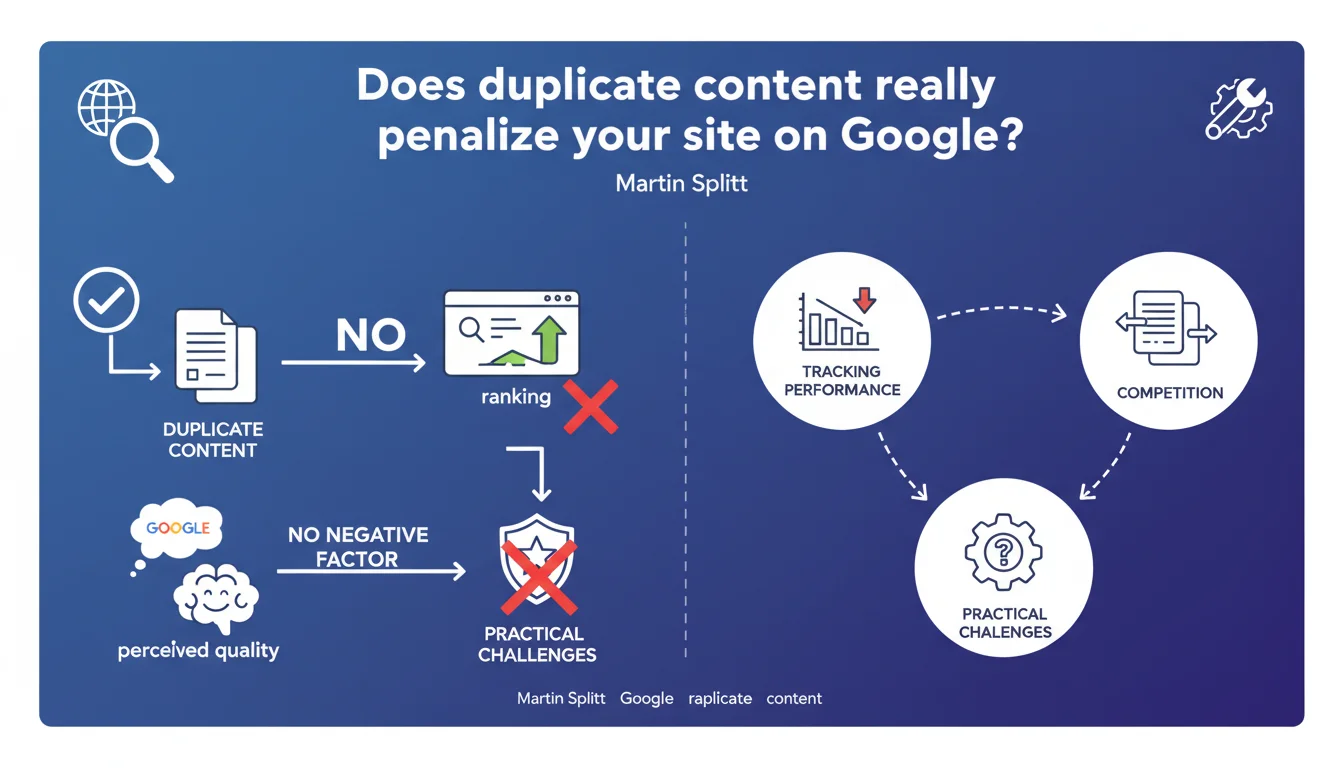

Google states that duplicate content does not negatively affect the perceived quality of a site or its rankings. However, it complicates performance tracking and creates cannibalization between similar pages that can dilute your visibility.

What you need to understand

What exactly does Google mean by "duplicate content"?

Google refers to identical or very similar content present on multiple URLs, whether within the same domain or on different sites. Contrary to popular belief, this does not trigger any algorithmic penalty.

The search engine handles this situation by filtering duplicates when displaying results. A single version is generally selected to be shown, while others are excluded — but not penalized.

Why does Martin Splitt emphasize the absence of impact on perceived quality?

Because for years, the SEO community believed — and some still believe — that having duplicate content would reduce the trust that Google places in a site. This is false.

Google does not consider duplicate content as a spam signal or low-quality indicator. It treats it as a technical problem of URL selection, not as a content defect.

What are the real risks of duplicate content then?

The problem is not algorithmic, it is operational. When multiple similar pages exist, Google must choose which one to display. And this choice is not always the one you would prefer.

Result: you lose control. Your SEO efforts are scattered, link equity from your backlinks is split across several URLs, and performance tracking in your analytics becomes a headache.

- Duplicate content does not trigger a penalty and is not a negative ranking factor

- It complicates performance tracking and dilutes SEO signals

- Google filters duplicates but does not always choose the canonical version you prefer

- Cannibalization between similar pages reduces their individual visibility

- Backlinks pointing to different versions of the same content spread link equity across URLs instead of concentrating it on one

SEO Expert opinion

Is this statement consistent with what we observe in practice?

Yes, overall. We regularly observe that sites with a lot of duplicate content are not systematically penalized. E-commerce stores with identical product sheets, content aggregators, multilingual sites: none of them are hit by a manual action for this reason.

Problems start when duplication prevents Google from understanding which page to index. In that situation we see position fluctuations, URLs that swap places in the SERPs, and sometimes the wrong URL ranking. The symptom is not a sudden drop but persistent underperformance.

What nuances should be added to this statement?

Saying that duplication does not affect perceived quality is true in absolute terms. But in certain edge cases, it can indirectly work against you.

Take a site that massively scrapes content from elsewhere. Technically, Google does not penalize it for duplication. But the lack of original and useful content can trigger other negative signals, particularly those related to E-E-A-T criteria or the Helpful Content System. At that point the issue is no longer duplication itself but a lack of added value.

Another case: if your site is mostly composed of duplicate pages with little unique content, Google may spend less crawl budget on it. This is not a penalty, just lower prioritization. [To verify]: Google has never clarified the exact threshold where this becomes problematic.

In which cases does this rule not apply completely?

When duplication results from deliberate manipulation intended to create multiple artificial entry points, Google may treat it as spam. This is no longer simple technical duplication.

Similarly, if you systematically republish content from other sites without authorization or added value, you risk a manual action for thin content or scraping. The action targets the guideline violation, not the duplication per se.

Practical impact and recommendations

What should you do concretely to manage duplicate content?

First, identify duplicates on your site. Use a crawler (Screaming Frog, Sitebulb, OnCrawl) to detect identical or near-identical content. Focus on strategic pages, not minor footer duplicates.
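As a rough illustration of what a crawler's near-duplicate report does, the sketch below compares pages by word shingles and Jaccard similarity. The `pages` dictionary, the shingle size of 3, and the 0.8 threshold are assumptions chosen for the example; they are not values used by Google or by any of the tools named above.

```python
import re
from itertools import combinations


def shingles(text: str, size: int = 3) -> set[tuple[str, ...]]:
    """Return the set of word n-grams (shingles) found in a text."""
    words = re.findall(r"\w+", text.lower())
    if len(words) < size:
        return {tuple(words)} if words else set()
    return {tuple(words[i:i + size]) for i in range(len(words) - size + 1)}


def jaccard(a: set, b: set) -> float:
    """Jaccard similarity: shared shingles divided by all distinct shingles."""
    if not a and not b:
        return 1.0
    return len(a & b) / len(a | b)


def find_near_duplicates(pages: dict[str, str], threshold: float = 0.8):
    """Return (url_a, url_b, score) for page pairs at or above the threshold."""
    sets = {url: shingles(text) for url, text in pages.items()}
    pairs = []
    for (url_a, set_a), (url_b, set_b) in combinations(sets.items(), 2):
        score = jaccard(set_a, set_b)
        if score >= threshold:
            pairs.append((url_a, url_b, round(score, 2)))
    return pairs


# Hypothetical crawl output: URL -> extracted main text.
pages = {
    "https://example.com/shoes": "Red running shoes with a light foam sole",
    "https://example.com/shoes?sort=price": "Red running shoes with a light foam sole",
    "https://example.com/boots": "Waterproof leather boots for winter hiking",
}
duplicates = find_near_duplicates(pages)
```

The pairwise comparison is quadratic in the number of pages, so this only suits a shortlist of strategic pages; a full-site audit would rely on the crawler's own duplicate detection.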

Next, choose a consolidation strategy. If multiple URLs present the same content, determine which one should be visible and redirect the others with a 301. If the pages must coexist (filters, product variants), use the canonical tag to indicate your preference.
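The decision described above can be written down as a small planning step. This is a minimal sketch: `consolidation_plan`, `canonical_tag`, and the example URLs are hypothetical names introduced for illustration, and the output is only a plan to hand to whoever configures the server or templates.

```python
from html import escape


def canonical_tag(preferred_url: str) -> str:
    """Build the <link rel="canonical"> element for a page's <head>."""
    return f'<link rel="canonical" href="{escape(preferred_url, quote=True)}">'


def consolidation_plan(preferred: str, duplicates: list[str], must_coexist: set[str]):
    """Map each duplicate URL to either a 301 target or a canonical tag.

    URLs listed in must_coexist (filters, product variants) stay live and
    declare the preferred URL as canonical; every other duplicate is
    redirected to the preferred URL.
    """
    plan = {}
    for url in duplicates:
        if url == preferred:
            continue  # the preferred URL is never redirected
        if url in must_coexist:
            plan[url] = ("canonical", canonical_tag(preferred))
        else:
            plan[url] = ("301", preferred)
    return plan


plan = consolidation_plan(
    preferred="https://example.com/shoes",
    duplicates=[
        "https://example.com/shoes-old",
        "https://example.com/shoes?color=red",
    ],
    must_coexist={"https://example.com/shoes?color=red"},
)
```

Here the retired URL gets a 301 to the preferred page, while the color variant stays accessible and carries a canonical tag.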

For content that is syndicated or republished elsewhere, make sure the original version is indexed first. Publish on your own site before any external distribution, and ask partners to include a canonical pointing to your URL.

What mistakes should you absolutely avoid?

Never block duplicate pages via robots.txt. Google needs to crawl them to recognize that they are identical and to read the canonical they declare. Blocking them prevents both.
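One way to check for this mistake is to test your known duplicate URLs against your robots.txt rules. The sketch below uses Python's standard `urllib.robotparser`; the robots.txt content and URLs are made up for the example. Note that this parser does simple prefix matching and does not necessarily reproduce Googlebot's handling of wildcards, so treat the result as a first pass rather than a definitive answer.

```python
from urllib.robotparser import RobotFileParser


def blocked_urls(robots_txt: str, urls: list[str], user_agent: str = "Googlebot") -> list[str]:
    """Return the URLs that the given robots.txt rules disallow for user_agent."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [url for url in urls if not parser.can_fetch(user_agent, url)]


# Hypothetical robots.txt that hides printer-friendly duplicates from crawlers.
robots_txt = """\
User-agent: *
Disallow: /print/
"""

duplicate_urls = [
    "https://example.com/article",
    "https://example.com/print/article",
]
blocked = blocked_urls(robots_txt, duplicate_urls)
```

Any URL returned here is a duplicate that crawlers cannot fetch, which means the canonical it declares will never be seen.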

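Avoid chained, circular, or contradictory canonicals, which are a frequent source of confusion for the search engine. Page A pointing to B, which itself points to C, is a chain; A pointing to B while B points back to A is a loop. Either is a good way to lose control over which URL is selected.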
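Chains and loops are easy to detect once you have a mapping of each URL to the canonical it declares, for example exported from a crawl. A minimal sketch, assuming a hypothetical `canonicals` dictionary with relative paths for brevity:

```python
def resolve_canonical(url: str, canonicals: dict[str, str]):
    """Follow declared canonicals from url.

    Returns (final_url, path, status) where status is:
      "ok"    - the URL is self-canonical or one hop from its canonical
      "chain" - two or more hops were needed (A -> B -> C)
      "loop"  - the declarations cycle back to a URL already visited
    URLs missing from the mapping are treated as self-canonical.
    """
    path = [url]
    seen = {url}
    current = url
    while True:
        target = canonicals.get(current, current)
        if target == current:
            status = "ok" if len(path) <= 2 else "chain"
            return current, path, status
        if target in seen:
            return None, path + [target], "loop"
        path.append(target)
        seen.add(target)
        current = target


# Hypothetical crawl export: URL -> canonical declared on that URL.
canonicals = {
    "/a": "/b", "/b": "/c", "/c": "/c",  # chain: /a -> /b -> /c
    "/x": "/y", "/y": "/x",              # loop:  /x -> /y -> /x
    "/p": "/p",                          # self-canonical
}
chain_result = resolve_canonical("/a", canonicals)
loop_result = resolve_canonical("/x", canonicals)
```

Every URL reported as "chain" should have its canonical updated to point directly at the final URL, and every "loop" needs a decision about which page is the preferred one.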

Don't spread yourself thin chasing every micro-duplicate. Focus on pages with traffic or SEO potential. Excessive perfectionism wastes time without measurable ROI.

How do you verify that your duplicate management is effective?

In Search Console, monitor the URLs excluded as duplicates. If important pages appear in this category, there is a canonicalization issue to fix.

Use the URL inspection tool to check which version Google considers canonical. If it's not the one you defined, dig deeper: ignored canonical, contradictory signals, or simply Google deciding otherwise.

Monitor your positions and traffic by group of similar pages. If you notice cannibalization (multiple URLs alternating in the SERPs for the same query), it is a sign that consolidation is needed.
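Cannibalization candidates can be spotted from a query-by-page performance export. The sketch below assumes rows of `(query, page, impressions)` and flags queries where more than one URL takes a meaningful share of impressions. The row format and the 20% `min_share` cutoff are assumptions for the example, not an official definition.

```python
from collections import defaultdict


def find_cannibalization(rows, min_share: float = 0.2) -> dict[str, list[str]]:
    """Return {query: [pages]} for queries where several pages compete.

    A page counts as competing when it receives at least min_share of the
    query's total impressions.
    """
    by_query = defaultdict(lambda: defaultdict(int))
    for query, page, impressions in rows:
        by_query[query][page] += impressions

    flagged = {}
    for query, pages in by_query.items():
        total = sum(pages.values())
        if total == 0:
            continue
        competing = sorted(
            page for page, count in pages.items() if count / total >= min_share
        )
        if len(competing) > 1:
            flagged[query] = competing
    return flagged


# Hypothetical performance export: (query, page, impressions).
rows = [
    ("red shoes", "/shoes", 600),
    ("red shoes", "/shoes-sale", 400),
    ("boots", "/boots", 900),
    ("boots", "/blog/boots", 50),
]
flagged = find_cannibalization(rows)
```

In this sample only "red shoes" is flagged: two URLs split its impressions 60/40, whereas the blog post barely registers for "boots".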

- Regularly crawl your site to identify duplicate content

- Define a clear canonical URL for each group of similar pages

- 301-redirect unnecessary duplicates to the main version

- Use the canonical tag on variants that must coexist

- Never block duplicates via robots.txt

- Verify in Search Console that Google respects your canonicals

- Monitor position fluctuations that may indicate cannibalization

- Publish your original content before any external syndication

❓ Frequently Asked Questions

Does Google really penalize sites with duplicate content?

Is the canonical tag enough to solve every duplication problem?

Should I block duplicate pages in robots.txt?

How can I tell whether my site suffers from SEO cannibalization?

Can syndicated or republished content harm my SEO?

Source: Google Search Central video, published on 12/11/2024.
