Official statement

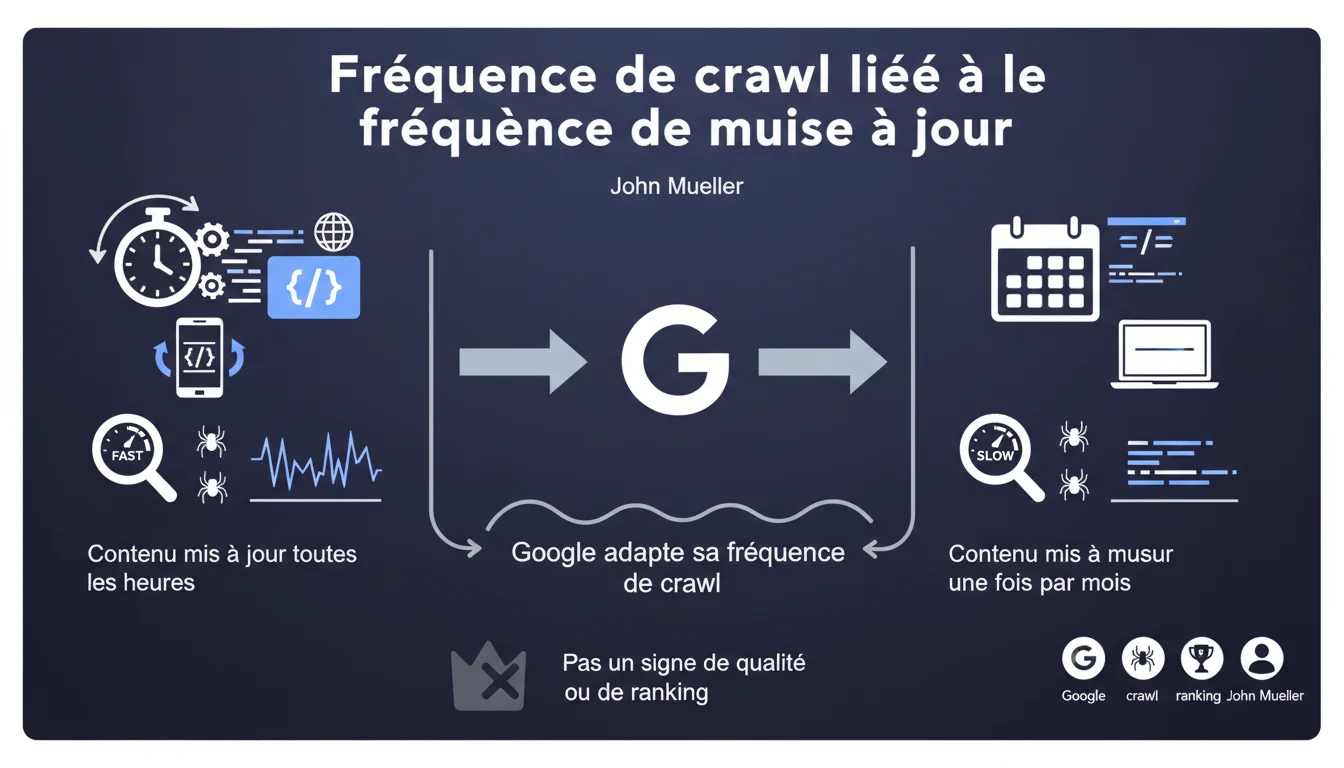

Google automatically adjusts its crawl frequency based on how often a site updates its content. The more regularly you publish, the more often Googlebot visits. However, be careful: this is not a direct ranking factor, just a logistical adjustment.

What you need to understand

Why does Google adjust its crawl frequency according to the publication rhythm?

Googlebot doesn't have time to waste. Its resources are limited, and it has to scan billions of pages every day. The crawl algorithm learns to optimize its visits: a site that publishes every hour deserves frequent visits, while a site that remains dormant for months can wait.

It's a question of operational efficiency. If Googlebot detects that your content rarely changes, it will space out its visits and allocate crawl budget where it's really needed. The reverse also holds: a high update frequency signals to the bot that it should return regularly to capture the latest changes.

Does this mean that a site crawled often ranks better?

No. And it's crucial to understand the nuance.

Mueller is clear: this is not a quality signal. Being crawled frequently does not mean that Google favors you more or that your pages are going to climb in the SERPs. It’s just that the bot is adapting its logistics to your editorial habits. A news site crawled every hour does not necessarily have more authority than a technical blog updated once a quarter.

What is the logic behind this automatic adjustment?

Google aims to maximize the freshness of its index while minimizing resource waste. If a site publishes daily but Googlebot only visits once a week, the index will become outdated. Conversely, if the bot visits a site that never changes three times a day, that’s wasted crawl budget.

The algorithm learns through observation. It detects your publication patterns, adjusts its visit frequency accordingly, and reallocates saved resources to other sites. It’s a self-learning system that continuously adapts.
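To make the idea of "learning through observation" concrete, here is a toy model of an adaptive revisit schedule. It is a minimal sketch, not Google's algorithm, which is not public: the halve/double rule, the function names, and the 1-hour and 720-hour bounds are all assumptions chosen for illustration.

```python
def next_interval(current_hours: float, changed: bool,
                  min_hours: float = 1.0, max_hours: float = 720.0) -> float:
    """Toy rule (assumption): revisit sooner after a change, later otherwise."""
    factor = 0.5 if changed else 2.0
    # Clamp so the interval never drops below 1 hour or exceeds 30 days.
    return max(min_hours, min(max_hours, current_hours * factor))


def simulate(observations: list[bool], start_hours: float = 24.0) -> list[float]:
    """Return the revisit interval chosen after each simulated visit."""
    intervals = []
    interval = start_hours
    for changed in observations:
        interval = next_interval(interval, changed)
        intervals.append(interval)
    return intervals


if __name__ == "__main__":
    print(simulate([True] * 5))   # site that changed on every visit
    print(simulate([False] * 5))  # site that never changed
```

In this sketch, a site that changes on every visit converges toward the minimum interval, while a dormant site drifts toward the maximum. Nothing in the rule looks at quality, which matches the point that this is a logistical adjustment only.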

- Crawl frequency ≠ quality or ranking

- The adjustment is automatic and based on the observation of update patterns

- A site updated every hour will be crawled much more frequently than a site updated monthly

- It's a matter of operational efficiency, not algorithmic favoritism

SEO Expert opinion

Is this statement consistent with what we observe on the ground?

Absolutely. Server logs don't lie: news sites with multiple publications per hour see Googlebot arriving continuously, while static showcase sites receive a few visits per week. Nothing surprising.

But, and this is where it gets interesting, some SEOs conclude that they must publish at all costs to 'stay on Google's radar.' This is a dangerous interpretation. Mueller hammers the point home: crawl frequency ≠ ranking. Publishing daily won't save you if the content itself is mediocre.

What nuances should be added to this rule?

First point: crawl frequency also depends on the authority of the site. A trusted domain with few publications may well be crawled more often than a new site that publishes frantically. The update rhythm is one factor among others.

Second point: beware of the over-publication trap. If you update your pages every hour with cosmetic changes (changing the date, adding a word), Googlebot may pick up on it and could reduce its visit frequency once it detects that the updates are insignificant. This remains to be verified, but observed cases suggest that Google is learning to distinguish real updates from false signals.
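On the publisher side, one way to avoid sending such false signals is to bump a page's modification date only when the text has really changed. The sketch below is one possible heuristic, not Google's detection method: the date pattern, the 0.95 similarity threshold, and the function names are assumptions.

```python
import difflib
import re

# Assumption: dates appear as YYYY-MM-DD or D/M/YYYY; adapt to your templates.
DATE_PATTERN = re.compile(r"\b\d{4}-\d{2}-\d{2}\b|\b\d{1,2}/\d{1,2}/\d{4}\b")


def normalize(text: str) -> str:
    """Drop dates and collapse whitespace so date-only edits compare equal."""
    without_dates = DATE_PATTERN.sub("", text)
    return " ".join(without_dates.split()).lower()


def is_substantive_change(old: str, new: str, threshold: float = 0.95) -> bool:
    """True when the normalized word sequences are less than `threshold` similar."""
    old_words = normalize(old).split()
    new_words = normalize(new).split()
    ratio = difflib.SequenceMatcher(None, old_words, new_words).ratio()
    return ratio < threshold
```

A CMS hook could call `is_substantive_change` before updating a "last modified" field. On short texts a single added word can already fall below the threshold, so the value needs tuning against your own content.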

In what cases does this rule not really apply?

On very large sites (think millions of pages), crawl frequency is also dictated by technical constraints. Even if you publish every hour, Googlebot won't crawl your entire site continuously. It will prioritize certain sections (homepage, main categories) and neglect deeper pages.

Another case: sites with recurring technical issues (slow response times, frequent 5xx errors) will see their crawl budget reduced, regardless of their publication frequency. Googlebot slows down when a server struggles, in part to avoid overloading it.

Practical impact and recommendations

What should you do concretely if you want to optimize your crawl frequency?

Let’s be honest: if your goal is to be crawled more often, the only real solution is to publish substantial content regularly. Not micro-adjustments, not artificially modified dates. Real content.

But, and this is crucial, first ask yourself: do you really need a high crawl frequency? If you manage an e-commerce site whose stock levels fluctuate, yes. If you run a corporate blog with two articles per month, no. No need to force it.

What errors should you absolutely avoid?

Error #1: believing that by publishing mediocre content daily, you'll climb the SERPs. Crawl frequency does not determine ranking. You may be crawled more often, but if your content is bad, it won’t change anything.

Error #2: artificially modifying your pages (changing the date, adding a word) to simulate an update. Google appears able to detect these patterns and could reduce your crawl budget if it determines that your updates are cosmetic.

Error #3: neglecting technical aspects (server speed, 5xx errors, poorly configured robots.txt) thinking that publication frequency is sufficient. A slow or unstable site will be crawled less often, regardless of its editorial rhythm.
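A quick sanity check for the robots.txt part of Error #3 is to test whether your key URLs are blocked for Googlebot. The sketch below uses Python's standard `urllib.robotparser`. The helper name `blocked_urls` and the example rules and URLs are hypothetical, and the standard-library parser does plain prefix matching as far as I know, so it may not reproduce Google's handling of wildcards such as `*` and `$`.

```python
from urllib.robotparser import RobotFileParser


def blocked_urls(robots_txt: str, urls: list[str],
                 agent: str = "Googlebot") -> list[str]:
    """Return the URLs that the given robots.txt content disallows for `agent`."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [url for url in urls if not parser.can_fetch(agent, url)]


if __name__ == "__main__":
    # Hypothetical rules: the /blog/ line would silently hide new articles.
    rules = "User-agent: *\nDisallow: /search\nDisallow: /blog/\n"
    pages = [
        "https://example.com/blog/new-post",
        "https://example.com/products/1",
        "https://example.com/search?q=x",
    ]
    for url in blocked_urls(rules, pages):
        print("blocked:", url)
```

Treat the result as a first pass only, and confirm anything surprising against the robots.txt report in Search Console.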

How can I check if my site is being crawled effectively?

Consult your server logs. They are the most reliable source of truth. Check the frequency of Googlebot visits, the sections crawled, and the errors returned. Search Console provides insights, but nothing beats raw log analysis.
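The three checks just listed (visit frequency, sections crawled, errors) can be sketched in a few lines of Python. This assumes the common "combined" access log format used by Apache and Nginx; the regular expression and the function name `summarize_googlebot` are illustrative and will need adapting if your log format differs. It also matches on the user-agent string only, which can be spoofed, so confirm real Googlebot traffic with a reverse DNS lookup before drawing firm conclusions.

```python
import re
from collections import Counter

# Assumed "combined" log format: IP, identity, user, [time], "request", status, size, "referer", "agent".
LOG_LINE = re.compile(
    r'\S+ \S+ \S+ \[(?P<day>[^:]+):[^\]]+\] '
    r'"(?:\S+) (?P<path>\S+) [^"]*" (?P<status>\d{3}) \S+ '
    r'"[^"]*" "(?P<agent>[^"]*)"'
)


def summarize_googlebot(lines):
    """Count Googlebot hits per day, per top-level section, and per 5xx status."""
    per_day, per_section, errors = Counter(), Counter(), Counter()
    for line in lines:
        match = LOG_LINE.match(line)
        if not match or "Googlebot" not in match["agent"]:
            continue
        per_day[match["day"]] += 1
        # "/blog/post-1" -> "/blog"; "/" -> "/"; "/search?q=x" -> "/search"
        first_segment = match["path"].lstrip("/").split("/", 1)[0].split("?", 1)[0]
        per_section["/" + first_segment] += 1
        if match["status"].startswith("5"):
            errors[match["status"]] += 1
    return per_day, per_section, errors
```

Typical usage would be `summarize_googlebot(open("access.log", encoding="utf-8", errors="replace"))`, where `access.log` stands for wherever your server writes its logs.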

If you notice a gap between your publication frequency and the crawl frequency, first look for a technical problem. Response time? Server errors? Crawl budget wasted on unnecessary pages (infinite pagination, unrestricted faceted navigation)?
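One way to quantify that gap is to measure, for each new URL, how long it took Googlebot to request it for the first time. The sketch below assumes you already have two mappings: publication times from your CMS and first-crawl times extracted from your logs. The function name and the data shapes are hypothetical.

```python
from datetime import datetime
from typing import Dict, Optional


def crawl_lag_hours(published: Dict[str, datetime],
                    first_crawled: Dict[str, datetime]) -> Dict[str, Optional[float]]:
    """Hours between publication and first Googlebot hit; None if never crawled."""
    lags: Dict[str, Optional[float]] = {}
    for url, published_at in published.items():
        seen_at = first_crawled.get(url)
        if seen_at is None:
            lags[url] = None
        else:
            lags[url] = (seen_at - published_at).total_seconds() / 3600
    return lags
```

URLs that come back as `None`, or with a lag far above the rest of the site, are the ones to inspect first for slow responses, server errors, or weak internal linking.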

- Publish substantial content at a regular pace, without forcing it if it doesn't align with your strategy

- Don't fake updates: Google appears to be learning to distinguish real updates from false signals

- Analyze your server logs to understand actual crawl patterns

- Optimize technical aspects: server speed, errors, robots.txt, crawl budget

- Don’t confuse crawl frequency with ranking: one does not imply the other

❓ Frequently Asked Questions

Will increasing my publication frequency improve my ranking?

How can I find out how often Googlebot visits my site?

If I artificially modify my pages to simulate an update, will Google crawl more often?

Is a news site favored over a static site in terms of ranking?

Do I need to publish daily to maximize my chances of ranking well?

🎥 From the same video 16

Other SEO insights extracted from this same Google Search Central video · published on 09/01/2022
