Official statement

Other statements from this video 16 ▾

- □ Faut-il publier plus souvent pour être crawlé plus régulièrement par Google ?

- □ Faut-il vraiment s'inquiéter de la duplication de contenu interne ?

- □ Le contenu récent bénéficie-t-il vraiment d'un boost de ranking automatique ?

- □ Le hreflang fonctionne-t-il vraiment page par page et non pour tout un site ?

- □ Comment Google mesure-t-il réellement la Page Experience dans son algorithme ?

- □ Chrome et Analytics influencent-ils vraiment le classement Google ?

- □ Le hreflang modifie-t-il vraiment le ranking ou se contente-t-il de permuter les URLs ?

- □ Faut-il vraiment choisir entre redirection 301 et canonical pour une migration ?

- □ Top Stories sans AMP : faut-il encore optimiser la vitesse de vos pages ?

- □ Search Console compte-t-elle vraiment toutes vos impressions SEO ?

- □ Les URLs découvertes en JavaScript gaspillent-elles vraiment votre crawl budget ?

- □ Le nofollow empêche-t-il vraiment l'indexation d'une page ?

- □ Pourquoi Google refuse-t-il d'indexer certaines pages de votre site ?

- □ Faut-il supprimer les pages à faible trafic pour améliorer son SEO ?

- □ Les erreurs de balisage breadcrumb entraînent-elles une pénalité Google ?

- □ Le contenu unique booste-t-il vraiment le ranking global d'un site ?

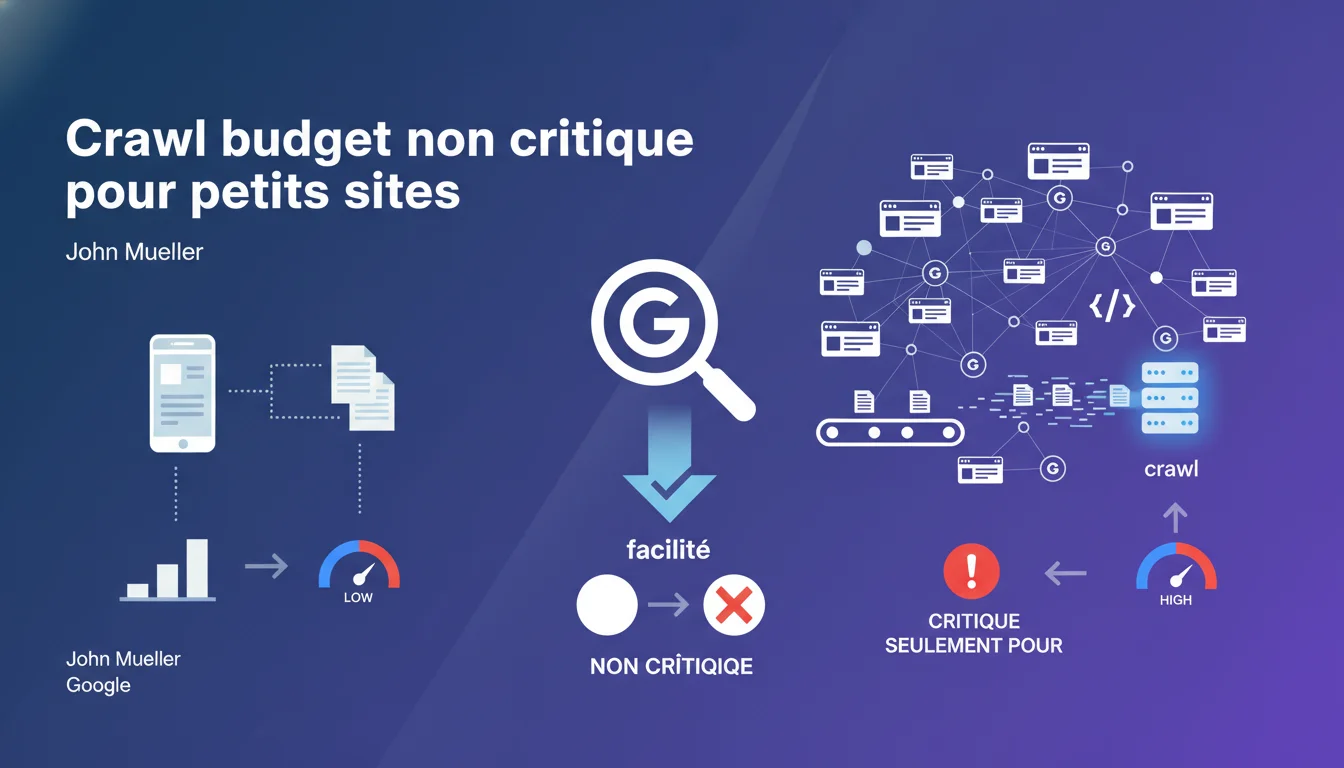

Google claims that crawl budget is not a limiting factor for sites publishing up to 10,000 pages per day. This concept only becomes relevant when dealing with several million pages daily. Therefore, most sites can ignore this issue.

What you need to understand

What does Google mean by 'crawl budget'? <\/h3>

The crawl budget refers to the amount of resources that Googlebot allocates to crawl a site over a given period. It is a balance between the server's capacity to respond and Google's interest in the content.<\/p>

Mueller sets a clear threshold here: 10,000 pages per day. Below this, Google considers that its infrastructure can handle the volume without issues. The limit does not come from the engine but potentially from the quality of content or the site's architecture.<\/p>

Why this threshold of 10,000 daily pages? <\/h3>

This figure is not arbitrary. It reflects Google's current crawl power, capable of massively processing content. For most e-commerce, media, or corporate sites, even with variations in product sheets or articles, this volume remains unattainable.<\/p>

Only massive aggregators, giant marketplaces, or automatically generated sites reach these magnitude orders. For them, the issue becomes real: prioritize high-value URLs and avoid wasting time on duplicated or obsolete content.<\/p>

What does this mean for standard sites? <\/h3>

This statement frees SEOs from the obsession with crawl budget for 99% of projects. There's no need to over-optimize robots.txt files or aggressively block entire sections for fear of "wasting" the budget.<\/p>

Energy should focus elsewhere: quality of internal linking, content relevance, user experience. The crawl will naturally follow if the architecture is healthy and the content valuable.<\/p>

- The crawl budget is not an issue for sites under 10,000 pages/day

- Google can easily crawl these volumes with its current infrastructure

- The limit only becomes real for millions of daily pages

- For the majority of sites, crawl issues stem from architecture or quality, not budget

- No need to aggressively block sections in robots.txt for budget concerns <\/ul>

SEO Expert opinion

Does this statement align with real-world observations? <\/h3>

In my practice, this statement largely holds true. Sites experiencing real crawl issues rarely suffer from a pure budget deficit. The causes are almost always structural: poorly managed infinite pagination, explosive URL parameters, mass duplicate content.<\/p>

However — and this is where Mueller simplifies — crawl budget is not a binary concept. A site can technically be fully crawled in a month, but if Google only visits certain sections every two weeks, the indexation of fresh content slows down mechanically. The budget exists, but it doesn’t manifest as a strict wall.<\/p>

What nuances should be added to this rule of 10,000 pages? <\/h3>

The figure of 10,000 daily pages is an indicative average, not an absolute law. A site with weak authority, poor server response times, or a history of mediocre content will see its crawl limited well before this threshold. [To be verified]: Google has never published a precise correlation between domain authority and crawl allocation.<\/p>

Conversely, a respected site with a solid infrastructure can exceed these volumes without friction. Context matters as much as the raw number. Don’t take this statement as a free pass to neglect your architecture simply because "Google can crawl everything".<\/p>

When does this rule not apply? <\/h3>

Sites with massive dynamic generation — infinite search facets, unmoderated UGC content, gigantic historical archives — may encounter limits even under 10,000 pages/day if the average quality is poor. Google adjusts its crawl based on the signal-to-noise ratio.<\/p>

Practical impact and recommendations

What should you do if you publish less than 10,000 pages per day? <\/h3>

Stop over-optimizing crawl budget. This obsession diverts attention from the real levers: logical architecture, loading times, content quality. If your site publishes 50, 500, or even 5,000 pages daily, Google will crawl them without issues — provided they are worthy of being crawled.<\/p>

Focus on internal linking. Important pages should be accessible within a few clicks from the homepage. Orphaned sections or ones buried 10 levels deep won’t be crawled regularly, not due to a lack of budget but because Google can’t easily find them.<\/p>

What mistakes should you avoid despite this reassuring statement? <\/h3>

Don’t confuse "Google can crawl" with "Google will index". Crawling is a necessary condition, not sufficient. Pages that are crawled but deemed duplicate, thin, or worthless will remain out of the index. The issue is not the crawl volume but the quality of what gets crawled.<\/p>

Also, avoid instinctively blocking entire sections in robots.txt under the pretext of saving budget. You risk depriving Google of useful context to understand your site. Let the engine decide, except for genuinely unnecessary elements (admin, technical duplicates, session parameters).<\/p>

How can you verify that your crawl is going smoothly? <\/h3>

Analyze your server logs over 30 days. If Googlebot regularly visits your new pages and revisits updated sections, everything is fine. If certain strategic URLs are never crawled, look for the problem in the architecture or linking, not in a hypothetical budget limit.<\/p>

In Search Console, monitor the index coverage report. Pages that are "Discovered, currently not indexed" often indicate a perceived quality problem, not a crawl issue. Google has seen them; it just decided they add no value.<\/p>

- Prioritize architecture and internal linking rather than crawl budget optimization

- Ensure strategic pages are accessible within 3-4 clicks maximum from the homepage

- Analyze your server logs to identify actual crawl patterns

- Do not block by default in robots.txt — let Google decide unless there's a manifest case

- Monitor Search Console for instances of crawled but not indexed pages (quality signal)

- Optimize server response times to facilitate crawling, even if the budget isn't limiting

- Avoid crawl traps: infinite pagination, explosive URL parameters, duplicate content <\/ul>

❓ Frequently Asked Questions

Mon site publie 200 pages par mois, dois-je m'inquiéter du crawl budget ?

Si Google peut crawler 10 000 pages par jour, pourquoi certaines de mes pages ne sont-elles pas indexées ?

Faut-il quand même optimiser mon fichier robots.txt ?

Les sites e-commerce avec variations produits sont-ils concernés par ce seuil de 10 000 pages ?

Comment savoir si mon site a un problème de crawl réel ?

🎥 From the same video 16

Other SEO insights extracted from this same Google Search Central video · published on 09/01/2022

🎥 Watch the full video on YouTube →Related statements

Get real-time analysis of the latest Google SEO declarations

Be the first to know every time a new official Google statement drops — with full expert analysis.

💬 Comments (0)

Be the first to comment.