Official statement

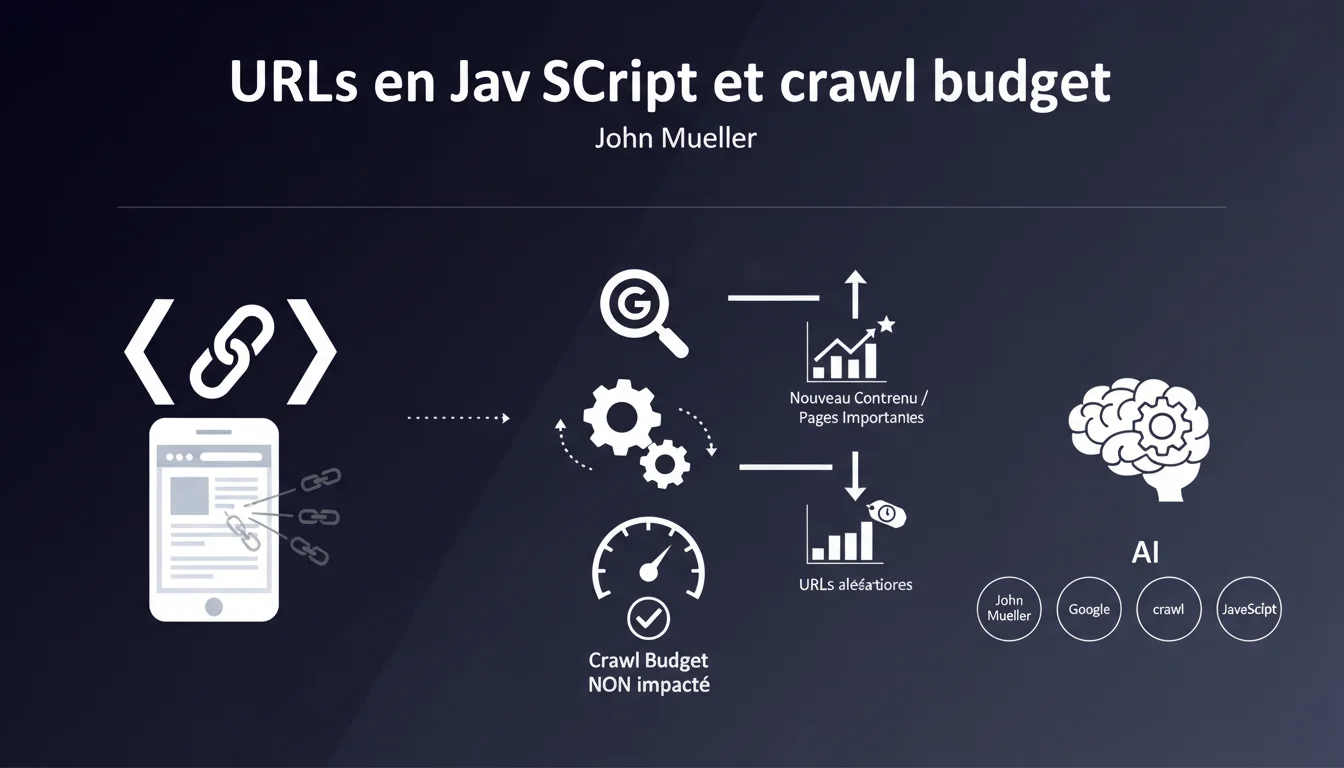

Google states that URLs discovered through JavaScript, or mentioned in passing somewhere in a page's code, do not negatively impact the crawl budget. These URLs receive low priority because the engine favors new content and important pages. So, don't panic if your JavaScript generates secondary URLs.

What you need to understand

Why does Google distinguish between JavaScript URLs and traditional URLs?

Google prioritizes URLs before it crawls them. According to the statement, URLs discovered in JavaScript code (often from dynamic components, third-party scripts, or frameworks) are given low priority and wait behind new content and important pages.

This prioritization is based on a simple observation: these URLs are rarely strategic pages. They may be technical artifacts, automatically generated links, or references without direct SEO value. Google therefore prefers to invest its crawling time on more reliable signals.

What does Mueller mean by "randomly mentioned URLs"?

This refers to URLs that appear without a logical structure in your code — for example, in JavaScript comments, debug logs, or misconfigured data-* attributes. These mentions create noise in the link graph without providing editorial value.

Google has learned to filter this noise. The engine now distinguishes between intentional links (navigation, structured internal linking) and incidental technical references. As a result, the latter do not drain the crawl resources allocated to your site.

Is the crawl budget really protected in all cases?

Mueller's statement implies automatic protection, but it remains vague on volumes. If your JavaScript generates tens of thousands of junk URLs, the impact remains to be measured concretely in your context.

- Active prioritization: Google ranks URLs by importance before crawling

- Noise filtering: Random URLs or those without editorial value are deprioritized

- No direct negative impact: These discoveries do not consume the budget allocated to strategic pages

- JavaScript = long queue: JS URLs are queued after standard HTML content and high-value pages
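To make the four points above concrete, here is a toy model of a prioritized crawl queue. It is only an illustration of the idea of deprioritization, not Google's actual scheduler: the priority levels, the `CrawlQueue` class, and the sample URLs are all hypothetical.

```python
import heapq
from dataclasses import dataclass, field

# Hypothetical priority levels (lower number = crawled sooner).
PRIORITY = {"new_content": 0, "html_link": 1, "javascript": 2, "random_mention": 3}


@dataclass(order=True)
class QueuedUrl:
    priority: int
    order: int                      # insertion order breaks ties
    url: str = field(compare=False)


class CrawlQueue:
    """Toy queue: low-priority URLs stay queued but never jump ahead."""

    def __init__(self):
        self._heap = []
        self._counter = 0

    def add(self, url, source):
        heapq.heappush(self._heap, QueuedUrl(PRIORITY[source], self._counter, url))
        self._counter += 1

    def crawl_order(self):
        ordered = []
        while self._heap:
            ordered.append(heapq.heappop(self._heap).url)
        return ordered


queue = CrawlQueue()
queue.add("/assets/debug?id=42", "random_mention")
queue.add("/widget/state/7", "javascript")
queue.add("/blog/new-article", "new_content")
queue.add("/category/shoes", "html_link")
order = queue.crawl_order()
print(order)
```

In this model the junk URLs are discovered first but still come out last, which is the behavior the statement describes: they wait, they do not push strategic pages back.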

SEO Expert opinion

Does this statement align with field observations?

In most cases, yes. The sites I audit show no direct correlation between the volume of discovered JavaScript URLs and a slowdown in crawling on strategic pages. Server logs confirm that Googlebot maintains a stable pace on priority URLs even when JS generates noise.

But this is where it gets tricky: the statement hides an important nuance, because everything depends on your starting crawl budget. On a small site with a limited budget, even intelligent prioritization can create indexing delays if you multiply junk URLs. Verify this on your own site with a precise log analysis.

What situations escape this rule?

Mueller mentions "random" URLs, but not all JavaScript links are equal. If your framework generates valid canonical URLs pointing to real content — for example, a SPA with client-side routing — Google treats them differently.

The real issue arises with hybrid sites: part in standard HTML, part in heavy JavaScript, without a clear separation. In this case, Google has to arbitrate between two architectures, and the signals get mixed. The "low priority" then becomes a waiting room for indexing where some pages wait for weeks.

Should you ignore the problem?

No. Even if Google manages prioritization, each discovered URL consumes processing resources — parsing, analysis of duplication, ranking attempts. On a low-authority site, this friction can delay the indexing of new strategic content.

And let's be honest: relying on Google’s intelligence to compensate for a shaky architecture is a risky strategy. It's better to design properly from the start than to cross your fingers hoping the algorithm will sort it out correctly.

Practical impact and recommendations

What should you do to optimize this situation?

Start by auditing your server logs to identify which JavaScript URLs Google actually discovers. Use a tool like Screaming Frog in JavaScript mode, then cross-reference with actual crawl data. You will quickly see if any junk patterns emerge.
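As a starting point for the log audit, the sketch below counts Googlebot requests per top-level URL section. It assumes access logs in the common "combined" format; the regular expression, the function name `googlebot_hits_by_section`, and the sample lines are illustrative, not taken from a real site. Matching on the user-agent string alone can be fooled by spoofed bots, so treat the counts as a first pass.

```python
import re
from collections import Counter

# Assumes the "combined" log format: quoted request line, status, size,
# quoted referrer, quoted user agent at the end of the line.
LOG_LINE = re.compile(
    r'"(?:GET|HEAD) (?P<path>\S+) HTTP/[\d.]+" (?P<status>\d{3}) .*"(?P<agent>[^"]*)"$'
)


def googlebot_hits_by_section(lines):
    """Count Googlebot requests per first path segment (e.g. /blog, /api)."""
    counts = Counter()
    for line in lines:
        match = LOG_LINE.search(line.strip())
        if not match or "Googlebot" not in match.group("agent"):
            continue
        path = match.group("path").split("?", 1)[0]   # drop the query string
        first_segment = path.strip("/").split("/", 1)[0]
        counts["/" + first_segment] += 1
    return counts


GOOGLEBOT = "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"
sample_log = [
    f'66.249.66.1 - - [09/Jan/2022:10:00:00 +0000] "GET /blog/post-1 HTTP/1.1" 200 5120 "-" "{GOOGLEBOT}"',
    f'66.249.66.1 - - [09/Jan/2022:10:00:05 +0000] "GET /api/widget/state?id=7 HTTP/1.1" 200 310 "-" "{GOOGLEBOT}"',
    f'66.249.66.1 - - [09/Jan/2022:10:00:09 +0000] "GET /api/widget/state?id=8 HTTP/1.1" 404 0 "-" "{GOOGLEBOT}"',
    '203.0.113.5 - - [09/Jan/2022:10:01:00 +0000] "GET /blog/post-1 HTTP/1.1" 200 5120 "-" "Mozilla/5.0 (X11; Linux x86_64)"',
]
hits = googlebot_hits_by_section(sample_log)
print(hits.most_common())
```

A section that collects many hits but contains no page you care about (here the made-up `/api` section) is a candidate junk pattern to compare against your JavaScript crawl.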

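Then, clean up at the source. If your framework generates unnecessary URLs, adjust the configuration or use robots.txt to block obvious patterns. For discovered URLs without value that remain crawlable, a noindex added via JavaScript works, provided Google renders the page and executes the script correctly. Do not combine the two on the same URL: if robots.txt blocks it, Google never fetches the page and therefore cannot see the noindex.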
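Before deploying new robots.txt rules, it can help to check them against known URLs. The sketch below uses Python's standard `urllib.robotparser`; the rules, the paths, and the helper `blocked_urls` are hypothetical. One caveat: to my knowledge the standard-library parser does plain prefix matching and does not implement the `*` and `$` wildcards that Google supports, so this check is only reliable for directory-style rules.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical rules blocking two junk directories.
ROBOTS_TXT = """\
User-agent: *
Disallow: /api/widget/
Disallow: /debug/
"""


def blocked_urls(robots_txt, urls, user_agent="Googlebot"):
    """Return the URLs that the given robots.txt disallows for user_agent."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [url for url in urls if not parser.can_fetch(user_agent, url)]


candidates = ["/api/widget/state?id=7", "/debug/log", "/blog/new-article"]
blocked = blocked_urls(ROBOTS_TXT, candidates)
print(blocked)
```

The important check is the negative one: make sure no strategic URL (here `/blog/new-article`) ends up in the blocked list.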

What mistakes should you absolutely avoid?

Do not confuse "low priority" with "ignored". Google may still crawl these URLs, just later. If they return 404s or server errors, those errors will still show up in your Search Console reports.

Another trap: believing that because Google filters noise, you can leave anything lying around. A clean technical site remains a signal of overall quality. Junk URLs, even deprioritized, dilute this signal.

- Analyze server logs to identify JavaScript URLs crawled by Googlebot

- Cross-reference this data with a JavaScript crawl of your site to spot junk patterns

- Block via robots.txt directories or patterns of URLs without SEO value

- Add noindex tags on non-strategic JavaScript pages if necessary

- Monitor Search Console to detect the massive appearance of discovered non-indexed URLs

- Optimize the HTML internal linking to strengthen signals on priority pages

- Test JavaScript rendering with the URL inspection tool to verify that Google sees what you expect
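The first two items of this checklist come down to a set comparison. The following sketch assumes you already have three lists of paths: those requested by Googlebot (from your logs), those found only by a JavaScript crawl, and those you consider strategic. The function name and all sample paths are hypothetical.

```python
def junk_candidates(googlebot_paths, js_discovered_paths, strategic_paths):
    """Paths that Googlebot requested, that come from JavaScript discovery,
    and that are not on the list of strategic pages."""
    crawled_js = set(googlebot_paths) & set(js_discovered_paths)
    return sorted(crawled_js - set(strategic_paths))


googlebot_paths = ["/blog/new-article", "/api/widget/state", "/debug/log", "/app/products"]
js_discovered_paths = ["/api/widget/state", "/debug/log", "/app/products", "/tmp/preview"]
strategic_paths = ["/blog/new-article", "/app/products"]

candidates = junk_candidates(googlebot_paths, js_discovered_paths, strategic_paths)
print(candidates)  # ['/api/widget/state', '/debug/log']
```

The output is a list of candidates to review by hand, not an automatic block list: a JavaScript-discovered URL can still be a real page, as with `/app/products` in this example.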

How can you check that your architecture follows best practices?

Use the URL inspection tool in Search Console on a few typical JavaScript pages. Compare the version crawled by Google with what your users see. If junk URLs appear in the crawled version, you have an architectural problem to fix.
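If you save both the raw HTML source and the rendered HTML of a page, the comparison can be partly automated. This is a minimal sketch using only the standard library; it assumes you obtained the two HTML strings yourself, and the class and function names are illustrative.

```python
from html.parser import HTMLParser


class LinkCollector(HTMLParser):
    """Collect the href value of every <a> tag."""

    def __init__(self):
        super().__init__()
        self.links = set()

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            for name, value in attrs:
                if name == "href" and value:
                    self.links.add(value)


def extract_links(html):
    collector = LinkCollector()
    collector.feed(html)
    return collector.links


def js_only_links(raw_html, rendered_html):
    """Links present after rendering but absent from the raw source."""
    return sorted(extract_links(rendered_html) - extract_links(raw_html))


raw = '<nav><a href="/blog/">Blog</a></nav>'
rendered = '<nav><a href="/blog/">Blog</a></nav><div><a href="/widget/state/7">state</a></div>'
extra = js_only_links(raw, rendered)
print(extra)  # ['/widget/state/7']
```

Links that exist only in the rendered version are the ones JavaScript adds. If they point to URLs without value, that is the architectural problem to fix at the source.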

Also, track the evolution of the "discovered/indexed" ratio in Search Console. A suddenly widening gap may signal that Google is massively discovering worthless URLs via JavaScript. This is an indicator to watch monthly.
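A simple way to watch this gap is to note the two counts each month and flag any sharp drop in the ratio. The sketch below assumes you record the figures by hand from your Search Console reports; the 10-point threshold, the function name, and the sample numbers are arbitrary illustrations, not recommended values.

```python
def widening_gaps(monthly_counts, threshold=0.10):
    """Flag months where the indexed/discovered ratio fell by more than
    `threshold` compared with the previous month.

    monthly_counts: list of (month, discovered, indexed) tuples.
    """
    alerts = []
    previous_ratio = None
    for month, discovered, indexed in monthly_counts:
        ratio = indexed / discovered if discovered else 1.0
        if previous_ratio is not None and previous_ratio - ratio > threshold:
            alerts.append((month, round(ratio, 2)))
        previous_ratio = ratio
    return alerts


history = [
    ("2022-01", 1000, 900),
    ("2022-02", 1100, 980),
    ("2022-03", 2000, 1000),   # discovered URLs double, indexed barely moves
]
alerts = widening_gaps(history)
print(alerts)  # [('2022-03', 0.5)]
```

A flagged month is only a prompt to investigate: check your logs and a JavaScript crawl to see whether the new discovered URLs really come from scripts.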

❓ Frequently Asked Questions

Are JavaScript links taken into account for internal PageRank?

Should I systematically block unnecessary JavaScript URLs in robots.txt?

Is my React SPA penalized by this prioritization logic?

How can I tell whether my JavaScript URLs are consuming too much crawl budget?

Do modern JavaScript frameworks (Next.js, Nuxt) escape this problem?

🎥 From the same video 16

Other SEO insights extracted from this same Google Search Central video · published on 09/01/2022

