Official statement

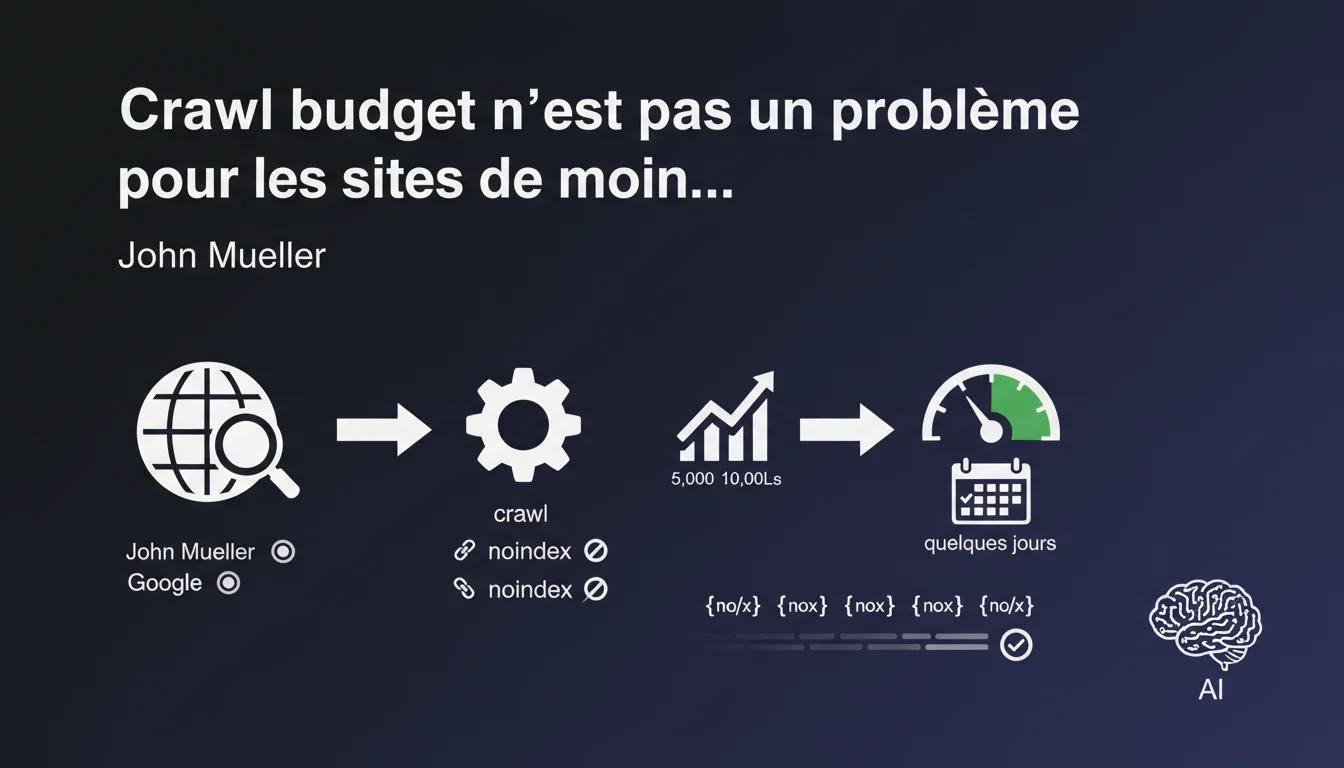

Google states that crawl budget is not a concern for sites with 5,000 to 10,000 URLs — these volumes can be crawled in a few days. Noindex pages are gradually deprioritized but continue to be checked periodically. This statement puts into perspective the obsession of some professionals with this factor.

What you need to understand

What exactly does Google mean by "a few days"?

John Mueller remains intentionally vague about this notion of "a few days". Is it 3 days, 7 days, 15 days? This imprecision leaves a lot of room for interpretation.

For an e-commerce site that regularly adds new products or a media outlet that publishes daily, this timing can make all the difference. An indexing delay of 7 days on a flash sale is a concrete business issue.

Why do noindex pages continue to be crawled?

Google must periodically verify that the noindex directive is still in place. It’s a safety mechanism: if you remove a noindex from an important page, Google needs to be able to detect it.

Over time, the frequency of these checks decreases. A page that has carried a noindex directive for 6 months will be visited much less often than a regularly updated indexable page.
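To audit which pages currently carry the directive that Google keeps re-checking, a small script can look for it in both the HTML and the response header. This is a minimal sketch using only the Python standard library; the function name `has_noindex` and the way the header value is passed in are assumptions made for illustration, not part of any Google tooling.

```python
from html.parser import HTMLParser


class _RobotsMetaParser(HTMLParser):
    """Collects directives from <meta name="robots"> and <meta name="googlebot">."""

    def __init__(self):
        super().__init__()
        self.directives = set()

    def handle_starttag(self, tag, attrs):
        if tag != "meta":
            return
        attr = {k.lower(): (v or "") for k, v in attrs}
        if attr.get("name", "").lower() in {"robots", "googlebot"}:
            content = attr.get("content", "")
            self.directives.update(d.strip().lower() for d in content.split(","))


def has_noindex(html: str, x_robots_tag: str = "") -> bool:
    """Return True if the page HTML or the X-Robots-Tag header value contains noindex.

    `x_robots_tag` is the raw header value (hypothetical input, e.g. "noindex, nofollow").
    """
    parser = _RobotsMetaParser()
    parser.feed(html)
    header_directives = {d.strip().lower() for d in x_robots_tag.split(",")}
    return "noindex" in parser.directives or "noindex" in header_directives
```

Running this over a list of fetched pages gives a quick inventory of noindex URLs, which can then be compared with how often they still appear in crawl logs.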

Is this limit of 10,000 URLs a hard and fast rule?

No, and this is where the statement becomes dangerous if taken literally. Mueller speaks of a global volume, not the quality of that volume.

A site with 8,000 URLs, of which 5,000 are duplicate filtered pages or URLs with unnecessary parameters, will experience crawl issues, no matter what Google says. The threshold is an indicator, not a guarantee.
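One way to estimate how much of a URL inventory is duplicated by parameters is to normalize each URL and count how many collapse onto the same address. The sketch below assumes a hypothetical set of ignorable parameters (`IGNORED_PARAMS`); which parameters are really content-neutral depends on the site.

```python
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit

# Hypothetical list of parameters assumed not to change page content.
IGNORED_PARAMS = {"utm_source", "utm_medium", "utm_campaign", "sessionid", "sort"}


def normalize(url: str) -> str:
    """Drop ignored parameters, sort the remaining ones, and remove the fragment."""
    parts = urlsplit(url)
    kept = sorted(
        (key, value)
        for key, value in parse_qsl(parts.query, keep_blank_values=True)
        if key.lower() not in IGNORED_PARAMS
    )
    return urlunsplit(
        (parts.scheme, parts.netloc.lower(), parts.path, urlencode(kept), "")
    )


def duplicate_share(urls: list[str]) -> float:
    """Fraction of URLs that collapse onto an already-seen normalized URL."""
    if not urls:
        return 0.0
    unique = {normalize(url) for url in urls}
    return 1 - len(unique) / len(urls)
```

Fed with a crawl export, a high `duplicate_share` suggests that the raw URL count overstates the amount of distinct content on the site.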

- According to Google, crawl budget is not a concern for sites with 5,000 to 10,000 URLs

- Noindex pages are gradually deprioritized but never completely ignored

- The quality of URLs matters as much as their raw number

- A “few days” delay remains a subjective and unquantified concept

SEO Expert opinion

Does this statement truly reflect ground reality?

Partially. On well-structured sites with 5,000 to 8,000 pages, we do indeed observe a smooth crawl. However, Mueller omits problematic scenarios: sites with infinite pagination, multiple facets, poorly managed URL parameters.

I have seen sites with 6,000 URLs where only 40% of pages were crawled monthly because Google spent 60% of its budget on automatically generated filter pages. The raw volume says nothing about how the crawl is distributed. [To be verified]: Google does not specify whether this threshold includes discovered but non-indexable URLs.
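Crawl distribution of this kind can be approximated from server access logs. The sketch below assumes logs in the common "combined" format and splits Googlebot requests into two illustrative buckets, URLs with a query string and URLs without. The regular expression and bucket names are assumptions, and the user-agent string alone can be spoofed, so the figures are indicative only.

```python
import re
from collections import Counter

# Matches the request, status, size, referrer and user-agent of a combined-format log line.
LOG_LINE = re.compile(
    r'"(?:GET|HEAD) (?P<path>\S+) HTTP/[^"]*" \d{3} \S+ "[^"]*" "(?P<ua>[^"]*)"'
)


def crawl_distribution(lines):
    """Share of Googlebot requests going to parameterized vs clean URLs."""
    counts = Counter()
    for line in lines:
        match = LOG_LINE.search(line)
        if not match or "Googlebot" not in match.group("ua"):
            continue  # skip unparseable lines and other user agents
        bucket = "parameterized" if "?" in match.group("path") else "clean"
        counts[bucket] += 1
    total = sum(counts.values())
    return {bucket: n / total for bucket, n in counts.items()} if total else {}
```

If most Googlebot requests land in the parameterized bucket while the strategic pages sit in the clean one, the crawl is being spent in the wrong place regardless of the total URL count.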

What does "crawled less often" really mean for noindex?

In my observations, a page that has been set to noindex for 3 months goes from weekly crawls to monthly, then quarterly. However, this rate varies greatly depending on the domain authority and page depth.

The problem? If you run a site with 2,000 noindex pages (staging, old versions, legal pages), Google will still allocate resources to them. Not a huge amount, but enough to affect a site with 8,000 URLs that is already at risk.

When does this 10,000 URL rule not apply?

First case: sites with massive technical problems. Server response times greater than 500ms, recurring 5xx errors, chain redirects — volume becomes secondary.

Second case: poorly configured multilingual or multi-country sites. You may have 6,000 total URLs, but if Google detects 5 nearly identical versions of each page, the crawl will slow down drastically.

Third case: high proportion of duplicate or very similar content. The 10,000 URL threshold implicitly assumes that each URL adds differentiated value.

Practical impact and recommendations

What should I do if my site approaches 10,000 URLs?

First step: audit the true quality of these URLs. How many are indexable? How many generate organic traffic? How many are crawled monthly according to Search Console?

Then, identify the junk URLs: unblocked tracking parameters, infinite pagination pages, cross-combined filters without SEO value. A clean 9,000-URL site outperforms a polluted 7,000-URL site.

Finally, segment by strategic priority. Category and flagship product pages should be crawled daily. Old news or legal pages can wait a week.

How can I optimize crawling without exceeding the critical threshold?

- Use robots.txt to block URLs with no SEO value: unnecessary facets, internal search results, user account pages

- Use noindex sparingly: for entire sections of no interest, prefer blocking them with robots.txt

- Regularly clean orphan pages that consume budget without adding value

- Optimize server response times: every millisecond saved leaves room for more crawling

- Structure internal linking to prioritize strategic pages

- Monitor the Crawl Stats report in Search Console to detect anomalies
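Before deploying robots.txt rules like those in the list above, it can help to test them against sample URLs. The sketch below uses Python's standard `urllib.robotparser`; the rules and paths are hypothetical placeholders. The standard-library parser matches plain path prefixes, so the example avoids wildcard patterns, and its behavior may differ from Googlebot's own parser in edge cases.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical rules; the paths stand in for sections with no SEO value.
ROBOTS_TXT = """\
User-agent: *
Disallow: /search
Disallow: /account/
"""


def is_crawlable(url: str, user_agent: str = "Googlebot") -> bool:
    """Return True if the sample rules allow `user_agent` to fetch `url`."""
    parser = RobotFileParser()
    parser.parse(ROBOTS_TXT.splitlines())
    return parser.can_fetch(user_agent, url)
```

Checking a handful of strategic URLs alongside the ones meant to be blocked guards against a rule that is broader than intended.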

What mistakes should you absolutely avoid?

A classic mistake: setting entire sections to noindex "to save crawl budget." Google will still check them periodically. Better to have a clean robots.txt.

Another trap: believing a site with 9,000 URLs is "safe" and neglecting the architecture. If those 9,000 pages are 5 clicks deep on average, crawling will be ineffective even below the threshold.
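Click depth can be measured with a breadth-first search over the internal link graph. The sketch below assumes the graph is already available as a dictionary mapping each page to the pages it links to (for example from a crawler export); the function name and data shape are illustrative.

```python
from collections import deque


def click_depths(links: dict[str, list[str]], home: str) -> dict[str, int]:
    """Minimum number of clicks from `home` to each reachable page."""
    depths = {home: 0}
    queue = deque([home])
    while queue:
        page = queue.popleft()
        for target in links.get(page, []):
            if target not in depths:  # first visit is the shortest path in BFS
                depths[target] = depths[page] + 1
                queue.append(target)
    return depths
```

Pages missing from the result are unreachable through internal links (orphans), and pages with a depth of 5 or more are candidates for better internal linking.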

Finally, ignoring publication velocity. A site that publishes 200 new pages a week with 8,000 total URLs has a different crawl profile than a static site, a distinction that Mueller's statement does not make.

Managing crawl budget remains relevant even below 10,000 URLs if your architecture is complex or your publication pace is high. Fine optimization of these aspects — quality auditing of URLs, prioritization of internal linking, monitoring of crawl patterns — requires sharp technical expertise.

For sites approaching this threshold or with a complex e-commerce architecture, working with a specialized SEO agency can quickly identify bottlenecks and implement best practices without wasting time on trial and error.

❓ Frequently Asked Questions

Should a site with 12,000 URLs necessarily worry about crawl budget?

Is it better to block unnecessary pages in robots.txt or to set them to noindex?

How can I tell whether Google is crawling my 8,000-page site efficiently?

Do noindex pages count toward the 10,000 URL threshold?

Does this rule also apply to JavaScript-heavy sites?

🎥 From the same video 18

Other SEO insights extracted from this same Google Search Central video · published on 24/12/2021
