Official statement

Other statements from this video 11 ▾

- □ Google rend-il vraiment toutes les pages HTML indexables sans exception ?

- □ Googlebot suit-il vraiment Chrome en temps réel ?

- □ Les données structurées injectées en JavaScript sont-elles vraiment crawlées par Google ?

- □ Les redirections JavaScript sont-elles vraiment traitées comme des redirections serveur par Google ?

- □ Pourquoi le rendu Google ne sera jamais vraiment celui d'un navigateur standard ?

- □ Faut-il vraiment débloquer toutes vos ressources dans robots.txt pour éviter les problèmes d'indexation ?

- □ Pourquoi Google ignore-t-il les bannières de consentement des cookies lors du crawl ?

- □ Faut-il abandonner le dynamic rendering basé sur le user-agent de Googlebot ?

- □ Pourquoi la gestion d'erreurs JavaScript conditionne-t-elle désormais votre capacité à être indexé par Google ?

- □ L'outil d'inspection d'URL est-il vraiment fiable pour tester le rendu par Googlebot ?

- □ Pourquoi Google rend-il toutes les pages HTML même celles qui n'ont pas besoin de JavaScript ?

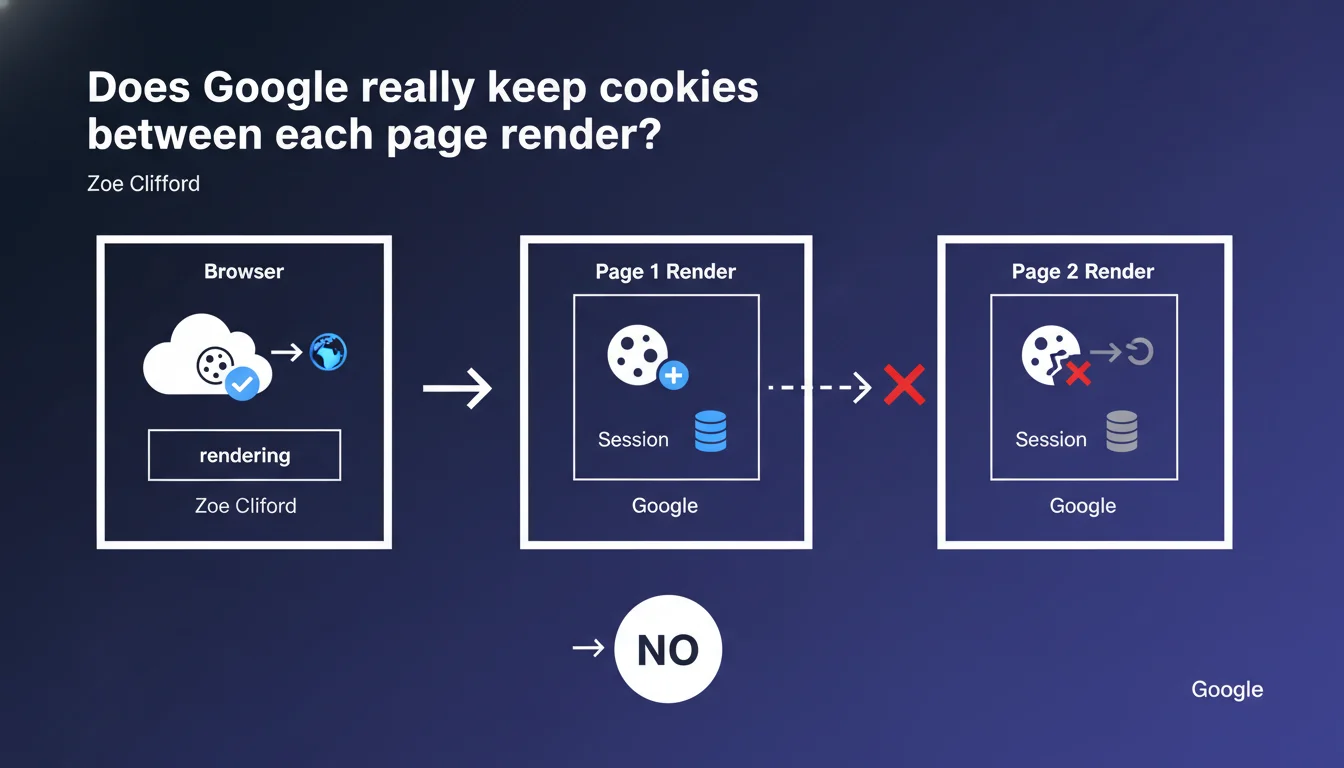

Google sees cookies set during a rendering session, but each render is a completely isolated browser session. Consequence: no cookies are persisted from one bot visit to another. Personalization mechanisms based on multi-session cookies therefore remain invisible to Googlebot.

What you need to understand

What exactly does "stateless rendering" mean?

Googlebot does activate cookies at the browser level while executing a page's JavaScript. If your code sets a cookie during this phase, Google detects it and can use it for the duration of that specific render.

The catch? Every Googlebot visit starts with a blank browser session. Cookies created during a first crawl no longer exist on the second. The bot forgets everything between visits, like a browser in perpetual private browsing mode.

Why does this approach create SEO problems?

Many modern websites rely on cookies to manage conditional content display. Think of progressive paywalls, welcome messages for new visitors, or urgent banners that disappear after acceptance.

If your logic is based on "has the user already seen this content?", Googlebot will always answer no. Every visit looks like the very first one. Result: the bot may index a version that differs from what your returning visitors see.

Does this limitation apply to all types of cookies?

Yes, without distinction. Whether it's a standard session cookie, an HttpOnly cookie, or a cookie set client-side via JavaScript, none survive beyond the current render.

The only theoretical exception: mechanisms that don't rely on cookies (localStorage, sessionStorage) — but Google has already clarified that local storage follows the same complete isolation logic between renders.

- Cookies work during a render, but never persist from one visit to another

- Each crawl starts with a completely blank browser state

- Content conditioned by multi-session cookies remains invisible or inconsistent for Googlebot

- No difference in treatment between server cookies and JavaScript cookies

SEO Expert opinion

Is this statement consistent with real-world observations?

On paper, yes — and tests in controlled environments confirm it. A bot simulating Googlebot (via Puppeteer or Selenium configured as headless) clearly shows this complete session isolation.

In practice, some sites have reported strange behaviors: Google seemed to "remember" previous interactions on complex properties with multiple redirects. [To verify] — this could be artifacts related to DNS cache or specific HTTP headers, not actual cookie persistence.

What nuances should be added to this rule?

Zoe Clifford speaks of a "completely new browser session." Technically accurate. But she doesn't mention the case of cookies set server-side via Set-Cookie before rendering even begins.

If your server sends a cookie in HTTP headers on the first hit, then Googlebot returns a second time within the same crawl sequence (quick refresh, pagination), that cookie could theoretically be resent — if the bot maintains an open TCP/HTTP2 session. Let's be honest: Google doesn't document this level of granularity, and tests show that even this scenario remains unpredictable.

In which contexts does this limitation become critical?

Three typical cases where cookie persistence failure breaks everything:

- Paywalls and freemium systems: if you offer 3 free articles before blocking, Googlebot always sees "article 1 of 3" — or worse, the full wall if the logic is poorly coded.

- Client-side A/B testing: Google might index variant A one time and variant B another, creating unintentional duplicate content.

- Legal banners (GDPR, cookies): if they obscure main content until the user clicks "Accept", and that click sets a cookie… Googlebot will never click and will index the obstructed version.

Practical impact and recommendations

What should you do concretely to avoid problems?

First step: audit all conditional logic driven by cookies. List scripts that read or write cookies before displaying content. Identify those that block, hide, or modify entire sections.

Then replace these mechanisms with alternatives compatible with a stateless environment. Typically: detect Googlebot via user-agent (imperfect but useful as a complement), use URL parameters to track state, or better yet, condition display server-side based on HTTP headers rather than cookies.

What mistakes must you absolutely avoid?

Never hide strategic content behind a "first visit" cookie. Google will only see the initial version, potentially empty or truncated.

Also avoid conditionally redirecting based on a cookie — for example, sending "new" users to a special landing page. Googlebot, eternally "new", will loop on that page without ever exploring the rest of the site. And that's where it breaks: your internal linking becomes invisible.

How do you verify that your site handles this case properly?

- Test your site in private browsing, close and reopen the tab between each page — simulate Googlebot's amnesia

- Use Search Console and the "URL Inspection" tool to compare the HTML rendered by Google vs. that seen by a regular visitor

- Audit your scripts: identify all

document.cookie,setCookie(), and verify their impact on display - Implement server-side Googlebot detection (via user-agent + reverse DNS) to serve a version without cookie dependencies

- Log Googlebot requests server-side: verify that no cookies are resent from one visit to another

❓ Frequently Asked Questions

Est-ce que Googlebot peut lire les cookies définis en JavaScript ?

Comment gérer un paywall si Google ne conserve pas les cookies ?

Les cookies de consentement RGPD bloquent-ils l'indexation de mon contenu ?

Le localStorage et sessionStorage sont-ils persistés entre les rendus ?

Peut-on contourner cette limitation en utilisant des paramètres d'URL ?

🎥 From the same video 11

Other SEO insights extracted from this same Google Search Central video · published on 11/07/2024

🎥 Watch the full video on YouTube →

💬 Comments (0)

Be the first to comment.