Official statement

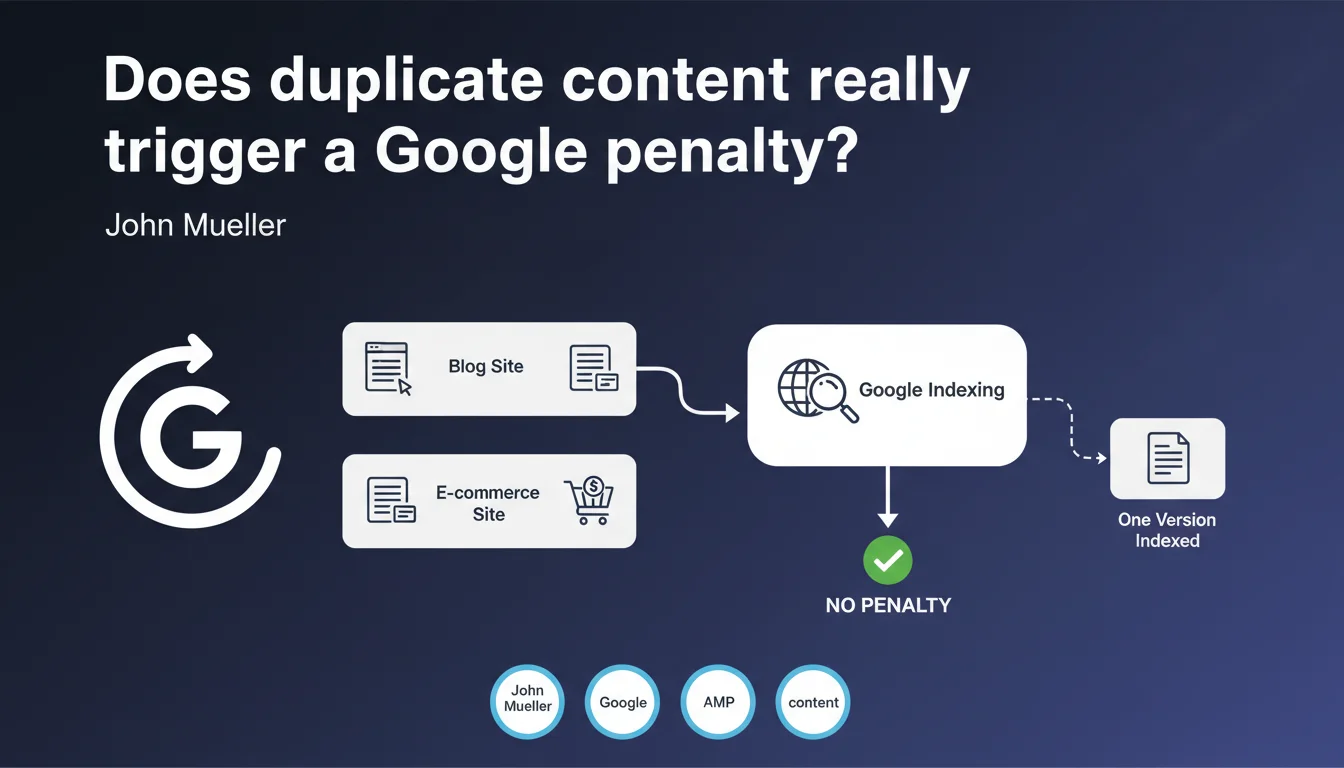

Google does not automatically penalize duplicate content across multiple sites. The search engine simply tries to index one canonical version and treats the situation as normal. There is no algorithmic sanction here, only an indexing choice.

What you need to understand

Why does Google claim there's no automatic penalty?

John Mueller's statement shatters a persistent myth in the SEO industry. Google does not penalize the fact that the same content appears on multiple domains. The search engine instead applies a deduplication filter: it chooses one version to index and ignores the others.

In practice, if you publish the same product page on your e-commerce site and on a marketplace, Google will try to determine which version to display in search results. This isn't a penalty; it's an indexing choice based on canonicalization signals.

What's the difference between "no penalty" and "no problem"?

The absence of a penalty doesn't mean duplicate content has no consequences. Google will favor one version, and it may not be the one you want. If a competitor syndicates your articles and their domain has more authority, their version may rank instead of yours.

The risk therefore isn't an algorithmic sanction, but visibility cannibalization. You lose control over which version gets indexed, and potentially over associated organic traffic.

In which cases does this rule apply?

Mueller explicitly mentions scenarios where identical content legitimately appears in multiple locations: a personal blog syndicated on Medium, product pages distributed to resellers, corporate content republished on affiliate sites.

- No penalty if the content is identical for justified commercial or editorial reasons

- Google chooses a canonical version based on trust, authority, and technical signals

- Canonical tags and redirects remain your tools to indicate your preference

- Scraped or malicious content falls outside this framework; in those cases a manual action may apply

SEO Expert opinion

Is this statement consistent with real-world observations?

Yes and no. In principle, we do observe that Google doesn't apply systematic algorithmic penalties for duplicate content. We don't see dramatic ranking drops of the kind Panda caused for thin content.

But reality is more nuanced, and this is where it gets tricky. Google doesn't penalize, but it does filter. If your version isn't selected as canonical, it drops out of the results users actually see. The practical result looks suspiciously like a penalty, even if the technical mechanism differs.

What nuances should be added to this statement?

Mueller speaks of "normal" duplication between different sites. He says nothing about internal duplicate content, which poses different problems: crawl budget dilution, URL cannibalization, contradictory signals.

Another point: the absence of an automatic penalty doesn't protect you from manual actions. If a spam report flags your scraped content, a human reviewer on Google's webspam team can step in and apply a manual action (Quality Raters only evaluate search results and do not act on individual sites). At that point it's no longer a question of algorithm. [To verify]: Google remains vague about the threshold where "normal duplicate content" becomes "duplication spam". No public metrics, no shared percentages.

In which cases does this logic not work?

If you massively duplicate content to manipulate SERPs or create doorway pages, the algorithm or a manual team will intervene. Mueller speaks of legitimate cases — not content farms or aggressive scraping.

Practical impact and recommendations

What should you concretely do if you publish content on multiple sites?

Clearly indicate your preferred version via canonical tags. If you syndicate a blog article to Medium, ensure that Medium points back to your original version with a rel=canonical. Don't let Google guess.

If you distribute product pages to resellers, negotiate the addition of a canonical link to your domain. Otherwise, the marketplace with the most authority is likely to capture the visibility.
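One way to check that a syndicated copy really declares your page as canonical is to parse its HTML and read the `<link rel="canonical">` element. The sketch below uses only Python's standard library. The URLs and the helper names `find_canonical` and `points_to_original` are illustrative assumptions, and fetching the page is deliberately left out.

```python
from html.parser import HTMLParser
from typing import Optional


class CanonicalFinder(HTMLParser):
    """Collects the href of the first <link rel="canonical"> in a page."""

    def __init__(self) -> None:
        super().__init__()
        self.canonical: Optional[str] = None

    def handle_starttag(self, tag, attrs):
        if tag != "link" or self.canonical is not None:
            return
        attr = dict(attrs)
        # rel may hold several space-separated tokens
        rel_tokens = (attr.get("rel") or "").lower().split()
        if "canonical" in rel_tokens and attr.get("href"):
            self.canonical = attr["href"].strip()


def find_canonical(html: str) -> Optional[str]:
    """Return the declared canonical URL, or None if the page has none."""
    finder = CanonicalFinder()
    finder.feed(html)
    return finder.canonical


def points_to_original(html: str, original_url: str) -> bool:
    """True if the page declares original_url as canonical (ignoring a trailing slash)."""
    canonical = find_canonical(html)
    if canonical is None:
        return False
    return canonical.rstrip("/") == original_url.rstrip("/")


# Hypothetical syndicated copy of an article hosted on example.com
syndicated_html = (
    '<html><head><link rel="canonical" '
    'href="https://example.com/blog/my-article/"></head><body></body></html>'
)
print(points_to_original(syndicated_html, "https://example.com/blog/my-article"))
```

A copy with no canonical element at all returns `False`, which is the case where Google is left to guess.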

What mistakes should you avoid at all costs?

Don't duplicate content internally without a canonicalization strategy. Parameterized URLs, print versions, and sort or filter options often generate identical content; make sure those variants carry a canonical tag pointing to the main URL or a meta robots noindex.
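As an illustration of a canonicalization rule for parameterized URLs, the sketch below maps URL variants to a single canonical form by dropping parameters that don't change the content. The parameter names in `NON_CANONICAL_PARAMS` and the function name `canonical_url` are assumptions chosen for the example; the right list depends on how your own site builds its URLs.

```python
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit

# Hypothetical parameter names; adjust to whatever your own site uses.
NON_CANONICAL_PARAMS = {"sort", "order", "print", "utm_source", "utm_medium", "utm_campaign"}


def canonical_url(url: str) -> str:
    """Drop parameters that do not change the content and remove the fragment."""
    parts = urlsplit(url)
    kept = [
        (key, value)
        for key, value in parse_qsl(parts.query, keep_blank_values=True)
        if key.lower() not in NON_CANONICAL_PARAMS
    ]
    kept.sort()  # stable parameter order so equivalent URLs compare equal
    return urlunsplit((parts.scheme, parts.netloc.lower(), parts.path, urlencode(kept), ""))


print(canonical_url("https://shop.example.com/shoes?sort=price&color=red&utm_source=news#top"))
# prints https://shop.example.com/shoes?color=red
```

The value returned is what each variant's canonical tag would point to under these assumptions.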

And most importantly: don't count on Google to "understand" which version you want indexed. Implicit signals (age, domain authority) aren't always sufficient. Be explicit.

How can you verify that your site is properly managing duplicate content?

- Audit your canonical tags: every duplicated page should point to the master version

- Check the page indexing report in Google Search Console (formerly "Coverage"): pages excluded with the status "Alternate page with proper canonical tag" are normal

- Use a crawler (Screaming Frog, Oncrawl) to identify identical content internally

- If you syndicate content, monitor in Search Console which URL Google actually indexes (it may not be the one you expect)

- Use the URL Inspection tool to see which URL Google reports as the canonical it selected
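For the internal duplicate check in the list above, a dedicated crawler does the heavy lifting, but the underlying idea can be sketched in a few lines: hash the normalized text of each page and group the URLs that share a hash. This is a minimal sketch, assuming the page text has already been extracted; the `pages` mapping and function names are hypothetical, and it only catches exact duplicates after normalization, not near-duplicates.

```python
import hashlib
import re
from collections import defaultdict
from typing import Dict, List


def fingerprint(text: str) -> str:
    """Hash the text after lowercasing and collapsing whitespace."""
    normalized = re.sub(r"\s+", " ", text).strip().lower()
    return hashlib.sha256(normalized.encode("utf-8")).hexdigest()


def group_duplicates(pages: Dict[str, str]) -> List[List[str]]:
    """Return groups of URLs whose extracted text is identical after normalization."""
    by_hash = defaultdict(list)
    for url, text in pages.items():
        by_hash[fingerprint(text)].append(url)
    return [sorted(urls) for urls in by_hash.values() if len(urls) > 1]


# Hypothetical crawl output: URL -> extracted main text
pages = {
    "/shoes": "Red running shoes, size 42",
    "/shoes?sort=price": "Red  running shoes, size 42",
    "/boots": "Brown leather boots",
}
print(group_duplicates(pages))
```

Each group returned is a set of URLs that should share a single canonical target.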

In summary: Google won't penalize you for legitimate duplicate content, but it will choose a version on your behalf if you don't give clear instructions. Result: you risk losing your visibility to a third party.

Managing canonicalization technically, monitoring indexation signals, and optimizing content distribution requires specialized expertise. If these mechanisms seem too complex to master alone, it may be worthwhile to get support from a specialized SEO agency that can structure a strategy adapted to your digital ecosystem.

❓ Frequently Asked Questions

Will duplicate content between my blog and Medium hurt my SEO?

Should I block indexing of internally duplicated pages?

Can Google manually penalize duplicate content even without an automatic algorithm?

How can I find out which version Google has chosen to index?

Should syndicated content always point to the original with a canonical?

🎥 From the same video 20

Other SEO insights extracted from this same Google Search Central video · published on 21/10/2022
