Official statement

Other statements from this video (8):

- Should you optimize your site differently for AI Overviews and AI Mode?

- Should you adapt your SEO strategy for Google's AI features?

- Do clicks from AI Overviews really convert better?

- Do AI Overviews really favor a greater diversity of sites?

- Why does Google place so much emphasis on the "unique value" of content?

- Will Search Console's Core Web Vitals recommendations finally be useful?

- Is robots.txt still really useful for controlling AI crawling?

- Should we stop talking about SEO and adopt the new terms AIO, GEO, or LLM optimization?

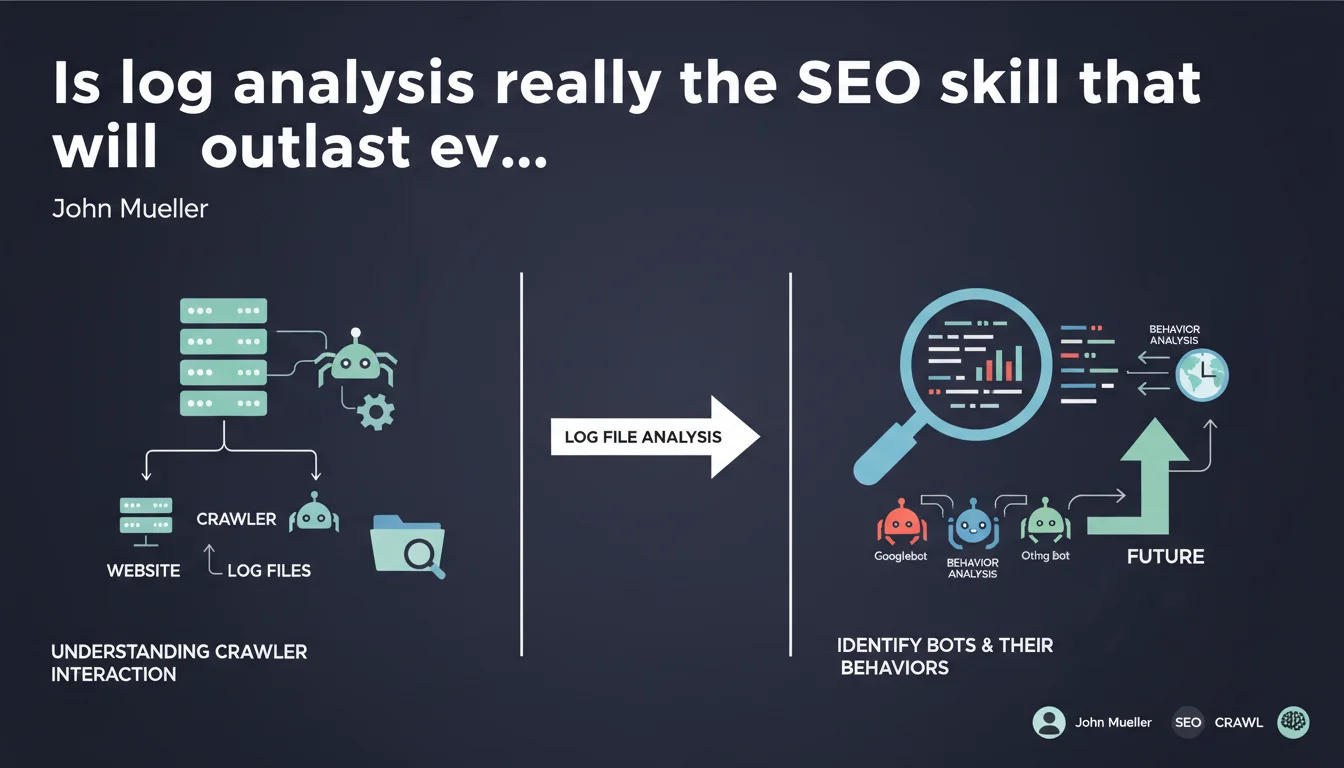

Mueller emphasizes log file analysis as a timeless SEO skill: understanding how crawlers interact with your site will remain crucial for as long as the web exists. The statement puts log analysis back at the heart of technical SEO expertise, well away from passing trends.

What you need to understand

Why does Mueller put log analysis at the center of the game?

The statement is clear: as long as there are websites, there will be crawlers. And as long as there are crawlers, understanding their behavior will remain a differentiating skill. Mueller is not talking about ephemeral tactics or trendy optimizations here.

He repositions log analysis as a durable technical foundation. Unlike the things that keep evolving (PageRank, Core Web Vitals, generative AI), the basic crawler/server exchange has remained stable for decades. Mastering these mechanics gives you a structural competitive advantage.

What does log file analysis really reveal?

Server logs expose what bots actually did: crawl frequency, depth explored, HTTP status codes returned, user agents. Nothing theoretical: it's the raw trace of what happened on the server side.
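
To make that "raw trace" concrete, here is a minimal Python sketch that parses one access-log line. It assumes the "combined" log format (the Nginx default and a common Apache configuration); `Hit`, `parse_line` and the sample line are illustrative, not taken from any specific tool, and a custom log format would need a different pattern.

```python
import re
from datetime import datetime
from typing import NamedTuple, Optional

# Pattern for the "combined" log format (assumed):
# ip ident user [time] "METHOD URL PROTOCOL" status size "referer" "user-agent"
COMBINED_RE = re.compile(
    r'(?P<ip>\S+) \S+ \S+ \[(?P<time>[^\]]+)\] '
    r'"(?P<method>[A-Z]+) (?P<url>\S+) [^"]*" '
    r'(?P<status>\d{3}) (?P<size>\S+) '
    r'"(?P<referer>[^"]*)" "(?P<agent>[^"]*)"'
)


class Hit(NamedTuple):
    ip: str
    time: datetime
    method: str
    url: str
    status: int
    agent: str


def parse_line(line: str) -> Optional[Hit]:
    """Return a Hit for a combined-format line, or None if it does not match."""
    m = COMBINED_RE.match(line)
    if m is None:
        return None
    return Hit(
        ip=m["ip"],
        # Timestamps look like: 10/Oct/2024:13:55:36 +0000
        time=datetime.strptime(m["time"], "%d/%b/%Y:%H:%M:%S %z"),
        method=m["method"],
        url=m["url"],
        status=int(m["status"]),
        agent=m["agent"],
    )


sample = ('66.249.66.1 - - [10/Oct/2024:13:55:36 +0000] '
          '"GET /products/shoes?color=red HTTP/1.1" 200 5123 "-" '
          '"Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"')
print(parse_line(sample))
```

Lines that do not match return `None`, so a log in an unexpected format shows up as a high count of skipped lines rather than as silently wrong data.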

This data lets you spot gaps between what you believe Googlebot does and what it actually does. Orphaned pages that still get crawled, entire sections ignored, crawl budget wasted on useless URLs: all of this becomes visible.
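
Spotting those gaps comes down to comparing two sets of URLs. A minimal sketch, assuming you already have the paths requested by the bot (from the logs) and a reference inventory of your own URLs (for example from your XML sitemap or a site crawl export); `compare_crawl` is a hypothetical helper, and in practice you would normalize URLs (trailing slashes, parameters) before comparing.

```python
from typing import Dict, Iterable, List


def compare_crawl(crawled: Iterable[str], known: Iterable[str]) -> Dict[str, List[str]]:
    """Compare URLs seen in the logs with your own URL inventory."""
    crawled_set, known_set = set(crawled), set(known)
    return {
        # Requested by the bot but missing from your inventory:
        # candidates for orphaned pages, legacy URLs or parameter variants.
        "crawled_not_known": sorted(crawled_set - known_set),
        # In your inventory but never requested during the log window.
        "known_not_crawled": sorted(known_set - crawled_set),
    }


print(compare_crawl(["/a", "/old-page"], ["/a", "/b"]))
```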

How does this skill stand out from standard tools?

Search Console provides an aggregated, delayed view. Logs offer granularity down to the second, bot by bot, URL by URL. It's the difference between reading a monthly summary and consulting the logbook minute by minute.

Third-party tools (Screaming Frog Log File Analyser, Oncrawl, Botify) rely on this data for their advanced analyses. But knowing how to query logs directly, without intermediaries, remains a rare and powerful skill.

- Sustainable skill: log analysis will remain relevant as long as the web exists

- Raw data: direct access to the actual actions of crawlers, without aggregation or delay

- Fine-grained visibility: detection of anomalies invisible in Search Console or Google Analytics

- Precise diagnostics: identification of crawl budget waste and orphaned areas

SEO Expert opinion

Is this statement consistent with practices observed in the field?

Absolutely. Sites that master log analysis have a measurable competitive advantage, especially on complex infrastructures (multi-regional sites, e-commerce sites with large catalogs, media sites with thousands of articles). Crawl budget becomes a real limiting factor mainly at scale: Google's own crawl budget guide targets sites with roughly a million or more unique pages, or 10,000+ pages whose content changes daily.

But let's be honest: this skill remains marginal in the profession. Many SEOs rely solely on Search Console and third-party crawlers. Result? They miss structural problems invisible elsewhere.

What nuances should be added to this assertion?

Mueller doesn't specify the threshold at which log analysis becomes truly decisive. For a 50-page brochure site, the time and skill investment can be disproportionate. The impact is clearly measurable mainly on high-volume sites or sites with specific technical issues.

Another nuance: log analysis requires technical access that not all SEOs have. Some shared hosting environments make log collection difficult, and working with raw logs without proper tooling (Splunk, the ELK stack, Python scripts) quickly becomes unmanageable. [To verify]: is this skill accessible to every SEO profile, or reserved for advanced technical profiles?

In what cases is this skill insufficient?

Log analysis shows what was crawled, not why certain pages don't rank despite regular crawling. It says nothing about content quality, semantic relevance, or backlink competitiveness.

It's a diagnostic skill, not a miracle solution. If your problem is weak content or a non-existent link strategy, logs will only reveal part of the picture. And that's where it gets tricky: some SEOs over-invest in technical analysis while neglecting everything else.

Practical impact and recommendations

What should you do concretely to leverage this skill?

First step: set up access to server logs. The method varies with your infrastructure (Apache, Nginx, cloud hosting): some hosts offer automatic exports, others require SSH access to the server.
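
On a typical Linux server, Nginx and Apache write access logs under a directory such as `/var/log/nginx/` or `/var/log/apache2/` (paths vary by distribution), and logrotate usually gzip-compresses older files. The sketch below, built around a hypothetical `iter_log_lines` helper, reads the current file and rotated ones alike; the directory and the `access.log*` file-name pattern are assumptions to adapt to your setup.

```python
import gzip
from pathlib import Path
from typing import Iterator


def iter_log_lines(log_dir: str, pattern: str = "access.log*") -> Iterator[str]:
    """Yield lines from plain and gzip-compressed log files in log_dir.

    Files are visited in lexicographic name order, which is NOT chronological
    (access.log.10.gz sorts before access.log.2.gz): sort parsed hits by
    timestamp afterwards if order matters.
    """
    for path in sorted(Path(log_dir).glob(pattern)):
        opener = gzip.open if path.suffix == ".gz" else open
        # errors="replace" keeps going if a line contains invalid UTF-8.
        with opener(path, "rt", encoding="utf-8", errors="replace") as fh:
            for line in fh:
                yield line.rstrip("\n")
```

Usage would look like `for line in iter_log_lines("/var/log/nginx"): ...`, feeding each line to a parser; reading lazily keeps memory flat even on large log volumes.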

Next, choose your analysis tool. Solutions range from homemade Python scripts (for technical profiles) to dedicated platforms like Oncrawl, Botify, or Screaming Frog Log File Analyser. The investment depends on your site's volume and complexity.

What errors should you avoid when interpreting logs?

First common mistake: lumping all Googlebot agents together. Google runs several crawlers (Googlebot Smartphone, Googlebot Desktop, Googlebot Image, Googlebot News) with distinct behaviors, and failing to separate them in your analysis skews your conclusions.
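
Separating Google's crawlers can start with simple user-agent matching. A rough sketch, assuming the substrings below; it only reads the user-agent string, which anyone can spoof, so it should be paired with IP verification. It does not try to isolate Googlebot News, since Google's crawler documentation indicates news crawling may use the regular Googlebot user agents. Both function names are hypothetical.

```python
from collections import Counter
from typing import Iterable


def classify_google_agent(agent: str) -> str:
    """Bucket a user-agent string by substring matching (spoofable)."""
    # Check the specialised crawlers first: their tokens also contain "Googlebot".
    if "Googlebot-Image" in agent:
        return "Googlebot Image"
    if "Googlebot-Video" in agent:
        return "Googlebot Video"
    if "Googlebot" in agent:
        # The smartphone crawler's string includes "Mobile"; the desktop one does not.
        return "Googlebot Smartphone" if "Mobile" in agent else "Googlebot Desktop"
    return "other"


def hits_by_agent(agents: Iterable[str]) -> Counter:
    """Count hits per crawler bucket."""
    return Counter(classify_google_agent(a) for a in agents)
```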

Second pitfall: ignoring non-200 HTTP codes. Heavy crawling of 404s or 301s often reveals an internal linking problem or redirect chains. It's a warning signal, not noise.
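
Counting status codes per URL turns that warning signal into a list of fixes. A minimal sketch, assuming `(url, status)` pairs already filtered to a single bot; `status_report` is hypothetical, and the `top` cutoff is arbitrary. It treats 304 Not Modified as normal, since that is the expected answer to a conditional request for an unchanged page.

```python
from collections import Counter
from typing import Iterable, Tuple


def status_report(hits: Iterable[Tuple[str, int]], top: int = 10):
    """Return (hits per status class, most frequent non-200 URL/status pairs)."""
    classes: Counter = Counter()
    problems: Counter = Counter()
    for url, status in hits:
        classes[f"{status // 100}xx"] += 1
        # 304 just means "unchanged since your last visit": not a problem.
        if status >= 300 and status != 304:
            problems[(url, status)] += 1
    return classes, problems.most_common(top)
```

A URL that keeps coming back with a 301 is worth tracing: the internal links pointing to it can usually be updated to the final destination.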

Third error: only looking at total crawl volume. The distribution of crawl matters just as much: if 80% of the budget goes to low-value URLs (facets, poorly managed pagination), that is where the problem lies.
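
One way to quantify that distribution is the share of bot hits landing on parameterised URLs. A minimal sketch; the parameter names in `facet_params` are placeholders for whatever your facets and pagination actually use, and `low_value_share` is a hypothetical helper.

```python
from typing import Iterable, Sequence
from urllib.parse import parse_qs, urlsplit


def low_value_share(
    urls: Iterable[str],
    facet_params: Sequence[str] = ("color", "size", "sort", "page"),  # placeholders
) -> float:
    """Fraction of hits whose query string contains any of facet_params."""
    urls = list(urls)
    if not urls:
        return 0.0
    flagged = 0
    for url in urls:
        # keep_blank_values=True so "?color=" still counts as a facet URL.
        params = parse_qs(urlsplit(url).query, keep_blank_values=True)
        if any(p in params for p in facet_params):
            flagged += 1
    return flagged / len(urls)
```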

How do you verify that your crawl budget is optimized?

Compare the volume of strategic pages crawled versus total crawl volume. If your high-value pages (flagship products, pillar articles) aren't visited regularly, there's a structural issue.
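
That comparison can be reduced to two numbers: the share of crawl spent on strategic URLs, and the strategic URLs that fall below a minimum hit count over the analysis window. A sketch, assuming you maintain the list of strategic URLs yourself; `strategic_coverage` and its `min_hits` threshold are hypothetical.

```python
from collections import Counter
from typing import Iterable, List, Tuple


def strategic_coverage(
    crawled_urls: Iterable[str], strategic_urls: Iterable[str], min_hits: int = 1
) -> Tuple[float, List[str]]:
    """Return (share of hits on strategic URLs, strategic URLs below min_hits)."""
    hits = Counter(crawled_urls)
    total = sum(hits.values())
    strategic = set(strategic_urls)
    # Counter returns 0 for URLs that were never crawled.
    strategic_hits = sum(hits[u] for u in strategic)
    under_crawled = sorted(u for u in strategic if hits[u] < min_hits)
    share = strategic_hits / total if total else 0.0
    return share, under_crawled
```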

Examine crawl frequency by section. A section updated daily (news) should be crawled more often than a static section (legal notices). If that's not the case, your internal architecture isn't effectively guiding crawlers.
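
Per-section frequency can be computed by grouping hits on the first path segment. A minimal sketch, assuming sections map to top-level folders (`/news/...`, `/legal/...`), which will not hold for every URL structure; the helper names are hypothetical. For each section it returns the average hits per day over the window and the number of distinct days with at least one hit.

```python
from collections import defaultdict
from datetime import date
from typing import Dict, Iterable, Tuple


def section_of(url: str) -> str:
    """'/news/article-1?x=1' -> 'news'; '/' -> '(root)'."""
    path = url.split("?", 1)[0]
    segments = [s for s in path.split("/") if s]
    return segments[0] if segments else "(root)"


def crawl_frequency_by_section(
    hits: Iterable[Tuple[date, str]], n_days: int
) -> Dict[str, Tuple[float, int]]:
    """Map section -> (average hits per day over n_days, days with >= 1 hit)."""
    counts: Dict[str, int] = defaultdict(int)
    days_seen: Dict[str, set] = defaultdict(set)
    for day, url in hits:
        section = section_of(url)
        counts[section] += 1
        days_seen[section].add(day)
    return {s: (counts[s] / n_days, len(days_seen[s])) for s in counts}
```

Dividing by the full window length rather than by the days actually seen keeps rarely crawled sections from looking busier than they are.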

- Set up regular access to server logs (daily or weekly depending on volume)

- Segment analysis by bot type (Googlebot Smartphone, Googlebot Desktop, other crawlers)

- Identify URLs crawled at high frequency but with low business value

- Spot strategic pages that are under-crawled or ignored

- Analyze HTTP codes to detect unnecessary redirects or recurring errors

- Correlate crawl spikes with your technical or content modifications

- Use dedicated analysis tools if volume exceeds several thousand log lines per day
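
To correlate crawl spikes with your own changes, you first need a list of spike dates. A minimal sketch: `crawl_spikes` (hypothetical) flags days whose hit count exceeds a multiple of the median daily count. The 2x factor is an arbitrary starting point, and the flagged dates still have to be compared by hand with your deployment or publication history.

```python
from statistics import median
from typing import Dict, List


def crawl_spikes(daily_hits: Dict[str, int], factor: float = 2.0) -> List[str]:
    """Return the days whose hit count exceeds factor x the median daily count."""
    if not daily_hits:
        return []
    baseline = median(daily_hits.values())
    return sorted(day for day, n in daily_hits.items() if n > factor * baseline)
```

The median is used as the baseline rather than the mean so that a single large spike does not raise the threshold enough to hide itself.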

❓ Frequently Asked Questions

Is log analysis useful for every site, regardless of size?

Which free tools can analyze server logs?

How do you tell the real Googlebot from an impersonating bot in the logs?

How often should logs be analyzed for effective monitoring?

Can log analysis replace Search Console for SEO diagnostics?
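
On telling the real Googlebot from an impersonator: Google documents a two-step DNS check, a reverse lookup on the requesting IP whose host name should end in `googlebot.com` or `google.com`, followed by a forward lookup on that host name that must return the original IP. A sketch of that check in Python; the resolver functions are injectable so it can be tested offline, and the suffix list covers Googlebot itself rather than every Google fetcher. Google also publishes its crawler IP ranges as JSON files, which can be a faster alternative at high volume.

```python
import socket
from typing import Callable, List

# Host-name suffixes accepted for Google's crawlers in this sketch.
GOOGLE_SUFFIXES = (".googlebot.com", ".google.com")


def reverse_lookup(ip: str) -> str:
    """PTR lookup: IP -> host name (raises an OSError subclass on failure)."""
    return socket.gethostbyaddr(ip)[0]


def forward_lookup(host: str) -> List[str]:
    """A/AAAA lookup: host name -> list of IP addresses."""
    return [info[4][0] for info in socket.getaddrinfo(host, None)]


def is_verified_googlebot(
    ip: str,
    reverse: Callable[[str], str] = reverse_lookup,
    forward: Callable[[str], List[str]] = forward_lookup,
) -> bool:
    try:
        host = reverse(ip).rstrip(".")
        if not host.endswith(GOOGLE_SUFFIXES):
            return False  # the user agent may say Googlebot, DNS says otherwise
        # The forward lookup must point back to the same IP.
        return ip in forward(host)
    except OSError:  # socket.herror and socket.gaierror both subclass OSError
        return False
```

DNS lookups are slow, so in practice you would verify each distinct IP once and cache the result.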

Other SEO insights extracted from this same Google Search Central video · published on 01/07/2025
