Official statement

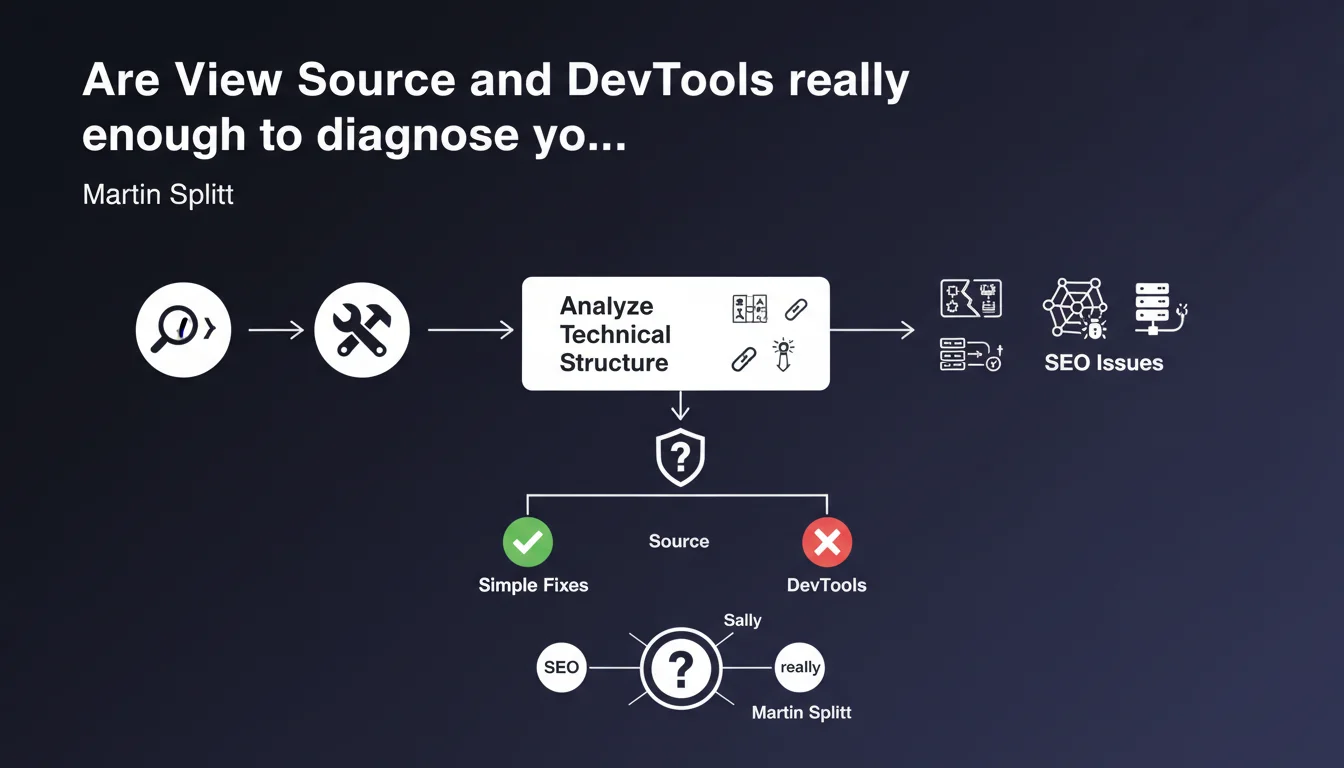

Martin Splitt positions View Source and Chrome DevTools as essential tools for understanding the technical structure of a page and bridging the gap between development and SEO. These native Chrome features allow you to analyze raw HTML, JavaScript rendering, and quickly identify technical problems without relying on paid third-party tools.

What you need to understand

Why does Google insist on these basic tools?

Splitt's statement brings SEOs back to the fundamentals: understanding what the search engine actually sees. View Source displays the initial HTML sent by the server, while DevTools (via the element inspector) shows the DOM after JavaScript execution.

This distinction is crucial for modern sites using client-side rendering. Content missing from View Source but present in DevTools is being injected by JavaScript, which means Google has to render the page before it can index that content.
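
To make the comparison repeatable, here is a minimal Python sketch using only the standard library. It assumes you have saved two snapshots of the same page yourself: the raw HTML from View Source and the rendered DOM copied from the Elements panel. The names `VisibleTextParser`, `visible_text` and `only_in_rendered` are illustrative, not part of any tool mentioned by Splitt.

```python
from html.parser import HTMLParser


class VisibleTextParser(HTMLParser):
    """Collect text nodes, ignoring <script>, <style> and <noscript> content."""

    SKIPPED = {"script", "style", "noscript"}

    def __init__(self):
        super().__init__()
        self._skip_depth = 0
        self.chunks = []

    def handle_starttag(self, tag, attrs):
        if tag in self.SKIPPED:
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in self.SKIPPED and self._skip_depth > 0:
            self._skip_depth -= 1

    def handle_data(self, data):
        # Normalize whitespace so formatting differences do not count as changes.
        text = " ".join(data.split())
        if text and self._skip_depth == 0:
            self.chunks.append(text)


def visible_text(html):
    """Return the list of visible text chunks found in an HTML string."""
    parser = VisibleTextParser()
    parser.feed(html)
    parser.close()
    return parser.chunks


def only_in_rendered(raw_html, rendered_html):
    """Return text present in the rendered DOM but absent from the raw HTML."""
    raw = set(visible_text(raw_html))
    return [chunk for chunk in visible_text(rendered_html) if chunk not in raw]
```

Any text returned by `only_in_rendered` exists only after JavaScript has run, so it is worth checking how Googlebot renders that page.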

What can you concretely diagnose with these tools?

DevTools allows you to audit meta tags, heading structure, structured data, missing alt attributes, and blocking resources. The Network tab reveals cascading redirects, load times, and internal 404 errors.
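
The same on-page checks can be scripted against an HTML string copied out of DevTools. The sketch below is one possible approach using Python's standard `html.parser`; the class name `OnPageAudit` and the helper `audit_html` are assumptions made for illustration, and the audit only covers the title, meta tags, headings and images without an `alt` attribute.

```python
from html.parser import HTMLParser

HEADING_TAGS = {"h1", "h2", "h3", "h4", "h5", "h6"}


class OnPageAudit(HTMLParser):
    """Collect the title, meta tags, headings and images lacking an alt attribute."""

    def __init__(self):
        super().__init__()
        self.title = None
        self.meta = {}
        self.headings = []            # list of (tag, text) in document order
        self.images_missing_alt = []  # src values of <img> tags with no alt attribute
        self._capture = None          # tag whose text is currently being collected
        self._buffer = []

    def handle_starttag(self, tag, attrs):
        attrs = dict(attrs)
        if tag == "meta" and "content" in attrs:
            key = attrs.get("name") or attrs.get("property")
            if key:
                self.meta[key.lower()] = attrs["content"]
        elif tag == "img" and "alt" not in attrs:
            self.images_missing_alt.append(attrs.get("src") or "")
        elif tag == "title" or tag in HEADING_TAGS:
            self._capture = tag
            self._buffer = []

    def handle_data(self, data):
        if self._capture:
            self._buffer.append(data)

    def handle_endtag(self, tag):
        if tag == self._capture:
            text = " ".join("".join(self._buffer).split())
            if tag == "title":
                self.title = text
            else:
                self.headings.append((tag, text))
            self._capture = None


def audit_html(html):
    """Parse an HTML string and return the populated audit object."""
    audit = OnPageAudit()
    audit.feed(html)
    audit.close()
    return audit
```

Running `audit_html` on both the raw HTML and the rendered DOM shows whether JavaScript changes the title, the robots meta tag or the heading structure.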

View Source, meanwhile, immediately exposes whether critical content is available in the initial HTML or requires JavaScript interaction. It's the zero-cost test before any deeper diagnosis.

How does this facilitate dev-SEO collaboration?

These tools speak the same language as developers. Rather than arriving with an incomprehensible Screaming Frog report, you can open DevTools in a meeting and show the problem directly in the source code.

This reduces friction and speeds up fixes: the developer sees exactly what's wrong, in their native environment.

- View Source shows the raw initial HTML sent by the server

- DevTools displays the final DOM after JavaScript execution

- The difference between the two reveals client-side rendering issues

- These free tools enable immediate diagnosis without a heavy technical stack

- They facilitate technical communication between SEO and developers

SEO Expert opinion

Is this recommendation sufficient for a complete audit?

Let's be honest: View Source and DevTools are essential but not exhaustive. They excel at diagnosing specific issues on a given URL, but fail to detect patterns across an entire site.

It's impossible with these tools alone to identify crawl budget problems, weak internal linking, keyword cannibalization, or excessive depth. For that, you'll always need dedicated crawlers and log analysis.

What limitations must you understand regarding JavaScript rendering?

DevTools displays the DOM in your browser, with your connection, your extensions, and your geolocation. Googlebot doesn't see exactly the same thing: it may encounter timeouts, resources blocked by robots.txt, or geographically restricted content.
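
One of these differences, resources blocked by robots.txt, can be approximated offline. The sketch below uses Python's standard `urllib.robotparser`; the function name `blocked_for_googlebot` and the example URLs are hypothetical. It is only an approximation: the standard-library parser applies the first matching rule and does not interpret `*` wildcards in paths, so its verdicts may differ from Google's own matching on complex files.

```python
from urllib.robotparser import RobotFileParser


def blocked_for_googlebot(robots_txt, urls, user_agent="Googlebot"):
    """Return the URLs that the given robots.txt content disallows for user_agent.

    robots_txt is the file content as a string (for example, pasted from
    the site's /robots.txt); no network request is made.
    """
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [url for url in urls if not parser.can_fetch(user_agent, url)]


# Hypothetical example: a rule that blocks the JavaScript bundle directory.
robots = "User-agent: *\nDisallow: /assets/js/\n"
resources = [
    "https://example.com/assets/js/app.js",
    "https://example.com/assets/css/site.css",
]
print(blocked_for_googlebot(robots, resources))
```

The list of resource URLs can be copied from the Network tab. A blocked script that builds the main content is a strong hint that Googlebot's rendering will differ from what DevTools shows.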

The Mobile-Friendly Test or the Search Console URL Inspection tool shows what Googlebot actually renders, and the result is not always identical to what DevTools displays. For critical pages, always verify with the URL Inspection tool.

When do you need to go beyond these tools?

For migrations, redesigns, diagnosing massive traffic loss, or in-depth technical audits, View Source and DevTools remain starting points. You will also need before/after comparative analysis, continuous monitoring, and semantic analysis.

On very large sites, manual URL-by-URL analysis becomes impractical. Third-party tools enable automation, alerts, and dashboards. Splitt doesn't say they're useless — he's simply reminding you that you can't skip the fundamentals.

Practical impact and recommendations

What should you integrate into your daily workflow?

Make it a habit to open View Source systematically before DevTools when analyzing a page. If the main content doesn't appear in the raw HTML, you've already identified a potential risk.

Use the Network tab in DevTools to track unnecessary internal redirects, 404 resources, and blocking scripts that delay rendering. Filter by resource type to quickly isolate issues.
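
If you export the Network tab's log as a HAR file, the same checks can be run in a script. The sketch below assumes the standard HAR layout (`log.entries[]`, each with `request.url`, `response.status` and `response.redirectURL`) and that the log was recorded with redirects preserved; the function name `redirects_and_404s` is illustrative. Load the file with `json.load` and pass the resulting dictionary.

```python
from urllib.parse import urljoin


def redirects_and_404s(har):
    """Return (redirect_chains, not_found_urls) from a parsed HAR dictionary.

    Each chain is a list of URLs: the first requested URL followed by every
    hop. Redirect loops have no clear starting point and are not reported.
    """
    hops = {}
    not_found = []
    for entry in har["log"]["entries"]:
        url = entry["request"]["url"]
        response = entry["response"]
        status = response["status"]
        location = response.get("redirectURL")
        if 300 <= status < 400 and location:
            # The Location value may be relative, so resolve it against the request URL.
            hops[url] = urljoin(url, location)
        elif status == 404:
            not_found.append(url)

    targets = set(hops.values())
    chains = []
    for start in hops:
        if start in targets:
            continue  # this URL is a middle hop of another chain
        chain = [start]
        while chain[-1] in hops and hops[chain[-1]] not in chain:
            chain.append(hops[chain[-1]])
        chains.append(chain)
    return chains, not_found
```

A chain with more than two URLs is a cascading redirect: the internal link should point straight to the final URL instead.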

What reflexes should you develop when communicating with developers?

Stop sending annotated screenshots. Open DevTools in a screen share, reproduce the problem live, show the HTML element in question in the inspector. The developer will understand immediately.

Learn the basics of DevTools' Performance panel to identify scripts that block the main thread. This gives you technical credibility and facilitates decisions.

How do you validate that a fix has been properly deployed?

Hard-reload the page to bypass the browser cache (Ctrl+Shift+R, or Cmd+Shift+R on macOS), then inspect again. Compare View Source before and after. If the problem persists, verify that the deployment is live in production and not limited to a staging environment.
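
The before/after comparison can be done by eye, or with a short script if you saved the page source before the fix. This is a minimal sketch using Python's standard `difflib`; the function name `source_diff` is an assumption, and both inputs are plain strings copied from View Source.

```python
import difflib


def source_diff(before_html, after_html):
    """Return unified-diff lines between two View Source snapshots.

    An empty list means the two snapshots are identical, which suggests
    the fix has not reached the page you are looking at.
    """
    return list(
        difflib.unified_diff(
            before_html.splitlines(),
            after_html.splitlines(),
            fromfile="before",
            tofile="after",
            lineterm="",
            n=0,  # no context lines: show only what changed
        )
    )


# Hypothetical snapshots: the title tag was corrected by the deployment.
before = "<head>\n<title>Old title</title>\n</head>"
after = "<head>\n<title>New title</title>\n</head>"
for line in source_diff(before, after):
    print(line)
```

Lines starting with `-` were removed and lines starting with `+` were added, so the diff shows at a glance whether the expected change is present.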

For sites with JavaScript rendering, use the Search Console URL inspection tool to confirm that Googlebot is properly rendering the fix.

- Use a Chrome (or Chromium) installation or profile without extensions that could interfere with audits

- Master the Ctrl+U (View Source) and F12 (DevTools) shortcuts; on macOS, use Cmd+Option+U and Cmd+Option+I

- Systematically compare raw HTML vs final DOM

- Use the Network tab to track redirects and errors

- Validate meta tags, heading structure, structured data in the inspector

- Cross-check with the Search Console inspection tool for critical pages

- Train developers to reproduce your diagnostics in DevTools

❓ Frequently Asked Questions

What is the difference between View Source and the DevTools inspector?

Does DevTools show exactly what Googlebot sees?

Are these tools sufficient to audit a 10,000-page site?

How do I check whether my JavaScript content is properly indexed?

Which DevTools tabs should be prioritized for SEO?

🎥 From the same video 11

Other SEO insights extracted from this same Google Search Central video · published on 22/03/2022
