Official statement

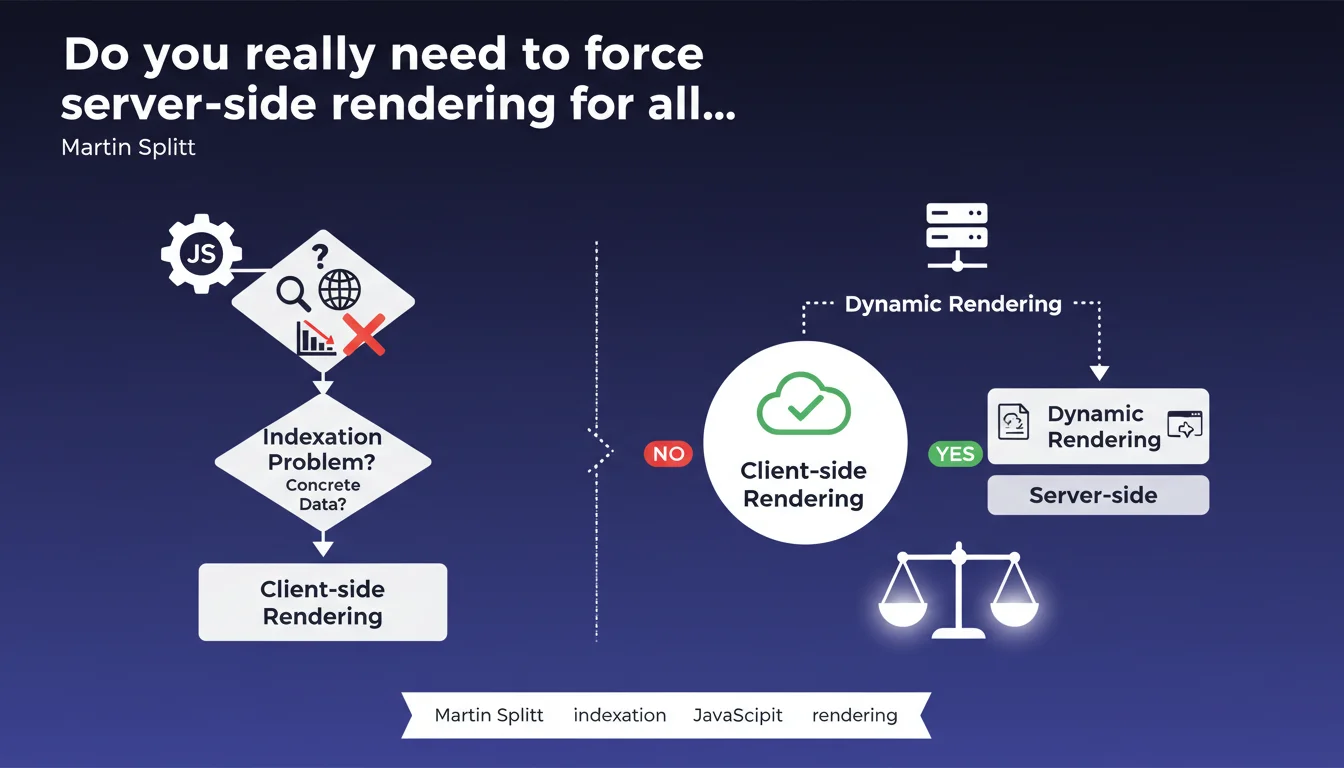

Google asserts that server-side rendering (SSR) and dynamic rendering are not systematically necessary for JavaScript sites. These solutions should only be implemented if concrete data reveals a genuine indexation problem, not by default or out of fear.

What you need to understand

Why is Google questioning the necessity of SSR?

For years, the SEO community has lived in fear of JavaScript. The prevailing idea: Googlebot can't handle JS, so you absolutely must use SSR or dynamic rendering to secure indexation. Martin Splitt challenges this belief.

Google states that its engine now handles JavaScript rendering correctly in the majority of cases. SSR becomes a workaround: useful in certain contexts, but not a universal obligation. The problem is that these solutions have been deployed far too often as a precaution, without verifying whether a real problem actually existed.

What exactly is dynamic rendering?

Dynamic rendering consists of serving two versions of the same page: pre-rendered HTML for bots, JavaScript for users. It's a hybrid solution, less heavy than full SSR, but it adds a layer of complexity.
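To make the mechanism concrete, here is a minimal sketch of the routing decision behind dynamic rendering. The crawler token list and the two variant names are illustrative assumptions, not part of any Google or framework API; a real setup would typically sit in a reverse proxy or middleware and hand crawlers off to a pre-rendering service.

```python
import re

# Hypothetical, deliberately short list of crawler tokens. A production
# setup would maintain a longer list and ideally verify crawler IPs too.
BOT_PATTERN = re.compile(
    r"googlebot|bingbot|duckduckbot|baiduspider|yandex", re.IGNORECASE
)


def is_crawler(user_agent: str) -> bool:
    """Return True when the User-Agent header looks like a known crawler."""
    return bool(BOT_PATTERN.search(user_agent or ""))


def choose_variant(user_agent: str) -> str:
    """Pick which version of the page to serve under dynamic rendering."""
    # Crawlers get static, pre-rendered HTML; everyone else gets the
    # JavaScript application and renders it in the browser.
    return "prerendered_html" if is_crawler(user_agent) else "client_side_app"


if __name__ == "__main__":
    googlebot = "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"
    chrome = "Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 Chrome/120.0 Safari/537.36"
    print(choose_variant(googlebot))  # prerendered_html
    print(choose_variant(chrome))     # client_side_app
```

Even in this toy form, the extra moving part is visible: two code paths must return the same content, and the crawler list has to be kept up to date.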

Google tolerates this approach — it doesn't deceive anyone since the content remains identical. But it becomes superfluous if Googlebot can already interpret JavaScript correctly. And this is where many projects get bogged down: they're solving a problem that no longer exists.

In what cases does SSR remain relevant?

Splitt doesn't say that SSR is useless — he says it should not be systematic. Some sites genuinely need it: complex applications with excessively long client-side rendering times, sites with thousands of dynamically generated pages, or contexts where crawl budget is extremely tight.

But the real question: have you verified that your site is actually problematic before overhauling everything? Because if Google is already indexing your pages correctly, you're wasting time and money deploying heavier infrastructure.

- SSR is not a guarantee of indexation — it addresses a specific problem.

- Googlebot handles modern JavaScript, even if certain edge cases remain.

- Dynamic rendering remains tolerated, but becomes redundant if the bot is already indexing correctly.

- Concrete indexation data should guide the decision, not beliefs or excessive precautions.

SEO Expert opinion

Is this statement consistent with real-world observations?

Yes and no. On well-built sites with clean JavaScript and modern frameworks (Next.js, Nuxt, etc.), we indeed observe that Google indexes without issues. Testing shows that Googlebot renders pages, follows links, extracts content. Nothing to complain about.

But, and this is a big but, there remain contexts where things go wrong: sites with poorly implemented lazy loading, single-page applications whose client-side rendering takes more than 5 seconds, and exotic, poorly documented frameworks. In these cases, SSR or dynamic rendering saves the day. [To verify]: Google provides no precise threshold for determining when rendering becomes problematic.

What nuances should be added to this statement?

Splitt is right to say you shouldn't deploy SSR on principle. But he oversimplifies somewhat. The real issue is rendering speed and JavaScript code quality. If your site takes 8 seconds to display content on the client side, Googlebot will struggle — even though it technically knows how to handle JS.

Another point: this statement is mainly aimed at sites in the design phase. If you already have SSR in place and everything works, there's no reason to break it. The mistake would be to interpret this statement as a green light to revert to pure client-side rendering without prior analysis.

In what cases does this rule not apply?

Very large e-commerce sites with thousands of dynamically generated product pages often need SSR to guarantee rapid and complete indexation. The same applies to news sites where content changes every hour: SSR accelerates content availability for bots.

Another exception: sites with limited crawl budget. If Google visits infrequently and client-side rendering takes time, you risk missing indexation opportunities. In these cases, SSR or dynamic rendering remains relevant, not out of dogma, but out of pragmatism.

Practical impact and recommendations

What should you concretely do before deciding?

First step: verify your current indexation status. Use the URL Inspection tool in Search Console and compare the raw HTML with the rendered DOM. If Google already sees your content, there's no need to overhaul everything.
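The raw-versus-rendered comparison can be partly automated. The sketch below assumes you have already saved both versions as strings (for example, View Source for the raw HTML and the rendered HTML copied from the URL Inspection tool or DevTools). It uses only Python's standard `html.parser`; the class and function names are hypothetical, and the word-level diff is a rough heuristic rather than a faithful model of what Google indexes.

```python
from html.parser import HTMLParser


class PageSummary(HTMLParser):
    """Collect visible words and link targets from an HTML string."""

    SKIPPED = {"script", "style", "noscript", "template"}

    def __init__(self):
        super().__init__()
        self.words = set()
        self.links = set()
        self._skip_depth = 0  # > 0 while inside a tag whose text we ignore

    def handle_starttag(self, tag, attrs):
        if tag in self.SKIPPED:
            self._skip_depth += 1
        elif tag == "a":
            href = dict(attrs).get("href")
            if href:
                self.links.add(href)

    def handle_endtag(self, tag):
        if tag in self.SKIPPED and self._skip_depth > 0:
            self._skip_depth -= 1

    def handle_data(self, data):
        if self._skip_depth == 0:
            self.words.update(data.lower().split())


def summarize(html):
    parser = PageSummary()
    parser.feed(html)
    parser.close()
    return parser


def javascript_only_content(raw_html, rendered_html):
    """Return words and links present after rendering but absent from raw HTML."""
    raw, rendered = summarize(raw_html), summarize(rendered_html)
    return {
        "words": sorted(rendered.words - raw.words),
        "links": sorted(rendered.links - raw.links),
    }


if __name__ == "__main__":
    raw = '<div id="app"></div><script>render()</script>'
    rendered = '<div id="app"><h1>Blue Widget</h1><a href="/widgets/blue">Details</a></div>'
    print(javascript_only_content(raw, rendered))
    # {'words': ['blue', 'details', 'widget'], 'links': ['/widgets/blue']}
```

A long list of JavaScript-only words or links is not a problem in itself. It simply tells you which content depends on rendering, and therefore which pages are worth checking in Search Console.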

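Second action: test rendering performance with tools like Lighthouse or PageSpeed Insights, and check what Google actually renders with the URL Inspection live test or the Rich Results Test (the Mobile-Friendly Test, long used for this, was retired in late 2023). As a rule of thumb, if primary content displays in less than 3 seconds and links are properly detected, you're probably fine.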
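If you export Lighthouse results as JSON, the speed check can be scripted. The sketch below reads the Largest Contentful Paint value from a report and compares it with the 3-second rule of thumb used in this article. Treating LCP as a proxy for "primary content is displayed" is an assumption made here, the threshold is not a Google figure, and the helper names are hypothetical.

```python
import json

# Assumption: the article's 3-second rule of thumb, expressed in milliseconds.
THRESHOLD_MS = 3000


def load_report(path):
    """Load a Lighthouse JSON report from disk."""
    with open(path, encoding="utf-8") as handle:
        return json.load(handle)


def primary_content_time_ms(report):
    """Read Largest Contentful Paint (in ms) from a Lighthouse JSON report."""
    return float(report["audits"]["largest-contentful-paint"]["numericValue"])


def within_budget(report, threshold_ms=THRESHOLD_MS):
    """Return True when primary content appears faster than the threshold."""
    return primary_content_time_ms(report) < threshold_ms


if __name__ == "__main__":
    # Trimmed-down stand-ins for real reports; only the field we read is kept.
    fast = {"audits": {"largest-contentful-paint": {"numericValue": 1840.5}}}
    slow = {"audits": {"largest-contentful-paint": {"numericValue": 7920.0}}}
    print(within_budget(fast))  # True
    print(within_budget(slow))  # False
```

Lab numbers from a single run vary with network and CPU conditions, so it is safer to read them as an indication than as a verdict.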

What mistakes should you absolutely avoid?

Don't deploy SSR or dynamic rendering out of fear or habit. These solutions add complexity: server infrastructure, development time, increased maintenance. If you don't have a proven problem, you're creating technical debt for nothing.

Another trap: believing that SSR solves all SEO problems. A poorly structured site with weak title tags and non-existent internal linking will remain poorly indexed even with SSR. Rendering doesn't replace the fundamentals.

How can you verify your site is compliant without SSR?

- Regularly inspect your pages in Search Console with the live test tool

- Compare raw HTML (View Source) and rendered DOM (DevTools) to detect discrepancies

- Monitor indexation rates in Google Search Console (GSC): if pages disappear, investigate

- Test client-side rendering speed: aim for less than 3 seconds for primary content display

- Verify that internal links generated in JavaScript are properly crawled (Screaming Frog with JS rendering enabled)

- Analyze server logs to see how Googlebot actually behaves on your site
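For the last item in the checklist, a small script is often enough to get a first picture of Googlebot's behavior. This sketch assumes your server writes the common "combined" access log format (the default style for Apache and nginx); the function names are hypothetical. It matches on the User-Agent string only, which can be spoofed, so treat the counts as a first approximation.

```python
import re
from collections import Counter

# Assumes the "combined" access log format used by Apache and nginx.
LOG_LINE = re.compile(
    r'^(?P<ip>\S+) \S+ \S+ \[(?P<time>[^\]]+)\] '
    r'"(?P<method>\S+) (?P<path>\S+) [^"]*" (?P<status>\d{3}) \S+ '
    r'"[^"]*" "(?P<agent>[^"]*)"'
)


def googlebot_hits(lines):
    """Yield (path, status) for requests whose User-Agent mentions Googlebot."""
    for line in lines:
        match = LOG_LINE.match(line)
        if match and "googlebot" in match.group("agent").lower():
            yield match.group("path"), int(match.group("status"))


def summarize_crawl(lines):
    """Count Googlebot requests per path and per HTTP status code."""
    paths, statuses = Counter(), Counter()
    for path, status in googlebot_hits(lines):
        paths[path] += 1
        statuses[status] += 1
    return paths, statuses


if __name__ == "__main__":
    bot = "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"
    sample = [
        f'66.249.66.1 - - [22/Mar/2022:10:00:00 +0000] "GET /products/1 HTTP/1.1" 200 5123 "-" "{bot}"',
        f'66.249.66.1 - - [22/Mar/2022:10:00:05 +0000] "GET /app.js HTTP/1.1" 200 88211 "-" "{bot}"',
        '203.0.113.9 - - [22/Mar/2022:10:00:07 +0000] "GET /products/1 HTTP/1.1" 200 5123 "-" "Mozilla/5.0 (Windows NT 10.0) Chrome/120.0"',
        f'66.249.66.1 - - [22/Mar/2022:10:01:00 +0000] "GET /old-page HTTP/1.1" 404 312 "-" "{bot}"',
    ]
    paths, statuses = summarize_crawl(sample)
    print(dict(statuses))  # {200: 2, 404: 1}
    print(sorted(paths))   # ['/app.js', '/old-page', '/products/1']
```

Requests for JavaScript bundles (like `/app.js` in the sample) suggest that the crawler is fetching the resources it needs to render your pages, while repeated 404 or 5xx responses point to crawl budget spent on dead ends.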

Google's message is clear: stop deploying complex solutions without data. Test, measure, adjust. If your indexation works, keep things simple. If you detect a problem, then yes, consider SSR or dynamic rendering — but methodically.

These technical decisions can quickly become complex, especially on modern architectures where JavaScript, performance, and SEO intertwine. If you're uncertain about the best approach for your project, or if you lack visibility into your actual indexation status, support from a specialized web architecture SEO agency can save you time and avoid costly mistakes. A thorough technical audit lets you decide based on facts rather than assumptions.

❓ Frequently Asked Questions

Does SSR systematically improve the SEO of a JavaScript site?

Can I drop SSR if my site already has it?

Is dynamic rendering considered cloaking by Google?

How do I know whether my site needs SSR?

Do Next.js or Nuxt sites necessarily require SSR mode?

🎥 From the same video 11

Other SEO insights extracted from this same Google Search Central video · published on 22/03/2022
