Official statement


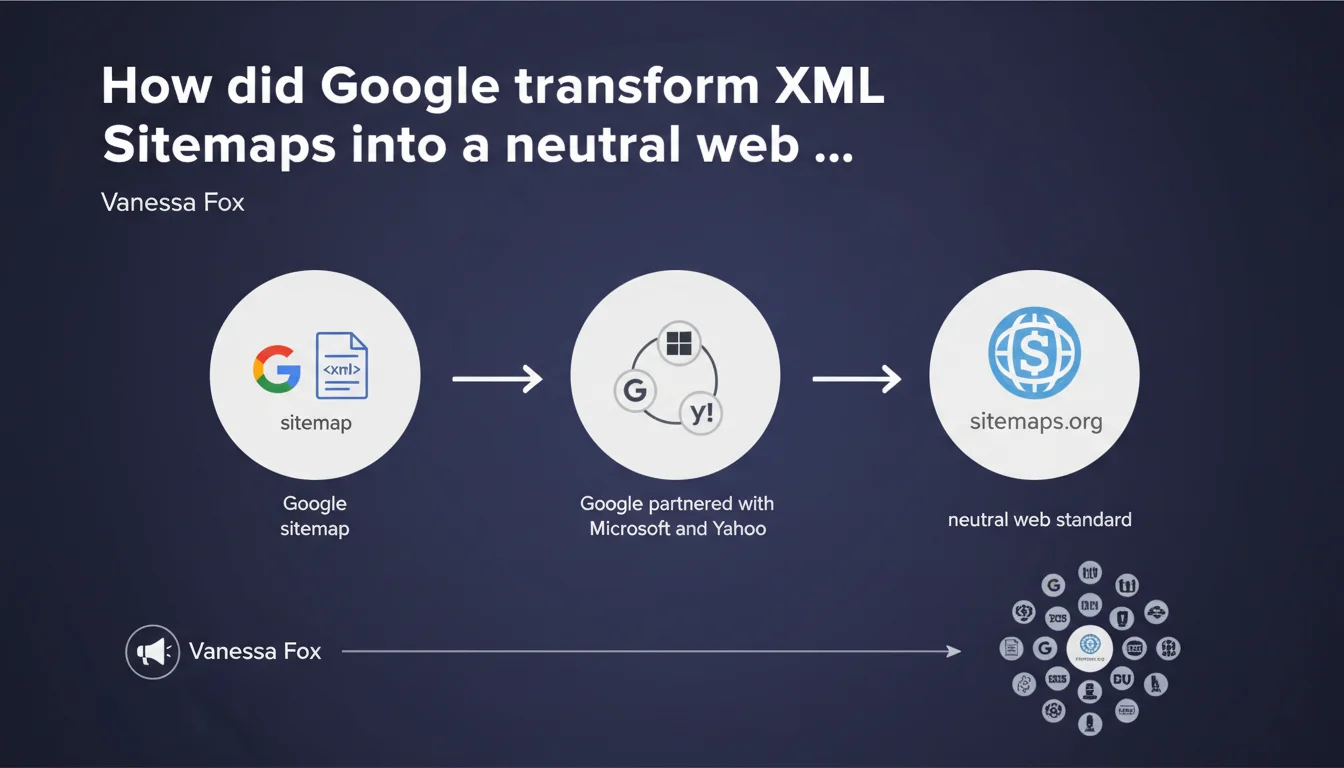

Google collaborated with Microsoft and Yahoo to establish XML Sitemaps as a unified web standard accepted by all major search engines, creating sitemaps.org with neutral branding. This initiative aimed to standardize communication protocols between websites and crawlers, eliminating the need for webmasters to create multiple sitemap versions tailored to different search engines.

What you need to understand

Why establish a shared standard instead of maintaining proprietary protocols?

Before this initiative, nothing prevented each search engine from defining its own sitemap specification. Google could have kept its protocol proprietary and required webmasters to adapt to it specifically.

Instead, Google chose to pool efforts with Microsoft and Yahoo to create a unified protocol. The sitemaps.org website, with its neutral design, symbolizes this commitment: no single search engine claims ownership of the standard.

What concrete benefits does this bring to SEO professionals?

Standardization means that a single XML Sitemap file works for Google, Bing, and historically Yahoo. No more need for technical variations depending on which crawler you're targeting.

This harmonization allowed CMS platforms and sitemap generators to focus on a single standard rather than managing multiple formats. The protocol became predictable and stable over time.

Was Google's initiative truly altruistic?

Let's be honest: Google had everything to gain. By promoting widespread adoption of XML Sitemaps through a shared standard, it made content easier for its crawler to discover.

The more webmasters submit clear, well-structured sitemaps, the fewer resources Google needs to spend discovering hidden or poorly architected URLs. It's an efficiency gain for crawlers from all participating search engines.

- Multi-engine standardization via sitemaps.org to prevent protocol fragmentation

- A single XML Sitemap file compatible with Google, Bing, and Yahoo

- Easier adoption by CMS platforms and tools thanks to a unique, documented standard

- Mutual benefit: simplicity for webmasters, crawling efficiency for search engines

SEO Expert opinion

Is this assessment consistent with real-world practices?

Absolutely. In daily practice, the same sitemap.xml file submitted to Google Search Console and Bing Webmaster Tools works without modification. The protocol is respected by all major players.

What's less often mentioned: although the standard exists, Google and Bing don't necessarily treat sitemaps the same way in terms of indexation priorities or crawl frequency. The format is identical; the interpretation differs.

What points deserve particular attention today?

The neutrality displayed by sitemaps.org is real at the technical protocol level. But in practice, Google remains the dominant search engine — optimizations are primarily done for it, with Bing following in second place.

A common pitfall: assuming that submitting a sitemap guarantees indexation. [To be verified] Google has always been vague about the exact weight sitemaps carry in crawl decisions versus discovery through internal links. The sitemap helps, but it doesn't force anything.

Has the standard evolved since its creation?

The base protocol has remained stable — that's precisely its strength. But Google has added extensions: video sitemaps, image sitemaps, news sitemaps.

These extensions aren't always supported equally by Bing. So we end up with a form of fragmentation, limited but real, despite the initial standard.
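The following is a minimal Python sketch of how an extension sits on top of the base protocol. It adds image entries using Google's image sitemap namespace (`http://www.google.com/schemas/sitemap-image/1.1`). The function name `build_image_sitemap` and the input shape (a page URL mapped to a list of image URLs) are assumptions made for the example. The extension lives in its own XML namespace, so an engine that ignores it should, in principle, still read the standard `<url>`/`<loc>` entries.

```python
import xml.etree.ElementTree as ET

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"
IMAGE_NS = "http://www.google.com/schemas/sitemap-image/1.1"  # Google image extension


def build_image_sitemap(pages):
    """Build a <urlset> where each page lists its images via the image extension.

    pages: dict mapping a page URL to a list of image URLs (hypothetical input shape).
    """
    # Serialize the core namespace as the default and the extension with an "image:" prefix.
    ET.register_namespace("", SITEMAP_NS)
    ET.register_namespace("image", IMAGE_NS)
    urlset = ET.Element(f"{{{SITEMAP_NS}}}urlset")
    for page_url, image_urls in pages.items():
        url = ET.SubElement(urlset, f"{{{SITEMAP_NS}}}url")
        ET.SubElement(url, f"{{{SITEMAP_NS}}}loc").text = page_url
        for image_url in image_urls:
            image = ET.SubElement(url, f"{{{IMAGE_NS}}}image")
            ET.SubElement(image, f"{{{IMAGE_NS}}}loc").text = image_url
    return '<?xml version="1.0" encoding="UTF-8"?>\n' + ET.tostring(urlset, encoding="unicode")


if __name__ == "__main__":
    print(build_image_sitemap({"https://example.com/product": ["https://example.com/img/product.jpg"]}))
```

The base `<loc>` stays in the sitemaps.org namespace. Only the `image:*` elements depend on the extension, which is where engine support can diverge.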

Practical impact and recommendations

What should you actually do with your XML sitemap?

Generate a sitemap.xml file compliant with the sitemaps.org protocol and submit it to Google Search Console and Bing Webmaster Tools. Both platforms use the same format, so a single file is sufficient.
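As a rough illustration of what "compliant with the sitemaps.org protocol" means in practice, here is a minimal generator using only Python's standard library. The function name `build_sitemap` and its `(url, optional date)` input are assumptions for the example, not part of any tool mentioned above.

```python
from datetime import date
from typing import Iterable, Optional, Tuple
import xml.etree.ElementTree as ET

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_sitemap(entries: Iterable[Tuple[str, Optional[date]]]) -> str:
    """Serialize (absolute URL, optional last-modified date) pairs as a sitemaps.org <urlset>."""
    ET.register_namespace("", SITEMAP_NS)  # emit xmlns="..." without a prefix
    urlset = ET.Element(f"{{{SITEMAP_NS}}}urlset")
    for loc, lastmod in entries:
        url = ET.SubElement(urlset, f"{{{SITEMAP_NS}}}url")
        # ElementTree escapes "&" and "<" in text content, as the protocol requires.
        ET.SubElement(url, f"{{{SITEMAP_NS}}}loc").text = loc
        if lastmod is not None:
            # W3C Datetime format; a plain YYYY-MM-DD date is a valid form.
            ET.SubElement(url, f"{{{SITEMAP_NS}}}lastmod").text = lastmod.isoformat()
    return '<?xml version="1.0" encoding="UTF-8"?>\n' + ET.tostring(urlset, encoding="unicode")
```

To use it, write the returned string to `sitemap.xml` as UTF-8, then submit that one URL in both Google Search Console and Bing Webmaster Tools.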

Regularly verify that the sitemap contains only canonical URLs, accessible with a 200 status code, without redirects. Exclude unnecessary paginated URLs, duplicate content, or pages blocked by robots.txt.
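One way to apply the "canonical, 200, no redirect" rule is to filter crawl results before building the sitemap. The sketch below assumes a hypothetical `CrawlRecord` exported from a site crawler. It also assumes that URLs and canonicals are already normalized to absolute form, so a plain string comparison is enough. Real sites may need extra normalization for trailing slashes, letter case, or tracking parameters.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class CrawlRecord:
    """Hypothetical per-URL result exported from a site crawler."""
    url: str
    status: int               # status of the first response, redirects NOT followed
    canonical: Optional[str]  # absolute href of <link rel="canonical">, if present


def is_sitemap_eligible(rec: CrawlRecord) -> bool:
    """Keep only URLs that answer 200 directly and are their own canonical."""
    if rec.status != 200:
        return False  # drops 3xx redirects as well as 4xx/5xx errors
    # A page whose canonical points elsewhere is a duplicate or a variant.
    return rec.canonical is None or rec.canonical == rec.url


def sitemap_urls(records: List[CrawlRecord]) -> List[str]:
    return [r.url for r in records if is_sitemap_eligible(r)]
```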

What errors must you absolutely avoid?

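Never include URLs marked noindex or blocked by robots.txt in your sitemap. Search Console flags such submitted URLs in its indexing reports. The signals also contradict each other: the sitemap says "crawl this" while the page or robots.txt says "don't index" or "don't crawl", which can slow down crawling.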
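A pre-submission check for these two conflicts can be sketched with the standard library. It assumes you already hold each page's HTML, its `X-Robots-Tag` header value, and the site's robots.txt text. The function names are hypothetical. Treat the result as an approximation for two reasons. First, `urllib.robotparser` does plain prefix matching and applies the first matching rule, without Google's `*`/`$` wildcards or longest-match precedence. Second, user-agent-scoped header values such as `googlebot: noindex` are not parsed here.

```python
from html.parser import HTMLParser
from typing import List
from urllib.robotparser import RobotFileParser


class RobotsMetaParser(HTMLParser):
    """Collect directives from <meta name="robots"> and <meta name="googlebot">."""

    def __init__(self):
        super().__init__()
        self.directives = set()

    def handle_starttag(self, tag, attrs):
        if tag != "meta":
            return
        a = {name: (value or "") for name, value in attrs}
        if a.get("name", "").lower() in ("robots", "googlebot"):
            self.directives.update(d.strip().lower() for d in a.get("content", "").split(","))


def has_noindex(html: str, x_robots_tag: str = "") -> bool:
    """True if meta robots tags or a plain X-Robots-Tag value contain noindex or none."""
    parser = RobotsMetaParser()
    parser.feed(html)
    header = {d.strip().lower() for d in x_robots_tag.split(",")}
    return bool({"noindex", "none"} & (parser.directives | header))


def blocked_by_robots(robots_txt: str, url: str, user_agent: str = "Googlebot") -> bool:
    """Check url against robots.txt rules with the stdlib parser (prefix matching only)."""
    rp = RobotFileParser()
    rp.parse(robots_txt.splitlines())
    return not rp.can_fetch(user_agent, url)


def sitemap_conflicts(url: str, html: str, robots_txt: str, x_robots_tag: str = "") -> List[str]:
    """List the reasons this URL should be kept out of the sitemap."""
    problems = []
    if blocked_by_robots(robots_txt, url):
        problems.append("blocked by robots.txt")
    if has_noindex(html, x_robots_tag):
        problems.append("noindex")
    return problems
```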

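Avoid massive unsegmented sitemaps. The protocol sets hard limits of 50,000 URLs and 50 MB (uncompressed) per file. Beyond either limit, split the URLs into multiple files and reference them from a sitemap index. Smaller, thematically segmented files are also easier to monitor, because webmaster tools report statistics per submitted sitemap.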
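The split-plus-index approach can be sketched in Python. The file names `sitemap-N.xml` and `sitemap-index.xml` and the `base_url` parameter are hypothetical choices for the example. The 50 MB check is applied to the uncompressed UTF-8 bytes.

```python
import xml.etree.ElementTree as ET
from typing import Dict, List

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"
MAX_URLS = 50_000
MAX_BYTES = 50 * 1024 * 1024  # 52,428,800 bytes, measured uncompressed


def _serialize(root: ET.Element) -> bytes:
    xml_text = '<?xml version="1.0" encoding="UTF-8"?>\n' + ET.tostring(root, encoding="unicode")
    return xml_text.encode("utf-8")


def _urlset(urls: List[str]) -> bytes:
    urlset = ET.Element(f"{{{SITEMAP_NS}}}urlset")
    for u in urls:
        url = ET.SubElement(urlset, f"{{{SITEMAP_NS}}}url")
        ET.SubElement(url, f"{{{SITEMAP_NS}}}loc").text = u
    return _serialize(urlset)


def split_sitemaps(urls: List[str], base_url: str, max_urls: int = MAX_URLS) -> Dict[str, bytes]:
    """Return {filename: content}: numbered child sitemaps plus one sitemap index."""
    ET.register_namespace("", SITEMAP_NS)
    files: Dict[str, bytes] = {}
    index = ET.Element(f"{{{SITEMAP_NS}}}sitemapindex")
    for n, start in enumerate(range(0, len(urls), max_urls), start=1):
        name = f"sitemap-{n}.xml"  # hypothetical naming scheme
        body = _urlset(urls[start:start + max_urls])
        if len(body) > MAX_BYTES:
            raise ValueError(f"{name} exceeds 50 MB uncompressed; lower max_urls")
        files[name] = body
        entry = ET.SubElement(index, f"{{{SITEMAP_NS}}}sitemap")
        ET.SubElement(entry, f"{{{SITEMAP_NS}}}loc").text = f"{base_url}/{name}"
    files["sitemap-index.xml"] = _serialize(index)
    return files
```

You would then submit only the index URL. The index file is itself capped at 50,000 child sitemaps, which this sketch does not enforce.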

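Update the <lastmod> tag when a page's content actually changes, and only then. Google has stated that it uses <lastmod> only when the value is consistently and verifiably accurate. Fake dates, or dates that are never updated, therefore make the tag worthless as a signal for your site.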
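One way to move <lastmod> only on real changes is to hash each page's main content and compare the hash with the previously stored one. In this sketch, the `store` dictionary stands in for a hypothetical persistence layer. It also assumes the caller has already stripped elements that change on every render (footers, timestamps, ads) before hashing.

```python
import hashlib
from datetime import datetime, timezone
from typing import Dict, Tuple

# Hypothetical persisted state: url -> (sha256 of main content, last <lastmod> value)
Store = Dict[str, Tuple[str, str]]


def lastmod_for(store: Store, url: str, main_content: str, now: datetime) -> str:
    """Return the <lastmod> value for url, moving it forward only when the content changed."""
    digest = hashlib.sha256(main_content.encode("utf-8")).hexdigest()
    previous = store.get(url)
    if previous is not None and previous[0] == digest:
        return previous[1]  # same content: keep the previous date
    # W3C Datetime with an explicit UTC offset, e.g. 2024-03-01T10:00:00+00:00
    lastmod = now.astimezone(timezone.utc).isoformat(timespec="seconds")
    store[url] = (digest, lastmod)
    return lastmod
```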

How do you verify that your sitemap is being processed correctly?

Check the Sitemaps report in Google Search Console for the number of discovered URLs, detected errors, and processing status. Cross-reference with Bing Webmaster Tools for additional insights.

Analyze server logs to verify that Googlebot and Bingbot regularly access your sitemap.xml file. A sitemap never crawled often indicates a configuration problem (robots.txt, redirects).
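A first pass at this log check could look like the Python sketch below. It assumes the Apache/Nginx "combined" log format and a sitemap served at `/sitemap.xml`; adjust `sitemap_paths` for your setup. It counts requests per bot and status code, so a 301 or 404 on the sitemap stands out. User-agent strings can be spoofed, and confirming real Googlebot or Bingbot traffic (for example via reverse DNS) is a separate step that is not done here.

```python
import re
from collections import Counter

# Combined Log Format: host ident user [time] "request" status size "referer" "user-agent"
LOG_RE = re.compile(
    r'^(?P<ip>\S+) \S+ \S+ \[(?P<time>[^\]]+)\] "(?P<method>\S+) (?P<path>\S+) [^"]*" '
    r'(?P<status>\d{3}) \S+ "[^"]*" "(?P<ua>[^"]*)"'
)


def sitemap_fetches(lines, sitemap_paths=("/sitemap.xml",)):
    """Count (bot, HTTP status) pairs for crawler requests to the sitemap paths."""
    counts = Counter()
    for line in lines:
        m = LOG_RE.match(line)
        if not m or m["path"] not in sitemap_paths:
            continue
        ua = m["ua"]
        if "Googlebot" in ua:
            bot = "Googlebot"
        elif "bingbot" in ua:
            bot = "Bingbot"
        else:
            continue  # not one of the two crawlers we track
        counts[(bot, m["status"])] += 1
    return counts
```

An empty result over several days, or only non-200 statuses, is the "never crawled" symptom described above.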

- Generate a sitemap.xml compliant with the sitemaps.org standard (max 50,000 URLs and 50 MB uncompressed per file)

- Submit to Google Search Console and Bing Webmaster Tools

- Include only canonical URLs accessible with a 200 status, without noindex or robots.txt blocks

- Update the <lastmod> tag only when content actually changes

- Segment into multiple files and use a sitemap index if necessary

- Monitor processing reports in webmaster tools to detect errors and anomalies

- Check logs to confirm crawlers regularly access the sitemap

❓ Frequently Asked Questions

Is an XML sitemap required to get indexed by Google?

Do you need to submit different sitemaps for Google and Bing?

What is the maximum recommended size for a sitemap file?

Should I include every page of my site in the sitemap?

Does the <lastmod> tag really affect crawling?

Source: Google Search Central video, published on 22/09/2022.
