Official statement

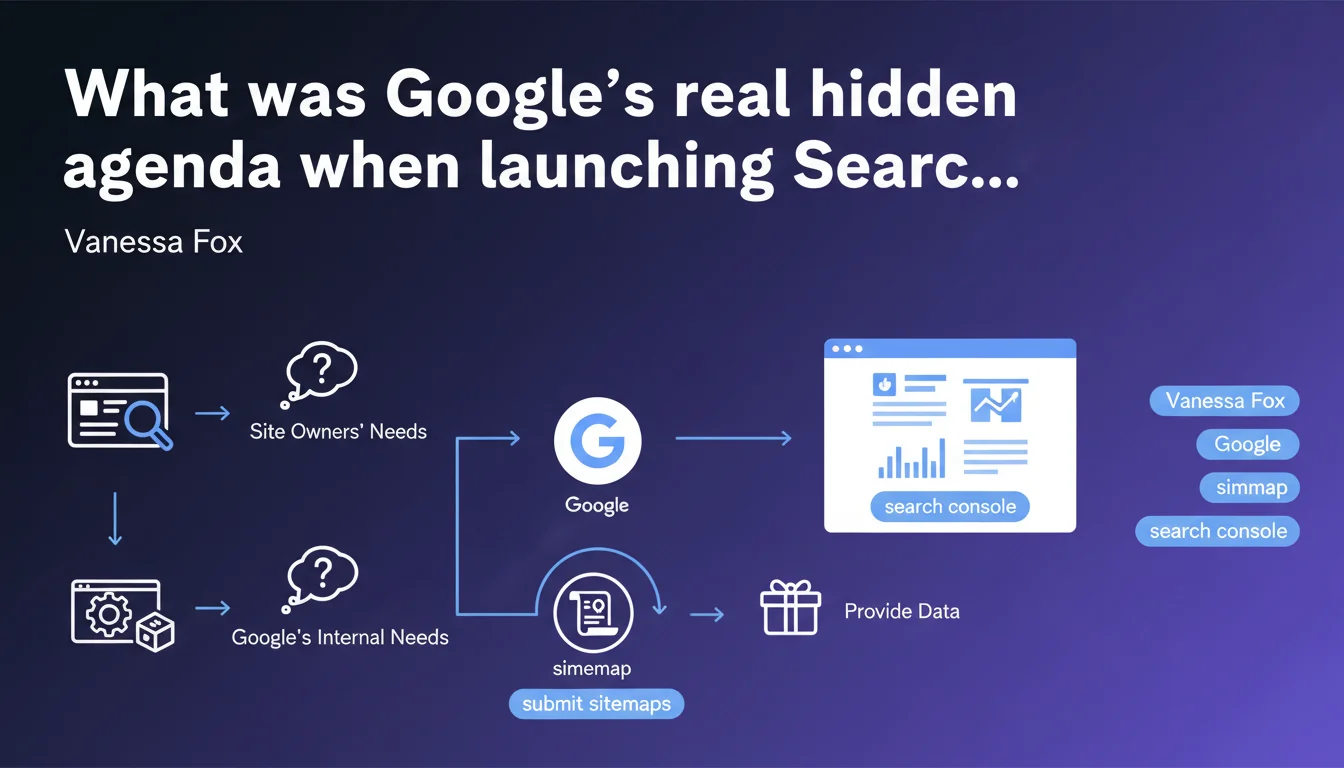

Google Webmaster Tools (now Search Console) wasn't created out of pure altruism. The initial objective: push webmasters to submit sitemaps to facilitate Google's crawling. The data provided to site owners was the bait to secure this collaboration. A win-win approach that shaped the tool we know today.

What you need to understand

What exactly was Google looking for when it created this tool?

Vanessa Fox, who led the initial Google Webmaster Tools project, is crystal clear on this. The primary objective wasn't to provide a philanthropic service to webmasters. Google wanted site owners to submit their XML sitemaps.

Why this obsession with sitemaps? Because they make crawling much easier. Instead of discovering URLs only by following links from page to page, Google receives a list of the URLs the site owner wants crawled. It's faster, more efficient, and less resource-intensive.

What was the strategy to convince webmasters?

Fox's team understood that a one-way approach wouldn't work. Webmasters weren't going to submit sitemaps just to please Google. There had to be a value exchange.

The solution: provide crawl and indexation data that site owners had never had access to before. Crawl errors, index coverage, queries driving traffic — all valuable information that justified the effort of submitting a sitemap.

Did this approach shape the tool's evolution?

Absolutely. The initial philosophy, understanding what each side needs from the other, structured subsequent development. Each new Search Console feature serves a dual purpose: help webmasters AND make Google's work easier.

Core Web Vitals, Mobile Usability, structured data — all these reports push site owners to improve aspects that also make Google's algorithmic work easier. The tool remains a bridge of mutual interests, not charity.

- Google wanted sitemaps to optimize its crawling and reduce infrastructure costs

- Webmasters received exclusive data on how Google perceived their site

- The value exchange remains the founding principle of Search Console today

- Every added feature serves both Google and site owners simultaneously

SEO Expert opinion

Is this transparency about initial motivations surprising?

Not really. Anyone who's worked seriously with Search Console has already figured out that the tool isn't a disinterested gift. What's interesting is that Vanessa Fox openly admits it.

That contrasts with Google's usual marketing discourse, which presents its tools as services rendered to the web community. Here, we have frank acknowledgment: we needed sitemaps, we built a tool to encourage their submission. Period.

Did the audience-centric approach really work?

In the long run, yes — but with obvious biases. Search Console has indeed grown richer with useful features. But it remains fundamentally limited by what serves Google's interests.

Concrete example: keyword data is sampled and incomplete. Google could arguably provide fuller stats, but that might cannibalize Google Ads. External link reports show only a small sample compared to what Ahrefs or Semrush crawl. One point remains unverified: Google reportedly says these limits protect its intellectual property, but the real reason may lie elsewhere.

Should we question the reliability of the provided data?

Let's be honest: Search Console remains the most reliable source for understanding how Google sees your site. No third-party tool can rival it on crawl data, indexation, or actual SERP performance.

But — and this is crucial — never forget that you're consulting a tool controlled by the search engine itself. The metrics displayed, their granularity, their presentation: it all serves Google's objectives first. When data is missing or a report is vague, ask yourself why.

Practical impact and recommendations

What should you actually do with this information?

First, submit a clean, up-to-date XML sitemap. That was the tool's initial objective, and it remains relevant today. If your sitemap contains noindex URLs, redirects, or 404 errors, you're sabotaging your crawl budget.
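As a rough illustration of what "clean" means here, the sketch below parses a sitemap and flags entries that redirect, return an error, or carry a noindex directive. It is a minimal example under stated assumptions: the `observations` mapping (URL to HTTP status and noindex flag) is hypothetical input that you would collect with your own crawler, and the sample URLs are placeholders.

```python
import xml.etree.ElementTree as ET

# Namespace defined by the sitemaps.org protocol.
SITEMAP_NS = {"sm": "http://www.sitemaps.org/schemas/sitemap/0.9"}

def sitemap_urls(xml_text):
    """Return the <loc> values listed in a sitemap document."""
    root = ET.fromstring(xml_text)
    return [
        loc.text.strip()
        for loc in root.findall("sm:url/sm:loc", SITEMAP_NS)
        if loc.text
    ]

def audit(urls, observations):
    """Flag sitemap URLs that should not be listed.

    `observations` maps URL -> (http_status, is_noindex). This is a
    hypothetical structure filled in by your own crawl, not an API.
    """
    problems = {}
    for url in urls:
        if url not in observations:
            problems[url] = "not checked"
            continue
        status, noindex = observations[url]
        if 300 <= status < 400:
            problems[url] = "redirect"
        elif status >= 400:
            problems[url] = "error"
        elif noindex:
            problems[url] = "noindex"
    return problems

# Placeholder sitemap and crawl results, for illustration only.
SAMPLE = """<urlset xmlns="http://www.sitemaps.org/schemas/sitemap/0.9">
  <url><loc>https://example.com/</loc></url>
  <url><loc>https://example.com/old-page</loc></url>
  <url><loc>https://example.com/private</loc></url>
  <url><loc>https://example.com/gone</loc></url>
</urlset>"""

OBSERVED = {
    "https://example.com/": (200, False),
    "https://example.com/old-page": (301, False),
    "https://example.com/private": (200, True),
    "https://example.com/gone": (404, False),
}

if __name__ == "__main__":
    for url, reason in audit(sitemap_urls(SAMPLE), OBSERVED).items():
        print(f"{reason:10} {url}")
```

Any URL that shows up in the output is a candidate for removal from the sitemap, or for a fix on the page itself.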

Next, use all the data available in Search Console, not just queries and clicks. Index coverage, Core Web Vitals, crawl errors, ignored canonicals: every report reveals how Google interprets your site.

What mistakes must you absolutely avoid?

Never treat Search Console as absolute truth. Systematically cross-reference with your server logs to identify what Google actually crawls versus what it shows you. Gaps are often revealing.
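One possible way to run that comparison is sketched below. It assumes access logs in the common "combined" format and a list of paths exported by hand from a Search Console report; the function names and sample lines are illustrative. Matching on the user-agent string alone can be spoofed, so a real audit would also verify that the requests come from Google, a step omitted here.

```python
import re

# Matches the request, status, and user-agent fields of a combined-format log line.
LOG_PATTERN = re.compile(
    r'"(?:GET|HEAD) (?P<path>\S+) HTTP/[^"]*" (?P<status>\d{3}) \S+ '
    r'"[^"]*" "(?P<agent>[^"]*)"'
)

def googlebot_paths(log_lines):
    """Collect the paths requested by clients identifying as Googlebot."""
    paths = set()
    for line in log_lines:
        match = LOG_PATTERN.search(line)
        if match and "Googlebot" in match.group("agent"):
            paths.add(match.group("path"))
    return paths

def crawl_gaps(logged_paths, reported_paths):
    """Compare paths seen in logs with paths listed in a Search Console export."""
    logged, reported = set(logged_paths), set(reported_paths)
    return {
        "crawled_not_reported": sorted(logged - reported),
        "reported_not_crawled": sorted(reported - logged),
    }

# Placeholder log lines and export, for illustration only.
SAMPLE_LOG = [
    '66.249.66.1 - - [22/Sep/2022:10:00:00 +0000] "GET /pricing HTTP/1.1" 200 5120 '
    '"-" "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"',
    '66.249.66.1 - - [22/Sep/2022:10:00:05 +0000] "GET /old-page HTTP/1.1" 301 0 '
    '"-" "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"',
    '203.0.113.7 - - [22/Sep/2022:10:01:00 +0000] "GET /blog HTTP/1.1" 200 8042 '
    '"-" "Mozilla/5.0 (X11; Linux x86_64; rv:104.0) Gecko/20100101 Firefox/104.0"',
]
REPORTED = ["/pricing", "/blog"]

if __name__ == "__main__":
    print(crawl_gaps(googlebot_paths(SAMPLE_LOG), REPORTED))
```

Paths that Googlebot requested but that never appear in your exports, and paths that are reported but absent from the logs for the same period, are the gaps worth investigating first.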

Also avoid limiting yourself to performance data (impressions, clicks, CTR). These metrics are useful but tell you nothing about structural problems that prevent your content from being properly crawled or indexed.

How can you verify that your Search Console usage is optimal?

Ask yourself these questions: do you consult the index coverage reports at least once a week? Have you set up alerts for critical errors? Do you cross-reference Search Console data with your analytics to detect inconsistencies?

If you answer no to any of these, you're underutilizing the tool. And you're probably leaving significant opportunities on the table.

- Submit a clean XML sitemap with no blocked, redirected, or error URLs

- Regularly check the index coverage report to identify indexation issues

- Cross-reference Search Console data with server logs to detect crawl gaps

- Configure alerts for critical errors (indexation drops, 404 spikes, etc.)

- Use Core Web Vitals reports to anticipate ranking impacts

- Never treat Search Console as the sole source — always triangulate with analytics and third-party tools
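The alerting item in the checklist above can be approximated with a simple threshold check on numbers you export yourself. The sketch below is only an illustration: it assumes a hand-built list of daily indexed-page counts, and the 20% threshold and sample figures are arbitrary placeholders rather than recommended values.

```python
def detect_drops(daily_counts, threshold=0.2):
    """Return (day, previous, current) for each day-over-day drop above `threshold`.

    `daily_counts` is a chronologically ordered list of (day, count) pairs,
    for example indexed-page totals copied from a coverage report.
    """
    alerts = []
    for (_, previous), (day, current) in zip(daily_counts, daily_counts[1:]):
        # Skip days with no baseline to avoid dividing by zero.
        if previous > 0 and (previous - current) / previous > threshold:
            alerts.append((day, previous, current))
    return alerts

# Placeholder data, for illustration only.
INDEXED_PAGES = [
    ("2022-09-19", 1000),
    ("2022-09-20", 990),
    ("2022-09-21", 700),
    ("2022-09-22", 690),
]

if __name__ == "__main__":
    for day, previous, current in detect_drops(INDEXED_PAGES):
        print(f"{day}: indexed pages fell from {previous} to {current}")
```

The same function works for 404 counts if you invert the comparison, and the threshold should be tuned to how much your site normally fluctuates.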

❓ Frequently Asked Questions

Is it absolutely necessary to submit an XML sitemap to get properly indexed?

Is Search Console data 100% reliable?

Why do some crawled URLs not appear in Search Console?

Does Search Console replace third-party SEO tools such as Semrush or Ahrefs?

🎥 From the same video 7

Other SEO insights extracted from this same Google Search Central video · published on 22/09/2022
