Official statement


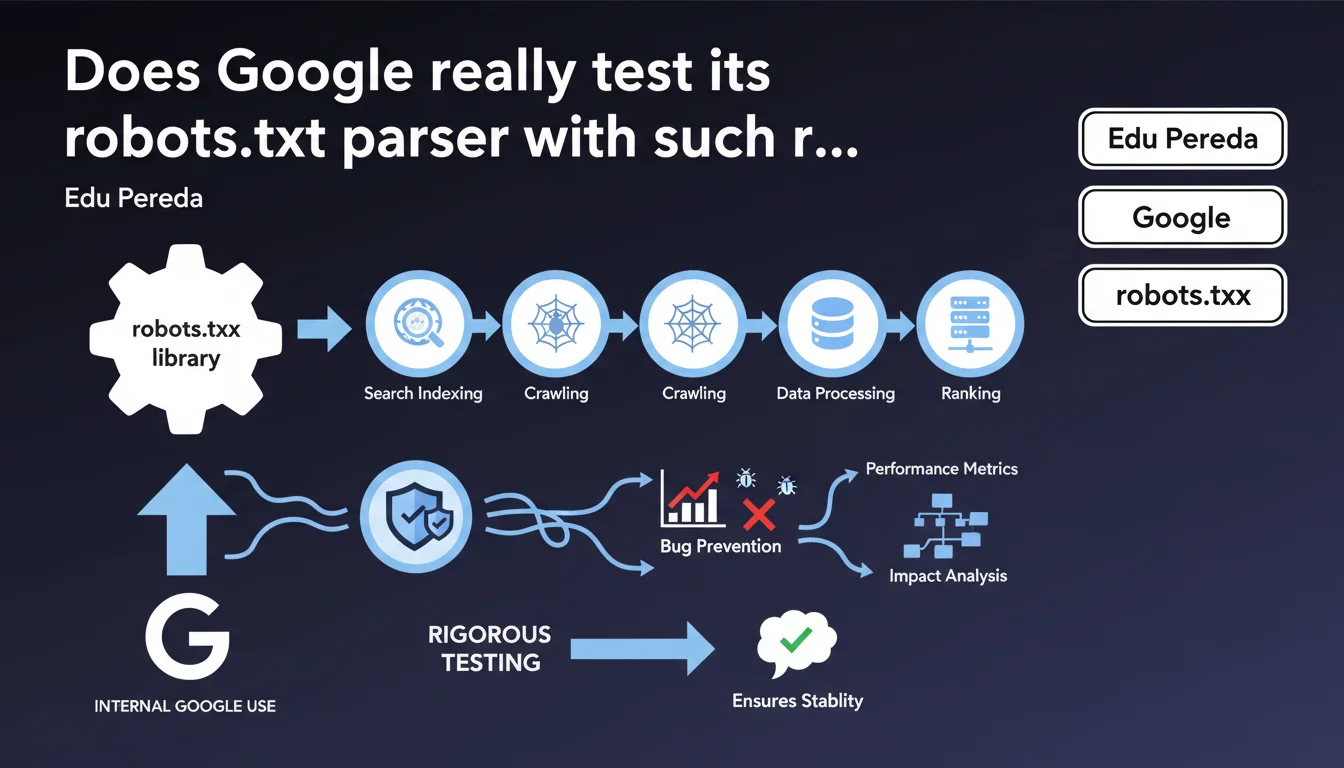

Google's robots.txt parser library is used extensively throughout the search engine's internal infrastructure. Any code modification requires exhaustive testing to prevent performance regressions on critical systems. This statement reveals the strategic importance of the robots.txt file in Google's architecture.

What you need to understand

What is a robots.txt parser and why is it so central at Google?

The robots.txt parser is the software component that reads, analyzes, and interprets the instructions contained in each website's robots.txt file. At Google, this isn't a simple isolated script — it's a shared software library used by many internal systems.

This statement from Edu Pereda reveals that this library is used extensively across Google's infrastructure. The statement does not list the systems involved, but plausible candidates include Googlebot, other crawling systems, validation tools, and JavaScript rendering servers, all relying on the same component to decide what they are allowed to crawl.
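To make the parser's role concrete, here is a minimal sketch using Python's standard-library `urllib.robotparser`. It is not Google's parser (Google's open-source library is written in C++ and its matching rules differ in places), and the rules, user agent, and URLs are made up for illustration.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt content, for illustration only.
ROBOTS_TXT = """\
User-agent: *
Disallow: /private/
"""


def is_allowed(robots_txt: str, user_agent: str, url: str) -> bool:
    """Parse robots.txt text and report whether user_agent may fetch url."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(user_agent, url)


if __name__ == "__main__":
    for url in (
        "https://example.com/private/report.html",
        "https://example.com/blog/post.html",
    ):
        print(url, is_allowed(ROBOTS_TXT, "Googlebot", url))
```

Every system that asks "may I fetch this URL?" goes through a call like `is_allowed`, which is why a change in the shared component affects all of them at once.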

Why does every modification require so much testing?

If a bug or performance regression appears in the parser library, it's not just one service that goes down: dozens of critical systems can be impacted simultaneously. A slowdown of just a few milliseconds, multiplied by billions of daily requests, adds up to a massive operational cost.
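A back-of-the-envelope calculation shows the order of magnitude. The figures below (2 ms of extra latency, 5 billion parser calls per day) are assumptions chosen for illustration, not numbers published by Google.

```python
SECONDS_PER_DAY = 86_400


def extra_compute_days(slowdown_ms: float, calls_per_day: float) -> float:
    """Extra machine time added per calendar day, expressed in days."""
    extra_seconds = (slowdown_ms / 1000.0) * calls_per_day
    return extra_seconds / SECONDS_PER_DAY


if __name__ == "__main__":
    # Hypothetical inputs: 2 ms slower, 5 billion calls per day.
    days = extra_compute_days(slowdown_ms=2, calls_per_day=5_000_000_000)
    print(f"{days:.1f} extra machine-days per day")
```

Under these assumptions, the regression costs roughly 116 machine-days of additional compute every day, which is why even small slowdowns are treated as serious.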

Google must therefore test each modification under real-world conditions, on colossal data volumes, before deploying it to production. This explains why certain evolutions to the robots.txt standard take time to be implemented.

What are the implications for SEO professionals?

This statement confirms that the robots.txt file remains an absolutely central control point in the relationship between a site and Google. It's not a "legacy" file destined to disappear — it's actually a critical component of the crawling infrastructure.

- The robots.txt parser is a library shared by many Google systems

- Any code modification must be tested rigorously to prevent regressions

- This caution explains the slow implementation of new robots.txt directives

- The robots.txt file maintains major strategic importance at Google

SEO Expert opinion

Is this statement consistent with practices observed in the field?

Absolutely. We've observed for years that Google is extremely cautious with robots.txt evolutions. For example, crawl-delay support (widely used by Bing or Yandex) has never been implemented at Google, despite recurring requests.

Similarly, the noindex rule in robots.txt worked unofficially for years without ever being documented, and Google announced well in advance that it would stop honoring it on September 1, 2019. This caution is easier to understand now: touching the parser means touching dozens of production systems.

What nuances should be added to this statement?

The statement remains intentionally vague on one point: what exactly are these "many critical systems" that depend on the parser? Googlebot yes, but what else? Search Console tools? JavaScript rendering systems? Crawlers for Google Images, Google News?

Without this precision, it's difficult to assess the real scope of the "extensiveness" mentioned. [To verify]: does each Google service (Ads, Analytics, etc.) also use this parser to respect robots.txt directives, or only services related to Search?

Another point: Pereda speaks of "performance regressions," but not functional bugs. Yet both types of problems exist. A parser that slows down is problematic, but a parser that misinterprets a directive is equally so — and we've seen concrete cases of misinterpretation of wildcards or complex patterns.

What does this statement reveal about Google's technical architecture?

It points to a shared library rather than independent microservices for robots.txt parsing. This is a classic architecture, but one that creates strong dependencies: a single component serves many internal clients.

This may also make it harder for Google to trial parser modifications on a subset of sites, since every dependent system picks up the same library. Hence the need for exhaustive testing beforehand.

Practical impact and recommendations

What should you actually do with your robots.txt file?

First implication: your robots.txt file must be ultra-reliable. No approximate syntax, no exotic directives, no ambiguous patterns. If Google is this careful about changing its parser, edge-case behavior is unlikely to change quickly, so you might as well make the parser's job easy.

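Systematically test your modifications before putting them into production. The legacy robots.txt Tester in Search Console has since been retired; the robots.txt report now shows how Google fetched and parsed the live file, and Google's open-source parser can be used to check a draft. A syntax error or malformed pattern can unexpectedly block Googlebot.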
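One way to approach such a check is to compare the verdicts of the current and proposed files over a list of URLs that matter to you. The sketch below uses Python's `urllib.robotparser`, which is not Google's parser and applies rules in file order rather than by longest match, so treat its output as a first filter only. The file contents, URLs, and the `changed_verdicts` helper are hypothetical.

```python
from urllib.robotparser import RobotFileParser


def load(robots_txt: str) -> RobotFileParser:
    """Build a parser from robots.txt text held in memory."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser


def changed_verdicts(current_txt, proposed_txt, urls, user_agent="Googlebot"):
    """Return (url, allowed_before, allowed_after) for every URL whose verdict changes."""
    current, proposed = load(current_txt), load(proposed_txt)
    changes = []
    for url in urls:
        before = current.can_fetch(user_agent, url)
        after = proposed.can_fetch(user_agent, url)
        if before != after:
            changes.append((url, before, after))
    return changes


# Hypothetical files: the proposed version accidentally blocks product pages.
CURRENT = "User-agent: *\nDisallow: /cart/\n"
PROPOSED = "User-agent: *\nDisallow: /cart/\nDisallow: /products\n"
KEY_URLS = [
    "https://example.com/",
    "https://example.com/products/shoes",
    "https://example.com/cart/checkout",
]

if __name__ == "__main__":
    for url, before, after in changed_verdicts(CURRENT, PROPOSED, KEY_URLS):
        print(f"{url}: allowed {before} -> {after}")
```

An empty result means none of the listed URLs changes status; any line printed deserves a manual review before deployment.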

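Avoid overly large or complex robots.txt files. Google only processes the first 500 KiB of the file and ignores anything beyond that limit. If you have hundreds of lines of directives, it's probably a sign of an architecture problem, and it is better to fix it at the source than to pile up blocking rules.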

What mistakes should you absolutely avoid?

Don't rely on non-standard or poorly documented directives. If Google has never officially supported them, it is probably because the testing needed to add them to the parser would outweigh the marginal benefit.

Stop using noindex in robots.txt: Google never officially supported this rule and stopped honoring it in 2019. Use the meta robots tag or the X-Robots-Tag HTTP header instead.
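As an illustration of the header-based alternative, here is a minimal sketch of a WSGI middleware that adds `X-Robots-Tag: noindex` to responses under a given path prefix. The `noindex_middleware` name, the `/internal/` prefix, and the demo application are assumptions for the example; most web servers and frameworks offer their own way to set response headers.

```python
def noindex_middleware(app, prefix="/internal/"):
    """Wrap a WSGI app so responses under `prefix` carry X-Robots-Tag: noindex."""

    def wrapped(environ, start_response):
        def patched_start(status, headers, exc_info=None):
            if environ.get("PATH_INFO", "").startswith(prefix):
                headers = list(headers) + [("X-Robots-Tag", "noindex")]
            return start_response(status, headers, exc_info)

        return app(environ, patched_start)

    return wrapped


def demo_app(environ, start_response):
    """Tiny placeholder application used only to demonstrate the middleware."""
    start_response("200 OK", [("Content-Type", "text/plain")])
    return [b"hello"]


def headers_for(path):
    """Call the wrapped demo app for `path` and return its response headers."""
    captured = {}

    def start_response(status, headers, exc_info=None):
        captured["headers"] = dict(headers)

    noindex_middleware(demo_app)({"PATH_INFO": path}, start_response)
    return captured["headers"]


if __name__ == "__main__":
    print(headers_for("/internal/report"))
    print(headers_for("/blog/post"))
```

Keep in mind that the crawler has to fetch the page to see the header, so a URL carrying `noindex` must not also be blocked in robots.txt.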

Watch out for complex wildcards in patterns: not every parser interprets them the same way, and Google's documentation only covers the * and $ characters. Prefer simple and explicit rules.
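The following sketch illustrates the matching behavior Google documents for a single user-agent group: `*` matches any sequence of characters, a trailing `$` anchors the end of the path, the longest matching rule wins, and an allow rule wins a tie. It is a simplified illustration, not Google's implementation; it ignores percent-encoding and user-agent selection, and the function names and rules are hypothetical.

```python
import re


def pattern_to_regex(pattern: str):
    """Translate a robots.txt path pattern into a compiled regular expression."""
    anchored = pattern.endswith("$")
    body = pattern[:-1] if anchored else pattern
    # Escape literal parts and let each '*' match any run of characters.
    regex = ".*".join(re.escape(part) for part in body.split("*"))
    return re.compile(regex + (r"\Z" if anchored else ""))


def rule_matches(pattern: str, path: str) -> bool:
    """True if the pattern matches the path from its first character."""
    return pattern_to_regex(pattern).match(path) is not None


def is_allowed(rules, path: str) -> bool:
    """rules: list of ("allow" | "disallow", pattern) pairs for one group."""
    best_length = -1
    allowed = True  # No matching rule means the path is allowed.
    for directive, pattern in rules:
        if not pattern or not rule_matches(pattern, path):
            continue
        is_allow = directive == "allow"
        if len(pattern) > best_length or (len(pattern) == best_length and is_allow):
            best_length = len(pattern)
            allowed = is_allow
    return allowed


# Hypothetical rules: block every PDF, but allow the /docs/ section.
RULES = [("disallow", "/*.pdf$"), ("allow", "/docs/")]

if __name__ == "__main__":
    for path in ("/docs/file.pdf", "/docs/file.pdf?x=1", "/index.html"):
        print(path, is_allowed(RULES, path))
```

With these rules, `/docs/file.pdf` is blocked because the disallow pattern is longer than `/docs/`, while `/docs/file.pdf?x=1` is allowed because the `$` anchor no longer matches. That kind of surprise is the reason to prefer simple rules.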

- Test each robots.txt modification before deploying to production, then confirm in Search Console that Google parsed the live file as expected

- Avoid non-standard or ambiguous syntax

- Remove noindex rules from robots.txt, and remember that Google ignores crawl-delay (other crawlers such as Bing may still honor it)

- Favor simple and explicit rules over complex wildcards

- Document each directive to understand its impact later

- Monitor crawl logs after each robots.txt change
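For the log-monitoring step, a small script can count Googlebot requests per path and status code. The sketch below assumes access logs in the common "combined" format; the regular expression, the `googlebot_hits` helper, and the sample lines are illustrative and would need adapting to your server's actual log layout.

```python
import re
from collections import Counter

# Matches the request, status, and final quoted field (user agent) of a
# combined-format access log line.
LOG_LINE = re.compile(
    r'"(?P<method>[A-Z]+) (?P<path>\S+) [^"]*" (?P<status>\d{3}) .*"(?P<agent>[^"]*)"$'
)


def googlebot_hits(lines):
    """Count (path, status) pairs for requests whose user agent mentions Googlebot."""
    hits = Counter()
    for line in lines:
        match = LOG_LINE.search(line.strip())
        if match and "Googlebot" in match.group("agent"):
            hits[(match.group("path"), match.group("status"))] += 1
    return hits


# Made-up sample lines for demonstration.
SAMPLE = [
    '66.249.66.1 - - [10/Mar/2023:10:00:00 +0000] "GET /robots.txt HTTP/1.1" 200 120 "-" '
    '"Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"',
    '66.249.66.1 - - [10/Mar/2023:10:00:05 +0000] "GET /products/shoes HTTP/1.1" 200 5120 "-" '
    '"Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"',
    '203.0.113.7 - - [10/Mar/2023:10:00:09 +0000] "GET /products/shoes HTTP/1.1" 200 5120 "-" '
    '"Mozilla/5.0 (X11; Linux x86_64)"',
]

if __name__ == "__main__":
    for (path, status), count in googlebot_hits(SAMPLE).items():
        print(path, status, count)
```

Comparing these counts before and after a robots.txt change shows whether sections you meant to keep crawlable are still being requested. User-agent strings can be spoofed, so a stricter version would also verify the requester, for example with a reverse DNS lookup.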

❓ Frequently Asked Questions

Why doesn't Google support the crawl-delay directive in robots.txt?

Is Google's robots.txt parser open source?

Can a malformed robots.txt file penalize my site?

Should I block CSS and JavaScript resources in robots.txt?

How often does Google recrawl a site's robots.txt file?


Other SEO insights extracted from this same Google Search Central video · published on 08/03/2023
