Official statement

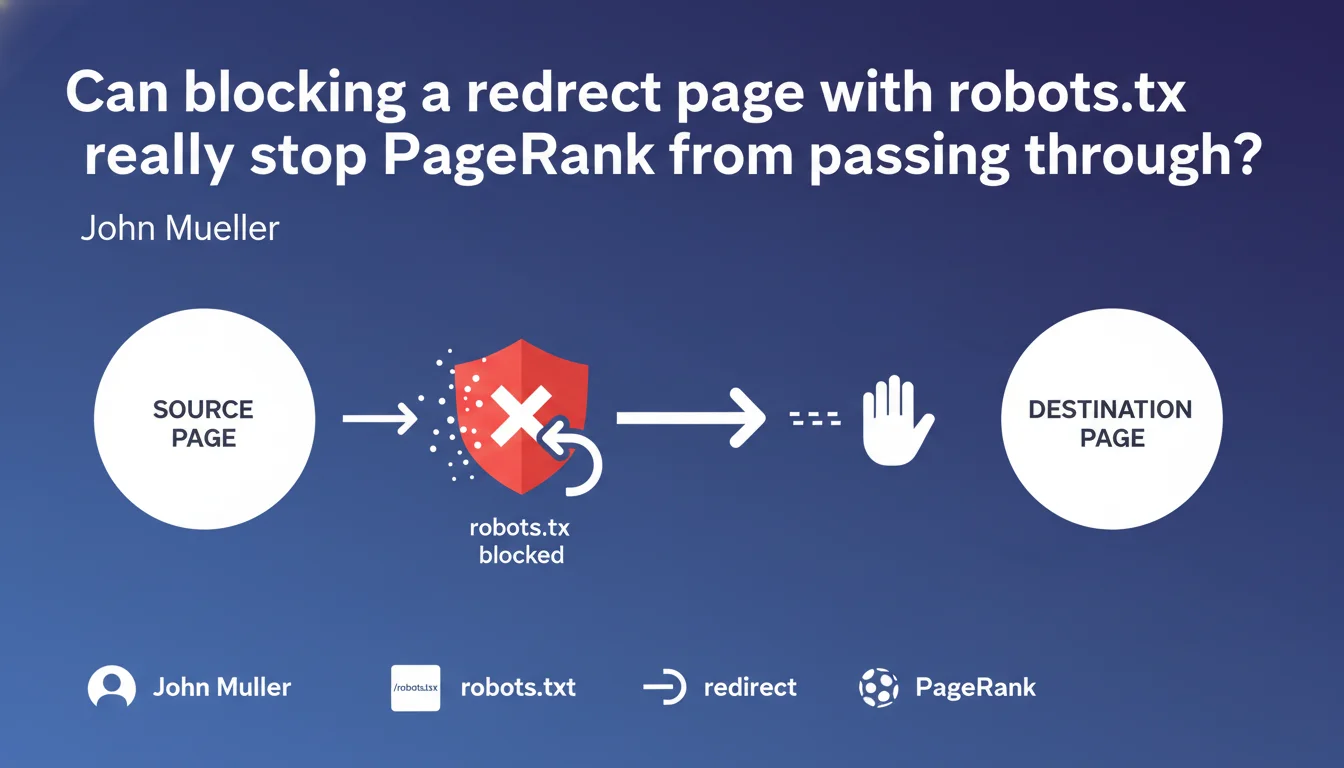

Google confirms that a redirect passing through a page blocked via robots.txt effectively prevents the transmission of PageRank signals. In practice, if you don't want a link to pass its juice, inserting a blocked intermediate page does the job. A technique that's existed for years, now officially validated.

What you need to understand

Why would you want to block PageRank transmission through a link?

There's no shortage of scenarios. Some pages are mandatory for legal or functional reasons (dynamically generated legal notices, multiple login pages, geo-targeted redirects), and they leak PageRank without contributing anything to your rankings.

Rather than let these URLs consume crawl budget and dilute your internal link budget, some practitioners have gotten in the habit of using blocked intermediate pages to cut off transmission. Google just confirmed that this practice actually works.

How exactly does this blocking technique work in practice?

The principle is straightforward: instead of pointing a link directly to the final destination, you route it through an intermediate redirect page. This intermediate page is then blocked in your robots.txt.

Result: Google discovers the link, checks robots.txt before requesting the intermediate URL, sees that it's disallowed, and stops there. The redirect technically exists, but the bot never fetches the page and so never sees the redirect, which means no signals pass through.
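The behavior described above can be illustrated with a toy crawler. This is only a sketch under stated assumptions: the site graph, the paths and the `crawl` function are hypothetical, and Python's standard `urllib.robotparser` stands in for Google's own robots.txt parser, which may differ in edge cases.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt blocking the directory that holds the intermediate pages.
ROBOTS_TXT = """\
User-agent: *
Disallow: /redirect/
"""

# Hypothetical site: each page with its outgoing links, plus the redirect map.
LINKS = {
    "/": ["/products/", "/redirect/login"],
    "/products/": [],
    "/account/login": [],
}
REDIRECTS = {"/redirect/login": "/account/login"}


def crawl(start, robots_txt, user_agent="Googlebot"):
    """Breadth-first crawl that consults robots.txt before every request."""
    rules = RobotFileParser()
    rules.parse(robots_txt.splitlines())
    reached, blocked, queue, seen = [], [], [start], set()
    while queue:
        path = queue.pop(0)
        if path in seen:
            continue
        seen.add(path)
        if not rules.can_fetch(user_agent, path):
            # The URL is never requested, so its redirect target stays unknown.
            blocked.append(path)
            continue
        if path in REDIRECTS:
            queue.append(REDIRECTS[path])
            continue
        reached.append(path)
        queue.extend(LINKS.get(path, []))
    return reached, blocked


reached, blocked = crawl("/", ROBOTS_TXT)
print("reached:", reached)
print("blocked:", blocked)
```

In this toy graph the crawler stops at `/redirect/login` and never reaches `/account/login` through that path, which is the effect the technique relies on.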

Does this method completely replace nofollow?

No, and it's crucial to understand the distinction. Nofollow (or ugc/sponsored) remains a signal to Google about the nature of the link. Google treats it as a hint: it can choose whether or not to follow the link for discovery, and it generally won't transmit PageRank through it.

The robots.txt technique is more radical: it prevents crawling altogether. Google doesn't even discover the final destination via that path. The two approaches have different use cases.

- Redirect via robots.txt-blocked page = complete signal transmission blocking

- Technique officially validated by John Mueller for preventing PageRank transmission

- Different from nofollow which remains a suggestion interpretable by Google

- Useful for isolating functional sections that consume budget without SEO value

SEO Expert opinion

Does this statement align with real-world observations?

Yes, unequivocally. SEOs have been testing this technique for years — particularly on massive e-commerce platforms with thousands of navigation facets or multi-parameter filter systems. Real-world results clearly show that a page blocked from crawling cuts the transmission chain.

What changes here is that Google explicitly confirms it. No more gray areas: you can now use this method knowingly, without fear of a manual action for "manipulation attempts".

What risks does this approach present in practice?

The main danger is misconfiguration. If you accidentally block a page that should be transmitting PageRank — for example a legitimate 301 redirect after a redesign — you cut off a valuable signal flow. Let's be honest: on a large site, this kind of mistake happens more often than people admit.

Another point: this technique can seriously complicate your internal link architecture. Multiplying blocked intermediate pages makes PageRank flow much harder to trace, and you risk losing visibility into what passes where. [To verify]: Google has given no indication of any threshold beyond which this practice could be interpreted as abusive.

In which cases is this method truly relevant?

Honestly? Real use cases are fairly limited. It becomes relevant in very complex architectures — marketplaces with thousands of vendors, multi-country sites with automatic geo-targeted redirects, SaaS platforms with dozens of functional entry points.

For a standard site — even a mid-sized e-commerce — you'll probably get better results by simply optimizing your internal linking and using nofollow where appropriate. No need to bring out heavy artillery if a Swiss Army knife will do the job.

Practical impact and recommendations

How do you implement this technique without shooting yourself in the foot?

First step: precisely identify the pages you want to isolate. No gut-feel decisions — pull out your crawl analysis tools (Screaming Frog, OnCrawl, Botify depending on your budget) and pinpoint sections consuming budget without bringing SEO value.

Next, create your redirect intermediate pages. Technically, these are simple URLs that immediately redirect (301 or 302) to the final destination. Ideally group them in a dedicated directory — /redirect/ for example — to simplify robots.txt management.
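As an illustration of what such an intermediate page can look like, here is a minimal sketch using Python's standard `http.server`. The `/redirect/` prefix comes from the text; the `TARGETS` table, the `resolve` function, the handler name and the port are hypothetical, and the 302 status is an arbitrary pick between the two options mentioned above.

```python
from http.server import BaseHTTPRequestHandler, HTTPServer

REDIRECT_PREFIX = "/redirect/"

# Hypothetical lookup table: short key under /redirect/ and its final destination.
# A fixed table (rather than a ?url= parameter) avoids creating an open redirect.
TARGETS = {
    "login": "/account/login",
    "legal": "/legal-notice",
}


def resolve(path):
    """Return (status, location) for a request path; location is None on 404."""
    if not path.startswith(REDIRECT_PREFIX):
        return 404, None
    key = path[len(REDIRECT_PREFIX):].strip("/")
    target = TARGETS.get(key)
    return (302, target) if target else (404, None)


class RedirectHandler(BaseHTTPRequestHandler):
    def do_GET(self):
        status, location = resolve(self.path)
        self.send_response(status)
        if location:
            self.send_header("Location", location)
        self.end_headers()


def serve(port=8000):
    """Start the demo server; call this manually, it blocks until interrupted."""
    HTTPServer(("127.0.0.1", port), RedirectHandler).serve_forever()


print(resolve("/redirect/login"))
```

Keeping every intermediate URL under a single prefix is what later lets one robots.txt rule cover all of them.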

Add a Disallow directive to your robots.txt to block that directory. Always test with Google Search Console (URL Inspection tool) to verify that the block is actually applied. And most importantly: document your configuration. In six months, you'll have forgotten why these URLs are blocked.
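Before checking in Search Console, the rule can be sanity-checked locally. The sketch below assumes a `/redirect/` directory and example.com URLs, all hypothetical, and uses Python's `urllib.robotparser` as an approximation; Google's parser is the final authority, which is why the Search Console check still matters.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical rule. The trailing slash matters: the match is a path prefix.
ROBOTS_TXT = """\
User-agent: *
Disallow: /redirect/
"""


def blocked_status(robots_txt, urls, user_agent="Googlebot"):
    """Map each URL to True if the rules disallow it for the given user agent."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return {url: not parser.can_fetch(user_agent, url) for url in urls}


status = blocked_status(ROBOTS_TXT, [
    "https://www.example.com/redirect/login",
    "https://www.example.com/redirect-old/page",
    "https://www.example.com/products/",
])
for url, is_blocked in status.items():
    print("blocked" if is_blocked else "allowed", url)
```

Here only the first URL is blocked. `/redirect-old/page` stays crawlable because it does not start with `/redirect/`, a reminder that a sloppier rule such as `Disallow: /redirect` would catch more than intended.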

What mistakes must you absolutely avoid?

NEVER block a redirect page that's part of a site migration or URL redesign. These redirects have to transmit PageRank; blocking them throws away all the accumulated link equity.

Also avoid multiplying layers. Some are tempted to build redirect chains, such as A to B (blocked) to C to D, thinking this gives them "better control". Result: an incomprehensible contraption that generates more problems than it solves.
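Chains like this are easy to spot once the redirect rules are exported as a simple source-to-target map. The following is a sketch only: the map, the function names and the one-hop threshold are assumptions for illustration.

```python
def redirect_chain(start, redirects, max_hops=10):
    """Follow a redirect map from start and return every URL visited, in order."""
    chain = [start]
    seen = {start}
    while chain[-1] in redirects and len(chain) <= max_hops:
        nxt = redirects[chain[-1]]
        chain.append(nxt)
        if nxt in seen:  # redirect loop: stop instead of spinning forever
            break
        seen.add(nxt)
    return chain


def long_chains(redirects, max_redirects=1):
    """Return the sources whose chain needs more than max_redirects hops."""
    result = {}
    for source in redirects:
        chain = redirect_chain(source, redirects)
        if len(chain) - 1 > max_redirects:
            result[source] = chain
    return result


# Hypothetical export of a site's redirect rules.
REDIRECTS = {
    "/a": "/redirect/b",
    "/redirect/b": "/c",
    "/c": "/d",
    "/old": "/new",
}

long = long_chains(REDIRECTS)
for source, chain in long.items():
    print(source, "->", " -> ".join(chain[1:]))
```

Every source flagged here is a candidate for pointing straight at its final destination, with at most one blocked intermediate page in between.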

How do you verify the configuration is working correctly?

Use Google Search Console to inspect your intermediate pages. If Google reports "Blocked by robots.txt", that's a good sign. Also verify that your final destination pages don't show crawl errors related to broken redirects.

Conduct regular audits, at least quarterly, to make sure no accidentally blocked URL is cutting off an important PageRank flow. A simple export of your robots.txt file combined with log analysis is enough to spot anomalies.
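Part of that audit can be scripted. The sketch below cross-checks a robots.txt export against two hand-maintained lists: URLs that must keep passing PageRank (migration redirects, for example) and URLs that are supposed to be blocked. The rules, the paths and the `audit` function are hypothetical, and `urllib.robotparser` only approximates how Google reads the file.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt export; the second rule is deliberately too broad.
ROBOTS_TXT = """\
User-agent: *
Disallow: /redirect/
Disallow: /old-
"""


def audit(robots_txt, must_pass, intended_blocked, user_agent="Googlebot"):
    """Return (wrongly_blocked, not_blocked) for the two lists of paths."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    wrongly_blocked = [u for u in must_pass if not parser.can_fetch(user_agent, u)]
    not_blocked = [u for u in intended_blocked if parser.can_fetch(user_agent, u)]
    return wrongly_blocked, not_blocked


# Hypothetical lists taken from the documentation of the configuration.
MUST_PASS = ["/old-catalog/shoes", "/blog/2019/guide"]
INTENDED_BLOCKED = ["/redirect/login", "/go/partner"]

wrongly_blocked, not_blocked = audit(ROBOTS_TXT, MUST_PASS, INTENDED_BLOCKED)
print("Blocked but should pass PageRank:", wrongly_blocked)
print("Meant to be blocked but crawlable:", not_blocked)
```

Both lists are only as good as the documentation behind them, which is one more reason to record every blocked URL and its purpose.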

- Identify sections to isolate through thorough crawl analysis

- Create intermediate pages in a dedicated directory (/redirect/)

- Block that directory via robots.txt with Disallow directive

- Test configuration with Google Search Console

- Never block 301 redirects post-migration

- Document precisely each blocked page and its purpose

- Audit configuration every 3 months minimum

❓ Frequently Asked Questions

Is blocking a redirect page via robots.txt considered black hat by Google?

What is the difference between blocking a redirect via robots.txt and using a nofollow attribute?

Can you block legitimate 301 redirects to save crawl budget?

How long does it take for Google to take the blocking of a redirect page into account?

Does this technique also work for other search engines like Bing?

🎥 From the same video 14

Other SEO insights extracted from this same Google Search Central video · published on 29/12/2022
