Official statement

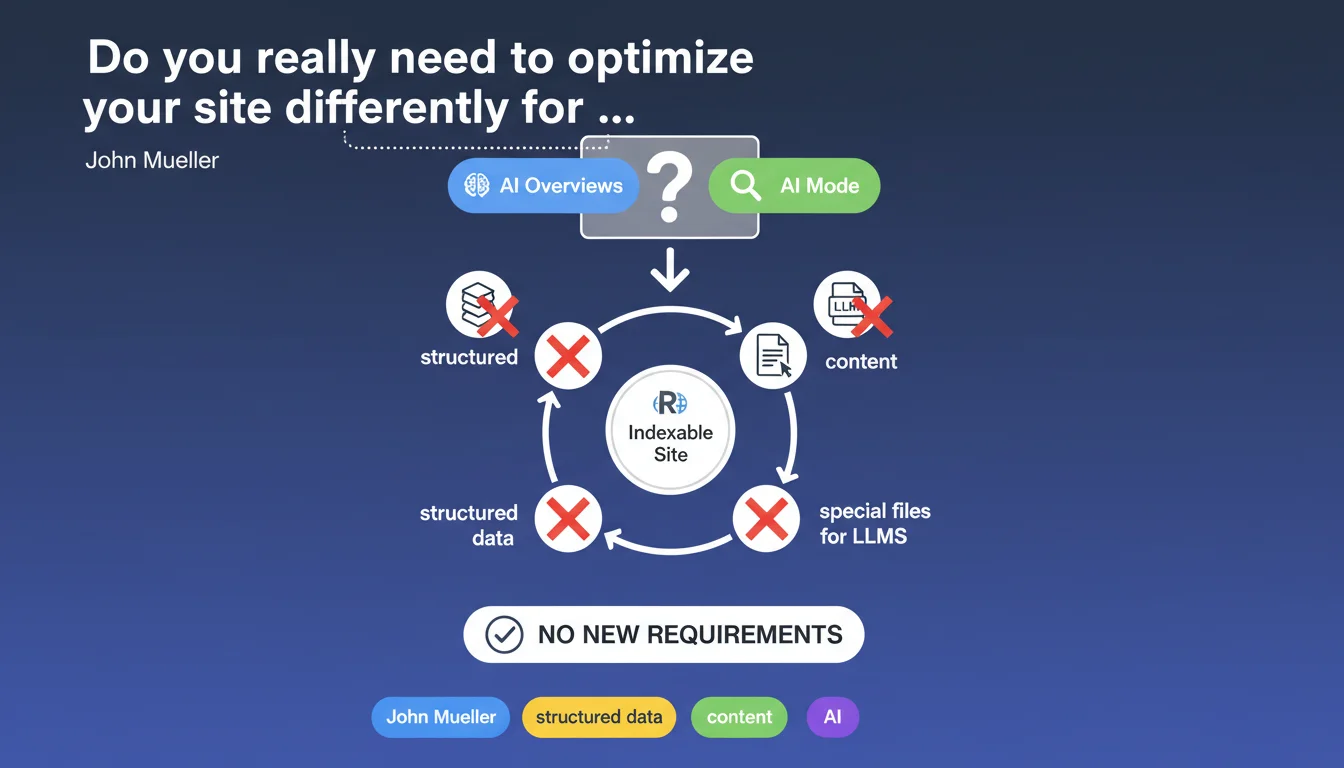

Google states that no specific optimization is required to appear in its AI features such as AI Overviews or AI Mode. All that's needed is for the site to be indexable — no additional structured data, no dedicated files for LLMs, no editorial overhaul. Standard indexing remains the only prerequisite.

What you need to understand

What exactly does Google say about the prerequisites for its AI features?

John Mueller's position is crystal clear: an indexable site already has everything it needs to be eligible for Google's AI features. No exotic Schema.org markup to implement, no special robots.txt file for AI crawlers, nothing.

This statement comes at a time when many publishers and SEO professionals are wondering whether they need to "optimize for AI" — rephrase content, structure information differently, add layers of data. Google puts that to rest: standard indexability is sufficient.

Why this clarification now?

Because the SEO industry tends to overreact. Every new Google feature generates its own set of self-proclaimed "best practices," often without any foundation. Here, Mueller is setting the record straight: the existing indexing mechanisms already feed AI systems.

In practical terms? If Googlebot can access your pages, analyze them, and understand them, you're in the race. The engine uses the same signals to rank classic results and to feed its AI models.

What are the essential takeaways?

- Indexability = sole criterion: no special files like "llms.txt" or equivalents are necessary

- No new structured data: standard Schema.org (if relevant) remains sufficient, nothing additional for AI

- No mandatory editorial overhaul: there's no need to rewrite your content "for LLMs" — existing content works fine if quality is there

- SEO fundamentals remain the priority: crawlability, technical structure, content quality — nothing new under the sun

SEO Expert opinion

Is this statement consistent with what we observe in the field?

Yes and no. In principle, it's consistent: Google has never introduced a "separate channel" to feed its new features. AI Overviews draw from the standard index, and that is documented. But, and this is where it gets tricky, saying there's "nothing special to do" obscures a reality: certain content performs significantly better in AI Overviews than others.

Observations show that pages with clear structure (hierarchical headings, concise answers, lists), relevant FAQ or HowTo markup, and established thematic authority emerge more often. It's not "required" in the strict sense, but ignoring these levers is shooting yourself in the foot. [To verify]: Google doesn't specify whether certain signals are weighted differently for AI vs. standard SERPs.

What nuances should be added to this claim?

Let's be honest: "nothing special" doesn't mean "nothing at all." Indexability is only an entry point. Once in the index, competition plays out over relevance, authority, freshness, structure — exactly like organic results.

The essential nuance? Google isn't saying that all indexable sites have the same chances of appearing in AI Overviews. It's just saying they're eligible. The difference is huge. A site that's technically indexable but has superficial content, a shaky architecture, or weak authority will never take off — AI or not.

In what cases does this rule not apply?

If your site blocks Googlebot (intentionally or by error), if your key content is in poorly rendered JavaScript, if you serve different content to bots vs. users — you're out of the game. Indexability is not binary: it's measured in degrees.
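Accidental blocking through robots.txt is one of the easier cases to check. The following is a minimal sketch using Python's standard `urllib.robotparser`; the robots.txt content and the URLs are hypothetical, and the standard-library parser uses simple prefix matching, so it only approximates how Google itself interprets the file.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt content, used only for illustration.
ROBOTS_TXT = """\
User-agent: *
Disallow: /private/

User-agent: Googlebot
Disallow: /drafts/
"""

# Hypothetical priority URLs to audit.
PRIORITY_URLS = [
    "https://example.com/",
    "https://example.com/guides/ai-overviews",
    "https://example.com/drafts/new-guide",
    "https://example.com/private/report",
]


def blocked_urls(robots_txt: str, urls: list[str], agent: str = "Googlebot") -> list[str]:
    """Return the URLs that this robots.txt disallows for `agent`."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [url for url in urls if not parser.can_fetch(agent, url)]


if __name__ == "__main__":
    # A crawler with its own group follows that group only, so the
    # "/private/" rule in the "*" group does not apply to Googlebot here.
    for url in blocked_urls(ROBOTS_TXT, PRIORITY_URLS):
        print("Blocked for Googlebot:", url)
```

In this sample only the `/drafts/` URL is reported, which illustrates a common surprise: rules written under `User-agent: *` stop applying to a crawler as soon as it has a dedicated group.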

And that's where Mueller's statement, while technically correct, becomes misleading for an average site. A misconfigured CMS, catastrophic load times, a hermetically sealed silo architecture — all of this degrades indexability de facto, even if Googlebot manages to access the pages. Result: eligible on paper, invisible in practice.

Practical impact and recommendations

What should you do concretely to maximize your chances?

Stop looking for the "AI hack." Focus on technical and editorial fundamentals. Ensure Googlebot crawls your priority pages efficiently, that JavaScript rendering (if applicable) works, that your content is logically structured with clear headings.
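As a rough illustration of what "logically structured with clear headings" can mean in practice, here is a small sketch that extracts the heading outline from an HTML string and flags skipped levels. The two rules it applies (at least one h1, no jump such as h2 to h4) are editorial heuristics assumed for this example, not Google requirements, and it reads raw HTML rather than the rendered DOM.

```python
from html.parser import HTMLParser


class HeadingOutline(HTMLParser):
    """Collect the levels of h1..h6 start tags in document order."""

    def __init__(self) -> None:
        super().__init__()
        self.levels: list[int] = []

    def handle_starttag(self, tag, attrs):
        # html.parser lowercases tag names, so "h1".."h6" is enough.
        if len(tag) == 2 and tag[0] == "h" and tag[1] in "123456":
            self.levels.append(int(tag[1]))


def heading_issues(html: str) -> list[str]:
    """Return human-readable warnings about the heading hierarchy."""
    parser = HeadingOutline()
    parser.feed(html)
    levels = parser.levels
    issues = []
    if 1 not in levels:
        issues.append("no h1 found")
    # Flag any heading that goes more than one level deeper than the previous one.
    for prev, cur in zip(levels, levels[1:]):
        if cur > prev + 1:
            issues.append(f"h{prev} followed by h{cur} (skipped level)")
    return issues


if __name__ == "__main__":
    sample = "<h1>Guide</h1><h2>Setup</h2><h4>Details</h4>"
    for issue in heading_issues(sample):
        print(issue)
```

For pages that depend on JavaScript, the same check would need to run on the rendered HTML (for example, as copied from the URL inspection tool) rather than on the server response.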

Leverage relevant structured data (FAQ, HowTo, Article, Product), but only if it adds genuine semantic value. Dummy markup added to artificially boost visibility no longer works. Google has said repeatedly that over-optimization backfires.
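To make "relevant structured data" concrete, the sketch below builds a minimal Schema.org `Article` block as JSON-LD. The function name, headline, and author are hypothetical placeholders; the properties used (`headline`, `author`, `datePublished`, `dateModified`) are standard Schema.org properties, and the values should mirror what is actually visible on the page.

```python
import json
from datetime import date


def article_jsonld(headline: str, author: str, published: date, modified: date) -> str:
    """Return a <script> tag holding minimal Article markup as JSON-LD."""
    data = {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "author": {"@type": "Person", "name": author},
        "datePublished": published.isoformat(),
        "dateModified": modified.isoformat(),
    }
    payload = json.dumps(data, indent=2, ensure_ascii=False)
    # Escape "</" so a value containing "</script>" cannot close the tag early;
    # "\/" is a valid JSON escape for "/".
    payload = payload.replace("</", "<\\/")
    return f'<script type="application/ld+json">\n{payload}\n</script>'


if __name__ == "__main__":
    # Hypothetical values for illustration only.
    print(article_jsonld(
        "Do you need to optimize for AI Overviews?",
        "Jane Doe",
        date(2025, 1, 7),
        date(2025, 3, 1),
    ))
```

Keeping `dateModified` in sync with real content updates also supports the freshness point made later in the checklist.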

What mistakes must you absolutely avoid?

Don't create "special LLM" files or sections dedicated "for AI." That's wasted time, potentially counterproductive if it fragments your architecture. Don't rephrase your content in "prompt engineering" mode — Google isn't looking for answers formatted like ChatGPT outputs, it's looking for reliable, well-structured information.

Another classic pitfall: neglecting freshness and updates. Indexable but outdated content is unlikely to surface in AI Overviews, which tend to favor recent and updated sources. That's where it gets sticky for many corporate sites: they're indexed but frozen in time.

How can you verify your site is truly ready?

- Crawlability audit: Search Console, server logs, Googlebot simulation — zero unintentional blocking

- JavaScript rendering functional: test with Google's URL inspection tool, verify rendered DOM

- Content structure: coherent H1-H6 headings, short paragraphs, lists and tables for factual data

- Validated structured data: Schema Markup Validator or Rich Results Test, relevant markup (no spam), alignment with visible content

- Speed and Core Web Vitals: a slow site degrades indexability de facto, even if Googlebot accesses it

- Thematic authority: quality backlinks, citations, mentions — AI prioritizes recognized sources

- Editorial freshness: regularly updated content, clear publication/modification dates
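The server-log part of the crawlability audit in the checklist above can be sketched as follows. This assumes access logs in the common "combined" format and identifies Googlebot by user-agent string only; the sample lines are invented, and because user agents can be spoofed, a real audit would also verify the requesting IPs (for example through reverse DNS).

```python
import re
from collections import Counter

# Request path, status code and user agent from a combined-log-format line.
LOG_LINE = re.compile(
    r'"(?:GET|HEAD) (?P<path>\S+) [^"]*" (?P<status>\d{3}) \S+ "[^"]*" "(?P<agent>[^"]*)"'
)


def googlebot_hits(lines) -> Counter:
    """Count requests per (path, status) whose user agent mentions Googlebot."""
    hits = Counter()
    for line in lines:
        match = LOG_LINE.search(line)
        if match and "Googlebot" in match.group("agent"):
            hits[(match.group("path"), match.group("status"))] += 1
    return hits


if __name__ == "__main__":
    # Invented sample lines for illustration only.
    sample = [
        '66.249.66.1 - - [07/Jan/2025:10:00:00 +0000] "GET /guides/ai HTTP/1.1" 200 5123 "-" '
        '"Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"',
        '66.249.66.1 - - [07/Jan/2025:10:05:00 +0000] "GET /old-page HTTP/1.1" 404 0 "-" '
        '"Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"',
        '203.0.113.5 - - [07/Jan/2025:10:06:00 +0000] "GET /guides/ai HTTP/1.1" 200 5123 "-" '
        '"Mozilla/5.0 (Windows NT 10.0; Win64; x64)"',
    ]
    for (path, status), count in googlebot_hits(sample).most_common():
        print(f"{count:>4}  {status}  {path}")
```

Priority pages that never appear in this count, or that appear mostly with 4xx or 5xx statuses, are the ones worth investigating first.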

In summary: indexability is necessary, but far from sufficient. Google demands no "special" action, but that doesn't exempt you from solid groundwork on technical and editorial quality. Sites that excel in standard SERPs have every chance of shining in AI Overviews — others will remain invisible, even if perfectly indexed.

These cross-optimization efforts — technical, editorial, authority — can quickly become complex to orchestrate, especially on medium or large sites. If you find your site struggling to emerge despite proper indexing, support from a specialized SEO agency can help you identify priority levers and structure a coherent action plan, without scattering resources on ineffective tactics.

❓ Frequently Asked Questions

Do I need to create a special robots.txt file for Google's AI crawlers?

Does Schema.org structured data influence appearance in AI Overviews?

Should I rewrite my content in a "question and answer" format for AI?

My site is indexed but never appears in AI Overviews. Why?

Does Google favor certain types of content for its AI features?

Other SEO insights extracted from this same Google Search Central video · published on 01/07/2025