Official statement

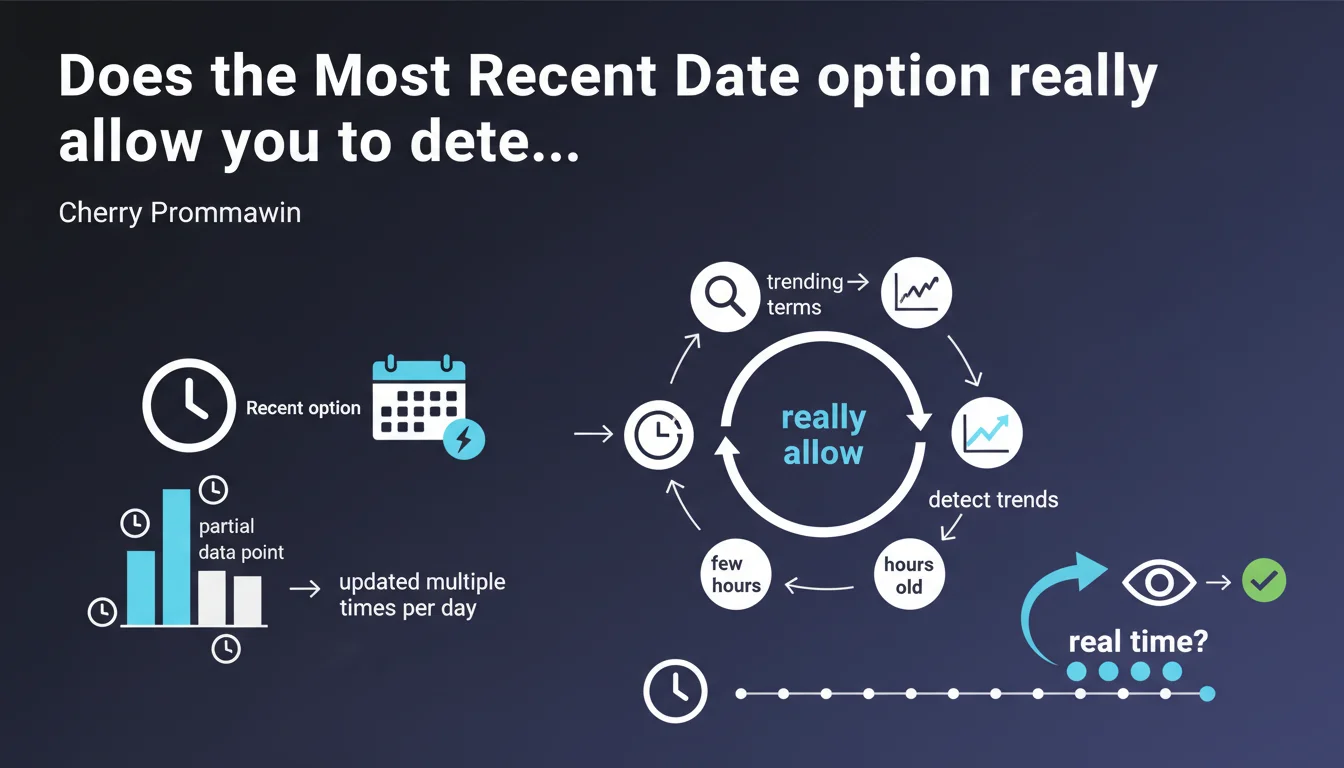

Google Search Console now offers a "Most Recent Date" option that displays partial data updated multiple times per day. Unlike classic reports (which have a 24-48 hour delay), this filter provides a near real-time view of trending queries, with data only a few hours old.

What you need to understand

What exactly is the Most Recent Date option?

In Google Search Console, the date filter now includes a "Most Recent Date" option that displays partial data updated multiple times per day. Unlike classic reports, which stabilize after 24-48 hours, this mode shows fresh data that is only a few hours old.

This data is called "partial" because it does not yet cover all impressions and clicks: some logs have not yet been consolidated in Google's systems. It is still enough to spot sudden traffic spikes or emerging trending terms on the day they happen.

Why does this feature change the game for SEO professionals?

Until now, we always worked with a time lag: it was impossible to know on the same day whether a news story, buzz, or event was generating organic traffic for a specific keyword. This latency prevented any quick reaction — particularly to adjust content, push articles, or capitalize on an ephemeral trend.

With Most Recent Date, you can detect a weak signal within hours and adjust your editorial or technical strategy accordingly. This is especially relevant for news sites, seasonal e-commerce, or marketing campaigns synchronized with events.

What are the limitations of this partial data?

The data displayed under Most Recent Date are not final: they will be updated and consolidated in the following hours. In other words, the number of impressions or clicks displayed may increase once all logs are integrated.

You should therefore not use them for precise reporting or ROI calculations — they are primarily a directional indicator. The value lies in detecting emerging trends, not in measuring exact stabilized performance.

- Multiple daily updates of partial data

- Lag of only a few hours from actual traffic

- Useful for identifying trending terms before complete consolidation

- Does not replace classic reports for overall performance analysis

- Particularly relevant for news sites and event-driven content

SEO Expert opinion

Does this feature answer a real operational need?

Let's be honest: we've been waiting for this for years. Most third-party tools (SEMrush, Ahrefs, etc.) can't compete with the freshness of Google data — but until now, even GSC had a lag incompatible with reactive management. For teams managing news sites or timing-sensitive campaigns, it's a real operational gain.

The problem is that Google doesn't specify the exact frequency of updates or the coverage of partial data. "Multiple times per day" is vague: is it every 2 hours? Every 6 hours? This remains to be verified over time, because if the refresh is too infrequent, the value for genuine real-time monitoring collapses.

What biases should you anticipate with this partial data?

Partial data is not a random sample: it reflects only the logs already consolidated at the time of the request. Certain traffic segments (device types, geographies) may be overrepresented or underrepresented depending on log reporting delays.

Another point: very low volume queries risk not appearing at all in the Most Recent Date window, because Google applies privacy thresholds. If you manage a niche site with ultra-specific long-tail keywords, this option may not be relevant for you.

Is this statement consistent with observed practices?

This is a logical evolution following the introduction of the "Discover" report and the progressive integration of YouTube data into GSC. Google is gradually improving the granularity and freshness of its reports — it's consistent with their desire to make Search Console an operational management tool, not just a passive monitoring dashboard.

However, we still don't know if this option will be available via the GSC API — and that's a crucial point for agencies and automated tracking tools. If Most Recent Date remains confined to the web interface, its operational impact will be limited for managing large volumes of sites.

Practical impact and recommendations

What should you do concretely to leverage this feature?

First step: test the Most Recent Date option in the date filter of your GSC account and compare it with consolidated data from the same day at D+2. This will give you an idea of the actual lag and reliability of partial figures for your specific site.
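The D+2 comparison described above can be sketched as a per-query coverage ratio. This is a minimal illustration, assuming you have noted the clicks shown under Most Recent Date and the consolidated clicks for the same day; the function name and the sample figures are hypothetical, not taken from Google.

```python
from typing import Dict


def coverage_ratio(partial: Dict[str, int], final: Dict[str, int]) -> Dict[str, float]:
    """Share of the consolidated clicks already visible in the partial snapshot, per query."""
    ratios = {}
    for query, final_clicks in final.items():
        if final_clicks == 0:
            continue  # no consolidated clicks: the ratio is undefined, skip the query
        # Queries absent from the partial snapshot count as 0 clicks seen so far
        ratios[query] = partial.get(query, 0) / final_clicks
    return ratios


# Hypothetical figures, e.g. copied by hand from the interface
partial_snapshot = {"summer sale": 120, "store hours": 45}
consolidated_d2 = {"summer sale": 160, "store hours": 50, "return policy": 8}

ratios = coverage_ratio(partial_snapshot, consolidated_d2)
for query, ratio in sorted(ratios.items()):
    print(f"{query}: {ratio:.0%} of consolidated clicks were visible in the partial data")
```

A ratio that stays stable over several days suggests the partial figures are a usable directional indicator for your site; a ratio that swings widely suggests they are not.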

Next, identify relevant use cases: product launches, live events, media buzz, sector news. For these situations, set up alerts or regular manual checks (every 4-6 hours) to detect emerging trending terms.
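One possible way to turn these regular checks into a simple alert is to compare the partial impressions of each query with a baseline. The sketch below assumes you maintain that baseline yourself (for example, typical impressions over a comparable window on previous days); the thresholds `min_impressions` and `growth_factor` are arbitrary illustrative values, not recommendations from Google.

```python
from typing import Dict, List


def emerging_queries(
    baseline: Dict[str, int],
    current: Dict[str, int],
    min_impressions: int = 50,
    growth_factor: float = 3.0,
) -> List[str]:
    """Flag queries that are new or well above their baseline in the partial data."""
    flagged = []
    for query, impressions in current.items():
        if impressions < min_impressions:
            continue  # too little volume to be a meaningful signal
        reference = baseline.get(query, 0)
        # A query with no baseline is new; otherwise require a clear multiple of the baseline
        if reference == 0 or impressions >= growth_factor * reference:
            flagged.append(query)
    # Highest-volume signals first
    return sorted(flagged, key=lambda q: current[q], reverse=True)


# Hypothetical figures
baseline = {"concert tickets": 40, "brand name": 900}
current = {"concert tickets": 400, "brand name": 950, "festival lineup": 120, "rare term": 5}

trending = emerging_queries(baseline, current)
print(trending)
```

The minimum-volume filter matters here because partial counts on small queries are noisy, and very low volume queries may not appear at all.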

What mistakes should you avoid with Most Recent Date?

Never confuse partial data with definitive data. If you're generating client reporting or a monthly performance analysis, exclude Most Recent Date from your extractions and use only consolidated data (at least 48 hours old).
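For exported data, this rule can be applied as a date cutoff. A minimal sketch, assuming each exported row carries a `date` field and that a day counts as consolidated once it is at least two days old (the D+2 convention used earlier); the row structure is hypothetical.

```python
from datetime import date, timedelta
from typing import Dict, List


def reporting_end_date(today: date, lookback_days: int = 2) -> date:
    """Last day considered consolidated, assuming a 48-hour stabilization delay."""
    return today - timedelta(days=lookback_days)


def keep_consolidated(rows: List[Dict], today: date, lookback_days: int = 2) -> List[Dict]:
    """Drop rows that are too recent to be used in client reporting."""
    cutoff = reporting_end_date(today, lookback_days)
    return [row for row in rows if row["date"] <= cutoff]


# Hypothetical export: one row per day
rows = [
    {"date": date(2023, 5, 20), "clicks": 310},
    {"date": date(2023, 5, 21), "clicks": 295},
    {"date": date(2023, 5, 22), "clicks": 180},  # may still be consolidating
    {"date": date(2023, 5, 23), "clicks": 40},   # partial, same-day data
]

report_rows = keep_consolidated(rows, today=date(2023, 5, 23))
print([row["date"].isoformat() for row in report_rows])
```

Increase `lookback_days` if your own D+2 comparisons show that figures keep moving beyond 48 hours.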

Another pitfall: don't over-interpret a sudden spike without verifying its persistence. A term can explode for 2 hours (news, viral tweet) then crash to zero — wait at least 12-24 hours before adjusting your editorial strategy.
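The persistence check can be sketched as follows. It assumes you record the cumulative impressions of a term at each manual check on the same day, and treats the spike as persistent only if the gain between the most recent checks stays above a threshold. The function names, the threshold, and the readings are hypothetical; note also that part of each gain may come from late log consolidation rather than new traffic.

```python
from typing import List


def increments(cumulative: List[int]) -> List[int]:
    """Impressions gained between two successive checks."""
    return [later - earlier for earlier, later in zip(cumulative, cumulative[1:])]


def spike_persists(cumulative: List[int], min_gain: int, checks: int = 3) -> bool:
    """True if each of the last `checks` intervals still gained at least `min_gain`."""
    gains = increments(cumulative)
    if len(gains) < checks:
        return False  # not enough history yet: keep waiting
    return all(gain >= min_gain for gain in gains[-checks:])


# Hypothetical readings taken every 4-6 hours
short_lived = [0, 800, 1500, 1520, 1525]   # explodes, then stalls
sustained = [0, 300, 650, 1000, 1400]      # keeps growing at each check

print(spike_persists(short_lived, min_gain=100))
print(spike_persists(sustained, min_gain=100))
```

With checks every 4-6 hours, three intervals cover roughly the 12-24 hour waiting period suggested above.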

How to integrate this data into a reactive SEO workflow?

For teams capable of producing content quickly (newsrooms, corporate blogs, e-commerce), Most Recent Date allows you to validate in real time that an article published in the morning is capturing the expected traffic for targeted terms. If not, you can adjust the title, meta description, or internal linking within the day.
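A same-day validation of this kind might look like the sketch below. It assumes you have the list of terms targeted by the article and the impressions per query read from the partial data; the function name, the threshold, and the sample queries are hypothetical.

```python
from typing import Dict, List


def missing_targets(
    targets: List[str], observed: Dict[str, int], min_impressions: int = 1
) -> List[str]:
    """Return the targeted terms that have not yet reached min_impressions."""
    # Normalize case and surrounding spaces so that comparisons are not thrown off by formatting
    normalized = {query.strip().lower(): count for query, count in observed.items()}
    return [
        term
        for term in targets
        if normalized.get(term.strip().lower(), 0) < min_impressions
    ]


# Hypothetical article published in the morning
targets = ["Budget 2024 announcement", "budget 2024 key measures"]
observed = {"budget 2024 announcement": 340, "budget calculator": 12}

to_review = missing_targets(targets, observed, min_impressions=20)
print(to_review)
```

Terms returned here are candidates for a title, meta description, or internal linking adjustment, bearing in mind that a term may be missing simply because its volume is still too low to be reported.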

For less reactive sites, the value is mainly in detecting emerging trends: identifying queries that are rising, then planning optimized content for the following week — before competitors catch on.

- Enable the Most Recent Date option in GSC and compare with consolidated data at D+2

- Identify relevant use cases: events, launches, sector news

- Set up regular checks (every 4-6 hours) for timing-sensitive campaigns

- Never use Most Recent Date for client reporting or overall performance analysis

- Wait 12-24 hours before adjusting editorial strategy based on a sudden spike

- Validate in real time that published content is capturing expected traffic for targeted terms

- Detect emerging queries to plan optimized content before competitors do

❓ Frequently Asked Questions

Is Most Recent Date data accessible via the Google Search Console API?

What is the exact update frequency of the partial data?

Can Most Recent Date data be exported for historical tracking?

Does Most Recent Date show all queries or only the highest-volume ones?

Is this option useful for a standard e-commerce site with no news content?

Source: Google Search Central video, published on 23/05/2023
