Official statement

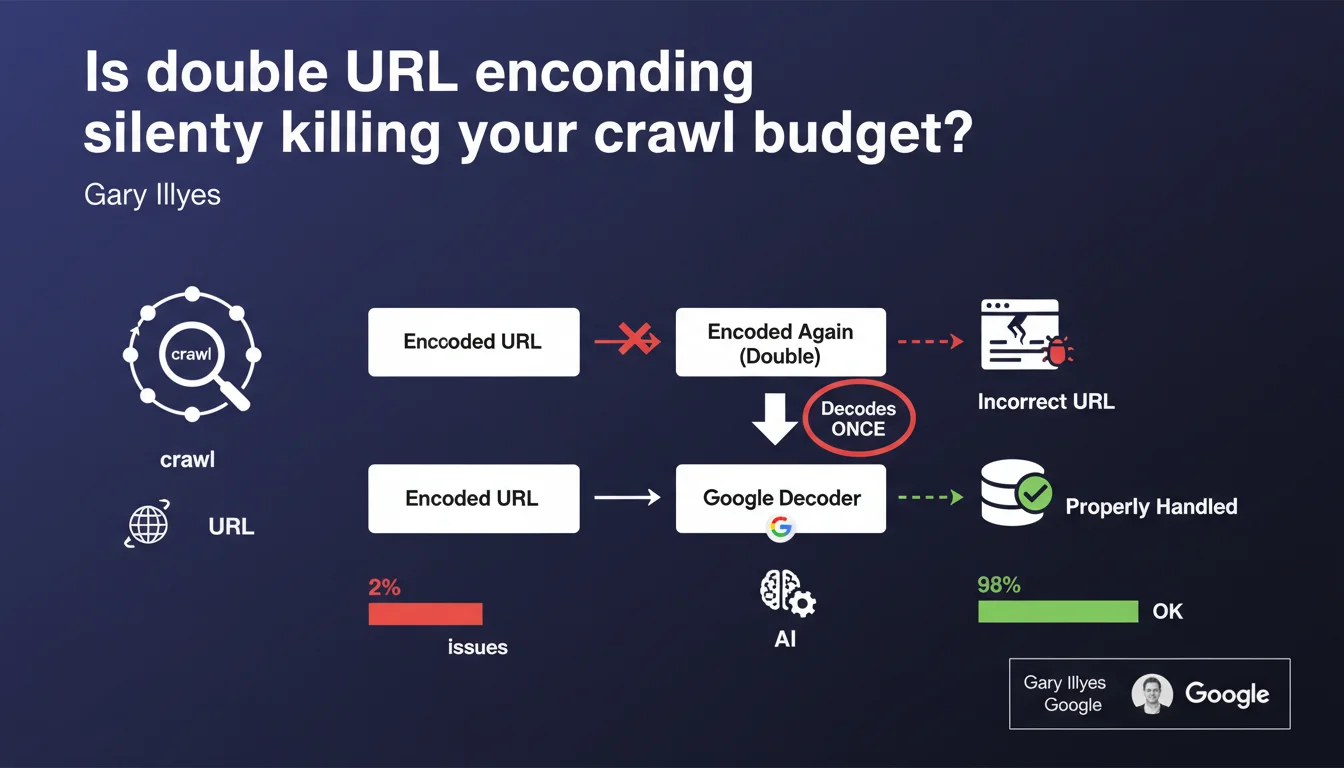

Double percent encoding of URLs accounts for approximately 2% of crawl issues encountered by Google. The search engine only decodes once: if your URL has been encoded twice, it remains broken and your server cannot process it. Result: orphaned pages, wasted crawl budget.

What you need to understand

What exactly is double percent encoding?

Percent encoding (or URL encoding) involves replacing special characters with their hexadecimal representation preceded by a %. For example, a space becomes %20, an ampersand & becomes %26.

Double encoding occurs when this process is applied twice in succession. A space becomes %20, which is then encoded again as %2520. The % itself is transformed into %25. This is often a development error — a script that encodes a URL already encoded by a CMS, for example.
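To make the mechanism concrete, here is a minimal sketch using `quote` from Python's standard `urllib.parse` module. The path is a hypothetical example, not taken from a real site; the second call stands in for any layer that encodes without checking what it received.

```python
from urllib.parse import quote

# Hypothetical path containing a space, as a CMS might store it.
raw_path = "/product/red shoes"

# First layer: the space is correctly encoded as %20.
encoded_once = quote(raw_path)

# Second layer encodes again without checking: "%" itself becomes "%25".
encoded_twice = quote(encoded_once)

print(encoded_once)   # /product/red%20shoes
print(encoded_twice)  # /product/red%2520shoes
```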

Why does Google only decode once?

Google applies straightforward logic: it decodes once the URL it receives, then attempts to request it from the server. If the URL has been encoded twice, after this single decoding, it remains partially encoded — and therefore incorrect.

Your server does not recognize this malformed URL. It returns a 404 or an error, even though the page actually exists in its correct form. Googlebot then considers the resource as not found.
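The behavior described above can be simulated in a few lines. This is only an illustration of the single-decode logic, not Google's or any server's actual implementation; `KNOWN_PATHS` and `status_after_single_decode` are hypothetical names introduced for the example.

```python
from urllib.parse import unquote

# Hypothetical set of paths the server actually serves (decoded form).
KNOWN_PATHS = {"/product/red shoes"}

def status_after_single_decode(requested_url: str) -> int:
    """Decode exactly once, then look the path up."""
    decoded = unquote(requested_url)
    return 200 if decoded in KNOWN_PATHS else 404

print(status_after_single_decode("/product/red%20shoes"))    # 200
print(status_after_single_decode("/product/red%2520shoes"))  # 404
```

After one pass, the double-encoded URL is still `/product/red%20shoes` with a literal `%20`, which matches no known path.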

How many sites are actually affected?

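Gary Illyes mentions that this problem represents approximately 2% of failing crawl cases. Note that this is a share of crawl problems, not of pages. It is still not negligible on large sites: on a site with tens of thousands of URLs, a single faulty template can leave hundreds of them unreachable.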

At-risk platforms are those that chain multiple technical layers: CMS + proxy + CDN + dynamic redirects. Each link in the chain can encode without checking if it has already been done upstream.

- Double percent encoding transforms %20 into %2520, rendering the URL unreadable for the server

- Google only decodes once — no way to backtrack

- This affects approximately 2% of crawl problems, especially on complex architectures

- Affected URLs are treated as not found, even though the pages exist

SEO Expert opinion

Is this statement consistent with field observations?

Yes, completely. We regularly observe cases of double encoding in crawl logs, particularly on e-commerce sites with filter parameters or misconfigured URL rewriting. Affected URLs show up as runs of 404 errors in Search Console.

The 2% figure may seem low, but it is concentrated on certain types of sites. If you manage a simple WordPress blog, you will never see this problem. However, on a marketplace with dynamic URLs and multiple languages, it is a classic issue.

What edge cases does this statement not cover?

Gary Illyes does not specify whether Google attempts a second decoding if the first one fails. [To verify]: some third-party crawlers apply recursive decoding until a stable URL is obtained. Google appears stricter, with only one pass.

Another gray area: what happens if a URL is partially double-encoded? Imagine /product/%2520shoes/basket — Google decodes and gets /product/%20shoes/basket. Does it understand that this is a space or does it leave the %20 as is? The statement does not address this.
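The difference between the two strategies can be sketched as follows. Both functions are hypothetical illustrations built on `urllib.parse.unquote`; neither is claimed to reproduce what Google or any specific crawler does.

```python
from urllib.parse import unquote

def decode_once(url: str) -> str:
    """Single pass, as the statement describes for Google."""
    return unquote(url)

def decode_until_stable(url: str, max_passes: int = 5) -> str:
    """Repeat decoding until the string stops changing (or a pass limit is hit)."""
    for _ in range(max_passes):
        decoded = unquote(url)
        if decoded == url:
            break
        url = decoded
    return url

url = "/product/%2520shoes/basket"
print(decode_once(url))          # /product/%20shoes/basket
print(decode_until_stable(url))  # /product/ shoes/basket
```

Recursive decoding recovers the intended space here, but it would also alter a URL that legitimately contains a literal `%20`, which is one reason a crawler might prefer a single pass.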

Should you really worry if you have never seen this problem?

No. If your Search Console shows no unexplained 404 error spikes on URLs that exist, you are probably not affected. This is not a silent bug eroding your index without symptoms.

However, if you notice valid pages that never appear in the index despite a clean sitemap and internal links, check the encoding. This is a diagnosis to have on your checklist before looking for more esoteric causes like duplicate content or cannibalization.

Practical impact and recommendations

How do I detect double encoding on my site?

Compare URLs crawled by Google (Search Console → Coverage → Excluded) with those present in your server logs. If you see URLs with %25 followed by hex codes (e.g., %2520, %253D), you have double encoding.

Another signal: 404 errors in bulk in Search Console on URLs that, once manually decoded, correspond to existing pages. Use an online decoding tool to verify.

- Audit URLs in Search Console marked as 404 or Not found

- Search for occurrences of %25 in your crawl logs — this is the sign of the % itself encoded

- Manually test suspect URLs by decoding them twice and verifying if they become valid

- Review URL generation scripts: CMS, plugins, .htaccess redirects, middlewares

- Check URLs from dynamic filters (e-commerce facets, complex UTM parameters)
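The checks above can be partly automated. The sketch below flags URLs containing `%25` followed by two hex digits and shows what they look like once decoded twice. The sample URLs and the function name `find_double_encoded` are assumptions for illustration; in practice the list would come from your own log or Search Console export.

```python
import re
from urllib.parse import unquote

# "%25" followed by two hex digits: a "%" that was itself percent-encoded.
DOUBLE_ENCODED = re.compile(r"%25[0-9A-Fa-f]{2}")

def find_double_encoded(urls):
    """Return (original, decoded_twice) pairs for URLs that look double-encoded."""
    hits = []
    for url in urls:
        if DOUBLE_ENCODED.search(url):
            hits.append((url, unquote(unquote(url))))
    return hits

# Hypothetical URLs as they might appear in an access log.
sample = [
    "/shop?color=red%2520wine",
    "/shop?color=red%20wine",
    "/search?q=a%253Db",
]
for original, fixed in find_double_encoded(sample):
    print(original, "->", fixed)
```

A match is only a candidate: a URL can legitimately contain `%2520` if a literal `%20` was intended, so each hit should be checked against a page that actually exists.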

What corrective actions should be implemented immediately?

Identify the technical layer responsible for double encoding: CMS, web server, CDN, third-party application. Often, it is a misconfigured plugin or module that encodes by default without checking the state of the incoming URL.

Fix at the source by disabling automatic encoding in the faulty layer. If this is not possible (external dependency), implement a rewrite rule on the server side to decode once before processing.

- Disable automatic encoding in suspect modules (URL rewriting, SEO plugins, proxies)

- Implement a .htaccess or nginx rule to decode incoming URLs if necessary

- Resubmit corrected URLs via Search Console to force a recrawl

- Monitor logs for 2-3 weeks to verify the problem does not recur
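As a rough sketch of both approaches in application code (not an .htaccess or nginx rule), the two helpers below show an encoder that is safe to call more than once and a repair step that strips exactly one level of encoding. The function names are hypothetical, and the sketch assumes your URLs never need a literal `%` followed by two hex digits.

```python
import re
from urllib.parse import quote, unquote

def encode_idempotent(path: str) -> str:
    """Encode a path so that calling this twice gives the same result.

    Decoding first means an already-encoded input is not encoded again.
    Assumption: the path never contains a literal "%XX" sequence.
    """
    return quote(unquote(path))

def strip_one_encoding_level(path: str) -> str:
    """Turn "%25XX" back into "%XX", leaving correctly encoded parts untouched."""
    return re.sub(r"%25(?=[0-9A-Fa-f]{2})", "%", path)

print(encode_idempotent("/product/red shoes"))                 # /product/red%20shoes
print(encode_idempotent("/product/red%20shoes"))               # /product/red%20shoes
print(strip_one_encoding_level("/a%20b/red%2520shoes"))        # /a%20b/red%20shoes
```

Fixing the encoder at the source is preferable; the repair step is a fallback for incoming URLs you cannot control.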

What to do if the problem persists despite fixes?

Some double encoding cases are generated dynamically by client-side scripts (JavaScript) that modify URLs before submission. Audit your event listeners, routing functions, and AJAX calls.

If the architecture is too complex or involves many technical layers, this type of diagnosis can quickly become time-consuming. A technical SEO agency can provide a thorough crawl log audit, a precise mapping of encoding points, and a lasting correction with less risk of regression.

❓ Frequently Asked Questions

Can Google decode a URL that has been encoded three or more times?

Does double encoding also affect URL parameters (query strings)?

Can a sitemap contain double-encoded URLs without my knowing?

Should you use 301 redirects to fix double-encoded URLs?

Do other search engines (Bing, Yandex) behave the same way?

Other SEO insights extracted from this same Google Search Central video · published on 03/02/2026
