Official statement

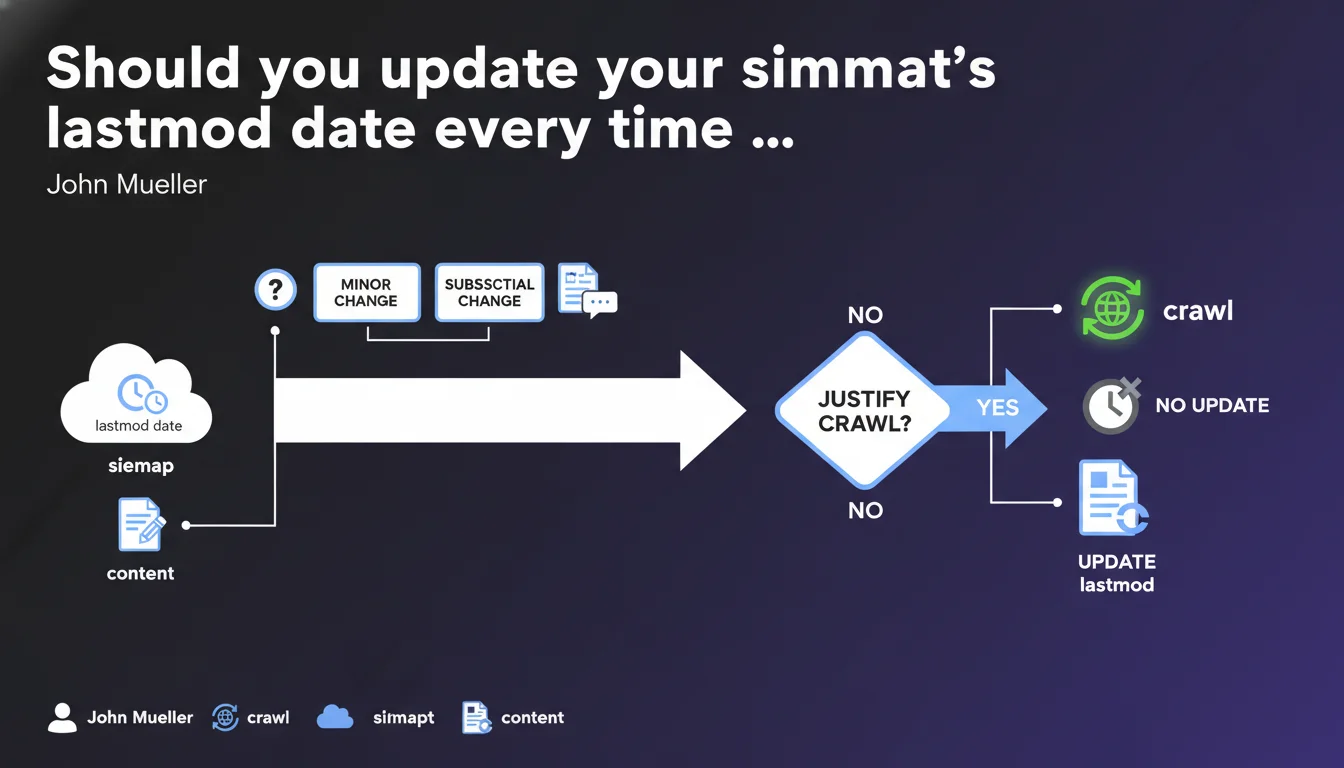

Google clarifies that the lastmod tag in an XML sitemap should only be modified when a content change truly justifies a new crawl. Cosmetic or minor updates do not warrant a new date. If comments are essential to the page's content, their publication can justify a lastmod update.

What you need to understand

Why does Google insist on the notion of "significant changes"?

The lastmod value in an XML sitemap helps Googlebot prioritize its crawl budget by flagging pages that deserve a fresh visit. If you update lastmod for every minor modification (fixing a typo, adjusting CSS, changing a footer date), you create noise, and Googlebot may eventually learn to ignore your signals.

Mueller's message is clear: this tag must reflect a substantial change in visible content. A comprehensive article rewrite, an important new section, or a major data update all justify a new date.

What concretely does "change sufficient to justify a new crawl" mean?

Google doesn't provide a precise metric, and that's intentional. A "sufficient change" depends on the nature of your content. For an e-commerce product page, updating price or availability can be significant. For a blog article, fixing two sentences probably isn't.

The idea is straightforward: if a user returning to this page finds something new or improved that changes their understanding of the subject, then yes, update lastmod. Otherwise, leave it as is.

Can comments really trigger a lastmod update?

Mueller specifies: "If comments are critical to the page". This nuance matters. On a news site or forum where discussions enrich the main content, comments are an integral part of the page's value. In that case, adding them can justify a new date.

Conversely, if comments are peripheral (a blog where three people post "great article"), they don't deserve to trigger lastmod. Again, Google asks you to exercise judgment.

- The lastmod tag should signal substantial changes, not cosmetic ones

- Too many minor updates = dilution of the signal sent to Googlebot

- Comments only count if they bring critical value to the content

- The absence of a precise metric requires editorial judgment on your part
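Since Google gives no metric, any threshold is an editorial choice. As a rough illustration only, the sketch below measures how much of a page's visible text changed between two versions and compares it to a cutoff. The 10% threshold and the function names are assumptions made for this example, not values recommended by Google.

```python
import difflib

# Hypothetical cutoff: the share of visible text that must differ before a
# change counts as "significant". This is an assumption; tune it per page type.
SIGNIFICANT_CHANGE_THRESHOLD = 0.10


def changed_fraction(old_text: str, new_text: str) -> float:
    """Return the share of word-level content that differs (0.0 to 1.0)."""
    old_words = old_text.split()
    new_words = new_text.split()
    matcher = difflib.SequenceMatcher(a=old_words, b=new_words, autojunk=False)
    # ratio() is 1.0 for identical sequences and 0.0 for nothing in common.
    return 1.0 - matcher.ratio()


def should_update_lastmod(old_text: str, new_text: str,
                          threshold: float = SIGNIFICANT_CHANGE_THRESHOLD) -> bool:
    """True when the visible text changed enough to justify a new lastmod."""
    return changed_fraction(old_text, new_text) >= threshold
```

A ratio like this works best on long pages. On very short texts a single changed word already represents a large share, so a word-count floor or a human decision may be the better rule there.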

SEO Expert opinion

Is this recommendation really applied by Googlebot?

Let's be honest: we lack public data proving that Google actively penalizes sites that update lastmod too often. What is certain is that unnecessary crawls consume budget without benefit, and Google's sitemap documentation states that lastmod is used only when it is consistently and verifiably accurate, so noisy values risk being ignored.

In practice, many sites completely neglect this tag, either omitting it or automatically updating it with every deployment. And they don't necessarily suffer for it. [To verify]: the real impact of poorly managed lastmod on crawling remains difficult to measure.

What risks come with modifying this date too often?

The main risk isn't a direct penalty, but a waste of crawl budget. If Googlebot repeatedly visits a page and finds it hasn't really changed, it may eventually give less weight to the dates in your sitemaps. Result: your actual updates take longer to be discovered.

On a small 100-page site, this probably isn't an issue. On a site with thousands, or even millions, of pages, every signal matters. And that's where rigor becomes critical.

Should you completely remove lastmod if you can't manage it properly?

This is a defensible option. It's better not to use lastmod than to use it poorly. Google crawls your pages anyway based on other criteria such as popularity, historical update frequency, and external signals.

If your CMS updates this tag automatically without discrimination and you don't have resources to refine the logic, disable it. You probably won't lose much. The XML sitemap remains useful for initial URL discovery, even without lastmod.
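As an illustration of that fallback, here is a minimal sketch of a sitemap generator that writes a lastmod element only when a trustworthy date is available and otherwise emits the URL alone. The `build_sitemap` function and its input shape are assumptions made for this example; they are not taken from any particular CMS or plugin.

```python
import xml.etree.ElementTree as ET
from datetime import date
from typing import Optional

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_sitemap(entries: list[tuple[str, Optional[date]]]) -> str:
    """Build a sitemap; <lastmod> is written only when a date is given."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for loc, last_modified in entries:
        url = ET.SubElement(urlset, "url")
        ET.SubElement(url, "loc").text = loc
        if last_modified is not None:
            # Only pages with a reliable editorial date get a lastmod.
            ET.SubElement(url, "lastmod").text = last_modified.isoformat()
    return ET.tostring(urlset, encoding="unicode")


if __name__ == "__main__":
    print(build_sitemap([
        ("https://example.com/guide", date(2023, 1, 31)),  # date is trusted
        ("https://example.com/about", None),               # no lastmod emitted
    ]))
```

Passing `None` for every entry gives the "no lastmod at all" variant, which still lets the sitemap do its URL discovery job.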

Practical impact and recommendations

How do you determine if a modification justifies a lastmod update?

Ask yourself this: would a regular user perceive a notable change? If you've added an entire section, updated key statistics, or corrected a major factual error, yes. If you've changed a word or adjusted an invisible meta tag, no.

For news or real-time data sites, the threshold will be lower. For "evergreen" content pages, be more selective. The important thing is to have a consistent policy across your site.

What mistakes should you absolutely avoid?

Never tie lastmod to the date of your site's last technical deployment. A production push doesn't mean that all your pages have changed. Yet some CMSs and scripts do this by default, and it's a classic error.

Also avoid updating lastmod for purely visual changes: a new button color, adjusted typography, a modified menu. These elements don't impact indexable content. Googlebot doesn't care about them.
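One way to keep visual or technical changes from touching lastmod is to fingerprint only the visible text of a page and compare fingerprints between versions. The sketch below uses Python's standard `html.parser` and `hashlib`. The list of skipped tags, including `nav` and `footer`, is an assumption for this example and would need adapting to your templates.

```python
import hashlib
from html.parser import HTMLParser


class VisibleTextExtractor(HTMLParser):
    """Collect text nodes, skipping non-content elements."""

    # Assumed set of elements that carry no indexable main content.
    SKIPPED_TAGS = {"script", "style", "noscript", "template", "nav", "footer"}

    def __init__(self) -> None:
        super().__init__()
        self._skip_depth = 0
        self.chunks: list[str] = []

    def handle_starttag(self, tag, attrs):
        if tag in self.SKIPPED_TAGS:
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in self.SKIPPED_TAGS and self._skip_depth > 0:
            self._skip_depth -= 1

    def handle_data(self, data):
        if self._skip_depth == 0:
            self.chunks.append(data)


def content_fingerprint(html: str) -> str:
    """Hash the whitespace-normalized visible text of an HTML document."""
    parser = VisibleTextExtractor()
    parser.feed(html)
    parser.close()
    text = " ".join(" ".join(parser.chunks).split())
    return hashlib.sha256(text.encode("utf-8")).hexdigest()
```

If the fingerprint is unchanged after a deployment, the page's lastmod can stay as it is. A changed fingerprint only says that some visible text differs, so it still needs a significance check before the date moves.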

How do you audit and fix an existing sitemap?

Download your XML sitemap and compare lastmod dates with the actual history of your content. If all pages share the same date, or if they're updated daily while content doesn't change, you have a problem. Dig into the generation logic.
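A first pass of this audit can be automated. The sketch below parses a downloaded sitemap with the standard library, counts how many URLs share each lastmod day, and flags the case where one day covers nearly every URL. The 90% share and the function names are assumptions for this example.

```python
import xml.etree.ElementTree as ET
from collections import Counter

NS = {"sm": "http://www.sitemaps.org/schemas/sitemap/0.9"}


def lastmod_distribution(sitemap_xml: str) -> Counter:
    """Count how many URLs share each lastmod day (YYYY-MM-DD)."""
    root = ET.fromstring(sitemap_xml)
    days = Counter()
    for url in root.findall("sm:url", NS):
        lastmod = url.findtext("sm:lastmod", default="", namespaces=NS).strip()
        # Keep only the date part so full timestamps group by day.
        days[lastmod[:10] or "(missing)"] += 1
    return days


def looks_auto_generated(days: Counter, share: float = 0.9) -> bool:
    """Flag sitemaps where a single day covers most of the URLs."""
    total = sum(days.values())
    if total < 2:
        return False
    day, count = days.most_common(1)[0]
    return day != "(missing)" and count / total >= share
```

A flagged sitemap is only a hint. A genuine site-wide content migration would produce the same pattern, so the result should be checked against the real editing history.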

If you use WordPress, check your SEO plugin settings (Yoast SEO, Rank Math, etc.). If you have a custom CMS, review the sitemap generation script. Ideally, you'd link lastmod to an "editorial last modified" field in your database, not a technical timestamp.
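The separation between an editorial date and a technical timestamp can be sketched as follows. The `Page` model, its field names, and the `significant` flag are hypothetical and stand in for whatever your CMS actually stores.

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import Optional


@dataclass
class Page:
    url: str
    body: str
    updated_at: datetime             # technical timestamp, bumped on every save
    editorial_modified_at: datetime  # feeds <lastmod>, bumped only on purpose


def save_page(page: Page, new_body: str, significant: bool,
              now: Optional[datetime] = None) -> Page:
    """Save a page; only significant edits move the editorial date."""
    now = now or datetime.now(timezone.utc)
    page.body = new_body
    page.updated_at = now
    if significant:
        page.editorial_modified_at = now
    return page


def lastmod_value(page: Page) -> str:
    """Value to write in the sitemap, taken from the editorial field only."""
    return page.editorial_modified_at.date().isoformat()
```

With this split, a typo fix or a bulk re-save changes `updated_at` but leaves the sitemap date alone. Who sets `significant` (an editor checkbox, an automated check, or both) is a policy decision.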

- Define a clear policy of what constitutes a "significant change" for each page type

- Audit your current sitemaps: verify that lastmod doesn't change without editorial reason

- Decouple lastmod from the technical deployment date if the two are currently linked

- Disable automatic lastmod updates for comments if they're not critical

- Document your logic so the entire editorial team follows the same rules

- Test on a sample of pages: does Googlebot actually recrawl after your updates?
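The last check in this list can be approximated from server access logs. The sketch below assumes logs in the common "combined" format and identifies Googlebot by user-agent string only. User agents can be spoofed, so a reverse DNS verification would be needed for a reliable audit. The function name and regular expression are assumptions for this example.

```python
import re
from datetime import datetime, timezone
from typing import Iterable, Optional

# Assumes the "combined" access log format; adjust for your server.
LOG_LINE = re.compile(
    r'\[(?P<ts>[^\]]+)\] "(?:GET|HEAD) (?P<path>\S+) [^"]*" \d{3} .*"(?P<ua>[^"]*)"$'
)


def first_googlebot_hit_after(log_lines: Iterable[str], path: str,
                              lastmod: datetime) -> Optional[datetime]:
    """Return the first Googlebot request for `path` at or after `lastmod`."""
    if lastmod.tzinfo is None:
        # Treat naive dates as UTC so they compare with log timestamps.
        lastmod = lastmod.replace(tzinfo=timezone.utc)
    hits = []
    for line in log_lines:
        match = LOG_LINE.search(line.strip())
        if not match or match["path"] != path or "Googlebot" not in match["ua"]:
            continue
        seen = datetime.strptime(match["ts"], "%d/%b/%Y:%H:%M:%S %z")
        if seen >= lastmod:
            hits.append(seen)
    return min(hits, default=None)
```

Running this on a sample of pages after a lastmod change shows how long a recrawl took, or returns `None` if no matching request appears in the logs provided.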

❓ Frequently Asked Questions

What happens if I never update lastmod in my sitemap?

Can I use today's date for all my pages in lastmod?

Do comments on a blog systematically justify a lastmod update?

How do I know whether Googlebot really takes my lastmod tag into account?

Does fixing a spelling mistake justify a new lastmod date?

🎥 From the same video 16

Other SEO insights extracted from this same Google Search Central video · published on 31/01/2023
