Official statement

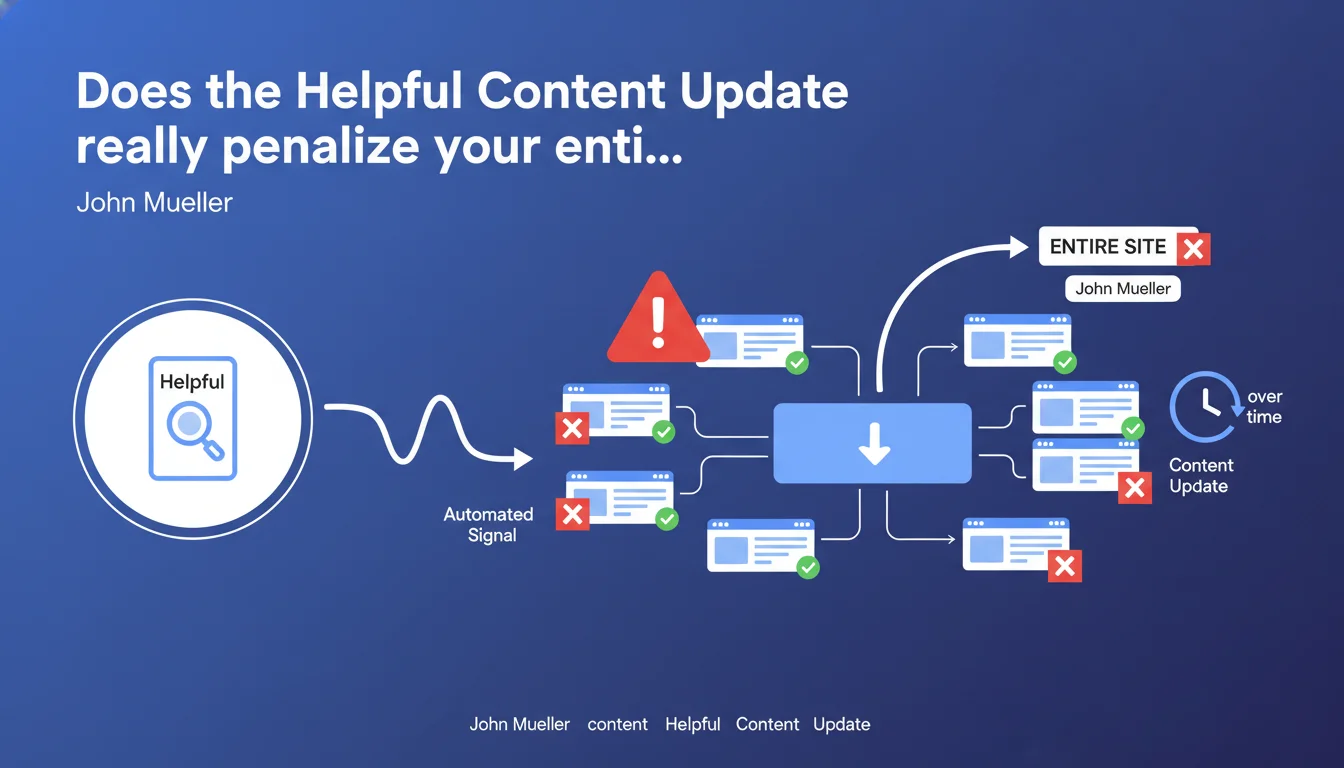

Google confirms that the Helpful Content Update is a site-wide signal: the entire domain can be affected, not just the problematic pages. The system operates automatically, and affected sites have to wait for their improvements to be reflected over time. There is no quick fix.

What you need to understand

What does a "site-wide" signal actually mean in practice?

A site-wide signal means Google evaluates the overall quality of your domain, not page by page. If a significant portion of your content is deemed unhelpful, your entire site suffers—including pages that, in isolation, would be considered high-quality.

This is different from a targeted manual penalty or a localized technical issue. Here, the algorithm in effect applies a general trust weighting to your entire domain. An excellent page on a site that is weak overall will have less visibility than an average page on a site perceived as reliable.

Why does Google emphasize that the process is automated?

Because it means no human intervention, no appeals, and no checkbox in Search Console to request a review. The system runs on its own, re-evaluates sites during its periodic updates, and applies its verdicts without warning.

For practitioners, this means there's no direct lever to speed up recovery. No magic button, no reconsideration form. You fix things, you wait, and you hope the next algorithm refresh works in your favor.

What does "improvements will be taken into account over time" really mean?

Google gives you no timeline. "Over time" could mean the next system update—which might happen several months after you make your corrections. In the meantime, your traffic stays in the gutter.

Let's be honest: this vague wording is frustrating. It implies that even a site that fixes its problems immediately will have to wait until the algorithm cycles back and re-evaluates the entire domain. No guaranteed timeframe, no visibility into the update schedule.

- The Helpful Content signal applies to your entire domain, not individual pages

- The process is 100% automated: neither Google nor webmasters can manually intervene to force a re-evaluation

- Improvements will only be visible after an algorithm refresh, with no guarantee of timing

- An affected site can see quality pages penalized by association with weak content elsewhere on the domain

- There is no official tool to know if your site is affected or to track your recovery

SEO Expert opinion

Is this statement consistent with real-world observations?

Yes and no. We do see sharp, global visibility drops on certain domains after Helpful Content Update rollouts—which confirms the site-wide nature of the signal. But reality is more nuanced than what Google suggests.

Some sites experience partial recoveries between official algorithm updates, suggesting either that the system re-evaluates continuously (but slowly), or that there are undocumented micro-adjustments. [To verify]: Google does not disclose the actual refresh frequency of the HCU signal, which makes it impossible to plan a recovery strategy with a reliable time horizon.

What are the practical consequences for a mixed site (good + bad content)?

This is where it gets tricky. If you have 80% solid content and 20% weak pages (old articles, auto-generated content, thin pages), that 20% can drag down your entire domain. The algorithm doesn't do nuance: it evaluates the overall ratio.

Result: you must either aggressively remove the problematic pages (noindex, or outright deletion served with a 410 Gone status) or improve them until they reach an acceptable standard. Banking on your good pages ranking while mediocre content stays elsewhere on the site? That's over. The domain is judged as a whole.
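To make the removal options concrete, here is a minimal sketch using Python's standard WSGI tooling. It assumes a hypothetical site where the audit produced two sets of paths (both invented for illustration): pages deleted outright, which answer with a 410 Gone status, and pages kept for visitors but hidden from search through an `X-Robots-Tag: noindex` response header. Note that 410 is a status code, not a redirect. This is one possible setup, not a prescribed one; most sites would configure it in their web server or CMS instead.

```python
from wsgiref.util import setup_testing_defaults

# Hypothetical audit decisions; these paths are illustrative only.
REMOVED_PATHS = {"/old/auto-generated-1", "/tags/empty"}  # deleted outright
NOINDEX_PATHS = {"/archive/2015-roundup"}                 # kept for users, hidden from search


def app(environ, start_response):
    """Minimal WSGI app: 410 for removed pages, noindex header for hidden pages."""
    path = environ.get("PATH_INFO", "/")
    if path in REMOVED_PATHS:
        # 410 Gone tells crawlers the removal is intentional and permanent.
        start_response("410 Gone", [("Content-Type", "text/plain; charset=utf-8")])
        return [b"This page has been permanently removed."]
    headers = [("Content-Type", "text/plain; charset=utf-8")]
    if path in NOINDEX_PATHS:
        # X-Robots-Tag is the HTTP-header counterpart of the robots meta tag.
        headers.append(("X-Robots-Tag", "noindex"))
    start_response("200 OK", headers)
    return [b"Page content would be rendered here."]


def fetch(path):
    """Call the app in-process and return (status, headers dict, body)."""
    environ = {}
    setup_testing_defaults(environ)
    environ["PATH_INFO"] = path
    captured = {}

    def start_response(status, headers):
        captured["status"] = status
        captured["headers"] = dict(headers)

    body = b"".join(app(environ, start_response))
    return captured["status"], captured["headers"], body


for path in ("/tags/empty", "/archive/2015-roundup", "/guide"):
    status, headers, _ = fetch(path)
    print(path, status, headers.get("X-Robots-Tag", "-"))
```

The `fetch` helper only exists to inspect responses without starting a server; in production you would check the same thing with your crawler or the URL Inspection tool.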

Does Google give you enough indicators to take action?

No, and that's the major problem. Google tells you the signal is site-wide, it's automated, and it takes time. But it doesn't tell you:

- Whether your site is actually affected (no Search Console notification)

- What proportion of your content is deemed problematic

- What specific types of pages trigger the signal

- How long "over time" actually is in concrete terms

You're flying blind. You can compare your traffic curves against known HCU rollout dates, but that remains indirect interpretation. No official KPI, no quality score displayed. You improve, you wait, you cross your fingers.

Practical impact and recommendations

What should you do concretely if your site is affected?

First step: audit your entire domain's content. Not just pages that ranked well before the drop—your entire site. Identify weak content, thin pages, mass-generated articles, low-value aggregations.
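A first pass of that audit can be scripted. The sketch below assumes you already have a page inventory (for example from a crawl export joined with traffic data) and flags pages for manual review. The `Page` fields, the thresholds, and the sample URLs are all hypothetical, and word count or traffic are only crude proxies: nothing here reproduces how Google assesses helpfulness.

```python
from dataclasses import dataclass


@dataclass
class Page:
    url: str
    word_count: int
    organic_clicks_90d: int
    auto_generated: bool = False


# Hypothetical thresholds; tune them to your own site and niche.
MIN_WORDS = 300
MIN_CLICKS = 10


def flag_for_review(pages):
    """Return (url, reasons) pairs for pages that deserve a manual quality review."""
    flagged = []
    for page in pages:
        reasons = []
        if page.word_count < MIN_WORDS:
            reasons.append("thin")
        if page.organic_clicks_90d < MIN_CLICKS:
            reasons.append("low traffic")
        if page.auto_generated:
            reasons.append("auto-generated")
        if reasons:
            flagged.append((page.url, reasons))
    return flagged


# Illustrative inventory, not real data.
inventory = [
    Page("/guide/complete-setup", 2400, 830),
    Page("/tag/misc", 80, 2),
    Page("/city/widgets-in-springfield", 450, 0, auto_generated=True),
]

for url, reasons in flag_for_review(inventory):
    print(url, "->", ", ".join(reasons))
```

The output is a review queue, not a deletion list: a short page can be perfectly useful, so each flagged URL still needs a human decision.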

Next, make a radical decision: either improve these pages until they show demonstrated expertise, depth, and genuine usefulness, or delete them outright. Letting mediocre content linger is no longer an option, because it contaminates your entire domain.

What mistakes should you absolutely avoid?

Don't try to "dilute" the problem by aggressively adding good content to offset the bad. The algorithm evaluates the overall ratio, not the absolute volume of quality pages. If you have 1,000 weak pages and publish 200 excellent ones, your ratio improves marginally—but you'll likely still fall short of the threshold.
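The arithmetic behind that warning is easy to check. The sketch below takes the 1,000 weak pages and 200 new excellent pages from the example above and assumes, purely for illustration, that the site also starts with 1,000 good pages. The "weak share" is this article's working heuristic, not a metric Google publishes, and no threshold value is known.

```python
def weak_share(weak_pages, good_pages):
    """Fraction of the site's pages that are weak."""
    return weak_pages / (weak_pages + good_pages)


# Assumption: 1,000 good pages already exist next to the 1,000 weak ones.
weak, good = 1000, 1000

baseline = weak_share(weak, good)              # 1000 / 2000
after_adding = weak_share(weak, good + 200)    # 1000 / 2200
after_pruning = weak_share(weak - 600, good)   # 400 / 1400

print(f"baseline:           {baseline:.1%}")       # 50.0%
print(f"after adding 200:   {after_adding:.1%}")   # 45.5%
print(f"after removing 600: {after_pruning:.1%}")  # 28.6%
```

Under these assumptions, publishing 200 excellent pages moves the weak share by less than five points, while removing 600 weak pages nearly halves it.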

Another trap: passively waiting for the next update to "fix" things. If you change nothing, the algorithm will re-evaluate your site and reach the same conclusion. Improvements must be substantial—we're talking deep editorial overhaul, not cosmetic tweaks.

How can you verify that your fixes are working?

It's impossible to know before the next algorithm refresh. You can monitor your organic traffic curves, cross-reference them with official HCU update announcements (when Google bothers to announce them), but there's no real-time feedback.

The only indirect indicator: if you've massively cleaned up or improved your content and see no recovery during the next documented update, it means your corrections were not enough, at least in the algorithm's judgment. Back to square one.
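The cross-referencing described above can be sketched as a simple before/after comparison around an announced update date. The code assumes you have daily organic clicks keyed by date (for instance from a Search Console performance export); the function name, the 14-day window, the event date, and the click figures are all placeholders, not real rollout data. A drop or rise around a date is only a correlation and proves nothing on its own.

```python
from datetime import date, timedelta
from statistics import mean


def change_around(daily_clicks, event_day, window_days=14):
    """Relative change in mean daily clicks, window before vs. window after event_day."""
    before, after = [], []
    for offset in range(1, window_days + 1):
        earlier = event_day - timedelta(days=offset)
        later = event_day + timedelta(days=offset)
        if earlier in daily_clicks:
            before.append(daily_clicks[earlier])
        if later in daily_clicks:
            after.append(daily_clicks[later])
    if not before or not after or mean(before) == 0:
        return None  # not enough data to compare
    return (mean(after) - mean(before)) / mean(before)


# Placeholder date and synthetic traffic: 1,000 clicks/day before, 600 after.
update_day = date(2023, 1, 15)
clicks = {
    update_day + timedelta(days=offset): (1000 if offset < 0 else 600)
    for offset in range(-14, 15)
}

change = change_around(clicks, update_day)
print(f"change around update: {change:+.0%}")  # -40%
```

Run the same comparison for each documented update after your cleanup, and keep the dates of your own changes alongside so you can tell the two apart.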

- Conduct an exhaustive audit of all domain content, not just high-traffic pages

- Identify weak pages: thin content, auto-generated, low expertise, low utility

- Decide for each problem page: deep improvement or removal (noindex, 410 Gone, or a 301 redirect to a relevant page)

- Don't offset bad content with good—delete the bad first

- Document changes (dates, affected pages) so you can correlate with future traffic fluctuations

- Monitor official HCU update announcements to identify potential re-evaluation windows

- Accept that there is no immediate feedback and recovery will take several months minimum

❓ Frequently Asked Questions

How long do you have to wait to see a recovery after fixing your content?

Can you be hit by the Helpful Content Update even if only part of the site has weak content?

Is there a Search Console notification that tells you whether your site is affected?

Do you have to delete all low-quality pages, or can you simply improve them?

Can the Helpful Content Update affect subdomains independently of the main domain?

🎥 From the same video 13

Other SEO insights extracted from this same Google Search Central video · published on 28/09/2022
