Official statement

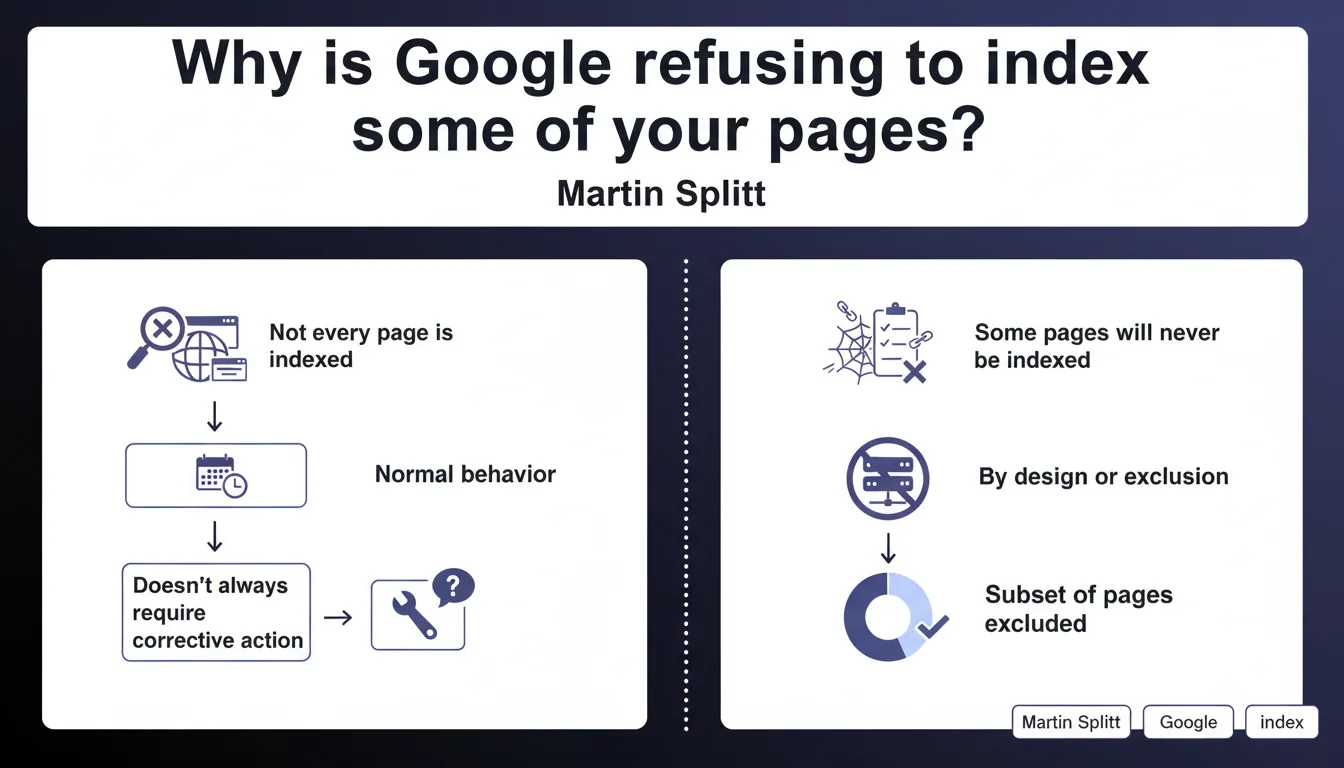

Google does not systematically index every page on a website, and this is intentional behavior. Some pages will remain outside the index without representing a technical problem to fix. The SEO challenge: identify which pages truly deserve to be indexed and accept that not all of them will be.

What you need to understand

Does Google really index everything it crawls?

No, and this is a fundamental distinction. Crawling and indexing are two separate processes. Googlebot can crawl a page and still decide not to add it to the index.

Martin Splitt confirms it: some pages will never be indexed, and this is normal search engine behavior. No bug, no penalty: just an algorithmic decision based on criteria Google considers relevant.

What types of pages does Google exclude from its index?

Low-value content pages are the first to be affected: duplicate content, empty tag pages, redundant navigation facets, unnecessary paginated archives. Anything that doesn't serve the end user risks staying out of the index.

Technical pages as well: internal search results, login pages, shopping carts, order confirmations. Google has no interest in ranking them — and neither should you, normally.

Is this non-indexation permanent?

Not necessarily. A page ignored today can be indexed tomorrow if its content evolves, if it receives relevant internal or external links, or if its perceived value changes in the algorithm's eyes.

But some pages will indeed remain out of index permanently. And that's precisely where Google asks SEOs to let go: not all pages are meant to be indexed.

- Crawl ≠ indexation: Google can explore without indexing

- Low-value pages are naturally excluded

- This non-indexation is not necessarily a technical problem

- Accepting that part of your site remains out of index is part of mature SEO strategy

- Indexation status can evolve over time based on content and signals received

SEO Expert opinion

Does this statement align with what we observe in practice?

Absolutely. For years, we have seen websites with thousands of pages crawled but not indexed in Search Console. SEOs used to panic; now we know this is often a deliberate choice by Google.

The problem is that Google provides no precise criteria for what deserves to be indexed. "Low value-add" remains a fuzzy concept. [To verify]: which exact signals trigger this exclusion? There is no public data on that.

Should we really "accept" this non-indexation without taking action?

It depends. If Google refuses to index your strategic pages (main product pages, cornerstone content, commercial landing pages), then no: there is a problem, and you need to dig deeper for weak content, cannibalization, or a hidden technical block.

On the other hand, if it's pagination pages, sort filters, or chronological archives, then yes, drop it. Focus your efforts on what really matters instead.

What are the gray areas of this statement?

Google doesn't specify how long a page can remain under "observation" before it decides to index or ignore it. A few days? Several months? No numerical data.

Another gray area: the distinction between "a page Google doesn't want to index" and "a page Google can't index" (insufficient crawl budget, overly deep structure, contradictory signals). Splitt is talking about the former, but in practice it is often a mix of both.

Practical impact and recommendations

How do you identify pages that really pose a problem?

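Open Search Console and go to the "Pages" report. Filter on "Crawled – currently not indexed" (pages Googlebot fetched but chose not to index), and separately on "Discovered – currently not indexed" (URLs Google knows about but has not crawled yet). Export the list, then sort it: which URLs are strategic, and which are noise?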
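To speed up that first sort, a short script can bucket the exported URLs by pattern. The sketch below is a minimal illustration, assuming the export is saved as CSV with a column named `URL` (check the header of your own export). The noise patterns (`/search`, `/cart`, `/login`, `/tag/`, `/page/N`, `sort`/`filter` query parameters) are hypothetical examples drawn from the page types listed earlier; replace them with your site's real URL structure. Anything labeled `review` still needs a human decision.

```python
import csv
import io
import re
from urllib.parse import parse_qs, urlparse

# Hypothetical "noise" patterns based on the low-value page types discussed
# above (internal search, cart/login, tag pages, pagination). Adapt to your site.
NOISE_PATH_PATTERNS = [
    re.compile(r"^/search"),
    re.compile(r"^/(cart|login|account|checkout)"),
    re.compile(r"^/tag/"),
    re.compile(r"/page/\d+/?$"),
]
# Hypothetical query parameters that usually create sort/filter facets.
NOISE_QUERY_KEYS = {"sort", "order", "filter", "page"}


def classify_url(url: str) -> str:
    """Return 'noise' if the URL matches a low-value pattern, else 'review'."""
    parsed = urlparse(url)
    if any(pattern.search(parsed.path) for pattern in NOISE_PATH_PATTERNS):
        return "noise"
    if NOISE_QUERY_KEYS & set(parse_qs(parsed.query)):
        return "noise"
    return "review"


def split_export(csv_text: str, url_column: str = "URL") -> dict:
    """Split an exported URL list into 'noise' and 'review' buckets."""
    buckets = {"noise": [], "review": []}
    for row in csv.DictReader(io.StringIO(csv_text)):
        url = row[url_column].strip()
        buckets[classify_url(url)].append(url)
    return buckets
```

Pattern matching only removes the obvious noise; the shorter `review` list is where the strategic judgment happens.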

For each strategic non-indexed page, ask yourself these questions: is the content unique and substantive? Does the page receive quality internal links? Are there contradictory signals (robots meta tag, canonical, etc.)?
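Part of that checklist can be automated on each page's HTML. The following sketch uses only Python's standard `html.parser` to flag a `noindex` in the robots meta tag (or in an `X-Robots-Tag` header value you pass in), a canonical pointing to another URL, and a low word count. The `min_words=150` threshold is an arbitrary assumption, not a Google figure, and the header handling is simplified: it does not parse user-agent-prefixed values such as `googlebot: noindex`. Fetching the pages is deliberately left out.

```python
from html.parser import HTMLParser
from urllib.parse import urljoin


class SignalParser(HTMLParser):
    """Collect robots meta directives, the canonical href and page text."""

    def __init__(self):
        super().__init__()
        self.robots = []       # directives from <meta name="robots|googlebot">
        self.canonical = None  # href of <link rel="canonical">, if any
        self.text_parts = []   # text found outside <script> and <style>
        self._skip_depth = 0   # > 0 while inside <script> or <style>

    def handle_starttag(self, tag, attrs):
        attrs = {name.lower(): (value or "") for name, value in attrs}
        if tag == "meta" and attrs.get("name", "").lower() in ("robots", "googlebot"):
            content = attrs.get("content", "")
            self.robots.extend(d.strip().lower() for d in content.split(",") if d.strip())
        elif tag == "link" and "canonical" in attrs.get("rel", "").lower().split():
            self.canonical = attrs.get("href") or None
        elif tag in ("script", "style"):
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in ("script", "style") and self._skip_depth:
            self._skip_depth -= 1

    def handle_data(self, data):
        if not self._skip_depth:
            self.text_parts.append(data)


def audit_page(url, html, x_robots_tag="", min_words=150):
    """Return human-readable warnings about indexing signals for one page."""
    parser = SignalParser()
    parser.feed(html)
    parser.close()  # flush any buffered text
    warnings = []
    # Simplification: only plain comma-separated header directives are read.
    header_directives = {d.strip().lower() for d in x_robots_tag.split(",") if d.strip()}
    if (set(parser.robots) | header_directives) & {"noindex", "none"}:
        warnings.append("noindex directive")
    if parser.canonical:
        target = urljoin(url, parser.canonical)
        if target != url:
            warnings.append(f"canonical points elsewhere: {target}")
    word_count = len(" ".join(parser.text_parts).split())
    if word_count < min_words:
        warnings.append(f"thin content: {word_count} words")
    return warnings
```

A page that returns an empty list is not guaranteed to be indexed; the script only rules out the most common self-inflicted signals.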

What should you concretely do to maximize indexation chances?

First, improve your internal linking. An orphaned page, or one buried too deep in your site structure, has little chance of being indexed, even if it is crawled. Next, enrich the content where necessary: Google rarely indexes a page with only 50 words.
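Click depth and orphan status can be computed from a crawl of your own site. This sketch assumes you already have the internal link graph as a dictionary mapping each URL to the URLs it links to (for example, exported from a crawler), plus a set of URLs you expect to be indexed, such as those in your XML sitemap. The `max_depth=3` cut-off is a hypothetical threshold, not a documented Google limit.

```python
from collections import deque


def click_depths(links: dict, start: str) -> dict:
    """Breadth-first search over an internal link graph.

    `links` maps each URL to the URLs it links to. Returns the minimum
    number of clicks from `start` to every reachable URL.
    """
    depths = {start: 0}
    queue = deque([start])
    while queue:
        page = queue.popleft()
        for target in links.get(page, ()):
            if target not in depths:  # first visit is the shortest path in BFS
                depths[target] = depths[page] + 1
                queue.append(target)
    return depths


def find_linking_issues(links, start, known_urls, max_depth=3):
    """Split known URLs into orphans (unreachable) and too-deep pages."""
    depths = click_depths(links, start)
    orphans = sorted(u for u in known_urls if u not in depths)
    too_deep = sorted(u for u in known_urls if depths.get(u, 0) > max_depth)
    return orphans, too_deep
```

Breadth-first search is used because it reaches each page first through its shortest path, so the recorded depth is the minimum number of clicks from the homepage.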

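If a page truly has no reason to be indexed, own it: add a noindex directive and remove it from your XML sitemap. Be aware that noindex does not save crawl budget, because Googlebot still has to fetch the page to see the directive. If crawl budget is the real concern, blocking the URLs in robots.txt is the lever, but Google then can no longer see a noindex on them, so choose one mechanism per URL.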
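URLs disallowed in robots.txt are not fetched, so Googlebot never sees a noindex placed on them. Before rolling out noindex tags, it is worth checking that none of the target URLs is also blocked. A minimal sketch with the standard library's `urllib.robotparser`, assuming you have the robots.txt content as a string and a list of URLs you intend to mark noindex. Note that `urllib.robotparser` uses plain prefix matching and does not implement Google's `*` and `$` wildcard extensions, so treat its answer as an approximation.

```python
from urllib.robotparser import RobotFileParser


def noindex_conflicts(robots_txt: str, noindex_urls, user_agent="Googlebot"):
    """Return the noindex URLs that robots.txt also blocks.

    Googlebot does not fetch a disallowed URL, so a noindex on it is never
    seen. Prefix matching only: wildcard rules are not interpreted.
    """
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [url for url in noindex_urls if not parser.can_fetch(user_agent, url)]
```

Each URL returned should get either the robots.txt rule or the noindex, not both.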

- Audit the "Crawled – currently not indexed" and "Discovered – currently not indexed" lists in Search Console

- Identify strategic vs secondary pages in this list

- Strengthen internal linking to strategic ignored pages

- Check that no contradictory signals are present (noindex, canonical, robots.txt)

- Enrich content of strategic pages if too thin

- Add an explicit noindex to pages with no SEO value (or block them in robots.txt if the goal is to save crawl budget, but not both)

- Monitor changes in indexation status over time
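For the monitoring step, comparing two dated snapshots of URL statuses shows what moved between exports. This sketch assumes each snapshot is a dictionary `{url: status}` built from your own exports; the `INDEXED` constant is a hypothetical placeholder for whatever string your export uses for indexed pages.

```python
# Hypothetical label: replace with the exact status string from your exports.
INDEXED = "Indexed"


def diff_index_status(previous: dict, current: dict) -> dict:
    """Group URL status changes between two {url: status} snapshots.

    A URL missing from one snapshot is treated as having status None.
    """
    changes = {"newly_indexed": [], "dropped": [], "other_changes": []}
    for url in sorted(previous.keys() | current.keys()):
        before, after = previous.get(url), current.get(url)
        if before == after:
            continue  # status unchanged
        if after == INDEXED:
            changes["newly_indexed"].append(url)
        elif before == INDEXED:
            changes["dropped"].append((url, after))
        else:
            changes["other_changes"].append((url, before, after))
    return changes
```

Strategic URLs appearing under `dropped` deserve attention first; movement among non-indexed statuses is mostly informational.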

When should you consider external assistance?

If you manage a site with thousands of pages and complex indexing issues (multi-faceted e-commerce, a media site with massive archives, a SaaS platform with user-generated content), the trade-offs quickly become delicate.

Between what must be indexed, what can be indexed, and what absolutely shouldn't be, the line is fine. A specialized SEO agency has the tools and experience to provide an accurate diagnosis and guide your indexing strategy, especially when business stakes are high.

❓ Frequently Asked Questions

How long should you wait before a crawled page gets indexed?

Should you force indexing via the URL Inspection tool in Search Console?

Does an XML sitemap guarantee that the listed URLs will be indexed?

Do non-indexed pages waste crawl budget?

Can you find out the exact reason why a page is not indexed?

Other SEO insights extracted from this same Google Search Central video · published on 22/08/2024
