Official statement

What you need to understand

The "Discovered – Currently Not Indexed" status in Search Console means that Google knows the URL exists but has not yet crawled or indexed it. This can be frustrating, but it often reflects a qualitative selection process by the search engine rather than a simple delay.

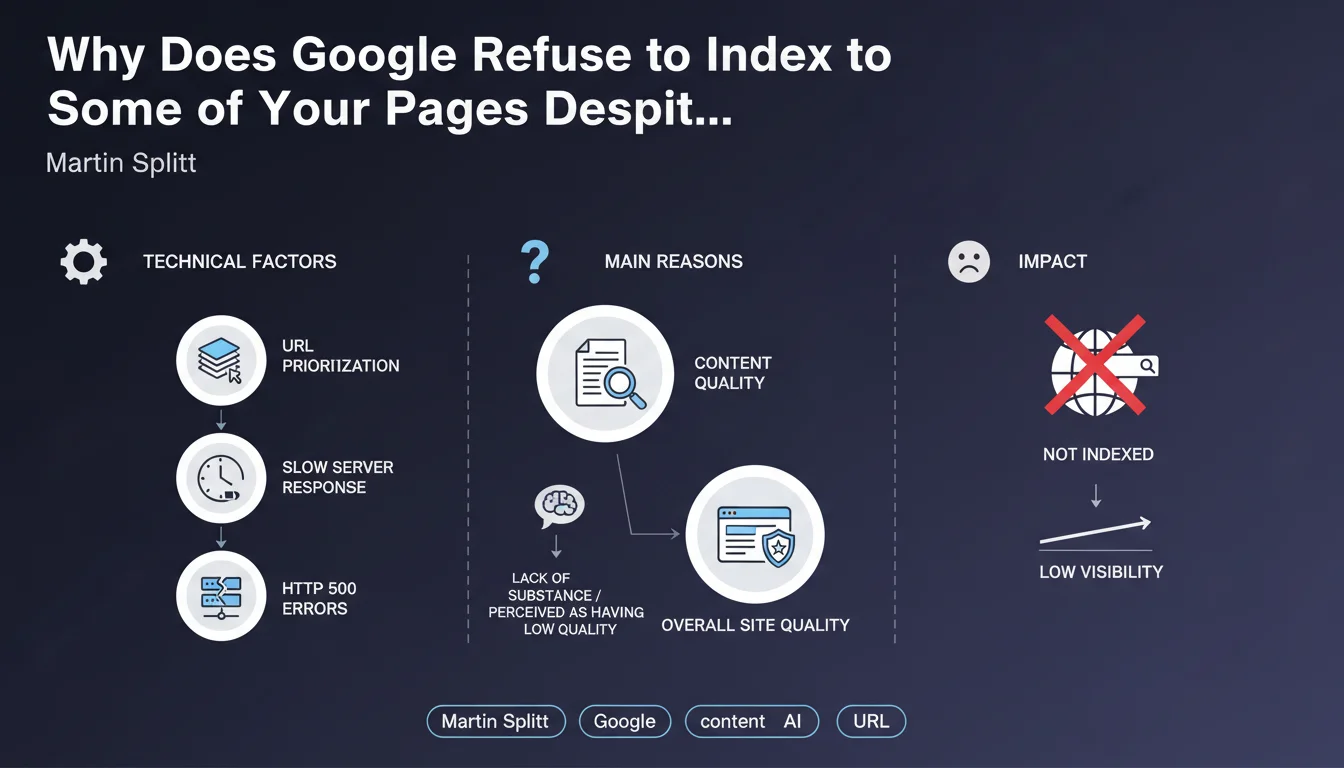

Several technical factors can delay or block indexation: slow server response times, intermittent HTTP 500 errors, or crawl resources being prioritized for other URLs deemed more important. Beyond these purely technical aspects, Google also applies a strict quality filter.

The key point concerns the content itself: Google does not systematically index every page it discovers. If a page lacks substance, adds little value, or doesn't address an identified user need, it can remain in discovery limbo without ever entering the index.

- Indexation is no longer automatic: discovering a page doesn't guarantee its indexation

- Quality trumps quantity: Google evaluates the substance and value of each page

- Technical aspects remain important: slow servers or 500 errors also block indexation

- Crawl budget is redistributed: crawl resources spent on weak pages are not spent on stronger ones, so weak pages can penalize the entire site
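The technical factors listed above (slow responses and intermittent HTTP 500 errors) can be checked against your own server logs. The following is a minimal sketch, assuming log entries have already been parsed into simple records; the `Hit` shape, the `crawl_health` function, and the 500 ms cut-off are illustrative assumptions, not anything Google documents.

```python
from dataclasses import dataclass
from statistics import median


@dataclass
class Hit:
    """One parsed access-log entry (hypothetical, simplified shape)."""
    user_agent: str
    status: int
    response_ms: float


def crawl_health(hits, slow_ms=500.0):
    """Summarize how requests identifying as Googlebot were served.

    slow_ms is an illustrative threshold, not a documented Google limit.
    Matching on the user-agent string does not prove the request came
    from Google, since the header can be spoofed.
    """
    bot_hits = [h for h in hits if "Googlebot" in h.user_agent]
    if not bot_hits:
        return None
    total = len(bot_hits)
    errors = sum(1 for h in bot_hits if 500 <= h.status <= 599)
    slow = sum(1 for h in bot_hits if h.response_ms > slow_ms)
    return {
        "requests": total,
        "server_error_rate": errors / total,
        "slow_rate": slow / total,
        "median_ms": median(h.response_ms for h in bot_hits),
    }


# Made-up sample data, for illustration only.
sample_hits = [
    Hit("Googlebot/2.1", 200, 180.0),
    Hit("Googlebot/2.1", 500, 950.0),
    Hit("Mozilla/5.0", 200, 120.0),
    Hit("Googlebot/2.1", 200, 620.0),
    Hit("Googlebot/2.1", 200, 240.0),
]
print(crawl_health(sample_hits))
```

A rising server error rate or slow rate for crawler traffic is a reason to look at hosting and caching before revisiting the content itself.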

SEO expert opinion

This statement confirms what we've been observing for several years: Google is adopting an increasingly selective approach to indexation. The era when you could get low-value pages indexed in bulk is over. This evolution appears consistent with a desire on Google's part to keep its index relevant rather than simply large.

A crucial and often underestimated point: the notion of "value" is evaluated within the site's overall context. A technically correct page may remain unindexed if the site as a whole contains too much weak content. This is particularly visible on e-commerce sites with thousands of similar product pages and on blogs with recycled content.

However, there are false positives: some quality pages may temporarily remain unindexed after transient technical issues or during crawl spikes. In these cases, improving server performance and requesting indexing again through the URL Inspection tool in Search Console are usually sufficient to resolve the situation.

Practical impact and recommendations

- Audit your unindexed pages: Identify all URLs in Search Console with the "Discovered – Currently Not Indexed" status and analyze their actual quality

- Evaluate content substance: Ask whether this page provides unique value or repeats what already exists on your site or elsewhere

- Eliminate or consolidate weak pages: Remove valueless content, merge similar pages, and redirect to more comprehensive content

- Optimize server performance: Aim for a time to first byte (TTFB) below 500 ms and eliminate the HTTP 500 errors that penalize crawling

- Prioritize with sitemap and internal links: Guide Google toward your strategic pages by including them in your XML sitemap and strengthening their internal linking

- Progressively enrich your content: Add depth, unique data, and original analysis to increase perceived substance

- Monitor the ratio of discovered to indexed pages: A significant gap signals an overall site quality issue that should be corrected as a priority

- Don't force indexation of weak pages: Requesting indexing through the URL Inspection tool for valueless content is unlikely to change Google's verdict
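To make the audit and monitoring steps above concrete, here is a minimal sketch that groups URLs by site section and computes the share that is indexed. It assumes you have already turned a Search Console export into `(url, is_indexed)` pairs; the function names, the input shape, and the example URLs are hypothetical and only serve the illustration.

```python
from collections import defaultdict
from urllib.parse import urlsplit


def section_of(url):
    """Return the first path segment, e.g. '/blog' for https://example.com/blog/post."""
    parts = [p for p in urlsplit(url).path.split("/") if p]
    return "/" + parts[0] if parts else "/"


def indexed_ratio_by_section(rows):
    """rows: iterable of (url, is_indexed) pairs (assumed input shape)."""
    counts = defaultdict(lambda: [0, 0])  # section -> [indexed, total]
    for url, is_indexed in rows:
        entry = counts[section_of(url)]
        entry[1] += 1
        if is_indexed:
            entry[0] += 1
    return {
        section: indexed / total
        for section, (indexed, total) in counts.items()
    }


# Made-up sample data, for illustration only.
sample_rows = [
    ("https://example.com/blog/a", True),
    ("https://example.com/blog/b", True),
    ("https://example.com/tag/x", False),
    ("https://example.com/tag/y", False),
    ("https://example.com/tag/z", True),
    ("https://example.com/", True),
]
for section, ratio in sorted(indexed_ratio_by_section(sample_rows).items()):
    print(f"{section}: {ratio:.0%} indexed")
```

Sections with a low indexed share (tag archives in this made-up sample) are natural candidates for the consolidation or removal described above, while a uniformly low share points to a site-wide issue.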

These optimizations require an in-depth analysis of your content architecture, technical performance, and overall editorial strategy. The boundary between "sufficiently substantial" and "too weak" content can be difficult to draw without experience. For sites with hundreds or thousands of pages, identifying priorities and implementing a structured action plan often call for specialized support to achieve measurable results more quickly.
