Official statement

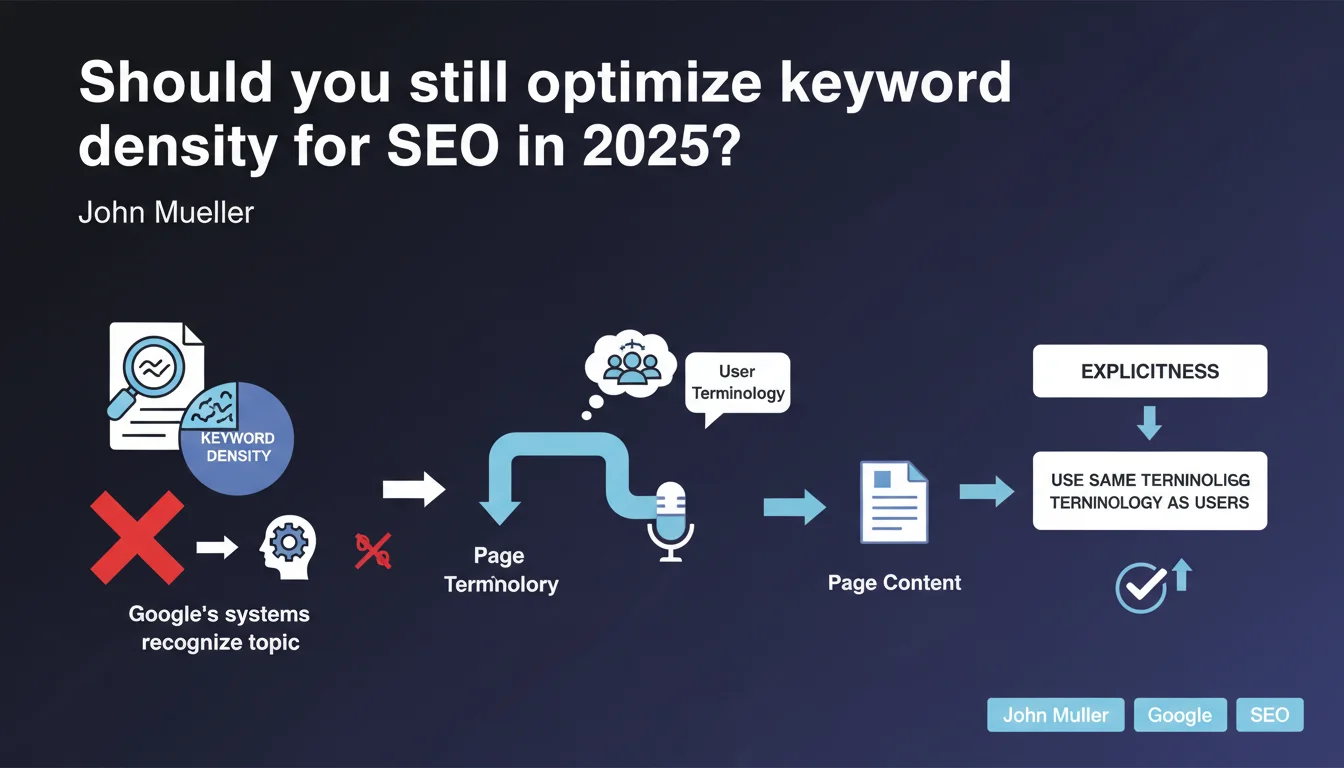

Google does not calculate optimal keyword density and can understand a page's topic without exact term repetition. However, explicitly using the terminology your users employ remains essential for clarifying context and avoiding semantic ambiguities.

What you need to understand

Does Google really analyze pages without counting keywords?

Google's semantic understanding systems (BERT, MUM, etc.) allow it to identify a page's subject even when exact terms are not repeated. The algorithm relies on overall context, named entities, semantic relationships and co-occurrences to determine relevance.

In practice, a page about "electric cars" will be understood as such even if the phrase appears only once — provided the lexical field is coherent (batteries, charging, range, etc.).

Why does Mueller insist on the importance of user terminology?

Because there is often a gap between a brand's technical vocabulary and the terms used by internet users in their searches. If you sell "electric mobility solutions" while your customers search for "cheap electric cars", you create a semantic misalignment problem.

Google can make the connection, but you lose clarity. The more your terminology aligns with your audience's, the less the algorithm has to "interpret" — and the better you rank.
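To make this gap visible on your own pages, here is a minimal sketch that lists the words of user queries that never appear in a page's copy. It is an editorial wording check, not a model of how Google matches content: the tokenizer is deliberately naive, the stopword list is arbitrary, and the function names and sample strings are hypothetical.

```python
import re

# Arbitrary, minimal stopword list used only for this illustration.
STOPWORDS = frozenset({"a", "the", "for", "of", "to", "in", "and"})


def tokens(text):
    """Return the set of lowercase word tokens (naive ASCII tokenizer)."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def missing_user_terms(page_text, user_queries, stopwords=STOPWORDS):
    """Map each query to the sorted list of its words absent from the page."""
    page_vocab = tokens(page_text)
    missing = {}
    for query in user_queries:
        gap = sorted(tokens(query) - page_vocab - stopwords)
        if gap:
            missing[query] = gap
    return missing


# Hypothetical page copy and queries, reusing the example from the text.
page = "We sell electric mobility solutions for modern cities."
queries = ["cheap electric cars", "electric mobility solutions"]
print(missing_user_terms(page, queries))
```

A query that shows up in the result is not necessarily a problem, since synonyms may cover it. It is simply a prompt to ask whether your audience's own words deserve a place on the page.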

Does this statement definitively bury keyword density?

Yes and no. Google does not calculate a magic ratio of "2.5% of the text must be the target keyword". This outdated approach from the early 2000s no longer makes sense with current natural language processing models.

But this does not mean you should completely ignore recurrence. A page that never explicitly mentions its main subject remains fragile — especially in competitive or ambiguous fields.

- Google does not measure an ideal percentage of keywords in your text

- Modern systems rely on contextual semantics and entities

- Using the exact terminology of your users remains a good strategic practice

- The main risk is not "stuffing" but lexical fuzziness that harms understanding

SEO Expert opinion

Is this statement consistent with real-world observations?

Overall, yes. Since BERT (2019), we have seen that pages rank very well without obsessive repetition of the exact keyword. Semantic variations, synonyms and close expressions are valued.

But be careful: this works mainly on queries where the context is clear. On ambiguous or highly technical terms, the absence of the target keyword can still cost you. This remains to be verified on your specific niche, since complex B2B sectors sometimes behave differently.

What nuances should be added to this rule?

First, "no optimal density" does not mean "no mention". If your main keyword does not appear anywhere in structural elements (title, H1, opening paragraphs), you make Google's job difficult — even with the best semantics in the world.

Second, the advice to "speak like your users" is not just an algorithm issue. It is also a matter of conversion rate. A visitor who doesn't find their words on your page leaves — even if Google ranked you well.

In what cases can this statement be misleading?

On very competitive queries, completely ignoring the presence of the target keyword remains risky. Pages ranking in the top 3 on commercial queries often show a reasonable number of occurrences of the exact term in prominent zones (title, H1, opening paragraphs).

Finally, Mueller does not say that over-optimization no longer exists. Artificially stuffing a text can still be penalized, but that is a problem of quality as perceived by the user, not of "density".

Practical impact and recommendations

What should you concretely do with this information?

Stop calculating keyword density percentages. No serious tool should still display "ideal density: 2.8%" in 2025. This metric has no predictive value on your rankings.

Focus instead on two axes: semantic clarity (is your subject explicit from the opening lines?) and lexical alignment (do you speak like your audience or like your technical director?).

How do you verify that your terminology matches that of users?

Analyze Google autocomplete, "related searches" and especially the questions asked in "People Also Ask". These are direct indicators of the actual vocabulary used on your topic.

Use Search Console to identify queries that generate impressions without clicks. Often, this is a sign that your title or description does not use the right terms, even though Google shows your page in the results.
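As an illustration, the sketch below filters a Search Console performance export for queries with impressions but zero clicks. The column names ("Top queries", "Clicks", "Impressions") are an assumption about the export format and are exposed as parameters so you can adjust them. The 100-impression threshold is arbitrary and the sample rows are invented.

```python
import csv
import io


def zero_click_queries(csv_text, min_impressions=100,
                       query_col="Top queries",
                       clicks_col="Clicks",
                       impressions_col="Impressions"):
    """Return (query, impressions) pairs with no clicks, highest impressions first."""
    reader = csv.DictReader(io.StringIO(csv_text))
    hits = []
    for row in reader:
        clicks = int(row[clicks_col])
        impressions = int(row[impressions_col])
        if clicks == 0 and impressions >= min_impressions:
            hits.append((row[query_col], impressions))
    return sorted(hits, key=lambda pair: pair[1], reverse=True)


# Hypothetical sample data, invented for illustration only.
SAMPLE = """Top queries,Clicks,Impressions
cheap electric cars,0,540
electric mobility solutions,12,80
electric car range,0,150
ev charging at home,3,900
"""

print(zero_click_queries(SAMPLE))
```

The queries this returns are candidates for a title or description rewrite using the searcher's own wording. They are not proof of a problem, because a low average position can produce the same pattern.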

- Remove all keyword density calculation from your SEO processes

- Verify that the target keyword appears explicitly in title, H1 and introduction

- Map your content's vocabulary against that of actual searches (Search Console, PAA)

- Enrich the lexical field with entities and synonyms rather than repeating the same term

- Test comprehension: can a human identify the subject in 5 seconds of reading?

- Monitor ambiguous pages (polysemous terms, technical jargon) that require more lexical clarity
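The title, H1 and introduction check from the list above can be automated with the standard library alone. This is a rough sketch under stated assumptions: the first `<p>` element stands in for "the introduction", matching is a plain case-insensitive substring test, and the class name, function name and sample page (including the "Acme" brand) are hypothetical.

```python
from html.parser import HTMLParser


class ZoneCollector(HTMLParser):
    """Collect the text of <title>, the first <h1> and the first <p>."""

    ZONES = ("title", "h1", "p")

    def __init__(self):
        super().__init__()
        self.text = {zone: "" for zone in self.ZONES}
        self._done = set()     # zones that have already been read once
        self._current = None   # zone currently being read, if any

    def handle_starttag(self, tag, attrs):
        if tag in self.ZONES and tag not in self._done and self._current is None:
            self._current = tag

    def handle_endtag(self, tag):
        if tag == self._current:
            self._done.add(tag)
            self._current = None

    def handle_data(self, data):
        if self._current is not None:
            self.text[self._current] += data


def phrase_in_zones(html, phrase):
    """Map each zone to True if it contains the phrase (case-insensitive)."""
    collector = ZoneCollector()
    collector.feed(html)
    needle = phrase.lower()
    return {zone: needle in text.lower() for zone, text in collector.text.items()}


# Hypothetical page, invented for illustration only.
PAGE = """<html><head><title>Electric mobility solutions | Acme</title></head>
<body><h1>Our electric mobility solutions</h1>
<p>Looking for cheap electric cars? Compare range, batteries and charging.</p>
<p>More text.</p></body></html>"""

print(phrase_in_zones(PAGE, "electric cars"))
```

A `False` for the title or H1 does not mean the page cannot rank. It flags pages where the main subject is never stated explicitly in the places readers look first.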

Keyword density is a relic of pre-2010 SEO. Google favors contextual understanding and semantic relevance. But this does not exempt you from being explicit: use your users' terminology, clarify your subject from the opening, and enrich your lexical field without forcing repetition.

These semantic and strategic adjustments can prove complex to roll out at scale, especially if your site has hundreds of pages or reaches varied audiences. Support from a specialized SEO agency allows you to finely audit your lexical gaps, map the vocabulary of your audience segments and implement a coherent editorial strategy across all your content.

❓ Frequently Asked Questions

Can you still use a keyword several times without risking a penalty?

Should you mention the exact keyword, or are synonyms enough?

Are SEO tools that calculate density obsolete?

Does this rule also apply to meta tags and titles?

How can you tell whether your content lacks lexical clarity?

Other SEO insights extracted from this same Google Search Central video · published on 31/01/2023
