Official statement

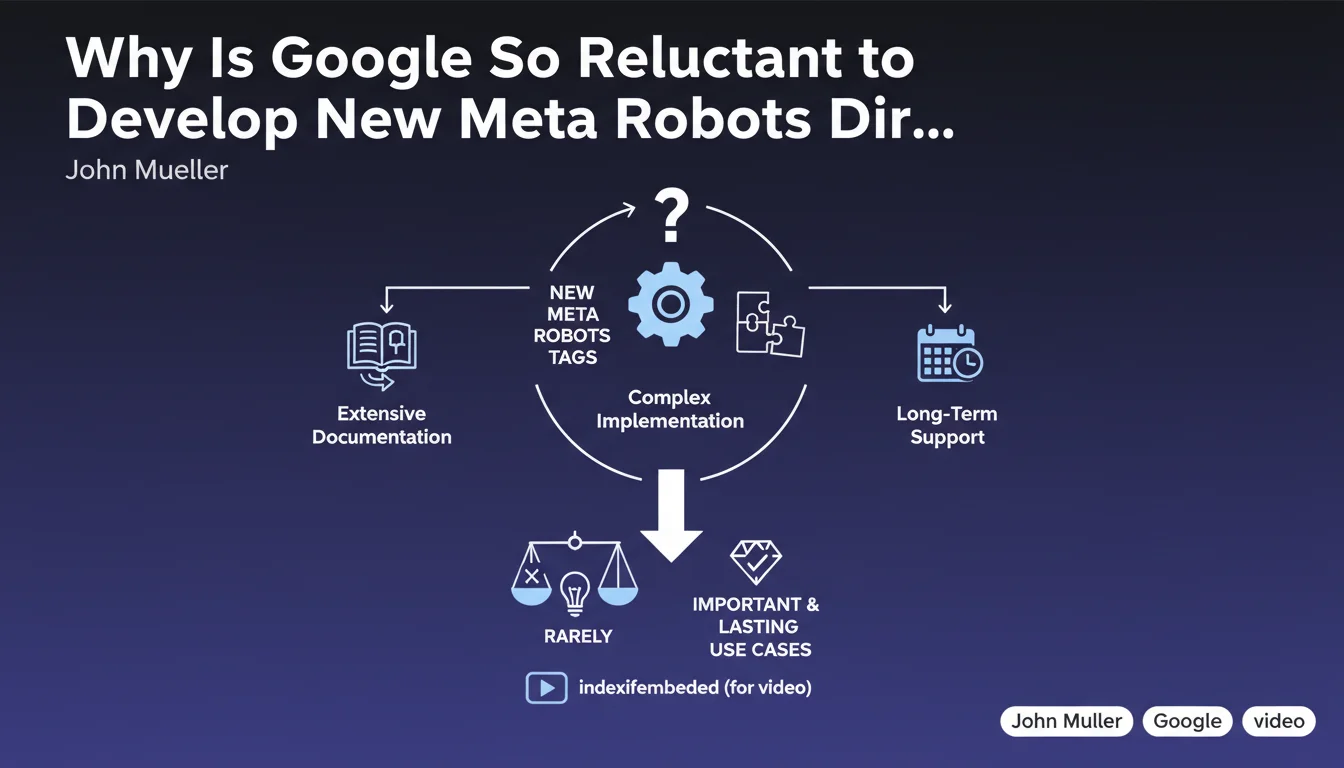

Google severely limits the creation of new meta robots tags because of the long-term maintenance commitment they require. Only critical and sustainable use cases justify their development, such as 'indexifembedded' for videos. Don't count on new robots directives to solve your specific indexation problems.

What you need to understand

What's Holding Google Back From Developing New Robots Directives?

John Mueller's position is clear: each new meta robots tag represents a permanent technical and documentary commitment. Once introduced, Google must support it indefinitely, keep documentation up to date, and manage implementation edge cases.

Concretely, this means that Google's team must balance making life easier for webmasters against the growing complexity of their own infrastructure. Each additional directive multiplies possible combinations and risks of conflicts or ambiguous interpretations.

What Criteria Determine Whether a New Tag Gets Created?

Google only introduces a new directive if the use case is both massive and impossible to solve any other way. The 'indexifembedded' example illustrates this threshold: it addressed a structural need for media publishers whose content (typically video) is embedded on other pages, not a marginal request.

This restrictive approach forces SEO practitioners to work with the existing toolkit rather than waiting for custom solutions. If your indexation problem affects only a handful of sites, it will never justify a new tag.
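To make the 'indexifembedded' case concrete: as documented by Google, the rule only has an effect when paired with noindex, and it allows content to be indexed when it is embedded (for example through an iframe) on another page. The sketch below is a minimal illustration, not an official implementation; the helper names `robots_meta_tag` and `x_robots_header` are hypothetical, and targeting `googlebot` is an assumption based on this being a Google-specific rule.

```python
from html import escape


def robots_meta_tag(directives, user_agent="robots"):
    """Render a robots meta tag for the given directives (hypothetical helper)."""
    content = ", ".join(directives)
    return f'<meta name="{escape(user_agent)}" content="{escape(content)}">'


def x_robots_header(directives):
    """Render the equivalent HTTP response header as a (name, value) pair."""
    return ("X-Robots-Tag", ", ".join(directives))


# Keep the standalone media page out of the index, but allow its content
# to be indexed when it is embedded in another page.
EMBED_ONLY = ["noindex", "indexifembedded"]

print(robots_meta_tag(EMBED_ONLY, user_agent="googlebot"))
print(x_robots_header(EMBED_ONLY))
```

The header variant matters for non-HTML resources, where a meta tag cannot be placed in the document itself.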

How Does This Policy Impact the SEO Toolkit?

The deliberate limit on the number of robots directives forces SEOs to master the existing tags and their combinations. noindex, nofollow, unavailable_after, max-snippet: each has subtleties worth knowing in detail.

- Google prioritizes long-term technical stability over rapid innovation

- New tags are reserved for structural use cases affecting millions of pages

- Practitioners must work with a relatively fixed set of directives that has been stable for years

- The documentation and support for each tag weighs heavily in Google's decisions

- Creative solutions come from intelligent combinations of existing tools, not from waiting for new ones
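Working with combinations starts with knowing exactly what a given `content` value contains. The following is a minimal sketch, assuming comma-separated directives with optional `name:value` pairs; the function names are hypothetical, and the parser does not handle `unavailable_after` dates that themselves contain commas.

```python
def parse_robots_content(content):
    """Split a robots meta 'content' value into {directive: value-or-None}."""
    rules = {}
    for part in content.split(","):
        part = part.strip().lower()
        if not part:
            continue
        # Directives such as max-snippet carry a value after the first colon.
        name, sep, value = part.partition(":")
        rules[name.strip()] = value.strip() if sep else None
    return rules


def blocks_indexing(rules):
    """True if the parsed rules ask for the page to stay out of the index.

    'none' is treated as shorthand for 'noindex, nofollow'.
    """
    return "noindex" in rules or "none" in rules


rules = parse_robots_content("noindex, nofollow, max-snippet:50")
print(rules)
print(blocks_indexing(rules))
```

Normalizing directives into a dictionary like this makes it easier to compare templates against each other and spot combinations whose interaction is unclear.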

SEO Expert opinion

Is This Reluctance Really Anything New?

Let's be honest: Google has never been prolific when it comes to new meta tags. Apart from 'indexifembedded' in 2022, the previous significant additions (max-snippet, max-image-preview, max-video-preview) date back to 2019. What Mueller formalizes here is a policy that has existed de facto for a long time.

What's changing is the transparent acknowledgment of the reasons. And that's where it gets tricky: the question isn't so much whether Google can create new tags, but whether its current architecture allows it to do so without risk. The argument about "maintenance costs" might be hiding a technical reality more constraining than Google is willing to admit.

What Gray Areas Remain in This Statement?

Mueller remains vague about what exactly constitutes an "important and lasting use case." [To be verified] How many sites need to be affected? Over what timeframe? What exact quantitative criteria?

Furthermore, this position completely ignores repeated requests from the SEO community for more granular directives. One example is a tag that would differentiate main content from peripheral content without relying on schema markup. Apparently, this need doesn't cross Google's criticality threshold.

Is This Position Consistent With Observed Practices?

In the field, we observe that Google prefers to modify the behavior of existing tags rather than create new ones. The evolution of 'nofollow' treatment into a "hint" rather than an absolute directive is the perfect example.

What Mueller doesn't say: this strategy transfers complexity from Google's side to webmasters'. Instead of a clear new tag, you end up with nuanced behaviors that are hard to anticipate and undocumented edge cases.

Practical impact and recommendations

What Should You Actually Do With This Information?

Stop waiting for magic solutions from Google. If your indexation problem requires a specific directive that doesn't exist, it probably never will. Focus on optimizing the available levers.

This also means you should meticulously document your current implementation choices, because the tags you use today will most likely still be supported in 5 or 10 years. There is little risk of planned obsolescence, but little evolution to expect either.

What Mistakes Should You Avoid Given This Limitation?

Don't fall into the trap of over-complicating your implementations by combining too many directives. Each additional layer increases the risk of unanticipated side effects.

Another classic mistake: using JavaScript solutions to simulate behaviors that meta tags don't allow. Google does render JavaScript, but adding an abstraction layer makes your architecture fragile and complicates debugging.

How Should You Adapt Strategically to This Constraint?

The most robust approach is to design your site architecture around the directives that exist today, not the ones you wish Google offered. If a feature requires a non-existent tag, rethink the feature.

- Audit your current meta robots tag implementations to identify non-standard or approximate usage

- Document precisely the intent behind each directive you use (to maintain consistency long-term)

- Favor simplicity: one clear directive beats three combined directives whose interaction is unclear

- Train your technical teams on the nuances of existing tags rather than waiting for new tools

- Monitor Google's documentation updates on robots directives — behavior changes are more likely than new features

- Plan alternative architectural solutions for use cases not covered by standard tags
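The audit step in the checklist above can be sketched as a small script that extracts robots meta tags from static HTML and flags directive names it does not recognize. This is a minimal sketch under stated assumptions: `KNOWN_DIRECTIVES` is an illustrative snapshot to check against Google's current documentation, the class and function names are hypothetical, and only the raw HTML is inspected (tags injected by JavaScript are not seen).

```python
from html.parser import HTMLParser

# Illustrative snapshot of directive names; an assumption to verify against
# Google's current robots meta tag documentation before relying on it.
# 'index' and 'follow' are defaults, included here only to avoid noise.
KNOWN_DIRECTIVES = {
    "all", "index", "follow", "none", "noindex", "nofollow", "noarchive",
    "nosnippet", "indexifembedded", "max-snippet", "max-image-preview",
    "max-video-preview", "notranslate", "noimageindex", "unavailable_after",
}
CRAWLER_NAMES = {"robots", "googlebot"}  # meta names inspected by this sketch


class RobotsMetaCollector(HTMLParser):
    """Collect directive names from robots meta tags in static HTML."""

    def __init__(self):
        super().__init__()
        self.directives = []

    def handle_starttag(self, tag, attrs):
        if tag != "meta":
            return
        attr = {key: (value or "") for key, value in attrs}
        if attr.get("name", "").lower() not in CRAWLER_NAMES:
            return
        for part in attr.get("content", "").split(","):
            # Keep only the directive name, dropping any ':value' suffix.
            name = part.partition(":")[0].strip().lower()
            if name:
                self.directives.append(name)


def audit_robots_meta(html):
    """Return (recognized, unrecognized) directive names found in the HTML."""
    collector = RobotsMetaCollector()
    collector.feed(html)
    found = collector.directives
    recognized = [d for d in found if d in KNOWN_DIRECTIVES]
    unrecognized = [d for d in found if d not in KNOWN_DIRECTIVES]
    return recognized, unrecognized


sample = '<head><meta name="robots" content="noindex, no-follow, max-snippet:50"></head>'
print(audit_robots_meta(sample))
```

In this sample, the misspelled `no-follow` ends up in the unrecognized list, which is the kind of approximate usage the audit is meant to surface.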

❓ Frequently Asked Questions

Will Google create a tag to temporarily deindex content?

How many meta robots tags does Google currently support?

Can you combine several directives in a single meta robots tag?

What should you do if no existing tag covers your indexation need?

Is the indexifembedded tag really useful for every site?

🎥 From the same video 13

Other SEO insights extracted from this same Google Search Central video · published on 30/06/2022
