Official statement

Other statements from this video (17)

- Do you really have to choose between www and non-www for SEO?

- Why does Googlebot ignore your buttons, and how can you work around this limitation?

- Are guest posts for backlinks really banned by Google?

- Do category pages really need text to rank well?

- Does semantic HTML really affect Google rankings?

- Should you really worry about 404 errors generated by JSON and JavaScript in GSC?

- Does Google really favor the meta description when the content is thin?

- Should you really block indexing of menus and shared areas of a site?

- Is infinite scroll SEO-compatible if each section has a unique URL?

- Does mobile-first indexing really make the mobile version the sole reference?

- Are PDFs hosted on Google Drive really indexable by Google?

- Why does Google index your URLs even when robots.txt blocks them?

- Should you delete or improve low-quality content on your site?

- Does your CMS really influence Google's assessment of your site?

- Can a noindex on the homepage really make other pages appear first?

- Should you really optimize INP if it's not (yet) a ranking factor?

- Should you stop forcing indexing when Google deindexes your pages?

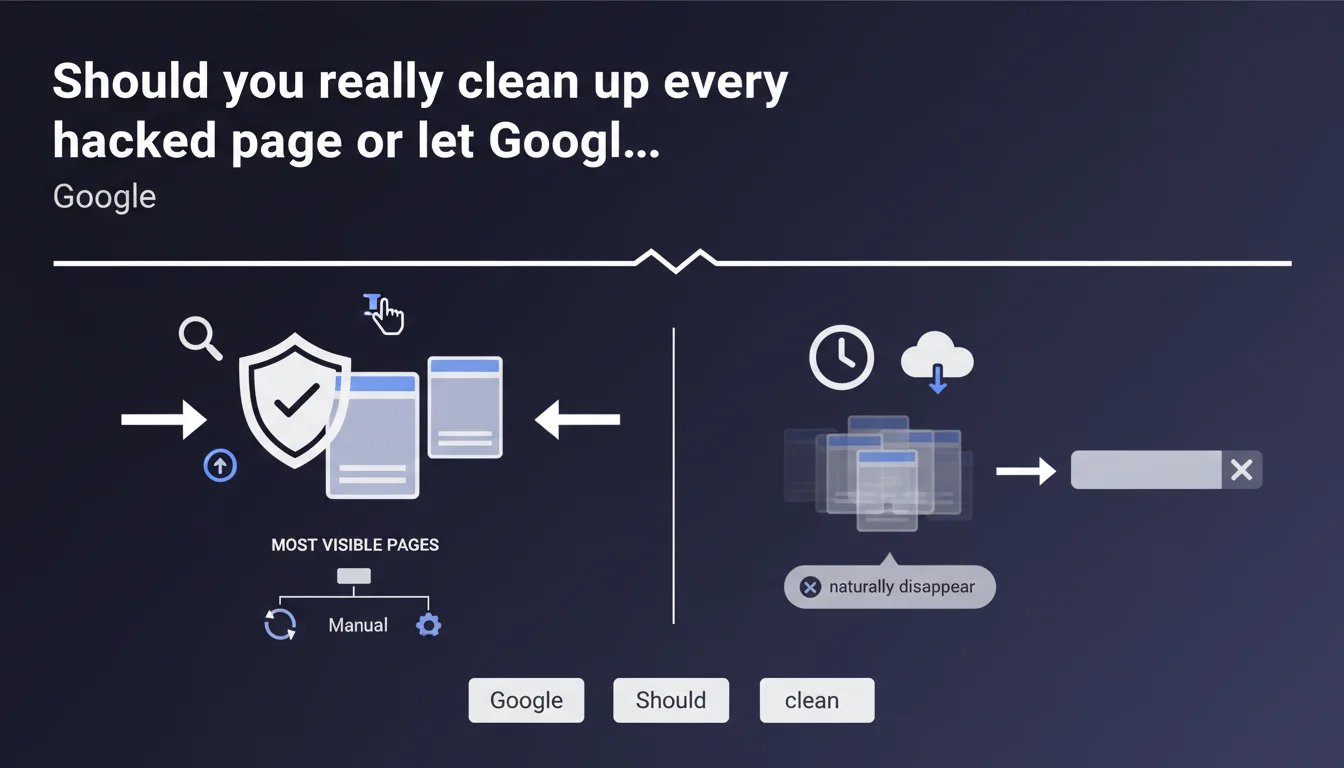

Google recommends concentrating your efforts on the most visible hacked pages and treating them manually (deletion or reindexing). The thousands of other infected pages? Let them naturally disappear from search results without touching them. A pragmatic approach that saves time but raises questions about collateral risks.

What you need to understand

Why does Google recommend not cleaning everything manually?

When a site suffers a massive hack affecting thousands of pages, the temptation is to fix everything immediately. Google takes the opposite approach: focus only on high-visibility pages and let the rest drop out of the index on its own.

The algorithm is designed to progressively detect and devalue fraudulent or low-quality content. Rather than spending weeks identifying and removing each infected URL, Google is betting on its ability to clean up its own index.

What constitutes a "visible page" in this context?

Google doesn't provide a precise definition, which is typical of its vague communication. You can reasonably interpret this as pages that generate organic traffic, appear in SERPs for your strategic keywords, or have been indexed for a long time.

Concretely: your category pages, pillar content, homepage and flagship product pages. Not the thousands of spam pages the hack generated automatically, which never had any legitimacy.

How long does this "natural disappearance" take?

Again, no official figures. Real-world experience shows this can take anywhere from a few weeks to several months depending on how frequently your site is crawled and the scale of the hack.

The larger your crawl budget and the stronger your authority, the more often Google returns to confirm that these pages no longer exist (that they now return 404s). Conversely, a small, rarely crawled site can harbor zombie URLs for several quarters.
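To check whether Google is actually coming back to your hacked URLs, your server access logs are more direct than waiting for Search Console to update. The sketch below assumes logs in the common "combined" format and a hypothetical `HACKED_PATH` pattern describing the spam URLs; both must be adapted to your server and to the hack you suffered. It counts requests whose user agent contains "Googlebot", grouped by day and status code. User agents can be spoofed, so genuine Googlebot traffic should be confirmed with a reverse DNS lookup before you draw conclusions.

```python
import re
from collections import Counter

# Assumes the "combined" log format (a common Apache/Nginx default).
LOG_RE = re.compile(
    r'(?P<ip>\S+) \S+ \S+ \[(?P<day>[^:]+):[^\]]+\] '
    r'"(?P<method>\S+) (?P<path>\S+) [^"]*" (?P<status>\d{3}) \S+ '
    r'"[^"]*" "(?P<agent>[^"]*)"'
)

# Hypothetical pattern for the spam URLs generated by the hack; adapt it.
HACKED_PATH = re.compile(r"/(cheap-|buy-)|/pharma/")


def googlebot_hits_on_hacked_urls(lines):
    """Count Googlebot requests to hacked URLs, keyed by (day, status)."""
    counts = Counter()
    for line in lines:
        m = LOG_RE.match(line)
        if not m:
            continue  # skip lines that are not in the expected format
        if "Googlebot" not in m["agent"]:
            continue  # user agent only: verify real Googlebot via reverse DNS
        if HACKED_PATH.search(m["path"]):
            counts[(m["day"], m["status"])] += 1
    return counts
```

A steady trickle of 404 (or 410) hits on hacked paths suggests Google is recrawling them; weeks without any hit on a small site are consistent with the slow cleanup described above.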

- Prioritize pages generating traffic and those visible in search results

- Let Google naturally deindex the rest rather than waste time treating everything manually

- Natural disappearance works but its timeline varies based on your authority and crawl frequency

- Google provides no time guarantee for the duration of automatic cleanup

SEO Expert opinion

Is this approach really risk-free for your SEO reputation?

Let's be honest: letting thousands of hacked pages "die naturally" isn't neutral. During their slow demise, they continue to exist in the index, potentially accessible through niche searches or long-tail queries.

The risk? A user or a vigilant competitor might stumble upon them and report your site as compromised. Google could then apply a manual penalty far more severe than a progressive algorithmic demotion. [To verify]: how long Google tolerates this slow transition before triggering a security alert.

Is Google telling you the whole truth about the effectiveness of this method?

The recommendation implies the algorithm sorts things out perfectly. In practice, we observe situations where hacked pages persist in the index for months, especially if they've accumulated a few backlinks or artificial "legitimacy" signals.

Google also doesn't specify how to handle cases where the hack injected malicious content into existing legitimate pages instead of, or in addition to, creating new URLs. In this scenario, the "visible pages vs. the rest" distinction blurs and the passive approach is insufficient.

In what cases does this minimalist strategy fail?

It fails when the volume of hacked pages vastly exceeds the volume of legitimate pages. If your site has 500 real pages and the hack generated 50,000, the ratio can keep sending negative signals even after the visible pages have been treated.
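To put a number on this ratio, compare the URLs you know Google has seen (for example an export from Search Console's Page indexing report, or a crawl of your site) with the URLs you actually publish. This sketch assumes your XML sitemap lists every legitimate page, which is worth checking first; anything outside it is treated as a suspect. With 500 real pages and 50,000 hacked ones, suspects make up 50,000 / 50,500, roughly 99% of known URLs.

```python
import xml.etree.ElementTree as ET

SITEMAP_NS = {"sm": "http://www.sitemaps.org/schemas/sitemap/0.9"}


def legit_urls_from_sitemap(xml_text):
    """Collect every <loc> of a standard urlset sitemap."""
    root = ET.fromstring(xml_text)
    return {loc.text.strip() for loc in root.findall("sm:url/sm:loc", SITEMAP_NS)}


def hacked_share(known_urls, legit_urls):
    """Return (suspect URLs, share of suspects among all known URLs).

    Assumes legit_urls is complete: any known URL missing from it is a suspect.
    """
    known = set(known_urls)
    suspects = known - legit_urls
    share = len(suspects) / len(known) if known else 0.0
    return suspects, share
```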

Another limitation: sophisticated hacks that create "almost legitimate" content the algorithm struggles to identify. These pages resemble your site and follow your branding but subtly redirect to pharmaceutical spam. Google can take months to detect them.
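One way to surface these disguised pages is to request the same URL with a Googlebot user agent and with a browser user agent, then look for spam vocabulary that only the bot version contains. This is a rough heuristic: `SPAM_TERMS` is a hypothetical list, and hacks that cloak based on Googlebot's IP ranges or on the Google referrer will not be caught this way. The URL Inspection tool in Search Console remains the reliable way to see what Googlebot actually fetched.

```python
import urllib.request

GOOGLEBOT_UA = "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"
BROWSER_UA = "Mozilla/5.0 (Windows NT 10.0; Win64; x64)"

# Hypothetical spam vocabulary; adjust it to what the hack injected.
SPAM_TERMS = ("viagra", "cialis", "casino", "replica", "payday loan")


def fetch(url, user_agent, timeout=10):
    """Download a page while presenting the given User-Agent header."""
    req = urllib.request.Request(url, headers={"User-Agent": user_agent})
    with urllib.request.urlopen(req, timeout=timeout) as resp:
        return resp.read().decode("utf-8", errors="replace")


def cloaked_terms(html_for_bot, html_for_browser, terms=SPAM_TERMS):
    """Spam terms present in the bot version but absent from the browser version."""
    bot, browser = html_for_bot.lower(), html_for_browser.lower()
    return [t for t in terms if t in bot and t not in browser]
```

Usage: `cloaked_terms(fetch(url, GOOGLEBOT_UA), fetch(url, BROWSER_UA))` returns the terms that appear only when the page believes it is talking to Googlebot.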

Practical impact and recommendations

What must you do immediately after detecting a massive hack?

Secure first: patch the vulnerability before any SEO action. Identifying and treating visible pages makes no sense if the hack continues generating new URLs in the background.

Next, build a list of priority pages: those generating traffic in Search Console, your strategic URLs, those appearing in SERPs for your target queries. Use targeted site:yourdomain.com searches to spot the most exposed infected pages.
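The Performance report in Search Console can be exported to CSV, which makes this triage scriptable. The sketch assumes a pages export with `Top pages`, `Clicks` and `Impressions` columns (check the header of your own file, since export formats can change) and a hypothetical `HACKED_PATH` pattern. It keeps the suspect URLs, ranks them by impressions and then clicks, and caps the list at the 50-100 pages you can realistically treat by hand.

```python
import csv
import io
import re

# Hypothetical pattern for the spam URLs generated by the hack; adapt it.
HACKED_PATH = re.compile(r"/(cheap-|buy-)|/pharma/")


def priority_hacked_pages(csv_text, limit=100):
    """Rank suspected hacked URLs from a Performance export by impressions, then clicks."""
    rows = csv.DictReader(io.StringIO(csv_text))
    suspects = [
        (r["Top pages"], int(r["Clicks"]), int(r["Impressions"]))
        for r in rows
        if HACKED_PATH.search(r["Top pages"])
    ]
    # Most exposed first: impressions, then clicks as a tie-breaker.
    suspects.sort(key=lambda s: (s[2], s[1]), reverse=True)
    return suspects[:limit]
```

The same spam vocabulary can also seed manual checks such as `site:yourdomain.com viagra`, to catch exposed pages that have not yet generated impressions.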

How do you accelerate the natural disappearance of non-priority pages?

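Even if Google says to let things be, you can speed up the process. Make sure your robots.txt file does not block crawling of the hacked URLs: blocking them is a classic mistake that actually slows down 404 detection, because Googlebot cannot see the status code of a page it is not allowed to fetch.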
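Before waiting for the 404s to be noticed, it is worth checking that none of the hacked URLs are disallowed for Googlebot. Python's standard `urllib.robotparser` can do a first pass. Note that it uses simple prefix matching and does not implement Google's `*` and `$` wildcard extensions, so rules relying on them should be checked in Search Console's robots.txt report instead.

```python
from urllib.robotparser import RobotFileParser


def blocked_for_googlebot(robots_txt, urls):
    """Return the URLs that this robots.txt prevents Googlebot from crawling."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())  # parse from text, no network access
    return [u for u in urls if not parser.can_fetch("Googlebot", u)]
```

Any URL this returns is one whose 404 Google cannot observe; removing the matching `Disallow` line lets the cleanup proceed.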

Use the URL removal tool in Search Console only for hacked pages still visible in results and generating unwanted traffic. Its removals are temporary (about six months), so those URLs must also return a 404 or 410. For the rest, let the 404s do their job: Google drops them from the index as it recrawls them.
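If your CMS answers unknown URLs with a 200 page (a soft 404), the hacked URLs will never "die naturally". A minimal guard, sketched here as WSGI middleware on the assumption of a Python stack (the same idea applies to any web server rule), returns an explicit 410 Gone for paths matching a hypothetical `HACKED_PATH` pattern. Google's documentation treats 404 and 410 alike as signals that the content no longer exists, so returning 404 instead is equally valid.

```python
import re

# Hypothetical pattern for the spam URLs generated by the hack; adapt it.
HACKED_PATH = re.compile(r"/(cheap-|buy-)|/pharma/")


def gone_for_hacked_urls(app, pattern=HACKED_PATH):
    """WSGI middleware: answer 410 Gone for hacked URLs, pass the rest through."""
    def middleware(environ, start_response):
        if pattern.search(environ.get("PATH_INFO", "")):
            start_response("410 Gone", [("Content-Type", "text/plain; charset=utf-8")])
            return [b"Gone"]
        return app(environ, start_response)  # legitimate pages are untouched
    return middleware
```

Keep the pattern narrow: a rule that accidentally matches real pages would deindex them just as efficiently.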

What critical mistakes should you avoid in this situation?

Don't mass-block thousands of URLs in robots.txt thinking you'll speed up the process. You prevent Google from noticing their disappearance, which freezes the situation. Don't create 301 redirects from hacked pages to healthy ones either: you may transfer negative signals.

Avoid deleting and then immediately recreating legitimate pages that were compromised. It is better to clean them thoroughly and request reindexing with the URL Inspection tool in Search Console.
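For legitimate pages that were modified rather than created by the hack, a quick scan for outbound links and external scripts or iframes pointing to unknown hosts helps locate the injected code before you clean and resubmit the page. The sketch below uses Python's standard `html.parser`; the allowlist of hosts is an assumption you must supply, and every finding is a lead to review by hand, since legitimate outbound links will show up too. Comparing against a clean backup of the page remains more reliable when one exists.

```python
from html.parser import HTMLParser
from urllib.parse import urlparse


class InjectionScanner(HTMLParser):
    """Collect links, scripts and iframes pointing to hosts not on an allowlist."""

    def __init__(self, allowed_hosts):
        super().__init__()
        self.allowed = set(allowed_hosts)
        self.findings = []

    def handle_starttag(self, tag, attrs):
        attrs = dict(attrs)
        if tag == "a":
            url = attrs.get("href")
        elif tag in ("script", "iframe"):
            url = attrs.get("src")
        else:
            return
        if not url:
            return
        host = urlparse(url).hostname  # None for relative URLs, which are skipped
        if host and host not in self.allowed:
            self.findings.append((tag, url))


def scan(html, allowed_hosts):
    scanner = InjectionScanner(allowed_hosts)
    scanner.feed(html)
    scanner.close()
    return scanner.findings
```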

- Patch the security vulnerability before any corrective SEO action

- Identify the 50-100 most visible pages and treat them manually (cleanup + reindexing)

- Leave the rest as 404s without blocking crawls in robots.txt

- Use the URL removal tool only for hacked pages still on Google's first page

- Monitor Search Console weekly to track the decline in indexed pages

- Never massively redirect hacked pages to healthy ones

- Document the hack and corrective actions to prevent recurrence
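Weekly monitoring is easier to act on when the trend is computed rather than eyeballed. This sketch assumes you record, once a week, how many hacked URLs are still indexed (however you collect that number, for example from Search Console or repeated `site:` checks). It computes the week-over-week change and flags weeks where the count did not drop, which may mean the vulnerability is still generating URLs or that crawling is blocked.

```python
def weekly_changes(snapshots):
    """snapshots: list of (week_label, indexed_hacked_count), oldest first.

    Returns (week_label, percent change vs. the previous week) pairs.
    """
    changes = []
    for (_, prev), (label, curr) in zip(snapshots, snapshots[1:]):
        pct = (curr - prev) / prev * 100 if prev else 0.0
        changes.append((label, round(pct, 1)))
    return changes


def stalled_weeks(snapshots):
    """Weeks where the number of indexed hacked URLs did not decrease."""
    return [label for label, pct in weekly_changes(snapshots) if pct >= 0]
```

For example, counts of 1000, 800, 600 and 650 give changes of -20.0%, -25.0% and +8.3%, and the last week is flagged for investigation.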

❓ Frequently Asked Questions

How long does it take for hacked pages to disappear naturally from Google's index?

Should I use the URL removal tool for all hacked pages?

What should I do if the hack injected malicious content into my existing legitimate pages?

Can I block hacked pages in robots.txt while waiting for them to disappear?

Does this passive method risk triggering a Google manual penalty?


Other SEO insights extracted from this same Google Search Central video · published on 06/09/2023

