Official statement

Other statements from this video (11)

- Does Google really index your PDFs, or does it convert them first?

- Does the weight of content vary depending on its position in HTML and in PDF?

- Does Google really depend on Adobe to index your PDFs?

- Does Google really index source code like ordinary text?

- Why do source code files struggle to rank in Google?

- Should you really stop storing all your PDFs in a /pdfs/ folder?

- Why does Google never index a standalone image without a hosting page?

- Does Google really index images and videos differently from text?

- Does Google filter personal data before indexing?

- Does the file extension (.html, .php, .txt) affect Google rankings?

- Does Google really index all your XML files?

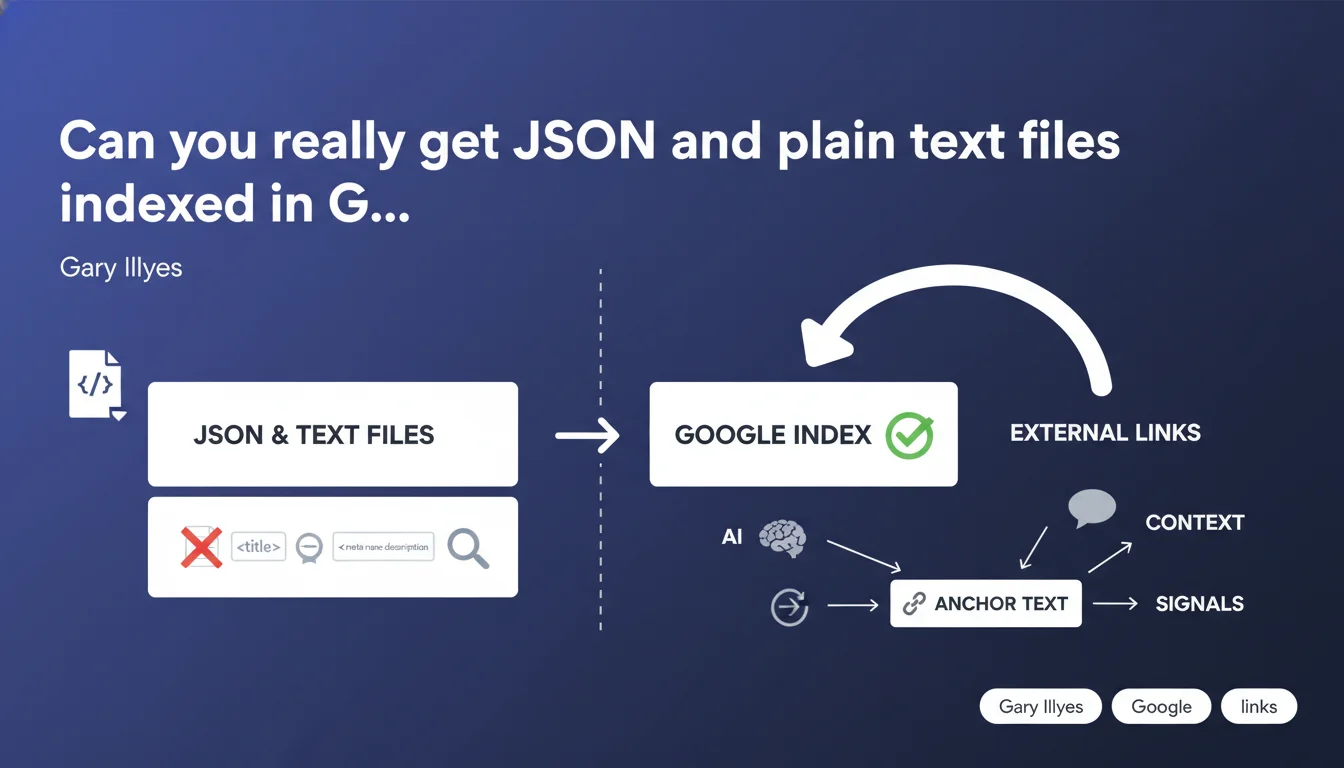

Google can index JSON and plain text files and display them in the SERPs, even when they have no title or metadata. To understand them, the search engine relies heavily on external context: link anchors, surrounding text, and relevance signals from the pages that link to these files. This has direct consequences for your internal and external linking strategy.

What you need to understand

Does Google really index files without classic HTML structure?

Yes. Gary Illyes explicitly confirms that JSON files and plain text files (.txt, .json) can be indexed and appear in search results. No HTML wrapper needed.

The search engine treats these files as standalone documents, even though they lack conventional tags (title, meta description, h1). This nuance is often overlooked: many assume only structured HTML pages are eligible for indexing. That's wrong.

Why is it so difficult to rank these files in search results?

Because they are semantically blind. Without an internal title, without content hierarchy (no headings), Google cannot rely on the usual on-page signals to determine what the file is about.

Result: the search engine must reconstruct meaning from external signals. This is where link anchors become critical — they provide the missing context. A JSON file pointed to by 50 links with descriptive anchors like "2023 pricing API" has a chance to rank. Without these signals, it stays in indexing limbo.

What role do external links and their anchor text play?

They become the semantic pillar. The anchor of an incoming link acts as a title proxy — it tells Google: "this file is about X". The more consistent and descriptive the anchors, the better the search engine can build a reliable thematic representation.

Concretely: a JSON file exposed through a technical API, with no title or metadata, can still climb the SERPs if third-party pages (documentation, forums, blogs) cite it with precise anchors. It is a blunt reminder that external context shapes how Google perceives your own files.

- JSON and text files can be indexed even without classic meta-data

- Lack of internal structure makes ranking difficult but not impossible

- External link anchors provide the missing semantic signals

- Consistency of anchors pointing to these files becomes a critical ranking factor

SEO Expert opinion

Is this statement consistent with real-world observations?

Yes — and it's actually verifiable. We regularly observe .json, .xml, even .txt files in the SERPs, particularly for technical queries (API docs, datasets, public logs). But their position is generally poor unless the file is heavily cited with rich anchors.

This aligns with Gary's explanation: Google can index them, but without external help, it doesn't know what to do with them. Cases where these files rank well? Always the same: numerous links and descriptive anchors from authoritative pages. No magic.

What nuances should we add to this rule?

First point: context doesn't mean volume. Just because a JSON file receives 1000 spammy links with generic anchors ("click here", "download") doesn't mean it will rank well. Semantic quality of anchors beats raw quantity.

Second point: [To be verified] the notion of "enough context" remains fuzzy. Gary gives no threshold and no ratio of links to descriptive anchors. We know it works sometimes, but it is impossible to predict at what point Google moves an orphaned file to "rankable" status. This is frustrating for SEO planning.

In what cases doesn't this rule apply?

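If the file carries an X-Robots-Tag: noindex header, obviously. Note that a robots.txt disallow rule only stops crawling: a blocked URL can still be indexed, without its content, if other pages link to it. Also: if the file is technically accessible but never linked, neither internally nor externally, it will remain invisible. No signals means no ranking, even if Google crawled it.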
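To check whether a robots.txt file disallows a given file URL for Googlebot, Python's standard `urllib.robotparser` can evaluate the rules offline. This is a minimal sketch: the robots.txt content, the example.com URLs and the helper name `is_crawlable` are hypothetical. `urllib.robotparser` uses simple prefix matching, applies the first matching rule, and does not implement Google's `*` and `$` wildcards, so treat its answer as an approximation of Googlebot's behavior. Remember that it answers "can this be crawled?", not "can this be indexed?".

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt for example.com: everything under /exports/ is disallowed.
ROBOTS_TXT = """\
User-agent: *
Disallow: /exports/
"""


def is_crawlable(url: str, robots_txt: str, user_agent: str = "Googlebot") -> bool:
    """Return True if the robots.txt rules allow user_agent to crawl url.

    Approximation only: urllib.robotparser does prefix matching and does not
    implement Google's * and $ wildcard extensions.
    """
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())  # parse() also marks the rules as loaded
    return parser.can_fetch(user_agent, url)


for url in ("https://example.com/exports/prices.json",
            "https://example.com/data/prices.json"):
    state = "crawlable" if is_crawlable(url, ROBOTS_TXT) else "blocked by robots.txt"
    print(url, "->", state)
```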

Another limit: JSON files nested in authenticated flows (private APIs, tokens). Even if Google crawls the public URL, without access to the full content, it can't index anything substantial. External context doesn't compensate for inaccessible content.

Practical impact and recommendations

What should you do concretely if you expose JSON or text files publicly?

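First, decide whether you want them indexed. If not, serve them with an X-Robots-Tag: noindex HTTP header. Don't rely on robots.txt for this: a disallow rule only blocks crawling, and it also prevents Google from ever seeing the noindex header. If yes, deliberately build external context.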

Concretely? Create a documentation page or blog post that introduces the file, with a link to it carrying a descriptive anchor. Example: instead of "[Download the file](file.json)", write "[Download pricing data in JSON format](file.json)". This anchor becomes the implicit title of the file in Google's eyes.
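For files you decide not to index, the X-Robots-Tag header keeps them publicly downloadable while asking search engines not to index them. Below is a minimal sketch, assuming the files are served by a Python WSGI application (an assumption; many sites set this header in the web server or CDN configuration instead). The names `noindex_middleware`, `NOINDEX_SUFFIXES`, `demo_app` and `response_headers` are hypothetical. For the header to be seen, these URLs must stay crawlable, i.e. not disallowed in robots.txt.

```python
from wsgiref.util import setup_testing_defaults

# Assumption: the file types you have decided should NOT be indexed.
NOINDEX_SUFFIXES = (".json", ".txt")


def noindex_middleware(app):
    """Wrap a WSGI app so .json/.txt responses carry X-Robots-Tag: noindex.

    The files stay publicly downloadable; only the indexing directive changes.
    """
    def wrapped(environ, start_response):
        path = environ.get("PATH_INFO", "").lower()

        def tagging_start_response(status, headers, exc_info=None):
            if path.endswith(NOINDEX_SUFFIXES):
                # Replace any existing X-Robots-Tag to avoid conflicting values.
                headers = [(k, v) for k, v in headers if k.lower() != "x-robots-tag"]
                headers.append(("X-Robots-Tag", "noindex"))
            return start_response(status, headers, exc_info)

        return app(environ, tagging_start_response)

    return wrapped


def demo_app(environ, start_response):
    """Hypothetical app that returns the same JSON body for every path."""
    start_response("200 OK", [("Content-Type", "application/json")])
    return [b'{"plan": "pro", "price": 49}']


def response_headers(app, path):
    """Call a WSGI app in-process and return its response headers as a dict."""
    captured = {}

    def start_response(status, headers, exc_info=None):
        captured.update(headers)

    environ = {"PATH_INFO": path}
    setup_testing_defaults(environ)  # fills in the other required WSGI keys
    list(app(environ, start_response))  # consume the body
    return captured


app = noindex_middleware(demo_app)
print(response_headers(app, "/data/prices.json"))
```

You can confirm the header on the live file with the URL Inspection tool in Search Console.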

What mistakes should you absolutely avoid?

Classic mistake: leaving JSON or .txt files publicly accessible without any deliberate internal or external links. Result: Google may still crawl them (through an indirect link or a forgotten sitemap), index them, and display them in the SERPs with an empty or absurd snippet. That is a poor experience for searchers.
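A forgotten sitemap is one of the easiest leaks to check. This sketch uses only Python's standard library to list the .json and .txt URLs declared in a sitemap, so you can decide whether each one belongs there. The sample sitemap, the example.com URLs and the function name are hypothetical, and it handles a plain `<urlset>` sitemap only, not sitemap index files.

```python
import xml.etree.ElementTree as ET
from urllib.parse import urlsplit

SITEMAP_NS = {"sm": "http://www.sitemaps.org/schemas/sitemap/0.9"}
NON_HTML_SUFFIXES = (".json", ".txt")  # file types to flag

# Hypothetical sitemap content; in practice, read your own sitemap.xml.
SAMPLE_SITEMAP = """<urlset xmlns="http://www.sitemaps.org/schemas/sitemap/0.9">
  <url><loc>https://example.com/pricing</loc></url>
  <url><loc>https://example.com/exports/prices.json</loc></url>
  <url><loc>https://example.com/logs/build.txt?v=2</loc></url>
</urlset>"""


def non_html_urls_in_sitemap(sitemap_xml: str) -> list[str]:
    """Return the <loc> URLs whose path ends in .json or .txt."""
    root = ET.fromstring(sitemap_xml)
    flagged = []
    for loc in root.findall("sm:url/sm:loc", SITEMAP_NS):
        url = (loc.text or "").strip()
        # Compare on the path only, so query strings like ?v=2 are ignored.
        if urlsplit(url).path.lower().endswith(NON_HTML_SUFFIXES):
            flagged.append(url)
    return flagged


for url in non_html_urls_in_sitemap(SAMPLE_SITEMAP):
    print("non-HTML file in sitemap:", url)
```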

Another trap: using generic anchors everywhere ("see here", "file", "download"). Without descriptive anchor text, Google has no semantic clues. The file remains technically indexable, but practically invisible.

How do you verify these files are treated correctly?

Use Google Search Console. Run the URL Inspection tool on the JSON or .txt file: is it indexed? If it is, find it in Google's results and look at the snippet Google generates. An empty or incoherent snippet is a symptom of missing context.
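When there are many files to check, the Search Console URL Inspection API returns the same index status as the tool. This is a minimal sketch that only builds the request with Python's standard library, assuming you already hold an OAuth 2.0 access token for a verified property (obtaining it is out of scope). The file URL, property URL and token are placeholders, and the helper names are hypothetical. The API reports indexing status, not the search snippet, so the snippet check still happens in the results page.

```python
import json
import urllib.request

# URL Inspection API endpoint (requires an OAuth 2.0 access token).
ENDPOINT = "https://searchconsole.googleapis.com/v1/urlInspection/index:inspect"


def build_inspection_request(file_url: str, site_url: str,
                             access_token: str) -> urllib.request.Request:
    """Build (but do not send) a URL Inspection request for one file."""
    body = json.dumps({"inspectionUrl": file_url, "siteUrl": site_url}).encode("utf-8")
    return urllib.request.Request(
        ENDPOINT,
        data=body,
        headers={
            "Authorization": f"Bearer {access_token}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


def index_status(response: dict) -> tuple:
    """Extract (verdict, coverageState) from a parsed inspection response."""
    status = response.get("inspectionResult", {}).get("indexStatusResult", {})
    return status.get("verdict"), status.get("coverageState")


req = build_inspection_request(
    "https://example.com/exports/prices.json",  # hypothetical file URL
    "https://example.com/",                      # hypothetical URL-prefix property
    "YOUR_ACCESS_TOKEN",                         # placeholder, not a real token
)
# To call the API for real: index_status(json.load(urllib.request.urlopen(req)))
```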

Next, search for site:yourdomain.com filetype:json (or filetype:txt). This shows indexed files of that type, although site: results are a sample rather than a guaranteed exhaustive list. For each file, check: are there links pointing to it? With what anchors? If the answer is "none", either add context or block indexing.
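To answer "which links point to this file, and with what anchors?" across your own pages, a small parser can list every link to a .json or .txt file together with its anchor text and flag generic anchors. A sketch using Python's `html.parser`; the sample page, the base URL and the `GENERIC` phrase list are illustrative assumptions. It only sees the pages you feed it, so third-party backlinks still need a separate source such as Search Console's Links report.

```python
from html.parser import HTMLParser
from urllib.parse import urljoin, urlsplit

TARGET_SUFFIXES = (".json", ".txt")
# Assumption: anchors treated as "generic"; adapt the list to your site.
GENERIC = {"click here", "here", "download", "file", "see here", "link"}


class FileLinkAudit(HTMLParser):
    """Collect (absolute URL, anchor text) for links to .json/.txt files."""

    def __init__(self, base_url: str):
        super().__init__()
        self.base_url = base_url
        self.links = []    # list of (url, anchor_text)
        self._href = None  # URL of the open <a>, if it points to a target file
        self._text = []

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            href = dict(attrs).get("href")
            if href:
                url = urljoin(self.base_url, href)
                if urlsplit(url).path.lower().endswith(TARGET_SUFFIXES):
                    self._href, self._text = url, []

    def handle_data(self, data):
        if self._href is not None:
            self._text.append(data)

    def handle_endtag(self, tag):
        if tag == "a" and self._href is not None:
            text = " ".join("".join(self._text).split())  # normalize whitespace
            self.links.append((self._href, text))
            self._href = None


def audit(html_page: str, base_url: str):
    """Return (url, anchor_text, is_generic) for each link to a target file."""
    parser = FileLinkAudit(base_url)
    parser.feed(html_page)
    parser.close()
    return [(url, text, text.lower() in GENERIC) for url, text in parser.links]


# Hypothetical documentation page.
PAGE = """
<p>Our <a href="/exports/prices.json">2023 pricing data in JSON format</a> is public.</p>
<p>Raw logs: <a href="logs/build.txt">download</a>.</p>
<p><a href="/about">About us</a></p>
"""

for url, text, generic in audit(PAGE, "https://example.com/docs/"):
    print(url, repr(text), "GENERIC" if generic else "ok")
```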

- Explicitly decide if each JSON/text file should be indexed

- Block indexing with an X-Robots-Tag: noindex header if necessary (robots.txt only blocks crawling)

- Create context pages (docs, blog) that link to these files with descriptive anchors

- Avoid generic anchors ("click here", "download") — favor semantically rich anchors

- Regularly check in Search Console which non-HTML files are indexed

- Audit Google snippets of these files to detect context problems

❓ Frequently Asked Questions

Can a JSON file with no internal links still be indexed by Google?

Should I create a dedicated sitemap for the JSON files I want indexed?

Do internal link anchors work as well as external anchors for providing context?

Should I add meta tags to my JSON files to help Google?

How do I block indexing of a JSON file without blocking public access to it?

Other SEO insights extracted from this same Google Search Central video · published on 08/09/2022
