Official statement

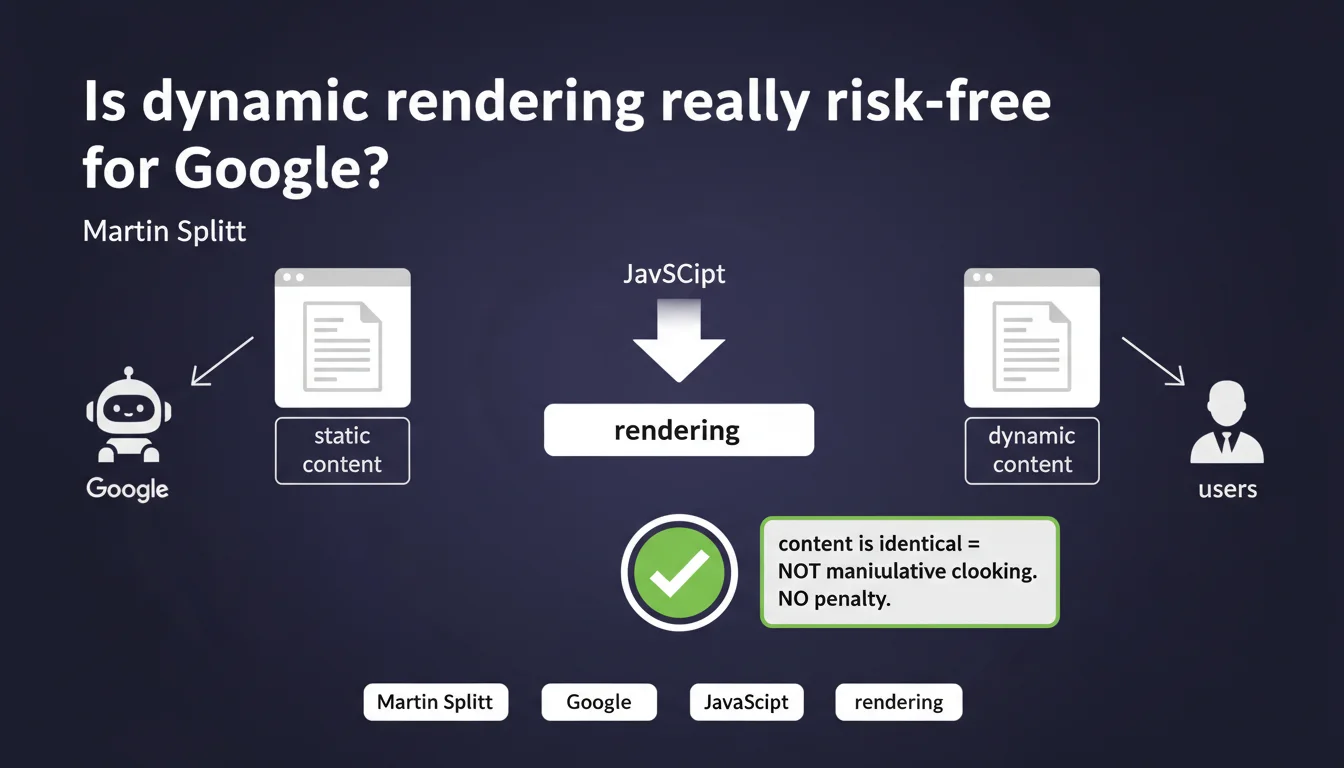

Google confirms that dynamic rendering — serving static HTML to bots and dynamic JavaScript to users — is not considered manipulative cloaking as long as the content remains identical. This practice will not be penalized, which clarifies a gray area for JavaScript-heavy sites that struggle to be crawled effectively.

What you need to understand

Why is this clarification on dynamic rendering coming now?

Dynamic rendering emerged as a workaround for JavaScript-heavy sites that Googlebot fails to index properly. For years, the technique sat in a gray area: officially tolerated, but never fully endorsed.

The concern? That Google would treat it as a form of cloaking, the long-penalized practice of serving different content to bots and users to manipulate rankings. Splitt makes it clear: if the content is identical, it's OK.

What is dynamic rendering, concretely?

We're talking about an architecture where the server detects the visitor's user-agent. If it's Googlebot, it serves a pre-rendered HTML version (via Puppeteer, Rendertron, or a modern CDN). If it's a regular user, it sends the JavaScript application that loads client-side.
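The routing decision described above can be sketched in a few lines. This is a minimal illustration, not a production setup: the crawler token list, `is_crawler`, and `choose_variant` are hypothetical names chosen for the example, and a real deployment would plug the result into its own server or CDN logic.

```python
import re

# Hypothetical list of crawler tokens; extend it to match the bots you actually serve.
BOT_PATTERN = re.compile(r"googlebot|bingbot|duckduckbot|baiduspider|yandex", re.IGNORECASE)


def is_crawler(user_agent: str) -> bool:
    """Return True when the User-Agent header contains a known crawler token."""
    return bool(user_agent) and BOT_PATTERN.search(user_agent) is not None


def choose_variant(user_agent: str) -> str:
    """Pick which response the server should send for this request."""
    # Both variants must expose the same content; only the delivery differs.
    return "prerendered_html" if is_crawler(user_agent) else "client_side_app"
```

Note that the function only chooses how the page is delivered. Nothing in it may change what the page says, which is exactly the condition Splitt sets.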

The objective: work around the limits of crawl budget and of Google's JavaScript rendering, which remains imperfect despite advances. In practice, this approach enables faster indexing without sacrificing user experience.

What's the line between dynamic rendering and manipulative cloaking?

The boundary is simple in theory: content must be identical. No hidden text for bots, no extra links invisible to users, no structured data served only to Googlebot.

In reality, maintaining this equivalence can be harder than expected. A single divergence — a title that changes, a paragraph that differs — and you slip into cloaking. Google gives no numerical tolerance here.
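To make "identical content" testable, you can compare the visible text of both versions. The sketch below assumes you already have two HTML strings: the pre-rendered bot version and the user version after JavaScript has run (for example, a DOM snapshot from a headless browser). The names `VisibleText`, `visible_text`, and `same_text` are illustrative, and the comparison is a rough heuristic, not Google's own check.

```python
from html.parser import HTMLParser


class VisibleText(HTMLParser):
    """Collects text nodes, skipping script/style/noscript/template contents."""

    SKIPPED = {"script", "style", "noscript", "template"}

    def __init__(self):
        super().__init__(convert_charrefs=True)
        self.skip_depth = 0
        self.chunks = []

    def handle_starttag(self, tag, attrs):
        if tag in self.SKIPPED:
            self.skip_depth += 1

    def handle_endtag(self, tag):
        if tag in self.SKIPPED and self.skip_depth > 0:
            self.skip_depth -= 1

    def handle_data(self, data):
        if self.skip_depth == 0:
            self.chunks.append(data)


def visible_text(html: str) -> str:
    """Return the page text with whitespace collapsed to single spaces."""
    parser = VisibleText()
    parser.feed(html)
    parser.close()
    return " ".join(" ".join(parser.chunks).split())


def same_text(bot_html: str, user_html: str) -> bool:
    """True when both versions expose exactly the same text after normalization."""
    return visible_text(bot_html) == visible_text(user_html)
```

Whitespace and inline scripts are ignored on purpose, so that only differences in wording count as divergence.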

- Dynamic rendering is allowed if rendered content is identical for bots and users

- This practice does not constitute manipulative cloaking and will not be penalized

- Strict content equivalence is the only validation criterion, and Google states no tolerance threshold

- Dynamic rendering remains a workaround, not an optimal architecture for the long term

SEO Expert opinion

Is this statement consistent with practices observed in the field?

Yes and no. Across thousands of audited sites, those using dynamic rendering without content divergence indeed suffer no visible manual penalties. However, cases of unintentional cloaking are frequent: a React component that fails to render in the pre-rendered version, a different canonical tag, a text block generated client-side that's missing from the bot version.

Google detects these discrepancies, but penalties aren't always explicit. Sometimes it's a silent ranking loss, slower crawling, or worse: partial indexing without a Search Console alert. [To verify]: Google never communicates the exact tolerance thresholds.

In what cases does this rule no longer apply?

As soon as there's manipulation. If you serve bots content enriched with extra keywords, hidden links, or structured data absent from the user version, you're out of bounds. Splitt talks about identical content, but doesn't specify whether this includes microformats, meta tags, or alt attributes.

Another gray area: sites that dynamically adjust content based on geolocation or device. If Googlebot sees a US desktop version while your French users see localized mobile content, are you cloaking? The statement doesn't address this.

What nuances should be added to this official position?

Splitt only speaks about non-penalization. He doesn't say dynamic rendering improves your SEO performance. In practice, a natively SSR site generally has an advantage: better rendering control, fewer divergence risks, and often better Core Web Vitals.

The other point not addressed: server load. Pre-rendering every page for Googlebot can quickly become resource-intensive, especially on high-volume sites. This technical debt is never mentioned in Google communications.

Practical impact and recommendations

What should you concretely do if you already use dynamic rendering?

First step: validate strict equivalence between the bot version and user version. Use Search Console's URL inspection tool to compare rendered HTML with what a standard browser sees. Don't rely on a simple visual diff — verify the DOM, meta tags, structured data.
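Beyond a visual diff, the comparison of meta tags and structured data can be scripted. The sketch below extracts a handful of signals (title, meta description, meta robots, canonical, JSON-LD) from each version and reports the ones that differ. It assumes both inputs are fully rendered HTML strings; `SeoSignals`, `extract_signals`, and `diff_signals` are hypothetical helper names, and the list of signals is a starting point rather than an exhaustive one.

```python
import json
from html.parser import HTMLParser


class SeoSignals(HTMLParser):
    """Extracts title, meta description/robots, canonical and JSON-LD blocks."""

    def __init__(self):
        super().__init__(convert_charrefs=True)
        self.signals = {"title": "", "description": None, "robots": None,
                        "canonical": None, "json_ld": []}
        self._capture = None  # "title" or "json_ld" while inside those elements
        self._buffer = []

    def handle_starttag(self, tag, attrs):
        a = dict(attrs)
        if tag == "title":
            self._capture, self._buffer = "title", []
        elif tag == "script" and (a.get("type") or "").lower() == "application/ld+json":
            self._capture, self._buffer = "json_ld", []
        elif tag == "meta" and (a.get("name") or "").lower() in ("description", "robots"):
            self.signals[a["name"].lower()] = a.get("content")
        elif tag == "link" and "canonical" in (a.get("rel") or "").lower().split():
            self.signals["canonical"] = a.get("href")

    def handle_data(self, data):
        if self._capture:
            self._buffer.append(data)

    def handle_endtag(self, tag):
        if tag == "title" and self._capture == "title":
            self.signals["title"] = " ".join("".join(self._buffer).split())
            self._capture = None
        elif tag == "script" and self._capture == "json_ld":
            raw = "".join(self._buffer)
            try:
                # Re-serialize with sorted keys so formatting alone is not a divergence
                self.signals["json_ld"].append(json.dumps(json.loads(raw), sort_keys=True))
            except json.JSONDecodeError:
                self.signals["json_ld"].append(raw.strip())
            self._capture = None


def extract_signals(html: str) -> dict:
    parser = SeoSignals()
    parser.feed(html)
    parser.close()
    parser.signals["json_ld"].sort()  # block order should not matter
    return parser.signals


def diff_signals(bot_html: str, user_html: str) -> dict:
    """Map each diverging signal to its (bot value, user value) pair."""
    bot, user = extract_signals(bot_html), extract_signals(user_html)
    return {key: (bot[key], user[key]) for key in bot if bot[key] != user[key]}
```

An empty result means the checked signals match; any key in the result names a signal to investigate.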

Next, automate this verification. Implement non-regression tests that regularly compare both versions. A deployment that introduces divergence can go unnoticed for weeks before causing traffic loss.
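One possible shape for such a non-regression check is sketched below. It assumes you supply two callables, here named `render_as_bot` and `render_as_user` (both hypothetical), that return the fully rendered HTML for a URL as each audience sees it, for instance by driving a headless browser. The default normalization only collapses whitespace; in practice you would likely pass a stricter `normalize` function that keeps just the content you want to compare.

```python
import hashlib
from typing import Callable, Iterable, List

Renderer = Callable[[str], str]  # takes a URL, returns fully rendered HTML


def collapse_whitespace(html: str) -> str:
    """Default normalization: treat any run of whitespace as a single space."""
    return " ".join(html.split())


def fingerprint(html: str, normalize: Callable[[str], str] = collapse_whitespace) -> str:
    """Hash the normalized page so two versions can be compared cheaply."""
    return hashlib.sha256(normalize(html).encode("utf-8")).hexdigest()


def find_divergent_urls(
    urls: Iterable[str],
    render_as_bot: Renderer,
    render_as_user: Renderer,
    normalize: Callable[[str], str] = collapse_whitespace,
) -> List[str]:
    """Return the URLs whose bot and user versions do not match."""
    divergent = []
    for url in urls:
        bot_hash = fingerprint(render_as_bot(url), normalize)
        user_hash = fingerprint(render_as_user(url), normalize)
        if bot_hash != user_hash:
            divergent.append(url)
    return divergent
```

Run against a sample of key URLs after each deployment, a non-empty result can fail the build before the divergence reaches Googlebot.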

What errors should you avoid to not slip into cloaking?

Don't play with gray areas. Never add content invisible to users, even if it's "to help Google understand better." No white text on white background, no CSS blocks with display:none only for bots, no hidden links in the footer.
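A quick way to spot candidates for this kind of hidden content is to scan the bot version for text inside elements hidden inline. The sketch below is a deliberately narrow heuristic: it only sees the `hidden` attribute and inline `style` declarations, not rules from external stylesheets, and legitimate interfaces (menus, tabs) also hide content. Treat hits as items to review against the user version, not as proof of cloaking. `HiddenTextFinder` and `find_hidden_text` are illustrative names.

```python
import re
from html.parser import HTMLParser

HIDDEN_STYLE = re.compile(r"display\s*:\s*none|visibility\s*:\s*hidden", re.IGNORECASE)
VOID_TAGS = {"area", "base", "br", "col", "embed", "hr", "img", "input",
             "link", "meta", "source", "track", "wbr"}


class HiddenTextFinder(HTMLParser):
    """Collects text found inside elements hidden via inline style or `hidden`."""

    def __init__(self):
        super().__init__(convert_charrefs=True)
        self.stack = []        # (tag, is_hidden) for every open element
        self.hidden_text = []

    def handle_starttag(self, tag, attrs):
        if tag in VOID_TAGS:
            return  # void elements never contain text, so they are not tracked
        a = dict(attrs)
        hidden_here = "hidden" in a or bool(HIDDEN_STYLE.search(a.get("style") or ""))
        inherited = bool(self.stack) and self.stack[-1][1]
        self.stack.append((tag, hidden_here or inherited))

    def handle_endtag(self, tag):
        # Pop back to the matching open tag; stray closing tags are ignored
        for i in range(len(self.stack) - 1, -1, -1):
            if self.stack[i][0] == tag:
                del self.stack[i:]
                break

    def handle_data(self, data):
        text = " ".join(data.split())
        if not text or not self.stack:
            return
        tag, hidden = self.stack[-1]
        if hidden and tag not in ("script", "style"):
            self.hidden_text.append(text)


def find_hidden_text(html: str) -> list:
    finder = HiddenTextFinder()
    finder.feed(html)
    finder.close()
    return finder.hidden_text
```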

Another common error: serving an "over-optimized" bot version with H1 headings stuffed with keywords while the user version prioritizes experience. Google detects this, and even without manual action, the algorithm can deprioritize your pages.

How do you verify that your implementation is compliant?

Systematically compare Search Console rendering with a Screaming Frog or OnCrawl crawl in "standard user" mode. Discrepancies should be zero on structuring elements: titles, meta descriptions, text content, internal linking, structured data.
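Internal linking is one of the structuring elements that is easy to compare by script. The sketch below collects same-host links from each version and lists those present in only one of them. It assumes two rendered HTML strings and the page URL as inputs; `LinkCollector`, `internal_links`, and `link_diff` are hypothetical names, and fragments are dropped as a simplifying assumption.

```python
from html.parser import HTMLParser
from urllib.parse import urldefrag, urljoin, urlparse


class LinkCollector(HTMLParser):
    """Collects the href of every <a> element."""

    def __init__(self):
        super().__init__()
        self.hrefs = []

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            href = dict(attrs).get("href")
            if href:
                self.hrefs.append(href)


def internal_links(html: str, page_url: str) -> set:
    """Return absolute, fragment-free links that stay on the page's host."""
    collector = LinkCollector()
    collector.feed(html)
    collector.close()
    host = urlparse(page_url).netloc
    links = set()
    for href in collector.hrefs:
        absolute = urldefrag(urljoin(page_url, href)).url
        if urlparse(absolute).netloc == host:
            links.add(absolute)
    return links


def link_diff(bot_html: str, user_html: str, page_url: str) -> dict:
    """List internal links that appear in only one of the two versions."""
    bot = internal_links(bot_html, page_url)
    user = internal_links(user_html, page_url)
    return {"only_bot": sorted(bot - user), "only_user": sorted(user - bot)}
```

Links that show up under `only_bot` deserve the closest look, since extra links served only to crawlers are exactly what the cloaking rule targets.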

If you detect divergences, you have two options: fix the dynamic rendering implementation to guarantee equivalence, or migrate to a more reliable SSR/SSG architecture. The second option is often preferable in the medium term.

- Regularly compare Googlebot rendering (Search Console) with user rendering (standard browser)

- Automate non-regression tests to detect any divergence after each deployment

- Never add content invisible to users, even "to help Google"

- Verify strict equivalence of structured data, meta tags, titles, internal linking

- Consider migrating to SSR/SSG if dynamic rendering becomes too complex to maintain

❓ Frequently Asked Questions

Does Google recommend dynamic rendering as an SEO solution?

How can I tell whether my site is unintentionally cloaking with dynamic rendering?

Can I serve a mobile version to users and a desktop version to Googlebot?

Must structured data also be identical between the two versions?

Does dynamic rendering affect Core Web Vitals?

Other SEO insights extracted from this same Google Search Central video · published on 20/01/2022
