Official statement

Other statements from this video (11)

- Does crawl frequency really influence SEO rankings?

- Why does Google ignore the lastmod tag in your sitemaps?

- IndexNow and Google: should you really submit your URLs to speed up indexing?

- Should you really ping your sitemap every time you publish?

- Is Google really down more often than before?

- HTTPS and page load speed: should you really worry about them for indexing?

- Why did Google decide to completely overhaul its Webmaster Guidelines?

- Is geographic cloaking really tolerated by Google?

- Is dynamic rendering really risk-free with Google?

- Are multi-location sites doorway pages or a legitimate SEO strategy?

- Will desktop Page Experience signals change the game for your rankings?

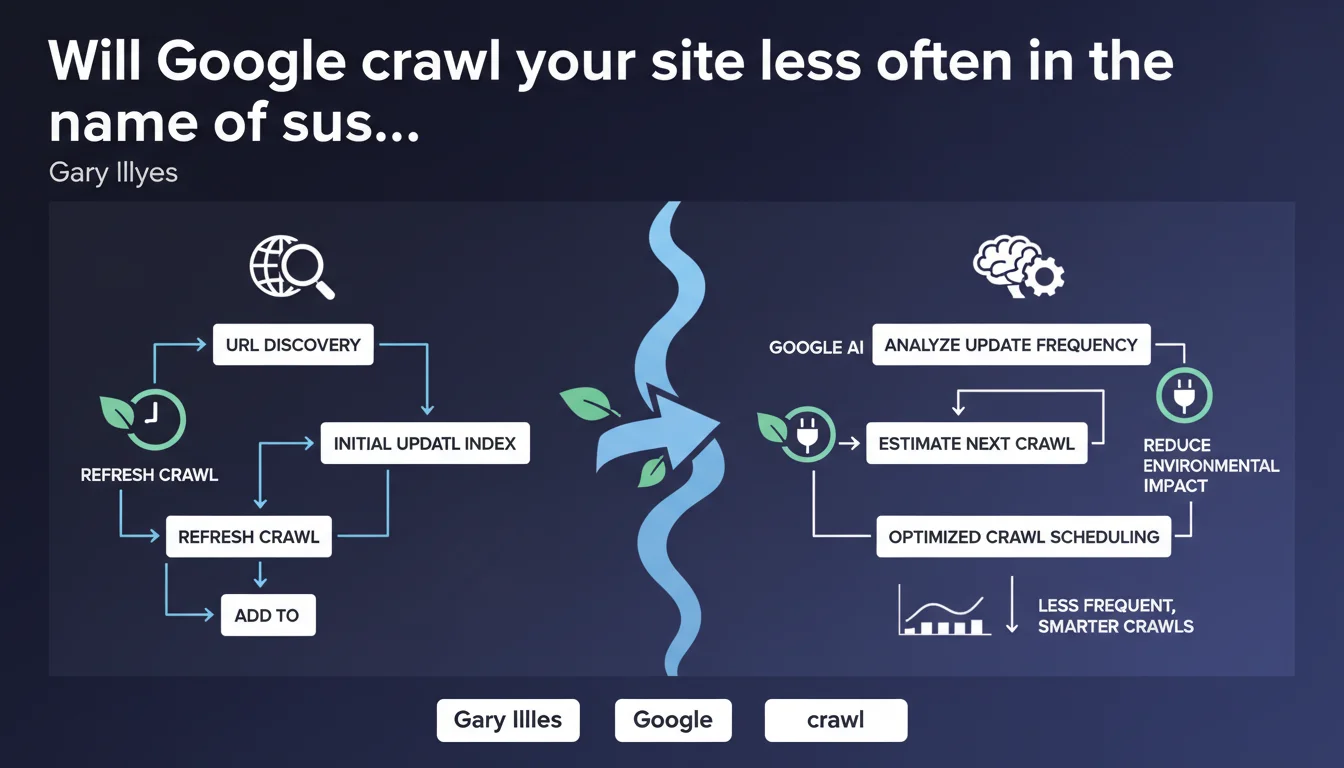

Google is optimizing the frequency of its refresh crawls to reduce its carbon footprint. In practice, the search engine adjusts how often it revisits your pages based on how often they are actually updated. Sites that update their content infrequently could see their crawls spaced further apart.

What you need to understand

What exactly are refresh crawls?

Refresh crawls refer to repeated visits by Googlebot to URLs that have already been discovered and indexed. Unlike the discovery crawl, which fetches a new page for the first time, a refresh crawl checks whether a known page's content has changed.

This distinction is crucial: Google is not talking about reducing the discovery of new pages, but rather streamlining revisits to known URLs. The algorithm attempts to predict when a page will be modified to avoid crawling unchanged content unnecessarily.

Why this optimization now?

The official justification is environmental: reducing the carbon footprint linked to crawling infrastructure. Google doesn't hide the fact that its data centers consume enormous amounts of energy.

But let's be honest — this approach also serves Google's economic interests. Crawling less means fewer resources mobilized, lower costs. Ecology becomes a convenient argument here to justify an optimization that benefits Google on multiple fronts.

How does Google estimate update frequency?

Google analyzes the modification history of each URL: if a page changes daily, it will be crawled frequently. If it remains stable for months, visits will be spaced out.

The engine likely uses signals such as last modification dates and observed change patterns, and possibly other indicators such as user engagement or the freshness expected for a given type of content.
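Google has not published its scheduling formula. Purely as an illustration, here is a textbook-style sketch that assumes page changes arrive as a Poisson process: if a page was fetched n times at a fixed interval and a change was detected on X of those visits, the share of unchanged visits estimates exp(-rate × interval), which yields a change rate and, from it, a revisit interval. The function names, the 0.5 smoothing term, the 50% target probability and the clamping bounds are all assumptions, not Google parameters.

```python
import math


def estimate_change_rate(visits: int, changes_seen: int, interval_days: float) -> float:
    """Estimate changes per day, assuming changes follow a Poisson process.

    Under that assumption, P(no change between two visits) = exp(-rate * interval),
    so the share of visits that found the page unchanged estimates that probability.
    The +0.5 terms keep the estimate finite when every visit detected a change.
    """
    if visits <= 0 or interval_days <= 0:
        raise ValueError("need at least one visit and a positive interval")
    if not 0 <= changes_seen <= visits:
        raise ValueError("changes_seen must be between 0 and visits")
    unchanged_share = (visits - changes_seen + 0.5) / (visits + 0.5)
    return -math.log(unchanged_share) / interval_days


def next_revisit_days(rate_per_day: float, target_change_prob: float = 0.5,
                      min_days: float = 0.25, max_days: float = 60.0) -> float:
    """Interval after which a change has `target_change_prob` chance of having happened.

    Solves 1 - exp(-rate * t) = target for t, then clamps t to [min_days, max_days].
    """
    if rate_per_day <= 0:
        return max_days  # no change ever observed: revisit as rarely as allowed
    t = -math.log(1.0 - target_change_prob) / rate_per_day
    return min(max(t, min_days), max_days)


# Hypothetical history: fetched daily for 30 days, change detected on 3 visits
rate = estimate_change_rate(visits=30, changes_seen=3, interval_days=1.0)
print(f"{rate:.3f} changes/day, revisit in {next_revisit_days(rate):.1f} days")
```

The log form matters because several edits between two visits only show up as one detected change; under the Poisson assumption it accounts for those hidden changes, whereas a plain "changes / visits" ratio would not.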

- Refresh crawls = revisits of already-known URLs to detect changes

- The stated objective: reduce the environmental impact of crawling

- The method: adjust frequency according to actual update patterns

- Potential impact: static sites crawled less frequently

- No precise figures announced regarding frequency reduction

SEO Expert opinion

Is this statement consistent with what we observe in the field?

Yes and no. For several years now, we've actually noticed that Google spaces out its crawls on infrequently updated content. This isn't new — the official statement simply formalizes a practice already in place.

What's intriguing is the timing of this communication. Why publicly announce a technical optimization that is already partly in place? Either Google is intensifying this logic and preparing the ground, or the ecological argument serves to justify budgetary restrictions on crawling. [To verify]: what is the actual share of crawl reduction tied to this initiative?

What nuances is Gary Illyes not mentioning?

The statement remains vague about specific criteria. What is a "real update frequency"? Visible content modification? Minor DOM changes? Metadata updates?

And that's where it gets tricky. If Google detects that a site publishes rarely, it will crawl less, but how can that site signal that it has just published new content? This becomes a self-reinforcing loop: less crawling means slower detection of updates, which traps inactive sites trying to restart their editorial momentum.
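To make that loop concrete, here is a toy simulation. It assumes a hypothetical scheduler that doubles its revisit interval each time it finds nothing new and halves it when it finds new articles, bounded between 1 and 32 days. Nothing indicates that Googlebot uses this exact rule; the sketch only shows why a site that wakes up after a long dormant period can wait a while before its first new article is seen.

```python
def simulate(dormant_days: int, horizon_days: int,
             min_interval: int = 1, max_interval: int = 32) -> list[tuple[int, int]]:
    """Return (publish_day, detection_delay) for every article the toy crawler finds.

    The site publishes nothing for `dormant_days`, then one article per day until
    `horizon_days`. The hypothetical crawler doubles its revisit interval after a
    visit that finds nothing new and halves it after a visit that finds new articles.
    """
    publish_days = range(dormant_days, horizon_days)
    interval = min_interval
    last_crawl = -1  # nothing fetched before day 0
    day = 0
    found: list[tuple[int, int]] = []
    while day < horizon_days:
        # Every article published since the previous visit is discovered today.
        new = [p for p in publish_days if last_crawl < p <= day]
        found.extend((p, day - p) for p in new)
        if new:
            interval = max(min_interval, interval // 2)
        else:
            interval = min(max_interval, interval * 2)
        last_crawl = day
        day += interval
    return found


delays = simulate(dormant_days=180, horizon_days=240)
first_day, first_delay = delays[0]
print(f"first new article (day {first_day}) found after {first_delay} days")
print(f"worst delay: {max(d for _, d in delays)} days, latest: {delays[-1][1]} days")
```

With these made-up parameters the crawler has drifted to a 32-day interval during the dormant months, so the first article waits 10 days, a later one waits 15, and several productive visits pass before the interval shrinks back to daily. The exact figures depend entirely on the assumed doubling/halving rule.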

Another missing point: the impact on seasonal sites. Take an e-commerce site that heavily updates its product pages in November and December, then stays stable for the rest of the year: how does Google adapt to such an irregular pattern?

In what cases could this logic unfairly penalize sites?

Sites that migrate or restart their content strategy after a long period of stagnation risk suffering. Google will have "learned" that the site doesn't change, will space out its visits, and will take longer to discover new content.

Blogs and media with irregular publication schedules are also affected. If you publish one article per month but of exceptional quality, Google might underestimate your rhythm and crawl too infrequently to quickly capture your new publications.

Practical impact and recommendations

What should you do concretely to adapt?

First thing: maintain a consistent editorial cadence, even if modest. It's better to publish one piece of content per week regularly than 10 articles in January followed by radio silence until June. Google learns your patterns — give it exploitable regularity.
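One way to check whether your rhythm offers "exploitable regularity" is to look at the gaps between publication dates. The sketch below uses the coefficient of variation (standard deviation divided by mean) of those gaps: close to 0 means a steady cadence, well above 1 means bursts followed by silence. The metric and any threshold you apply to it are our own assumptions; Google has not said it measures anything like this.

```python
from datetime import date, timedelta
from statistics import mean, pstdev


def cadence_regularity(pub_dates: list[date]) -> tuple[float, float]:
    """Return (mean gap in days, coefficient of variation of the gaps)."""
    if len(pub_dates) < 3:
        raise ValueError("need at least three publication dates")
    ordered = sorted(pub_dates)
    gaps = [(later - earlier).days for earlier, later in zip(ordered, ordered[1:])]
    avg = mean(gaps)
    if avg == 0:
        raise ValueError("all publications fall on the same day")
    return avg, pstdev(gaps) / avg


# One article every Monday for eight weeks vs. ten articles in January, then one in June
weekly = [date(2022, 1, 3) + timedelta(weeks=i) for i in range(8)]
burst = [date(2022, 1, d) for d in range(1, 11)] + [date(2022, 6, 1)]
print("weekly:", cadence_regularity(weekly))
print("burst: ", cadence_regularity(burst))
```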

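Next, leverage all proactive notification channels. An XML sitemap with accurate <lastmod> values remains a weak but useful signal; Google's documentation states that it ignores <changefreq> and <priority>, so there is no point tuning those. IndexNow, supported by Microsoft (Bing) and Yandex, lets you notify participating search engines of an update immediately; Google does not officially support it, although it has said it is testing the protocol.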
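A minimal sketch of both channels, assuming you already keep each URL's date of last substantive modification. It writes a sitemap containing only <loc> and <lastmod>, and builds (without sending) an IndexNow POST request for recently changed URLs, following the JSON body described by the IndexNow protocol (host, key, keyLocation, urlList). The example.com URLs, the key value and the key file location are placeholders; the protocol also requires the key to be published as a text file on your host. Remember that IndexNow does not notify Google.

```python
import json
import urllib.request
import xml.etree.ElementTree as ET
from datetime import datetime, timedelta, timezone

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_sitemap(pages: dict[str, datetime]) -> str:
    """Return sitemap XML with <loc> and a W3C-datetime <lastmod> for each URL."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for loc, modified in sorted(pages.items()):
        url = ET.SubElement(urlset, "url")
        ET.SubElement(url, "loc").text = loc
        ET.SubElement(url, "lastmod").text = modified.isoformat(timespec="seconds")
    return ET.tostring(urlset, encoding="unicode")


def build_indexnow_request(host: str, key: str, key_location: str,
                           urls: list[str]) -> urllib.request.Request:
    """Build (but do not send) an IndexNow POST request for a batch of URLs."""
    body = json.dumps({
        "host": host,
        "key": key,
        "keyLocation": key_location,
        "urlList": urls,
    }).encode("utf-8")
    return urllib.request.Request(
        "https://api.indexnow.org/IndexNow",
        data=body,
        headers={"Content-Type": "application/json; charset=utf-8"},
        method="POST",
    )


now = datetime(2022, 1, 20, 9, 30, tzinfo=timezone.utc)
pages = {  # placeholder URLs with their last substantive modification time
    "https://www.example.com/": now - timedelta(days=40),
    "https://www.example.com/blog/new-post": now,
}
sitemap_xml = build_sitemap(pages)
print(sitemap_xml)

# Only URLs changed in the last 24 hours are pushed
recent = [u for u, modified in pages.items() if now - modified <= timedelta(days=1)]
req = build_indexnow_request("www.example.com", "your-indexnow-key",
                             "https://www.example.com/your-indexnow-key.txt", recent)
# urllib.request.urlopen(req) would actually send the notification
```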

Finally, monitor crawl activity in the Crawl Stats report of Google Search Console. If the number of crawl requests per day drops while you've increased your publishing pace, it's a sign that Google hasn't yet adjusted its crawl frequency to your new activity level.

What mistakes should you absolutely avoid?

Don't artificially modify your pages just to "convince" Google that they're changing: bumping publication dates without real edits, adding a dynamic widget that displays the current time, or making invisible micro-adjustments. Google can often detect these manipulations, and they can undermine the trust it places in your dates and content.
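The honest counterpart is to move <lastmod> only when the substantive content changes. A minimal sketch, assuming you can already extract the main text of a page (the extraction step is not shown, and the function names are hypothetical): hash a whitespace-normalized version of that text and update the date only when the hash differs from the stored one. A clock widget or a re-indented template then never touches <lastmod>.

```python
import hashlib
from datetime import datetime, timezone
from typing import Optional, Tuple


def content_fingerprint(main_text: str) -> str:
    """SHA-256 of the page's main text with all whitespace runs collapsed."""
    normalized = " ".join(main_text.split())
    return hashlib.sha256(normalized.encode("utf-8")).hexdigest()


def updated_lastmod(main_text: str, stored_hash: Optional[str],
                    stored_lastmod: datetime, now: datetime) -> Tuple[str, datetime]:
    """Return (new hash, lastmod); lastmod moves to `now` only when the text changed."""
    new_hash = content_fingerprint(main_text)
    if new_hash == stored_hash:
        return new_hash, stored_lastmod
    return new_hash, now


old = datetime(2021, 11, 2, tzinfo=timezone.utc)
now = datetime(2022, 1, 20, tzinfo=timezone.utc)
stored = content_fingerprint("Our guide to crawl budget.\n\nUpdated figures for 2021.")

# Same words, different layout: lastmod keeps its old value
print(updated_lastmod("Our guide to crawl budget. Updated figures for 2021.", stored, old, now)[1])
# Real edit: lastmod moves to now
print(updated_lastmod("Our guide to crawl budget. Updated figures for 2022.", stored, old, now)[1])
```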

Also avoid neglecting your evergreen content on the assumption that it doesn't evolve. A page that remains relevant should be periodically updated with fresh data, recent examples, and up-to-date links. Otherwise, it will gradually fall off Google's radar.

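Last trap: relying solely on organic crawling. If your site depends on fast indexing (news, e-commerce with volatile inventory), notify search engines actively instead of simply waiting for Googlebot to come by: update your sitemaps as soon as content changes, and keep in mind that Google's Indexing API is officially limited to job posting and livestream (BroadcastEvent) pages.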

How can you verify that your site remains well-crawled?

Analyze crawl statistics in Google Search Console. Look at the trend in the number of pages crawled per day over the last 90 days. A gradual drop while you're publishing regularly? That's a problem.
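To turn "a gradual drop" into a number, fit a straight line to the daily crawl counts and express the change it implies relative to the average. The sketch assumes you have copied the 90 daily values from the Crawl Stats report (or counted Googlebot hits per day in your logs) into a list; the data below is synthetic and the -20% alert threshold is an arbitrary example, not a Google figure.

```python
from statistics import mean


def crawl_trend(daily_counts: list[int]) -> float:
    """Relative change across the period according to a least-squares line.

    -0.25 means the fitted line ends 25% of the average daily volume lower
    than it starts.
    """
    n = len(daily_counts)
    if n < 2:
        raise ValueError("need at least two days of data")
    y_bar = mean(daily_counts)
    if y_bar == 0:
        raise ValueError("no crawl requests recorded")
    x_bar = (n - 1) / 2
    covariance = sum((x - x_bar) * (y - y_bar) for x, y in enumerate(daily_counts))
    variance = sum((x - x_bar) ** 2 for x in range(n))
    slope = covariance / variance  # change in daily requests per day
    return slope * (n - 1) / y_bar


# Synthetic example: 90 days, losing one crawl request per day (200 down to 111)
counts = [200 - day for day in range(90)]
trend = crawl_trend(counts)
print(f"{trend:+.0%} over the period")
if trend < -0.20:  # arbitrary alert threshold
    print("Crawl volume is trending down: compare it with your publishing pace")
```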

Also check the delay between publication and indexing. The URL Inspection tool shows when Googlebot last crawled each of your latest pages. If this delay keeps growing, it suggests that Google is spacing out its visits to your domain.

Cross-reference this data with your server logs. Logs show you exactly when and which pages Googlebot visits. Compare with your publication rhythm to detect misalignments. If Googlebot mostly visits old URLs and ignores your new content, adjust your internal linking strategy and sitemaps.
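A starting point for that comparison, assuming Apache/Nginx "combined" log format and a hypothetical mapping of your URL paths to publication times. It keeps requests whose user agent contains "Googlebot", records the first hit per path and reports the publication-to-first-crawl lag. User agents can be spoofed; Google documents verifying genuine Googlebot traffic with a reverse DNS lookup, which this sketch skips. The log lines below are made-up samples.

```python
import re
from datetime import datetime, timezone
from typing import Dict, Iterable, Optional

# Combined log format:
# ip ident user [time] "METHOD path PROTOCOL" status bytes "referer" "user-agent"
LINE_RE = re.compile(
    r'^\S+ \S+ \S+ \[(?P<time>[^\]]+)\] "(?:GET|HEAD) (?P<path>\S+) [^"]*" '
    r'\d{3} \S+ "[^"]*" "(?P<agent>[^"]*)"'
)


def first_googlebot_hits(lines: Iterable[str]) -> Dict[str, datetime]:
    """Map each path to the earliest time a user agent containing 'Googlebot' fetched it."""
    first: Dict[str, datetime] = {}
    for line in lines:
        m = LINE_RE.match(line)
        if m is None or "Googlebot" not in m["agent"]:
            continue  # unparsable line or another client
        when = datetime.strptime(m["time"], "%d/%b/%Y:%H:%M:%S %z")
        path = m["path"]
        if path not in first or when < first[path]:
            first[path] = when
    return first


def crawl_lag_hours(published: Dict[str, datetime],
                    first_hits: Dict[str, datetime]) -> Dict[str, Optional[float]]:
    """Hours from publication to the first Googlebot hit (None = not crawled yet).

    Publication times must be timezone-aware, like the parsed log timestamps.
    """
    return {
        path: None if path not in first_hits
        else (first_hits[path] - pub).total_seconds() / 3600
        for path, pub in published.items()
    }


bot = "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"
log_lines = [
    '203.0.113.5 - - [20/Jan/2022:08:05:00 +0000] "GET /blog/new-post HTTP/1.1" 200 5123 "-" "Mozilla/5.0 (Windows NT 10.0)"',
    f'66.249.66.1 - - [20/Jan/2022:10:15:32 +0000] "GET /blog/new-post HTTP/1.1" 200 5123 "-" "{bot}"',
    f'66.249.66.1 - - [21/Jan/2022:03:00:00 +0000] "GET /blog/new-post HTTP/1.1" 200 5123 "-" "{bot}"',
]
published = {
    "/blog/new-post": datetime(2022, 1, 20, 8, 0, tzinfo=timezone.utc),
    "/blog/another-post": datetime(2022, 1, 20, 9, 0, tzinfo=timezone.utc),
}
print(crawl_lag_hours(published, first_googlebot_hits(log_lines)))
```

Pages that stay at None for days while older URLs keep getting hits are the ones to push through internal links and the sitemap.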

- Establish a regular and predictable editorial cadence

- Properly fill <lastmod> tags in your XML sitemap

- Test IndexNow to proactively notify updates

- Monitor crawl statistics in Search Console

- Periodically refresh evergreen content with fresh data

- Analyze server logs to identify actual crawl patterns

- Never artificially manipulate dates or content to force crawling

- Optimize internal linking to promote new publications

❓ Frequently Asked Questions

Will Google crawl my site less often after this announcement?

Does this optimization affect the indexing of new pages?

How can I force Google to crawl more often despite this logic?

Are large sites favored by this logic?

Should I artificially modify my pages to maintain crawling?


Other SEO insights extracted from this same Google Search Central video · published on 20/01/2022
