Official statement

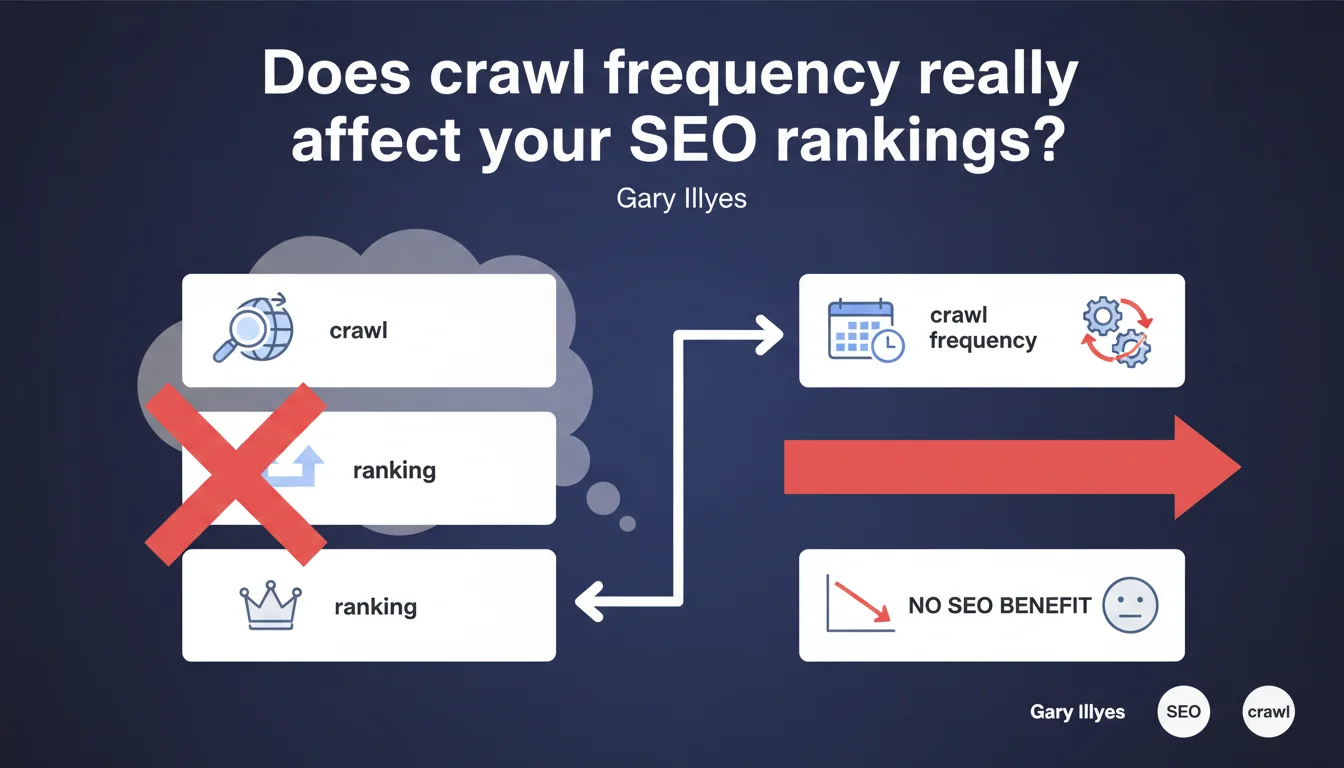

Google states that crawling a page more often does not improve its ranking position. Artificially increasing crawl frequency on static content is pointless — what matters is the actual quality and freshness of your content, not how often Googlebot visits.

What you need to understand

Why does this confusion persist among SEO professionals?

The idea that a frequently crawled page would rank better stems from a confusion between correlation and causation. High-authority sites are indeed crawled more often — but this is a consequence of their performance, not the cause.

Google adjusts the crawl budget based on signals like popularity, content freshness, and technical structure. A page that changes regularly will naturally attract Googlebot more frequently. Inverting the logic — forcing crawl activity without modifying the content — doesn't fool anyone.

What's the difference between crawling and indexing?

Crawling a page simply means fetching it. Indexing means analyzing it and storing it in Google's index; ranking is decided afterwards, at query time. A page can be crawled every single day without ever being indexed if it offers no differentiated value.

Ranking depends on hundreds of signals: semantic relevance, authority, user experience, behavioral signals. Crawl frequency is not one of them — it merely reflects the site's actual editorial activity.

When does crawl frequency actually become a concern?

For sites with thousands of dynamic pages (e-commerce, classifieds, news outlets), crawl budget is a limited resource. If Googlebot wastes its visits on useless URLs, it will miss strategically important pages.

But artificially increasing crawl on these pages solves nothing. Instead, you need to optimize budget allocation: block parasitic URLs, prioritize fresh content, clean up redirect chains.
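As a minimal sketch of what "blocking parasitic URLs" can look like, the snippet below checks a set of URLs against a robots.txt rule set before you deploy it. The rules, paths, and `example.com` URLs are hypothetical. Python's standard `urllib.robotparser` does plain prefix matching and does not implement the wildcard extensions Google supports, so treat this as a rough pre-check rather than a faithful simulation of Googlebot.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical rules: the blocked paths are illustrative assumptions.
ROBOTS_TXT = """\
User-agent: *
Disallow: /search
Disallow: /cart
Disallow: /tracking/
"""


def crawl_allowed(urls, robots_txt=ROBOTS_TXT, user_agent="Googlebot"):
    """Map each URL to True if the given rules allow crawling it."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return {url: parser.can_fetch(user_agent, url) for url in urls}


if __name__ == "__main__":
    sample = [
        "https://example.com/products/blue-shoes",
        "https://example.com/search?q=shoes",
        "https://example.com/tracking/click",
    ]
    for url, allowed in crawl_allowed(sample).items():
        print("allowed" if allowed else "blocked", url)
```

Running a check like this against your list of strategic URLs helps confirm that a new rule does not accidentally block pages you want crawled.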

- Frequent crawling is an effect of quality, not a cause of good rankings

- Forcing crawl on static content is pointless

- The real issue is crawl budget optimization for large sites

- Google automatically adjusts crawl based on actual editorial activity

- Crawling ≠ indexing ≠ ranking — three distinct processes

SEO Expert opinion

Is this statement consistent with real-world observations?

Yes, absolutely. We regularly observe sites with a minuscule crawl budget that rank excellently because their content is solid. Conversely, sites over-optimized to attract Googlebot (XML sitemaps updated hourly, constant pings) stagnate if the substance isn't there.

The nuance — which Gary Illyes doesn't elaborate on here — is that for certain industries (news, finance, weather), freshness is a ranking signal. But even then, it's not crawl frequency that matters: it's actual content updates. If you republish the identical page, even crawled 10 times daily, it gains nothing.

What outdated practices should you abandon?

Some SEO professionals obsessively submit URLs manually via Search Console multiple times per day, hoping for a boost. Others generate micro-variations of content (update dates, timestamps) just to simulate freshness. Google isn't fooled.

The classic trap: believing that adding "Updated on [DATE]" to an unchanged page will trick the algorithm. Google compares the actual content, not just date metadata. If nothing has changed, it knows.

When does crawl frequency actually create real problems?

On very large sites (500k+ pages), poorly managed crawl budget can block the indexing of new strategic pages. Googlebot spends its time on useless facets, infinitely paginated archives, tracking URLs — while actual product pages wait.

Here, the problem isn't "too slow crawl," it's poorly directed crawl. The solution involves robots.txt, canonical tags, tactical noindex, and consistent handling of URL parameters on the site itself (the URL Parameters tool was retired from Search Console in 2022). [To verify]: Google never precisely communicates crawl budget thresholds or dynamic adjustment criteria, so we're working blind on this point.
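To get a feel for how many crawled URLs are really the same page, one option is to normalize them before counting. The sketch below strips tracking parameters and sorts what remains. The parameter names in `TRACKING_PARAMS` are assumptions to adapt to your own site, and the function only illustrates the idea behind a canonical URL; it does not replace canonical tags.

```python
from collections import defaultdict
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit

# Hypothetical parameter names: adjust to what your own site emits.
TRACKING_PARAMS = {"utm_source", "utm_medium", "utm_campaign", "gclid", "sessionid"}


def canonical_url(url, ignored=TRACKING_PARAMS):
    """Drop ignored query parameters, sort the rest, and remove the fragment."""
    parts = urlsplit(url)
    kept = sorted(
        (key, value)
        for key, value in parse_qsl(parts.query, keep_blank_values=True)
        if key.lower() not in ignored
    )
    return urlunsplit((parts.scheme, parts.netloc, parts.path, urlencode(kept), ""))


def group_duplicates(urls):
    """Group crawled URLs by their normalized form."""
    groups = defaultdict(list)
    for url in urls:
        groups[canonical_url(url)].append(url)
    return dict(groups)
```

Groups with many members point to URL variants that consume crawl without adding indexable content.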

Practical impact and recommendations

What should you concretely do to optimize crawling?

Stop trying to "force" crawl. Focus on what generates natural, relevant crawling: publishing fresh, valuable content, earning backlinks to your new pages, maintaining clean technical architecture.

For large sites, the challenge is intelligently allocating available budget. Identify sections wasting crawl (server logs, Search Console) and block them. Prioritize high-value pages in your XML sitemap.
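One way to read "prioritize" here is to list only the URLs you actually want crawled, with a `lastmod` that reflects a real content change. Google's documentation states that it ignores the `<priority>` and `<changefreq>` values, so this sketch leaves them out. The page dictionaries and the `strategic` flag are hypothetical; in practice they would come from your CMS or product database.

```python
import xml.etree.ElementTree as ET

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_sitemap(pages):
    """Build sitemap XML from dicts with 'url', 'last_modified', 'strategic'."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for page in pages:
        if not page["strategic"]:
            continue  # leave low-value URLs out of the sitemap
        entry = ET.SubElement(urlset, "url")
        ET.SubElement(entry, "loc").text = page["url"]
        # last_modified should be the date of the last real content change
        ET.SubElement(entry, "lastmod").text = page["last_modified"]
    return ET.tostring(urlset, encoding="unicode")


if __name__ == "__main__":
    print(build_sitemap([
        {"url": "https://example.com/products/blue-shoes",
         "last_modified": "2022-01-20", "strategic": True},
        {"url": "https://example.com/search?q=shoes",
         "last_modified": "2022-01-20", "strategic": False},
    ]))
```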

What critical mistakes should you avoid?

Don't submit the same URLs repeatedly via Search Console. Requesting a recrawl several times for the same URL does not get it crawled any faster, and submissions are subject to a quota.

Avoid "fake updates": changing only the publication date or adding hollow paragraphs just to simulate freshness. Google analyzes actual semantic change; if there is none, the impact is none.
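A publisher-side guard can keep you honest here: only change the displayed date or the sitemap `lastmod` when the text has really changed. The sketch below is a rough word-level comparison and in no way a description of how Google measures change. The 10% `threshold` is an arbitrary assumption to tune for your own content.

```python
import difflib
import re


def changed_share(old_text, new_text):
    """Return the share of words that differ between two versions (0.0 to 1.0)."""
    old_words = re.findall(r"\w+", old_text.lower())
    new_words = re.findall(r"\w+", new_text.lower())
    matcher = difflib.SequenceMatcher(None, old_words, new_words, autojunk=False)
    return 1.0 - matcher.ratio()


def is_substantial_update(old_text, new_text, threshold=0.10):
    """True when enough words changed to justify a new modification date."""
    return changed_share(old_text, new_text) >= threshold
```

With a check like this in the publishing workflow, swapping only the "Updated on" stamp leaves the modification date untouched.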

How do you verify your site is using its crawl budget effectively?

Analyze your server logs: which pages does Googlebot visit? How frequently? Compare against your strategic pages. If Googlebot spends 80% of its time on useless URLs, you have an architecture problem.
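The log audit described above can be sketched in a few lines. The example assumes the Combined Log Format used by default in many Apache and Nginx setups; the regular expression and the section logic (first path segment) are assumptions to adapt. It also trusts the user-agent string, which can be spoofed, so a serious audit would confirm Googlebot hits with a reverse DNS lookup as well.

```python
import re
from collections import Counter

# Combined Log Format is assumed: adapt the pattern to your server's format.
LOG_PATTERN = re.compile(
    r'"(?:GET|HEAD) (?P<path>\S+) [^"]*" (?P<status>\d{3}) \S+ '
    r'"[^"]*" "(?P<agent>[^"]*)"'
)


def googlebot_hits_by_section(lines):
    """Count requests claiming to be Googlebot, per top-level path section."""
    counts = Counter()
    for line in lines:
        match = LOG_PATTERN.search(line)
        if not match or "Googlebot" not in match.group("agent"):
            continue
        path = match.group("path").split("?", 1)[0]
        section = "/" + path.strip("/").split("/", 1)[0]
        counts[section] += 1
    return counts


def section_shares(counts):
    """Convert raw counts into each section's share of Googlebot requests."""
    total = sum(counts.values())
    return {section: n / total for section, n in counts.items()} if total else {}
```

Feeding the function an open log file, for instance `googlebot_hits_by_section(open("access.log"))` with a hypothetical file name, gives the distribution to compare against your list of strategic sections.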

In the Search Console "Crawl stats" report, monitor total crawl requests and average response time. A sudden drop in crawling can signal a technical issue (slow server, 5xx errors), but artificially increasing crawl without new content will achieve nothing.

- Audit your server logs to identify wasted crawl

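- Block useless sections via robots.txt; reserve noindex for pages that must stay crawlable but out of the index, since Googlebot still has to fetch a page to see the tag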

- Prioritize strategic pages in your XML sitemap

- Publish genuinely new content to attract Googlebot naturally

- Optimize server response time to facilitate crawling

- Clean up redirect chains and 404 errors

- Monitor unusual crawl spikes (malicious bots)
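For the redirect cleanup in the checklist above, a small helper can list which redirects need more than one hop so you can point them straight at the final destination. The redirect map is a hypothetical export (source path to target path) from your server configuration or a crawl, and `max_hops` is an arbitrary safety limit.

```python
def resolve_redirects(redirects, start, max_hops=10):
    """Follow a redirect map from start; return (last_url, hops, is_loop)."""
    seen = [start]
    current = start
    # Stop when there is no further redirect or the hop limit is reached.
    while current in redirects and len(seen) <= max_hops:
        current = redirects[current]
        if current in seen:
            return current, len(seen), True
        seen.append(current)
    return current, len(seen) - 1, False


def chains_to_flatten(redirects):
    """Return {source: final} for every source that needs more than one hop."""
    result = {}
    for source in redirects:
        final, hops, is_loop = resolve_redirects(redirects, source)
        if hops > 1 and not is_loop:
            result[source] = final
    return result


if __name__ == "__main__":
    redirect_map = {"/a": "/b", "/b": "/c", "/d": "/c"}
    print(chains_to_flatten(redirect_map))
```

Loops are reported separately through the `is_loop` flag because they need a fix at the source rather than a shortcut.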

❓ Frequently Asked Questions

Does submitting a page via Search Console speed up its indexing?

Do you need to update a page regularly for it to rank better?

Does a frequently updated XML sitemap boost SEO?

How do I know whether my crawl budget is being used well?

Does the number of pages crawled per day indicate the site's SEO health?

Source: Google Search Central video, published on 20/01/2022.
