Official statement

Other statements from this video 8 ▾

- □ Que se passe-t-il réellement quand Google vous inflige une action manuelle ?

- □ Un site hors ligne peut-il vraiment détruire votre trafic de toutes les sources (et pas seulement Google) ?

- □ Les Core Updates provoquent-elles vraiment des changements progressifs plutôt que des chutes brutales ?

- □ Pourquoi analyser 16 mois de données Search Console lors d'une chute de trafic ?

- □ Comment analyser correctement une baisse de trafic SEO sans se tromper de diagnostic ?

- □ Faut-il vraiment analyser tous les onglets de Search Console pour diagnostiquer une baisse de trafic ?

- □ Pourquoi devriez-vous arrêter d'analyser votre trafic SEO de manière globale ?

- □ Pourquoi Google ajoute-t-il des annotations dans Search Console et comment les interpréter ?

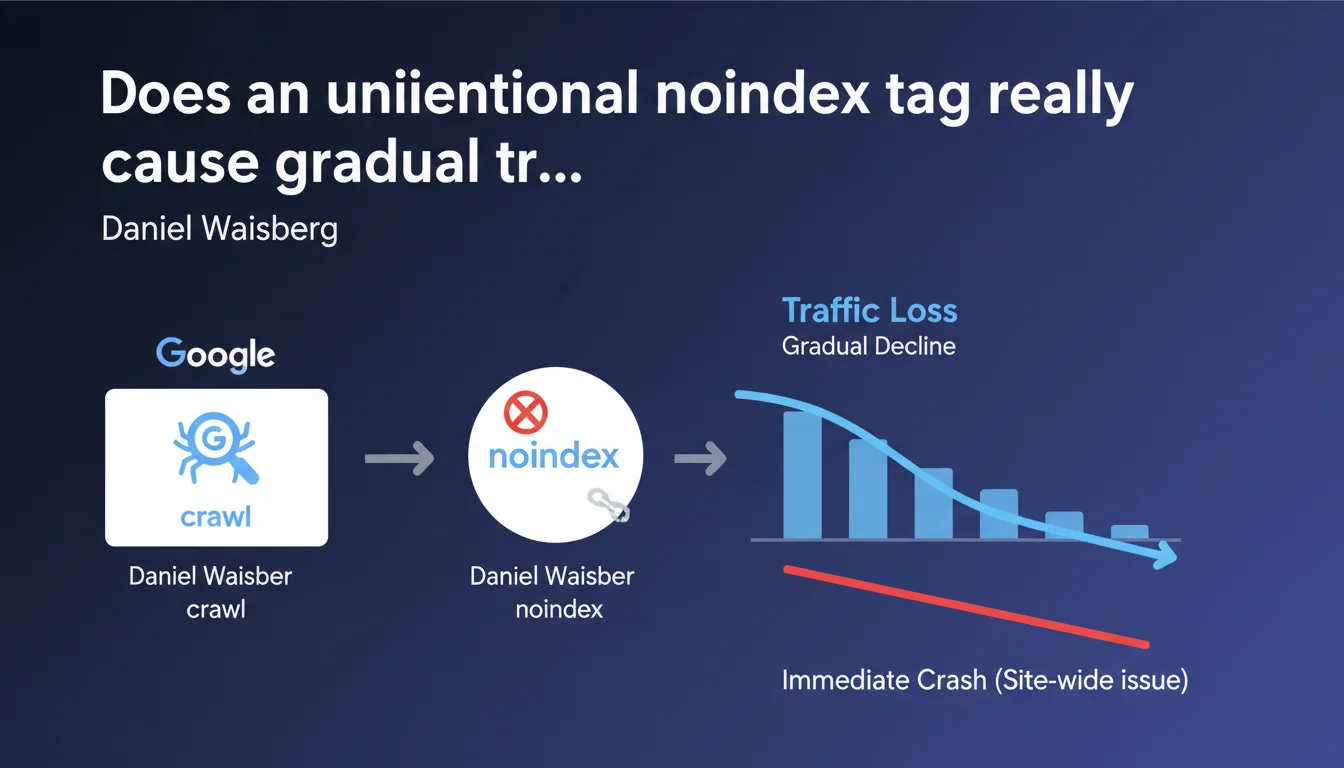

An unintentional noindex tag triggers a gradual traffic decline, slower than the drop caused by site-wide technical issues. Why? Google must first recrawl each affected page to detect the directive. This discovery delay postpones the visible impact, unlike a server-level block, which affects the entire site at once.

What you need to understand

Why does traffic loss happen gradually rather than immediately?

Unlike a robots.txt block or server error that affects the entire site instantly, a noindex tag operates at the individual page level. Google must recrawl each URL to detect the directive before removing it from the index.

If your site has 10,000 pages and Googlebot crawls 500 per day, it will take 20 days before all pages are checked. During this period, pages not yet recrawled remain indexed and generate traffic. The decline thus develops in waves, at the pace of crawling.
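
The arithmetic above can be sketched as a tiny simulation. It is a deliberately simplified model, not a description of how Googlebot schedules crawls: it assumes a constant crawl rate, that no page is crawled twice, and that a page leaves the index the same day its noindex is seen. The function name `simulate_noindex_decline` is made up for this illustration.

```python
def simulate_noindex_decline(total_pages: int, crawled_per_day: int) -> list:
    """Return how many pages are still indexed at the end of each day.

    Simplifying assumptions (not Google's real behavior): constant crawl
    rate, no page crawled twice, removal on the day of detection.
    """
    remaining = total_pages
    indexed_by_day = []
    while remaining > 0:
        # Each day, the crawled pages are detected as noindex and dropped.
        remaining = max(0, remaining - crawled_per_day)
        indexed_by_day.append(remaining)
    return indexed_by_day


timeline = simulate_noindex_decline(total_pages=10_000, crawled_per_day=500)
print(len(timeline))  # 20 (days until every page has been recrawled)
print(timeline[:3])   # [9500, 9000, 8500]
```

In practice the curve is less regular, because popular pages are recrawled sooner than deep ones, but the staircase shape is the point: traffic erodes at the pace of crawling.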

How is this behavior different from a global technical issue?

A server-level block (503, robots.txt, firewall) immediately prevents Googlebot from accessing all affected resources. The impact on traffic is abrupt and typically visible within 24-48 hours.

With a noindex tag, each page follows its own recrawl schedule. Frequently visited URLs disappear quickly, while orphaned or deep pages may remain indexed for weeks. This desynchronization creates a drawn-out decline curve.

What factors influence how quickly this decline happens?

Several variables determine how quickly Google will detect the noindex tag on your pages:

- Crawl frequency: a daily-crawled site will see impact within days, versus weeks for a low crawl budget site

- Page popularity: high-traffic URLs are recrawled more often and will disappear first

- Navigation depth: pages buried 5+ clicks from the homepage take longer to be recrawled

- Sitemap signal: submitting a sitemap with a recent lastmod can speed up recrawling

- Server cache/CDN: if Google crawls a cached version without the tag, delays lengthen
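
Whatever the crawl pace, the directive itself can sit in two places: a robots meta tag in the HTML or an `X-Robots-Tag` HTTP response header. The sketch below checks both using only the Python standard library. The names `RobotsMetaParser` and `has_noindex` are invented for this example, and the check is intentionally simple: it does not handle user-agent-scoped header values such as `googlebot: noindex`.

```python
from html.parser import HTMLParser
from typing import Optional


class RobotsMetaParser(HTMLParser):
    """Collect directives from <meta name="robots"> and <meta name="googlebot">."""

    def __init__(self):
        super().__init__()
        self.directives = []

    def handle_starttag(self, tag, attrs):
        if tag != "meta":
            return
        attr = dict(attrs)
        name = (attr.get("name") or "").lower()
        if name in ("robots", "googlebot"):
            content = attr.get("content") or ""
            self.directives.extend(d.strip().lower() for d in content.split(","))


def has_noindex(html: str, headers: Optional[dict] = None) -> bool:
    """Return True if the HTML or the X-Robots-Tag header carries noindex."""
    parser = RobotsMetaParser()
    parser.feed(html)
    directives = set(parser.directives)
    for key, value in (headers or {}).items():
        if key.lower() == "x-robots-tag":
            directives.update(d.strip().lower() for d in value.split(","))
    # "none" is shorthand for "noindex, nofollow".
    return "noindex" in directives or "none" in directives


print(has_noindex('<meta name="robots" content="noindex, follow">'))  # True
print(has_noindex("<p>Hello</p>", {"X-Robots-Tag": "noindex"}))       # True
```

Running this against both the origin response and the CDN response is one way to spot the cached-version mismatch mentioned in the last bullet.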

SEO Expert opinion

Is this statement consistent with real-world observations?

Absolutely. Post-migration audits regularly reveal this pattern: a developer adds a noindex tag to a template, and the client reports a traffic decline 2-3 weeks later, not immediately. That is the time it takes Google to recrawl a critical mass of the affected pages.

This delay creates a sneaky trap: by the time the problem becomes visible in Analytics, hundreds of pages are already deindexed. A rollback alone isn't enough, because you must wait for another full recrawl cycle to recover lost rankings. Meanwhile, some competitors have taken your spots.

What nuances should we add to this rule?

Daniel Waisberg speaks of a "slower" impact without providing precise timeframe benchmarks. In reality? On a 50,000-product e-commerce site, I've observed a decline spread over 6 weeks. On a 200-article blog crawled daily, the collapse was visible in 5 days. [To verify]: Google doesn't share concrete metrics on typical decline velocity.

Another point: the statement assumes "normal" crawling. If you request recrawls via Search Console (URL Inspection, itself limited to a few dozen URLs per day) or push aggressive sitemap updates, you can artificially accelerate deindexation. The reverse is also true: a site with an exhausted crawl budget may keep noindex pages in the index for months.

In what cases doesn't this rule apply strictly?

If the noindex tag is injected via client-side JavaScript, Google only sees it once the page has been rendered, so detection can lag further behind the crawl, and the directive may be missed entirely if the JS is not executed. Some third-party bots never render JS, so these pages can remain indexed in other search engines indefinitely.

Another exception: pages with very strong external authority (powerful backlinks) may sometimes stay in Google's cache for weeks after noindex detection, while the algorithm arbitrates between conflicting signals. But this is marginal.

Practical impact and recommendations

How do you quickly detect an unintentional noindex tag?

The reflex: monitor the "Indexed pages" metric in Google Search Console. A gradual decline with no obvious explanation (no penalty, no architecture change) often signals a stray noindex. Dig into the coverage reports to identify URLs listed under the reason "Excluded by noindex tag".

Automate detection with a weekly Screaming Frog crawl, ideally repeated right after each deployment, and an alert when the noindex page count spikes. Compare each crawl against a baseline crawl. If 500 new pages switch to noindex overnight after a deployment, you have a short window, roughly 48 hours, to fix the problem before Google discovers them en masse.
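
The baseline comparison can be sketched in a few lines. This assumes each crawl has already been exported and reduced to a mapping of URL to a noindex flag (how you export it depends on your crawler). The function names and the `max_new` threshold are illustrative choices, not settings from any particular tool.

```python
def newly_noindexed(baseline: dict, current: dict) -> list:
    """Return URLs that are noindex now but were not noindex in the baseline.

    Both arguments map URL -> bool (True means the page carries noindex).
    URLs absent from the baseline are treated as previously indexable.
    """
    return sorted(
        url
        for url, is_noindex in current.items()
        if is_noindex and not baseline.get(url, False)
    )


def should_alert(baseline: dict, current: dict, max_new: int = 50) -> bool:
    """Alert when more than max_new pages flipped to noindex (threshold is arbitrary)."""
    return len(newly_noindexed(baseline, current)) > max_new


baseline = {"https://example.com/a": False, "https://example.com/search": True}
current = {"https://example.com/a": True, "https://example.com/search": True}
print(newly_noindexed(baseline, current))          # ['https://example.com/a']
print(should_alert(baseline, current, max_new=0))  # True
```

Pages that were already noindex in the baseline, such as the internal search page here, are ignored, so only regressions trigger the alert.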

What do you do if damage is already done?

Remove the noindex tag immediately, but don't expect instant recovery. Google must recrawl the corrected URLs before it can reindex them. Accelerate the process by:

- Submitting a fresh XML sitemap with updated lastmod values to encourage priority recrawling

- Using the URL Inspection tool in Search Console on strategic pages (limited to dozens per day)

- Temporarily strengthening internal linking to affected pages to boost their crawl rate

- Avoiding crawl blocks via robots.txt or rate limiting during recovery phase
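
As an illustration of the first lever, here is a minimal sketch that builds a sitemap with `lastmod` values using the Python standard library. The `build_sitemap` helper and the example URLs are hypothetical, and the `lastmod` dates should reflect when the pages really changed (here, the day the noindex was removed) rather than being bumped artificially.

```python
import xml.etree.ElementTree as ET
from datetime import date

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_sitemap(entries: dict) -> str:
    """Build a sitemap XML string from a mapping of URL -> last modification date."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for loc, lastmod in sorted(entries.items()):
        url = ET.SubElement(urlset, "url")
        ET.SubElement(url, "loc").text = loc
        # lastmod uses the W3C date format, e.g. 2023-03-29
        ET.SubElement(url, "lastmod").text = lastmod.isoformat()
    body = ET.tostring(urlset, encoding="unicode")
    return '<?xml version="1.0" encoding="UTF-8"?>\n' + body


fixed_on = date(2023, 3, 29)  # example date: when the noindex tag was removed
sitemap_xml = build_sitemap({
    "https://example.com/category/shoes": fixed_on,
    "https://example.com/category/bags": fixed_on,
})
print(sitemap_xml)
```

Limiting the sitemap to the URLs that were actually affected keeps the signal focused on the pages that need recrawling.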

Let's be honest: even with these levers, expect 2 to 6 weeks to recover initial rankings. Some pages may never fully recover if competitors have filled the gap in the meantime.

What mistakes should you avoid to prevent this scenario?

Never deploy a global template change (header, footer) without verifying indexation directives. A poorly configured CMS can inject noindex on all child pages of a category without you noticing in the backend.

Test on a staging environment accessible to Googlebot (via Search Console) before the production launch. Crawl this environment with a crawler that emulates Googlebot to catch inconsistencies. And explicitly document which site sections should legitimately carry noindex (facets, archives, internal search pages).
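
Once the legitimate noindex sections are documented, that list can double as an automated pre-launch check. The sketch below flags any noindex URL that falls outside the documented sections. The path prefixes in `ALLOWED_NOINDEX_PREFIXES` are placeholders for whatever your own documentation specifies, and `unexpected_noindex` is a name made up for this example.

```python
from urllib.parse import urlparse

# Hypothetical documented sections that may legitimately carry noindex.
ALLOWED_NOINDEX_PREFIXES = ("/search", "/archive/", "/filter/")


def unexpected_noindex(urls_with_noindex: list) -> list:
    """Return noindex URLs whose path is outside the documented sections."""
    flagged = []
    for url in urls_with_noindex:
        path = urlparse(url).path or "/"
        # str.startswith accepts a tuple and matches any of the prefixes.
        if not path.startswith(ALLOWED_NOINDEX_PREFIXES):
            flagged.append(url)
    return flagged


staging_noindex = [
    "https://staging.example.com/search?q=boots",
    "https://staging.example.com/products/boots",
]
print(unexpected_noindex(staging_noindex))
# ['https://staging.example.com/products/boots']
```

A non-empty result before launch is a reason to block the deployment until someone confirms the directive is intentional.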



Source: Google Search Central video, published on 29/03/2023.
