Official statement

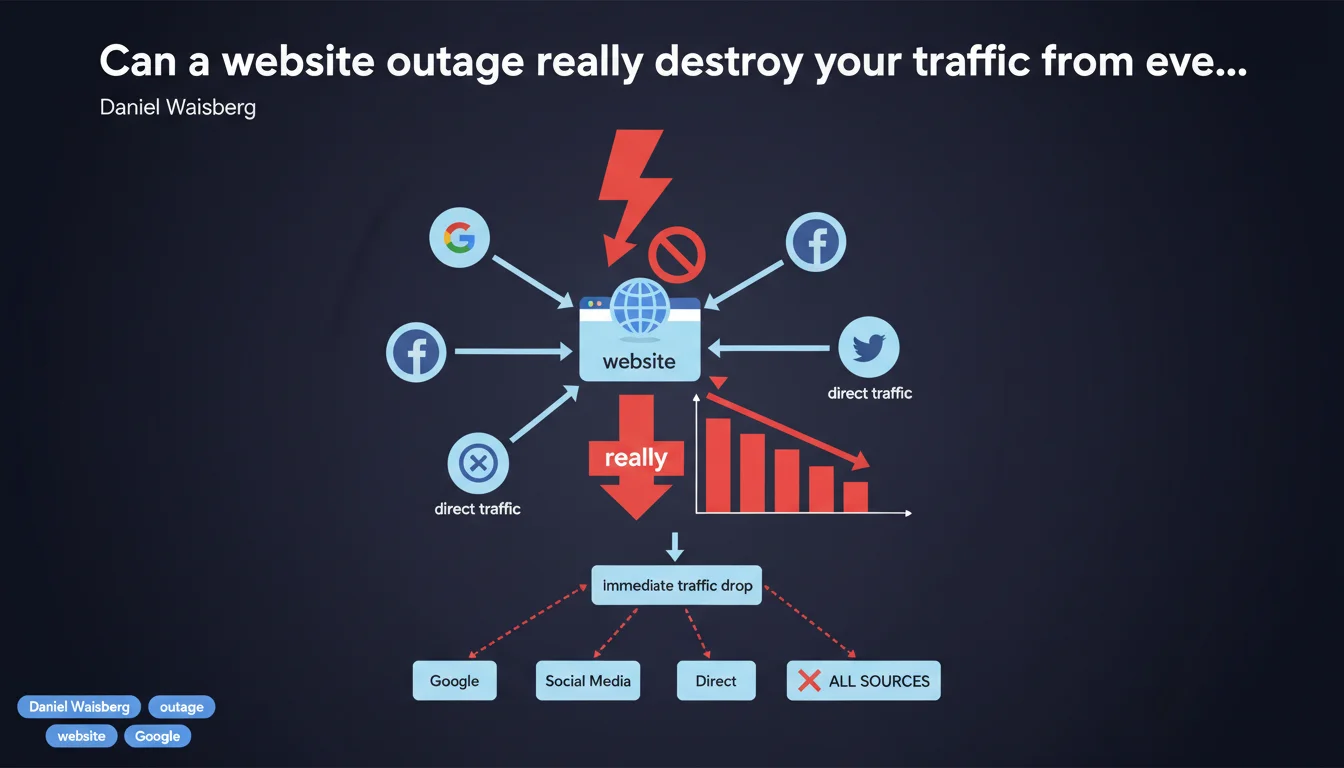

Major technical issues like site unavailability trigger a sharp drop in traffic from all sources combined, not just from Google. The impact is immediate and systemic: organic search, direct traffic, social, paid search… everything collapses simultaneously. Google emphasizes that these technical failures are not an algorithmic problem but a pure accessibility issue.

What you need to understand

Why does Google insist on "all traffic sources"?

Because SEOs tend to attribute everything to the algorithm. When a site crashes, many immediately search for a correlation with a Google update or a penalty. Classic diagnostic error.

If your server is down or your DNS is corrupted, visitors can't access the site — regardless of whether they come from Google, Facebook, a newsletter, or a direct link. This is an infrastructure failure, not a quality signal sent to a search engine.
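The "all traffic sources" test can be sketched in a few lines. This is a minimal illustration, not a method described by Google: the channel names, the `drop_scope` function, and the 50% threshold are all assumptions chosen for the example.

```python
from typing import Dict, Tuple


def drop_scope(
    before: Dict[str, int],
    after: Dict[str, int],
    threshold: float = 0.5,  # assumed cut-off: a 50% fall counts as a drop
) -> Tuple[str, Dict[str, float]]:
    """Compare sessions per channel across two periods of equal length."""
    changes: Dict[str, float] = {}
    for channel, baseline in before.items():
        if baseline <= 0:
            continue  # no baseline, so no relative change can be computed
        changes[channel] = (after.get(channel, 0) - baseline) / baseline

    dropped = [c for c, delta in changes.items() if delta <= -threshold]
    if changes and len(dropped) == len(changes):
        verdict = "all channels down: check infrastructure first"
    elif dropped == ["organic"]:
        verdict = "organic only: look at search-specific causes"
    elif dropped:
        verdict = "partial drop: inspect the affected channels"
    else:
        verdict = "no significant drop"
    return verdict, changes
```

Feeding it sessions per channel for the day before and the day of the incident gives a first hint on whether to open the server dashboard or Search Console.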

What's the difference between "sudden drop" and "gradual loss"?

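A sharp drop within a few hours almost always points to a purely technical problem: an expired SSL certificate, a server outage, a misconfigured redirect, or a server stuck returning 503. Traffic stops dead because nobody can reach the content. A robots.txt block can be just as abrupt, but it only shuts out crawlers, so it hits search traffic rather than every channel.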

Gradual erosion over several weeks suggests rather a quality degradation: outdated content, cannibalization, loss of backlinks, degraded Core Web Vitals. These two scenarios require radically different diagnoses.
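As a rough sketch of that distinction, the function below labels a series of daily sessions as sudden, gradual, or stable. The `classify_drop` name and both thresholds are assumptions for illustration and would need tuning against your own traffic patterns (weekends, seasonality).

```python
from typing import Sequence


def classify_drop(
    daily_sessions: Sequence[float],
    sudden_ratio: float = 0.5,  # assumed: losing 50% in one day is "sudden"
    total_ratio: float = 0.3,   # assumed: losing 30% over the window is "gradual"
) -> str:
    """Label a traffic series as 'sudden', 'gradual' or 'stable'."""
    if len(daily_sessions) < 2 or daily_sessions[0] <= 0:
        return "insufficient data"

    # Largest relative fall between two consecutive days.
    worst_day = 0.0
    for previous, current in zip(daily_sessions, daily_sessions[1:]):
        if previous > 0:
            worst_day = max(worst_day, (previous - current) / previous)

    # Relative fall between the first and the last day of the window.
    overall = (daily_sessions[0] - daily_sessions[-1]) / daily_sessions[0]

    if worst_day >= sudden_ratio:
        return "sudden"
    if overall >= total_ratio:
        return "gradual"
    return "stable"
```

A "sudden" label points toward the infrastructure checks, while "gradual" points toward content and quality analysis.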

Does Google detect these outages instantly?

No, and that's where things get tricky. Googlebot crawls each site on its own schedule, within the crawl budget allocated to it, not in real time. If your site goes down on a Sunday evening and Googlebot does not come back until Tuesday morning, Google keeps listing your indexed pages for 24-48 hours as if nothing had happened.

However, real users clicking on your SERP results hit an error immediately. Result: they bounce straight back to the results page, clicks dry up, and Google may eventually interpret these signals as a relevance problem. It's a vicious circle.

- Technical issue = multi-channel impact: SEO, SEM, direct, social, referral all affected simultaneously

- Sudden drop ≠ algorithmic problem: if everything collapses in a few hours, look at infrastructure first

- Google's cache won't save you: users see the error before the bot detects it

- Diagnose quickly: every hour of downtime = lost revenue + accumulated negative signals

SEO Expert opinion

Is this statement consistent with field observations?

Absolutely. I've seen sites lose 90% of their traffic in 3 hours after a botched DNS migration — and it hit both Google Ads campaigns and direct access equally. The problem is that Google provides no order of magnitude.

How much downtime triggers partial deindexation? 6 hours? 48 hours? A week? This remains to be verified, because Google deliberately stays vague on these thresholds. On high-authority sites, I've seen 12-hour outages without major consequences. On new domains, sometimes 4 hours is enough to lose rankings.

What are the blind spots in this statement?

Daniel Waisberg speaks of "site offline" as a binary case: it works or it doesn't. Except in reality, partial outages are more insidious. A CDN serving corrupted content intermittently. A server responding in 10 seconds instead of crashing outright. A page that loads but without CSS or JS.

These cases generate soft errors that Google doesn't necessarily categorize as "site offline." Result: traffic declines gradually without any monitoring tool raising the alarm. And that brings us back to the incorrect algorithmic diagnosis.
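One way to catch these in-between states is to classify each probe as healthy, degraded, or down instead of up or down. The sketch below is an assumption-heavy illustration: the `Probe` structure, the 2-second TTFB limit, and the idea of checking for required markers in the HTML (a stylesheet link, a script tag) are choices made for the example.

```python
from dataclasses import dataclass
from typing import Sequence


@dataclass
class Probe:
    status: int          # HTTP status code, 0 if the request failed outright
    ttfb_seconds: float  # time to first byte
    body: str            # response body as text


def classify_probe(
    probe: Probe,
    required_markers: Sequence[str],
    max_ttfb: float = 2.0,  # assumed limit, adjust to your own baseline
) -> str:
    """Return 'healthy', 'down', or 'degraded (...)' with the reasons."""
    if probe.status == 0 or probe.status >= 500:
        return "down"

    problems = []
    if probe.status != 200:
        problems.append(f"unexpected status {probe.status}")
    if probe.ttfb_seconds > max_ttfb:
        problems.append("slow response")
    missing = [marker for marker in required_markers if marker not in probe.body]
    if missing:
        problems.append("missing: " + ", ".join(missing))

    return "degraded (" + "; ".join(problems) + ")" if problems else "healthy"
```

A page that answers 200 in 10 seconds, or 200 without its stylesheet link, comes out as degraded rather than healthy, which is exactly the case a plain ping misses.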

In what cases doesn't this rule apply fully?

On large multilingual or multi-domain sites. If your .fr version goes down but .com stays accessible, Google may temporarily surface the alternate versions declared via hreflang instead. The overall impact exists, but it's cushioned.

Same for sites with a strong mobile app component: if the native app keeps working while the website is down, app traffic can partially offset the loss. But let's be honest — in 95% of cases, a site offline = pure disaster.

Practical impact and recommendations

How do you detect a technical issue before it destroys your traffic?

Implement multi-layer monitoring: don't settle for an HTTP ping every 5 minutes. Test full rendering (JS, CSS, images), measure TTFB (time to first byte), and raise an alert on SSL certificates at least 30 days before they expire.
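The certificate part of that monitoring can be sketched with Python's standard library alone. The function names and the 30-day alert window below are assumptions for the example; `fetch_not_after` needs network access and raises an SSL error if the certificate is already invalid, which is itself an alert condition.

```python
import socket
import ssl
import time
from typing import Optional


def days_until_expiry(not_after: str, now: Optional[float] = None) -> float:
    """Days left before a certificate's notAfter date (negative if expired)."""
    expires_at = ssl.cert_time_to_seconds(not_after)
    current = time.time() if now is None else now
    return (expires_at - current) / 86400


def fetch_not_after(hostname: str, port: int = 443, timeout: float = 5.0) -> str:
    """Read the notAfter field of the certificate served by hostname."""
    context = ssl.create_default_context()
    with socket.create_connection((hostname, port), timeout=timeout) as sock:
        with context.wrap_socket(sock, server_hostname=hostname) as tls:
            return tls.getpeercert()["notAfter"]


def needs_renewal(not_after: str, warn_days: int = 30) -> bool:
    """True when the certificate expires within the warning window."""
    return days_until_expiry(not_after) <= warn_days
```

A scheduled job could call `needs_renewal(fetch_not_after("www.example.com"))` once a day, with the hostname replaced by your own.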

Use tools that simulate Googlebot behavior, not just a regular browser's. A site can work perfectly fine for Chrome desktop and crash for a mobile user-agent or a crawl bot. Test from multiple geographic locations — a misconfigured CDN can block certain regions without your knowledge.
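A simple first step is to request the same URL with several user-agent strings and compare the status codes. This sketch only spoofs the user-agent header: it does not come from Google's IP ranges and does not render JavaScript, so it approximates Googlebot rather than reproducing it. The desktop and mobile strings and the function names are illustrative assumptions.

```python
import urllib.error
import urllib.request
from typing import Callable, Dict

USER_AGENTS = {
    "desktop": "Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 "
               "(KHTML, like Gecko) Chrome/120.0 Safari/537.36",
    "mobile": "Mozilla/5.0 (Linux; Android 10) AppleWebKit/537.36 "
              "(KHTML, like Gecko) Chrome/120.0 Mobile Safari/537.36",
    "googlebot-like": "Mozilla/5.0 (compatible; Googlebot/2.1; "
                      "+http://www.google.com/bot.html)",
}


def http_status(url: str, user_agent: str, timeout: float = 10.0) -> int:
    """Return the HTTP status for url, or 0 if no response was received."""
    request = urllib.request.Request(url, headers={"User-Agent": user_agent})
    try:
        with urllib.request.urlopen(request, timeout=timeout) as response:
            return response.status
    except urllib.error.HTTPError as err:
        return err.code  # the server answered, but with a 4xx/5xx status
    except (urllib.error.URLError, OSError):
        return 0  # DNS failure, refused connection, timeout, TLS error


def compare_user_agents(
    url: str, fetch: Callable[[str, str], int] = http_status
) -> Dict[str, int]:
    """Fetch url once per user agent and return the status codes by name."""
    return {name: fetch(url, agent) for name, agent in USER_AGENTS.items()}


def inconsistent(results: Dict[str, int]) -> bool:
    """True when user agents do not all receive the same status code."""
    return len(set(results.values())) > 1
```

Running the same comparison from servers in several regions extends it to the geographic check mentioned above.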

What do you do concretely when traffic crashes suddenly?

- Verify actual site accessibility: not from your local machine (browser cache misleads) but via a VPN or remote server

- Analyze server logs immediately: 5xx codes, timeouts, unusual request spikes

- Check robots.txt and meta robots: an accidental post-deployment change can block indexing in one line

- Test your SSL certificate: expiration, incomplete certificate chain, obsolete TLS configuration

- Inspect Google Search Console: coverage errors, spikes in 4xx/5xx errors, abnormal crawl drop

- Verify DNS resolution: worldwide propagation, correct A/AAAA records, and a TTL short enough that fixes propagate quickly

- Monitor Core Web Vitals in real time: a sudden degradation in LCP or CLS can signal invisible technical regression
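For the server log step in the checklist above, a short script is often enough to see whether 5xx errors are spiking. The sketch assumes access logs in the common or combined log format; the regular expression and function names are illustrative and will need adapting to other formats.

```python
import re
from collections import Counter
from typing import Iterable

# Matches the quoted request line followed by the 3-digit status code,
# as found in common/combined log format.
STATUS_RE = re.compile(r'"[A-Z]+ \S+ [^"]*" (\d{3}) ')


def status_summary(lines: Iterable[str]) -> Counter:
    """Count responses per status class ('2xx', '3xx', '4xx', '5xx')."""
    counts: Counter = Counter()
    for line in lines:
        match = STATUS_RE.search(line)
        if match:
            counts[match.group(1)[0] + "xx"] += 1
    return counts


def server_error_rate(lines: Iterable[str]) -> float:
    """Share of parsed requests that ended in a 5xx status."""
    counts = status_summary(lines)
    total = sum(counts.values())
    return counts["5xx"] / total if total else 0.0
```

Comparing the rate for the last hour against the same hour on a normal day shows quickly whether the drop lines up with server errors.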

How do you protect against these technical failures?

Automate critical tests in your deployment pipeline: no production release should pass without validating accessibility, SSL validity, and response time. An automatic rollback when thresholds are exceeded can save you.
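A release gate along these lines could look like the sketch below. It uses Python's `urllib.robotparser`, which implements the basic robots.txt rules but not every matching extension Google supports, so treat it as a safety net against an accidental blanket `Disallow: /` rather than an exact emulation. The function names and thresholds are assumptions.

```python
from typing import List
from urllib.robotparser import RobotFileParser


def googlebot_allowed(robots_txt: str, url: str) -> bool:
    """True if the given robots.txt content lets Googlebot fetch url."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch("Googlebot", url)


def release_gate(
    robots_txt: str,
    homepage_url: str,
    status: int,
    response_seconds: float,
    cert_days_left: float,
    max_seconds: float = 2.0,   # assumed response-time threshold
    min_cert_days: int = 30,    # assumed certificate safety margin
) -> List[str]:
    """Return the list of failed checks; an empty list means 'safe to ship'."""
    failures = []
    if status != 200:
        failures.append(f"homepage returned {status}")
    if response_seconds > max_seconds:
        failures.append(f"response took {response_seconds:.1f}s")
    if cert_days_left < min_cert_days:
        failures.append(f"certificate expires in {cert_days_left:.0f} days")
    if not googlebot_allowed(robots_txt, homepage_url):
        failures.append("robots.txt blocks Googlebot on the homepage")
    return failures
```

A pipeline step can run these checks against the staging or freshly deployed environment and trigger the rollback when the returned list is not empty.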

Document maintenance windows precisely and communicate them via HTTP 503 status code with Retry-After header. Google understands planned maintenance — provided you signal it properly. A random 500 error without context, though, triggers an alert.
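As an illustration of what that signal looks like on the wire, here is a minimal maintenance responder built on Python's standard `http.server`. In production this would normally be a web server or CDN rule rather than a Python process; the handler name, port, and one-hour `Retry-After` value are assumptions for the example.

```python
from http.server import BaseHTTPRequestHandler, HTTPServer

RETRY_AFTER_SECONDS = 3600  # assumed: maintenance expected to last an hour


class MaintenanceHandler(BaseHTTPRequestHandler):
    """Answer every GET/HEAD with 503 Service Unavailable and Retry-After."""

    def _respond(self, include_body: bool) -> None:
        body = b"Down for planned maintenance. Please retry later.\n"
        self.send_response(503)
        self.send_header("Retry-After", str(RETRY_AFTER_SECONDS))
        self.send_header("Content-Type", "text/plain; charset=utf-8")
        self.send_header("Content-Length", str(len(body)))
        self.end_headers()
        if include_body:
            self.wfile.write(body)

    def do_GET(self) -> None:
        self._respond(include_body=True)

    def do_HEAD(self) -> None:
        self._respond(include_body=False)

    def log_message(self, format, *args) -> None:
        pass  # keep the sketch quiet; real deployments should log requests


def make_server(port: int = 8080) -> HTTPServer:
    """Build the maintenance server; call serve_forever() to start it."""
    return HTTPServer(("127.0.0.1", port), MaintenanceHandler)
```

Calling `make_server().serve_forever()` starts it locally, and `curl -I http://127.0.0.1:8080/` should then show the 503 status with the `Retry-After` header.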

Major technical issues require robust monitoring infrastructure and bulletproof deployment processes. If your internal team lacks DevOps expertise or you don't have resources to set up 24/7 monitoring, calling in a specialized technical SEO agency can be critical. These optimizations touch on code, server infrastructure, CDNs, and DNS configurations — so many layers where a single error can trigger a catastrophic domino effect.

❓ Frequently Asked Questions

How long can a site stay offline before Google deindexes it?

Is an HTTP 503 status code preferable to a 500 during maintenance?

Does a partial outage affecting only some pages have the same impact as a site that is completely offline?

Does traffic come back immediately once the technical problem is fixed?

Do you need to report an outage to Google manually via Search Console?

Other SEO insights extracted from this same Google Search Central video · published on 29/03/2023
