Official statement

Other statements from this video (9)

- Is Search Console really the only reliable tool for checking how your site is crawled?

- Does Search Console really detect every indexing problem on your site?

- Do you really need to submit a sitemap through Search Console to optimize the indexing of your pages?

- How do you effectively check your structured data and rich results in Search Console?

- Is Search Console really the only reliable source for measuring your organic traffic?

- How can you use Search Console to diagnose a drop in organic traffic?

- Why should you cross-reference Search Console and Google Analytics to steer your SEO?

- Should you be wary of recent data in Search Console?

- How do you correctly filter Google organic traffic in Analytics?

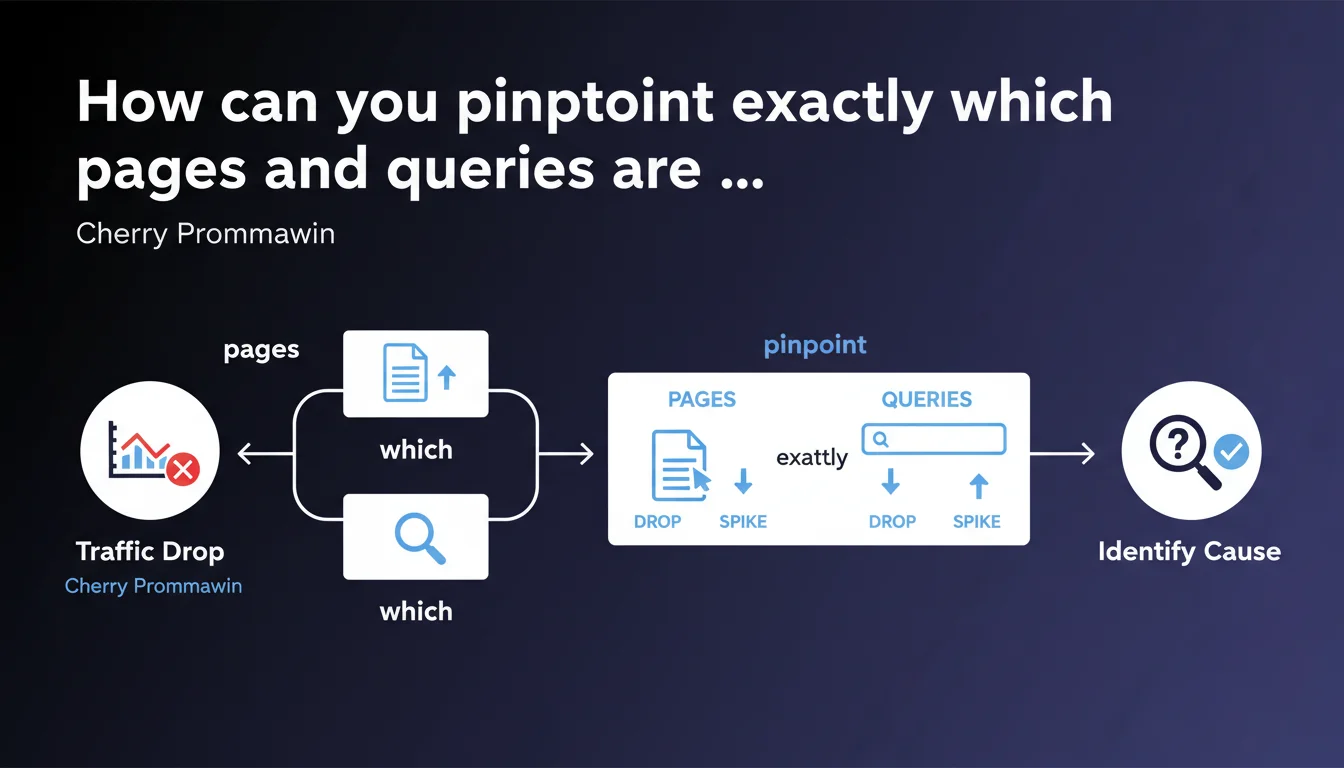

Google recommends not stopping at global metrics when traffic fluctuates. You must drill down to page-by-page and query-by-query analysis to identify the real cause of the change. A granular approach is essential for any serious diagnostic analysis.

What you need to understand

Why does Google insist on granular analysis?

This statement highlights an often-overlooked truth: global click variations mask highly heterogeneous realities. A 10% drop in overall traffic can hide an 80% plunge on a few strategic pages offset by gains elsewhere.
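To see how an aggregate can hide page-level swings, here is a minimal Python sketch with made-up click counts for three hypothetical URLs (`/pricing`, `/guide`, `/blog/a`). The numbers are illustrative only: the site as a whole loses 10%, while one page loses 80% and another gains.

```python
# Hypothetical clicks per URL for two comparable 28-day periods (illustrative numbers only).
before = {"/pricing": 1000, "/guide": 4000, "/blog/a": 5000}
after = {"/pricing": 200, "/guide": 3300, "/blog/a": 5500}


def pct_change(old: int, new: int) -> float:
    """Relative change in percent; 0.0 when there was no baseline."""
    return 0.0 if old == 0 else (new - old) * 100 / old


# Site-level view: one number for the whole domain.
site_change = pct_change(sum(before.values()), sum(after.values()))

# Page-level view: one number per URL seen in either period.
page_changes = {
    url: pct_change(before.get(url, 0), after.get(url, 0))
    for url in before.keys() | after.keys()
}

print(f"site: {site_change:+.1f}%")
for url, change in sorted(page_changes.items(), key=lambda kv: kv[1]):
    print(f"{url}: {change:+.1f}%")
```

Run as-is, it reports -10.0% for the site next to -80.0% for `/pricing`, -17.5% for `/guide` and +10.0% for `/blog/a`.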

Cherry Prommawin emphasizes that an aggregated observation such as "my site lost 15% of traffic" provides no actionable insight. What matters is knowing which URLs lost clicks, and on which queries.

What does it concretely mean to analyze "page by page"?

It means segmenting your Search Console or analytics data to identify variations not at the domain level, but at the level of each indexed URL.

Next, for each declining page, cross-reference it with the queries that were driving traffic to it. A page can lose clicks for three main reasons: it lost ranking positions on its historical queries, it lost a featured snippet, or another page on your site is cannibalizing it.
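One rough way to spot possible cannibalization is to check, for each query, whether the URL that gets the most clicks has switched to another page of your site between two periods. The sketch below assumes rows of `(query, page, clicks)`, such as those you get when grouping Search Console data by both query and page; the function names are hypothetical, and a switch is a signal to investigate, not proof.

```python
# One row per (query, page) pair: (query, page, clicks). Hypothetical input shape.
Row = tuple[str, str, int]


def top_page_per_query(rows: list[Row]) -> dict[str, str]:
    """Map each query to the URL that received the most clicks for it."""
    best: dict[str, tuple[str, int]] = {}
    for query, page, clicks in rows:
        if query not in best or clicks > best[query][1]:
            best[query] = (page, clicks)
    return {query: page for query, (page, _) in best.items()}


def possible_cannibalization(before: list[Row], after: list[Row]) -> dict[str, tuple[str, str]]:
    """Queries whose leading URL switched to another page of the same site."""
    old, new = top_page_per_query(before), top_page_per_query(after)
    return {q: (old[q], new[q]) for q in old.keys() & new.keys() if old[q] != new[q]}
```

A result such as `{"crm software": ("/crm", "/blog/crm-tips")}` would mean the blog post now captures most clicks on a query that used to go to `/crm`.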

What pitfalls should you avoid in this analysis?

First pitfall: focusing only on high-volume pages. A strategic page with 50 clicks/month can have disproportionate business impact compared to a page generating 5,000 unqualified clicks.
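To rank declining pages by business impact rather than raw traffic, you need some value per click. The sketch below assumes you can estimate one per URL (for example, conversion rate times average order value); `PageStats`, `value_per_click` and the URLs are hypothetical, and the figures mirror the 50-click versus 5,000-click example above.

```python
from dataclasses import dataclass


@dataclass
class PageStats:
    url: str
    clicks_before: int
    clicks_after: int
    value_per_click: float  # hypothetical estimate, e.g. conversion rate x average order value

    @property
    def lost_value(self) -> float:
        # Only losses count; a page that gained clicks has zero lost value.
        return max(self.clicks_before - self.clicks_after, 0) * self.value_per_click


def rank_by_business_impact(pages: list[PageStats]) -> list[PageStats]:
    """Highest estimated lost value first."""
    return sorted(pages, key=lambda p: p.lost_value, reverse=True)


pages = [
    PageStats("/blog/top-list", 5000, 4500, 0.05),
    PageStats("/demo-request", 50, 20, 40.0),
]
for page in rank_by_business_impact(pages):
    print(page.url, round(page.lost_value, 2))
```

Here the demo page loses only 30 clicks but ranks first, because each of those clicks is assumed to be worth far more than a click on the list article.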

Second pitfall: ignoring seasonal or sector-specific variations. A drop in clicks on certain queries may be tied to natural shifts in demand, not an algorithmic penalty.
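A simple seasonality check compares this year's before/after ratio with the same calendar windows one year earlier. This is a heuristic sketch: the 0.10 tolerance is an arbitrary assumption, and each tuple is assumed to hold click totals `(before, after)` for identical date ranges.

```python
import math


def period_ratio(before: int, after: int) -> float:
    """after / before, or NaN when there is no baseline."""
    return after / before if before else math.nan


def looks_seasonal(this_year: tuple[int, int], last_year: tuple[int, int], tolerance: float = 0.10) -> bool:
    """True when this year's before->after change mirrors the same windows a year earlier."""
    now, reference = period_ratio(*this_year), period_ratio(*last_year)
    if math.isnan(now) or math.isnan(reference):
        return False  # no baseline, so no verdict
    return abs(now - reference) <= tolerance
```

For instance, a query going from 1,000 to 600 clicks looks seasonal if it went from 900 to 560 over the same weeks last year, but not if it stayed near 900.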

- Segment analysis by URL and query, never globally

- Identify strategic pages with high business impact, not just those with high traffic

- Cross-reference data: rankings, CTR, impressions, featured snippets

- Contextualize variations with Core Updates, seasonal trends, and competitive actions

- Don't confuse correlation with causation: a drop may have several simultaneous causes

SEO Expert opinion

Is this recommendation aligned with real-world practices?

Yes, absolutely. All serious SEO audits start with a granular analysis of traffic losses and gains. It is the foundation of any diagnosis, but Google isn't saying anything new here.

What's missing from this statement is methodology. How do you prioritize analysis when a site has 10,000 indexed pages? What statistically significant variation thresholds should you use? Google remains deliberately vague on the "how to" aspect, as it often does.

What nuances should be added?

Page-by-page analysis is most relevant for mid-sized sites (500 to 10,000 pages). Below that range, the few pages involved can simply be reviewed one by one. Above it, you should first segment by page type (categories, product pages, editorial content) before drilling down to URL level.
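Segmenting by page type can be as simple as matching URL paths against patterns. The patterns below (`/p/`, `/c/`, `/blog/`) are hypothetical; replace them with your own site's structure before comparing segment totals across two periods.

```python
import re
from collections import Counter
from urllib.parse import urlsplit

# Hypothetical URL patterns; adapt them to your own site structure.
SEGMENTS = [
    ("product", re.compile(r"^/p/")),
    ("category", re.compile(r"^/c/")),
    ("editorial", re.compile(r"^/blog/")),
]


def segment_of(url: str) -> str:
    """Return the first segment whose pattern matches the URL path."""
    path = urlsplit(url).path
    for name, pattern in SEGMENTS:
        if pattern.match(path):
            return name
    return "other"


def segment_deltas(before: dict[str, int], after: dict[str, int]) -> dict[str, int]:
    """Click change per segment between two periods."""
    totals_before: Counter = Counter()
    totals_after: Counter = Counter()
    for url, clicks in before.items():
        totals_before[segment_of(url)] += clicks
    for url, clicks in after.items():
        totals_after[segment_of(url)] += clicks
    return {seg: totals_after[seg] - totals_before[seg] for seg in totals_before.keys() | totals_after.keys()}
```

Only once a segment stands out (for example, product pages losing while editorial content holds) is it worth drilling down to individual URLs inside it.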

Another nuance: this approach assumes that Search Console provides reliable data. Yet we know query data is sampled beyond a certain volume, and some queries are hidden for privacy reasons. [To verify]: Google doesn't clarify how to handle these limitations in your analysis.
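Since anonymized queries do not appear as query rows, the clicks of a page's visible queries can add up to less than the page's total. A minimal sketch, assuming you have both figures for a page, estimates the share of its clicks that you cannot attribute to any visible query; `hidden_query_share` is a hypothetical helper.

```python
def hidden_query_share(page_total_clicks: int, query_rows: list[tuple[str, int]]) -> float:
    """Share of a page's clicks not explained by its visible (query, clicks) rows."""
    if page_total_clicks == 0:
        return 0.0
    visible = sum(clicks for _, clicks in query_rows)
    # Clamp at zero in case rounding or date mismatches make visible exceed the total.
    return max(page_total_clicks - visible, 0) / page_total_clicks
```

When this share is high for a page, any query-level conclusion about that page rests on a partial picture.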

When is this method insufficient?

When the traffic drop is caused by a global technical issue (crawl budget exhaustion, server problems, poor JavaScript handling), page-by-page analysis can give the false impression of many separate causes when there is actually a single root cause.
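A quick way to tell a site-wide problem from an isolated one is to count how many pages fell at the same time. The sketch below is a heuristic with assumed thresholds (a 20% drop per page, 80% of pages for "site-wide"); it only tells you where to look, not what the cause is.

```python
def drop_scope(
    before: dict[str, int],
    after: dict[str, int],
    drop_pct: float = 20.0,
    sitewide_share: float = 0.8,
) -> str:
    """Classify a decline as 'none', 'isolated', 'partial' or 'site-wide'."""
    pages = [url for url, clicks in before.items() if clicks > 0]
    falling = [
        url for url in pages
        if (after.get(url, 0) - before[url]) * 100 / before[url] <= -drop_pct
    ]
    if not falling:
        return "none"
    if len(falling) == 1:
        return "isolated"  # look for cannibalization, deindexing, outdated content
    if len(falling) / len(pages) >= sitewide_share:
        return "site-wide"  # look for a single technical or algorithmic cause first
    return "partial"
```

A "site-wide" verdict suggests checking server health, crawling and rendering before analyzing pages one by one.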

Similarly, during a major Core Update, granularity can obscure what matters most: if Google has downgraded its overall perception of your thematic authority, all your pages will suffer, and individual analysis becomes secondary to overhauling your editorial strategy.

Practical impact and recommendations

What tools should you use for this analysis?

Google Search Console remains the foundation: use the Performance tab, filter by page, then cross-reference with queries. Export data across multiple periods (before/after the drop) to compare.
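If you work from exports rather than the API, comparing two periods means joining two CSV files on the URL. The default column names below (`Top pages`, `Clicks`) are an assumption about the Performance report export; check the header of your own file and pass the right names. The same data is also available programmatically through the Search Console API's `searchanalytics.query` method with the `page` and `query` dimensions.

```python
import csv
import io


def load_clicks(csv_text: str, key_col: str = "Top pages", clicks_col: str = "Clicks") -> dict[str, int]:
    """Map each URL to its clicks. The default column names are assumptions."""
    reader = csv.DictReader(io.StringIO(csv_text))
    # Strip thousands separators in case the export contains them.
    return {row[key_col]: int(row[clicks_col].replace(",", "")) for row in reader}


def compare_exports(before_csv: str, after_csv: str) -> list[tuple[str, int, int, int]]:
    """(url, clicks_before, clicks_after, delta) rows, biggest losses first."""
    before, after = load_clicks(before_csv), load_clicks(after_csv)
    rows = []
    for url in before.keys() | after.keys():
        old, new = before.get(url, 0), after.get(url, 0)
        rows.append((url, old, new, new - old))
    return sorted(rows, key=lambda r: r[3])
```

Reading each file with `open(path, newline="", encoding="utf-8").read()` and passing the text in keeps the comparison logic independent of where the data comes from.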

Among third-party tools, Ahrefs, SEMrush, and Sistrix let you track ranking variations query by query with a longer history. For large sites, tools like Oncrawl or Botify let you cross-reference server logs with GSC data and identify crawl patterns that affect performance.

What concrete methodology should you apply?

Start by identifying pages that lost more than 30% of clicks over a 28-day period (to smooth weekly variations). Rank them by absolute click loss, not just percentage.
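The 30% rule and the ranking by absolute loss translate directly into a filter and a sort. A minimal sketch, assuming per-URL click totals for the two 28-day periods; `declining_pages` is a hypothetical helper.

```python
def declining_pages(
    before: dict[str, int], after: dict[str, int], min_drop_pct: float = 30.0
) -> list[tuple[str, int, float]]:
    """(url, clicks_lost, drop_pct) for pages that lost more than min_drop_pct, biggest absolute loss first."""
    flagged = []
    for url, old in before.items():
        if old == 0:
            continue  # no baseline to compare against
        new = after.get(url, 0)
        drop_pct = (old - new) * 100 / old
        if drop_pct > min_drop_pct:
            flagged.append((url, old - new, drop_pct))
    # Sort on the absolute loss, not the percentage.
    return sorted(flagged, key=lambda r: r[1], reverse=True)
```

For example, `declining_pages({"/a": 1000, "/b": 40, "/c": 500}, {"/a": 600, "/b": 10, "/c": 450})` puts `/a` (400 clicks, 40%) ahead of `/b` (30 clicks, 75%) and ignores `/c` (10%).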

For each page, list the 5 to 10 main queries that were driving traffic. Compare their current position with their historical ranking. If the position hasn't moved but CTR dropped, the SERP itself has probably changed: a competitor may have won a featured snippet or improved its own snippets.
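The position-versus-CTR check can be sketched per query. This assumes you have clicks, impressions and average position for each of a page's queries in both periods; the tolerances (1 position, a 20% relative CTR drop) are arbitrary assumptions, and a "ctr loss" verdict only suggests a SERP change, which you still need to confirm by looking at the results page.

```python
from dataclasses import dataclass


@dataclass
class QueryStats:
    clicks: int
    impressions: int
    position: float  # average position: lower is better

    @property
    def ctr(self) -> float:
        return self.clicks / self.impressions if self.impressions else 0.0


def top_queries(history: dict[str, QueryStats], n: int = 10) -> list[str]:
    """The n queries that drove the most clicks in the reference period."""
    return sorted(history, key=lambda q: history[q].clicks, reverse=True)[:n]


def diagnose(before: QueryStats, after: QueryStats, pos_tolerance: float = 1.0, ctr_drop: float = 0.2) -> str:
    # A higher position number means a worse ranking, so a positive delta is a loss.
    if after.position - before.position > pos_tolerance:
        return "position loss"
    # Stable position but clearly lower CTR: something probably changed on the SERP.
    if before.ctr > 0 and (before.ctr - after.ctr) / before.ctr > ctr_drop:
        return "ctr loss at stable position"
    return "stable"
```

In practice you would call `top_queries` on the historical period for one page, then run `diagnose` on each of those queries against the current period.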

Then broaden the analysis: is there a common pattern among declining pages? Same content type, same search intent, same semantic segment? If yes, it's probably a targeted algorithmic signal (Core Update, Helpful Content).
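To look for a common pattern, compare the share of declining pages in each segment. A minimal sketch, assuming each page has already been labelled with a segment (content type, search intent or topic) and a declined/not-declined flag; `decline_rate_by_segment` is a hypothetical helper.

```python
from collections import defaultdict


def decline_rate_by_segment(pages: list[tuple[str, bool]]) -> dict[str, float]:
    """pages: (segment, declined) pairs. Returns the share of declining pages per segment."""
    counts: dict[str, list[int]] = defaultdict(lambda: [0, 0])  # segment -> [declined, total]
    for segment, declined in pages:
        counts[segment][1] += 1
        if declined:
            counts[segment][0] += 1
    return {segment: declined / total for segment, (declined, total) in counts.items()}
```

A segment whose rate sits well above the others is the first place to look for a targeted algorithmic signal.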

How do you avoid interpretation errors?

Never draw conclusions from too short a period. One week of data is noise. Wait at least 4 weeks to confirm a trend, unless there's a sudden, massive drop.
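The waiting rule can be expressed as a function over daily clicks: compare the last 28 days with the 28 days before, while still allowing an early "sudden drop" verdict. The thresholds (a 10% change, a 7-day average below half of the preceding 28-day average) are assumptions for illustration, not Google guidance.

```python
def trend_verdict(
    daily_clicks: list[int],
    window: int = 28,
    min_change_pct: float = 10.0,
    sudden_drop: float = 0.5,
) -> str:
    """Classify recent clicks; all thresholds are hypothetical defaults."""
    # Exception: the last 7 days fell well below the preceding window's daily average.
    if len(daily_clicks) >= window + 7:
        baseline = sum(daily_clicks[-window - 7:-7]) / window
        recent = sum(daily_clicks[-7:]) / 7
        if baseline > 0 and recent < baseline * (1 - sudden_drop):
            return "sudden drop"
    # Otherwise, require two full windows before calling a trend.
    if len(daily_clicks) < 2 * window:
        return "insufficient data"
    previous = sum(daily_clicks[-2 * window:-window])
    current = sum(daily_clicks[-window:])
    if previous == 0:
        return "insufficient data"
    change = (current - previous) * 100 / previous
    if change <= -min_change_pct:
        return "confirmed decline"
    if change >= min_change_pct:
        return "confirmed increase"
    return "no clear trend"
```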

Also avoid over-reacting to an isolated drop. If only one page loses traffic while the rest of your site is stable, it's rarely a global algorithmic issue — look instead for cannibalization, accidental deindexing, or content obsolescence.

- Export GSC data over at least 90 days before/after the variation

- Segment by page type (categories, products, blog, etc.)

- Identify the 20% of pages generating 80% of traffic and prioritize their analysis

- Cross-reference rankings, CTR, impressions for each impacted query

- Manually check the SERPs for strategic queries (featured snippets, PAA, etc.)

- Compare with direct competitors on the same queries

- Look for common patterns among declining pages (topic, length, structure, backlinks)

- Document the analysis in a structured spreadsheet to track improvement progress
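The 20/80 prioritization from this checklist can be sketched as: sort pages by clicks and keep the smallest set that reaches 80% of the total. This assumes per-URL click totals; `pareto_pages` is a hypothetical helper.

```python
def pareto_pages(clicks: dict[str, int], share: float = 0.8) -> list[str]:
    """Smallest set of top pages whose clicks reach `share` of the total."""
    total = sum(clicks.values())
    selected: list[str] = []
    running = 0
    for url, n in sorted(clicks.items(), key=lambda kv: kv[1], reverse=True):
        if total == 0 or running >= share * total:
            break
        selected.append(url)
        running += n
    return selected
```

On a real site the selected set is rarely exactly 20% of pages; the point is to start the analysis with the URLs that carry most of the traffic.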

Frequently Asked Questions

Should you analyze every page on the site when traffic drops?

How can you tell whether a drop in clicks comes from a lost position or a lower CTR?

Which analysis period should you use to identify the affected pages?

What should you do if several pages cannibalize each other on the same queries?

Is Search Console data enough for this analysis?

From the same video (9)

Other SEO insights extracted from this same Google Search Central video · published on 06/02/2025
