Official statement


Google directs webmasters to the official Search Console documentation to diagnose traffic drops. It is a minimalist message that points to existing resources rather than providing direct answers. The real question remains: are these tools sufficient given the complexity of the algorithms?

What you need to understand

Why does Google point to documentation instead of giving direct answers?

This statement illustrates Google's typical strategy: delegating traffic problem resolution to webmasters themselves. Instead of explaining the potential causes of a drop, Cherry Prommawin points to the general Search Console documentation.

It's consistent with Google's "self-service" approach. Official resources compile the classic scenarios: indexing issues, manual penalties, technical errors, and changes in queries. But they remain standardized diagnostic frameworks, not answers tailored to your site.

What exactly does Google expect you to do with Search Console?

Search Console offers several key reports to identify weak signals: query performance, indexed vs non-indexed pages, Core Web Vitals, coverage, errors. By cross-referencing this data, you can isolate the impacted segments.

The problem? These reports describe symptoms, rarely root causes. A drop can stem from an unannounced algorithm update, a competitor who improved their content, or a shift in user intent. Search Console will never tell you: "Your content became outdated" or "Your competitor took your spot".

Is this approach sufficient against complex fluctuations?

For simple cases (technical error, manual penalty), yes. For drops linked to algorithm updates or perceived quality degradation, it falls short. Official documentation doesn't bridge the gap between technical symptoms and competitive reality.

Google counts on webmasters reading the data methodically, but field audits often require cross-referencing multiple sources: Analytics, server logs, third-party tools, semantic analysis, and backlinks. Search Console is a starting point, not a complete solution.

- Official documentation: foundational resource for diagnosing technical issues and penalties

- Search Console reports: performance, indexation, Core Web Vitals, coverage

- Limitations: doesn't reveal deep algorithmic causes or competitive dynamics

- Self-service approach: Google transfers diagnostic burden to webmasters

SEO Expert opinion

Is this statement consistent with field practices?

Yes and no. Search Console remains the reference tool for spotting obvious technical issues: non-indexed pages, server errors, manual penalties. On these points, the documentation is useful and well-structured.

But let's be honest: the majority of traffic drops I see aren't caused by a technical error detectable in Search Console. They come from a decline in content relevance, a competitor who optimized better, or an undocumented algorithm update. In these cases, Search Console shows you the symptom (a drop in clicks) but tells you nothing about the cause.

What nuances should be applied to this recommendation?

Google assumes the webmaster has the skills to interpret the data. In reality, a coverage report showing "500 excluded pages" can have ten different causes. An experienced practitioner knows how to cross-reference server logs, check crawl budget, examine canonical tags. A novice will get lost.
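One of the checks mentioned above, examining canonical tags, can be sketched in a few lines. This is only an illustration using Python's standard `html.parser`; the `CanonicalFinder` class, the `find_canonicals` helper, and the sample HTML are hypothetical names and data, and the sketch assumes you already have the page's HTML as a string.

```python
from html.parser import HTMLParser


class CanonicalFinder(HTMLParser):
    """Collects the href of every <link rel="canonical"> tag in a document."""

    def __init__(self):
        super().__init__()
        self.canonicals = []

    def handle_starttag(self, tag, attrs):
        if tag != "link":
            return
        attr = dict(attrs)
        # rel may hold several space-separated tokens, e.g. rel="canonical nofollow"
        rel_tokens = (attr.get("rel") or "").lower().split()
        if "canonical" in rel_tokens and attr.get("href"):
            self.canonicals.append(attr["href"])


def find_canonicals(html):
    """Return the list of canonical URLs declared in the given HTML string."""
    finder = CanonicalFinder()
    finder.feed(html)
    return finder.canonicals


# Hypothetical sample page
sample_html = (
    '<html><head><link rel="canonical" href="https://example.com/guide">'
    "</head><body></body></html>"
)
found = find_canonicals(sample_html)
missing = find_canonicals("<html><head></head><body></body></html>")
print(found, missing)
```

An empty list, or a list with more than one entry, is the kind of signal worth comparing against the excluded pages in the report. It does not by itself explain the exclusion.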

Additionally, official documentation is intentionally generic. It will never say: "Your content became thin after the latest Helpful Content update". [To verify]: Google never provides personalized diagnosis through these public tools. It's up to the practitioner to piece the puzzle together.

In what cases is this approach insufficient?

It falls short when the drop is tied to fuzzy algorithmic factors: E-E-A-T evolution, a shift in search intent, or increased competition. Search Console doesn't measure editorial quality, semantic density, or authority as perceived by the algorithm.

In these contexts, a complete SEO audit requires going beyond Search Console: competitor analysis, SERP study, internal linking audit, content strategy review, backlink profile. Google's official tools only cover a fraction of the spectrum.

Practical impact and recommendations

What should you do concretely after a traffic drop?

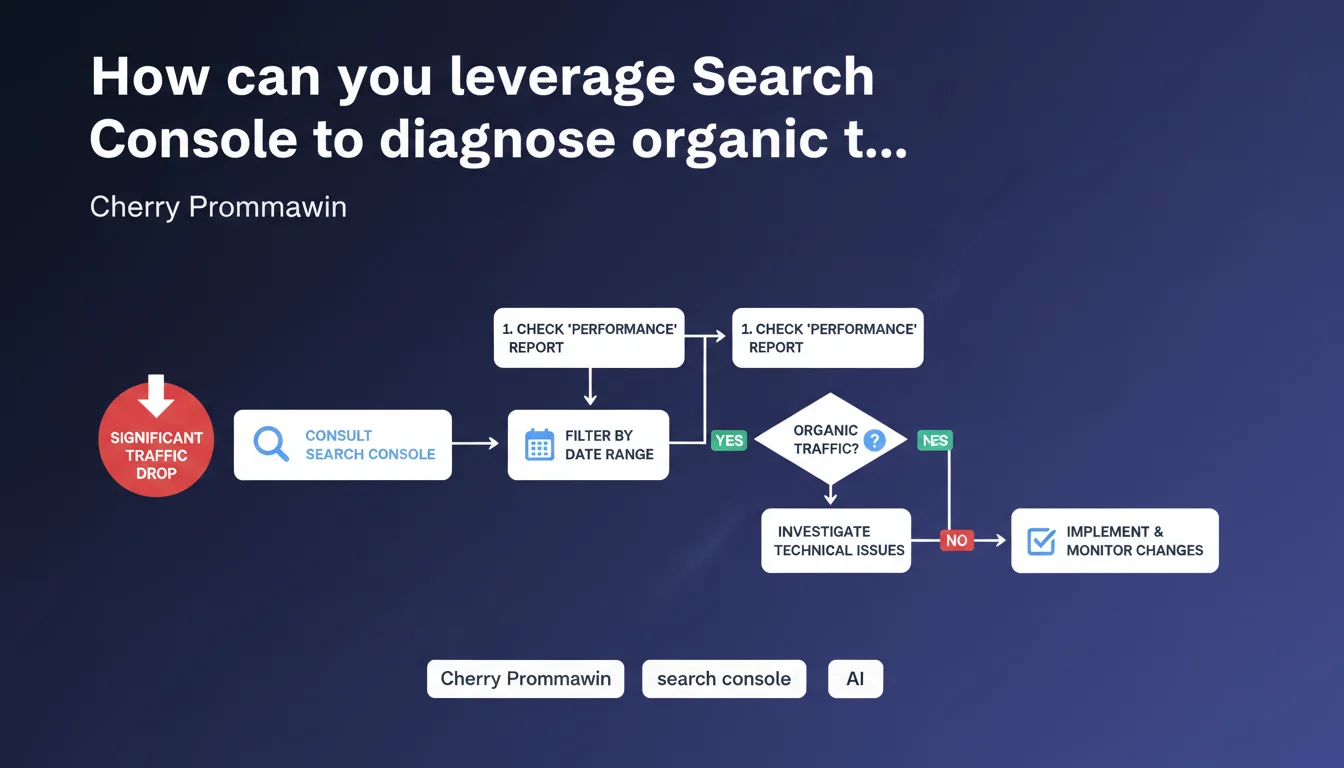

Start by cross-referencing Search Console and Google Analytics data. Identify the impacted pages and queries, then verify indexation, technical errors, and Core Web Vitals. That's the bare minimum.
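As a minimal sketch of the "identify impacted pages" step, assume you have exported per-page click counts for two comparable periods. The dictionaries, the `rank_click_drops` name, and the sample URLs below are hypothetical; this is not a Search Console API, just one way to rank pages by lost clicks.

```python
def rank_click_drops(before, after, min_before=1):
    """Return (page, clicks_before, clicks_after, delta) tuples, biggest loss first.

    `before` and `after` map a page URL to its clicks over two periods of
    equal length. Pages absent from `after` are treated as having 0 clicks.
    """
    rows = []
    for page, clicks_before in before.items():
        if clicks_before < min_before:
            continue  # ignore pages that had no meaningful traffic to lose
        clicks_after = after.get(page, 0)
        rows.append((page, clicks_before, clicks_after, clicks_after - clicks_before))
    rows.sort(key=lambda row: row[3])  # most negative delta first
    return rows


# Hypothetical sample data for two periods
before = {"/guide": 1200, "/pricing": 300, "/blog/a": 50}
after = {"/guide": 700, "/pricing": 310}

drops = rank_click_drops(before, after)
for page, clicks_before, clicks_after, delta in drops:
    print(f"{page}: {clicks_before} -> {clicks_after} ({delta:+d})")
```

The same comparison can be run per query instead of per page. Sorting by absolute loss rather than percentage keeps low-traffic pages from dominating the list.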

Then expand your analysis: examine server logs for crawl variations, compare average position before/after, scrutinize SERP changes (new competitors, featured snippets). If no technical error appears, it's often a content or quality signal problem.
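To illustrate the server log check, here is a rough sketch that counts requests per day whose user agent mentions Googlebot. It assumes access logs in the common "combined" format with a `[dd/Mon/yyyy:hh:mm:ss +zone]` timestamp; the `googlebot_hits_per_day` name and the sample lines are hypothetical. Matching on the user-agent string alone is an approximation, since it can be spoofed.

```python
import re
from collections import Counter

# Matches the date part of a timestamp such as [01/Feb/2025:10:00:00 +0000]
DATE_RE = re.compile(r"\[(\d{2})/([A-Za-z]{3})/(\d{4}):")
MONTHS = {
    name: index
    for index, name in enumerate(
        "Jan Feb Mar Apr May Jun Jul Aug Sep Oct Nov Dec".split(), start=1
    )
}


def googlebot_hits_per_day(lines):
    """Count log lines per ISO date whose text mentions Googlebot."""
    hits = Counter()
    for line in lines:
        if "Googlebot" not in line:
            continue  # assumption: user-agent substring match only, no IP verification
        match = DATE_RE.search(line)
        if not match:
            continue
        day, month, year = match.groups()
        if month not in MONTHS:
            continue
        hits[f"{year}-{MONTHS[month]:02d}-{day}"] += 1
    return dict(sorted(hits.items()))


# Hypothetical sample log lines
sample_log = [
    '66.249.66.1 - - [01/Feb/2025:10:00:00 +0000] "GET /guide HTTP/1.1" 200 512 "-" "Mozilla/5.0 (compatible; Googlebot/2.1)"',
    '66.249.66.1 - - [01/Feb/2025:10:05:00 +0000] "GET /pricing HTTP/1.1" 200 512 "-" "Mozilla/5.0 (compatible; Googlebot/2.1)"',
    '203.0.113.5 - - [01/Feb/2025:10:06:00 +0000] "GET / HTTP/1.1" 200 512 "-" "Mozilla/5.0"',
    '66.249.66.1 - - [02/Feb/2025:09:00:00 +0000] "GET /guide HTTP/1.1" 200 512 "-" "Mozilla/5.0 (compatible; Googlebot/2.1)"',
]

crawl_counts = googlebot_hits_per_day(sample_log)
print(crawl_counts)
```

Comparing these daily counts before and after the drop shows whether crawl activity changed at the same time as traffic.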

What errors should you avoid during diagnosis?

Don't rush into corrective actions before understanding the cause. Too many webmasters modify their content or change their internal link structure without knowing what triggered the drop. Result: they make things worse.

Also avoid relying solely on Search Console reports. Data can be incomplete (sampling) or updated with a delay. Always cross-reference with other sources: position tracking tools, Analytics, and server logs.

How to structure a post-drop audit?

Adopt a methodical approach: technical checks first (indexation, server errors), then content (relevance, quality, E-E-A-T), then backlinks and off-page signals. Document each step to avoid going in circles.

If the diagnosis reveals complex issues (a site architecture overhaul, massive rewriting, or a recalibration of the editorial strategy), internal resources can quickly be overwhelmed. This type of project requires a global vision and field experience. In these cases, relying on a specialized SEO agency saves time and avoids false leads, especially when traffic is critical to the business.

- Cross-reference Search Console, Analytics and server logs

- Identify impacted pages and queries

- Verify indexation, technical errors, Core Web Vitals

- Analyze SERP: new competitors, featured snippets, intent evolution

- Examine content quality: relevance, E-E-A-T, semantic density

- Audit backlink profile and internal linking

- Document each diagnostic step

- Don't act before identifying root cause

❓ Frequently Asked Questions

Is Search Console enough to diagnose every traffic drop?

Which Search Console reports are key for analyzing a drop?

How can you tell whether a drop is technical or editorial?

What should you do if no technical error appears in Search Console?

How long does it take to diagnose a traffic drop?

Other SEO insights extracted from this same Google Search Central video · published on 06/02/2025