Official statement

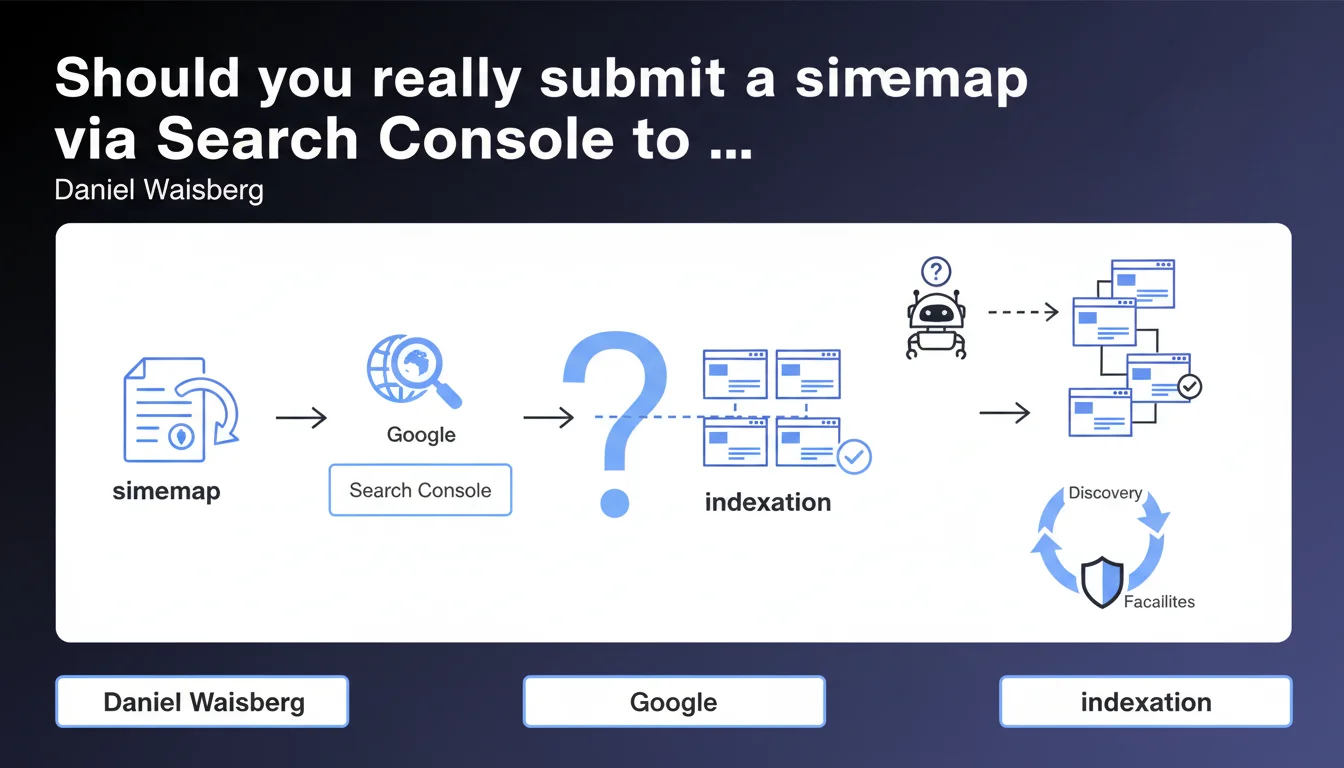

Google confirms that submitting a sitemap via Search Console facilitates the discovery of your pages, and that monitoring its processing status remains an essential way to identify indexation issues. However, the statement does not clarify the actual priority given to sitemaps or their impact on crawl budget, two points that remain unclear.

What you need to understand

This statement from Daniel Waisberg reminds us of a well-known but often underutilized feature: sitemap submission and monitoring in Search Console. The stakes? Facilitating the work of Google's robots by providing them with a structured map of your important URLs.

Yet the tone used remains cautious: submitting a sitemap "facilitates" discovery without guaranteeing indexation. This nuance is crucial for understanding what Google truly expects from this practice.

What is the actual purpose of a sitemap in the crawl process?

A sitemap is an XML file listing the URLs you want Google to crawl. Each entry can carry optional metadata: last modification date (lastmod), update frequency (changefreq), and relative priority (priority).
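To make the file format concrete, here is a minimal Python sketch that builds such a file with the standard library. The function name `build_sitemap` and the example.com URLs are illustrative assumptions, not part of Google's statement; only the `urlset`, `url`, `loc`, and `lastmod` elements come from the Sitemaps 0.9 protocol.

```python
import xml.etree.ElementTree as ET

# Namespace defined by the Sitemaps 0.9 protocol.
SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_sitemap(entries):
    """Return sitemap XML from (url, lastmod) pairs; lastmod may be None.

    Hypothetical helper for illustration only.
    """
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for loc, lastmod in entries:
        url = ET.SubElement(urlset, "url")
        ET.SubElement(url, "loc").text = loc
        if lastmod:
            # Optional metadata: date of last modification (YYYY-MM-DD).
            ET.SubElement(url, "lastmod").text = lastmod
    body = ET.tostring(urlset, encoding="unicode")
    return '<?xml version="1.0" encoding="UTF-8"?>\n' + body


# Placeholder URLs and date, chosen only for the example.
sitemap_xml = build_sitemap([
    ("https://www.example.com/", "2025-02-06"),
    ("https://www.example.com/blog/new-post", None),
])
print(sitemap_xml)
```

The sketch deliberately leaves out changefreq and priority and emits lastmod only when a value is available.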

In theory, Google can discover your pages through internal linking and external backlinks. But for large websites, orphaned pages, or frequently published content, the sitemap becomes a useful complementary signal. It doesn't replace good internal linking; it enhances it.

What does monitoring the processing status in Search Console mean?

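The Sitemaps report in Search Console lists each submitted sitemap with its type, submission date, last read date, processing status (success, errors, or fetch failure), and the number of discovered URLs. From there you can open the indexing report filtered on that sitemap to see which URLs are indexed and which are affected by errors (404s, redirects, etc.).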

This monitoring allows you to quickly identify technical bottlenecks: pages blocked by robots.txt, server errors, misconfigured canonical tags. It's a technical health barometer, not a guarantee of priority indexation.
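One of these bottlenecks, URLs blocked by robots.txt, can be checked locally before submission. The sketch below uses Python's standard `urllib.robotparser`; the helper name `blocked_urls`, the robots.txt rules, and the URLs are assumptions made up for the example. Note that this parser does simple prefix matching and may not reproduce Googlebot's handling of wildcards, so treat the result as a first pass.

```python
from urllib.robotparser import RobotFileParser


def blocked_urls(robots_txt, urls, user_agent="Googlebot"):
    """Return the URLs that the given robots.txt text disallows.

    Hypothetical helper; robots_txt is the file content as a string.
    """
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [u for u in urls if not parser.can_fetch(user_agent, u)]


# Example rules and URLs, invented for illustration.
robots_txt = """\
User-agent: *
Disallow: /cart/
Disallow: /search
"""

sitemap_urls = [
    "https://www.example.com/products/blue-shirt",
    "https://www.example.com/cart/checkout",
    "https://www.example.com/search?q=shirt",
]

print(blocked_urls(robots_txt, sitemap_urls))
```

Any URL returned by this check should be either removed from the sitemap or unblocked in robots.txt, depending on whether you want it indexed.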

- A sitemap is not an indexation directive: it suggests, it doesn't command

- Google ignores the "priority" and "changefreq" values; "lastmod" is used only when it is consistently accurate

- Monitoring reveals technical issues that would otherwise stay invisible

- A well-constructed sitemap reduces discovery time, especially for new content

- Large sites should segment their sitemaps (limit of 50,000 URLs or 50 MB uncompressed per file)

SEO Expert opinion

Is this statement consistent with practices observed in the field?

Yes, broadly speaking. Audits show that sites submitting a well-structured sitemap see their new pages discovered faster, particularly news publishers that publish continuously. But the statement remains vague on a crucial point: the actual weight of the sitemap in crawl budget allocation.

In plain terms: submitting 100,000 URLs via a sitemap guarantees nothing if your internal linking is poor or your domain authority is weak. Google crawls what it deems useful, not what you declare as priority. [To verify]: the differential impact of a sitemap on indexation rates between a 500-page site and a 500,000-page site has never been officially documented.

What nuances should be added to this recommendation?

First nuance: a poorly designed sitemap can pollute your signals. If you include noindex pages, 301 redirects, or unnecessary parameterized URLs, you create noise and disperse bot attention.

Second nuance: submitting a sitemap doesn't magically accelerate indexation of pages already accessible through good internal linking. It's a safety net, not an isolated performance lever. Sites that observed significant gains after submission often had pre-existing structural issues (orphaned pages, excessive depth).

In what cases does this rule not apply fully?

On very small sites (fewer than 50 pages), a sitemap remains useful but not critical if internal linking is well-designed. Google will discover your pages naturally. However, for e-commerce sites, media outlets, or marketplaces, the sitemap becomes essential: volume and publication frequency make natural discovery insufficient.

Practical impact and recommendations

What should you concretely do to optimize sitemap submission?

First reflex: generate a clean XML sitemap without URLs blocked by robots.txt, without redirects, and without noindex pages. Use a reliable generator, a WordPress plugin such as Yoast or Rank Math, or a custom script for complex architectures.
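For the custom-script route, the cleaning rule can be expressed as a simple filter over crawl data. This is a sketch under stated assumptions: the `CrawledPage` record and its fields are hypothetical and stand in for whatever your crawler or CMS export actually provides.

```python
from dataclasses import dataclass


@dataclass
class CrawledPage:
    """Hypothetical crawl record; field names are assumptions."""
    url: str
    status: int              # HTTP status code returned by the page
    noindex: bool            # True if the page carries a noindex directive
    blocked_by_robots: bool  # True if robots.txt disallows the URL


def sitemap_eligible(pages):
    """Keep only URLs that answer 200, are indexable, and are crawlable."""
    return [
        p.url
        for p in pages
        if p.status == 200 and not p.noindex and not p.blocked_by_robots
    ]


# Invented sample data for the example.
pages = [
    CrawledPage("https://www.example.com/", 200, False, False),
    CrawledPage("https://www.example.com/old-page", 301, False, False),
    CrawledPage("https://www.example.com/thank-you", 200, True, False),
    CrawledPage("https://www.example.com/cart/", 200, False, True),
]

print(sitemap_eligible(pages))
```

Requiring a 200 status excludes redirects as well as 404 and 500 responses in one condition.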

Next, submit it in Search Console via the Sitemaps tab. If your site exceeds 50,000 URLs, create a sitemap index pointing to multiple sitemaps segmented by content type (products, articles, categories, etc.). This facilitates error diagnosis by section.
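The segmentation described above can be sketched in a few lines of Python. The helper names (`chunk`, `build_index`), the file naming scheme, and the example.com URLs are illustrative assumptions; the `sitemapindex`, `sitemap`, and `loc` elements and the 50,000-URL limit come from the Sitemaps protocol.

```python
import xml.etree.ElementTree as ET

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"
MAX_URLS_PER_FILE = 50_000  # per-file limit set by the Sitemaps protocol


def chunk(urls, size=MAX_URLS_PER_FILE):
    """Split a list of URLs into consecutive slices of at most `size` items."""
    return [urls[i:i + size] for i in range(0, len(urls), size)]


def build_index(sitemap_locations):
    """Return sitemap index XML pointing at each child sitemap URL."""
    index = ET.Element("sitemapindex", xmlns=SITEMAP_NS)
    for loc in sitemap_locations:
        entry = ET.SubElement(index, "sitemap")
        ET.SubElement(entry, "loc").text = loc
    body = ET.tostring(index, encoding="unicode")
    return '<?xml version="1.0" encoding="UTF-8"?>\n' + body


# Invented example: 120,000 product URLs need three child sitemaps.
product_urls = [f"https://www.example.com/products/{i}" for i in range(120_000)]
parts = chunk(product_urls)
locations = [
    f"https://www.example.com/sitemaps/products-{n}.xml"
    for n in range(1, len(parts) + 1)
]
index_xml = build_index(locations)
print(len(parts), "child sitemaps")
```

Running the same split separately for each content type (products, articles, categories) gives one group of child sitemaps per section, which keeps errors attributable to a section.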

How to effectively monitor the processing status?

Check the Sitemaps report in Search Console at least once a week. Identify gaps between URLs submitted and URLs indexed: a significant delta often signals a technical issue or content Google deems of low relevance.
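The gap itself is a set difference. The sketch below assumes you already have two lists of URLs, for instance the URLs in your sitemap and an export of indexed URLs; how you obtain them is outside the scope of this example, and the function name `indexing_gap` is hypothetical.

```python
def indexing_gap(submitted, indexed):
    """Return (missing_urls, indexed_share) for the submitted URLs.

    missing_urls: submitted URLs absent from the indexed list, sorted.
    indexed_share: fraction of submitted URLs found in the indexed list.
    """
    submitted_set = set(submitted)
    missing = sorted(submitted_set - set(indexed))
    if not submitted_set:
        return missing, 0.0
    share = (len(submitted_set) - len(missing)) / len(submitted_set)
    return missing, share


# Invented sample data for the example.
submitted = [
    "https://www.example.com/",
    "https://www.example.com/a",
    "https://www.example.com/b",
    "https://www.example.com/c",
]
indexed = [
    "https://www.example.com/",
    "https://www.example.com/a",
    "https://www.example.com/c",
]

missing, share = indexing_gap(submitted, indexed)
print(missing, share)
```

Tracking the share week over week makes a sudden drop easier to spot than a raw count.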

Cross-reference this data with the Page indexing report (formerly Coverage) to spot excluded URLs, server errors, or misconfigured canonical tags. Prioritize fixing critical errors (404s, 500s, robots.txt blocks) before worrying about duplicate content exclusions.

What mistakes should you avoid when submitting a sitemap?

Don't overload your sitemap with low-value URLs: paginated pages without rel=canonical, sort/filter parameters, internal search results pages. Every URL should deserve to be indexed. Otherwise, you dilute signals and disrupt Google's analysis.
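A rough way to screen out sort/filter parameters and internal search pages is a URL-based filter. In this sketch the parameter names and the `/search` path prefix are assumptions that must be adapted to your own site; it does not handle pagination, which requires canonical information rather than the URL alone.

```python
from urllib.parse import parse_qs, urlsplit

# Hypothetical values; replace with the parameters and paths your site uses.
LOW_VALUE_PARAMS = {"sort", "order", "filter"}
LOW_VALUE_PATH_PREFIXES = ("/search",)


def is_low_value(url):
    """Return True for internal search pages and sort/filter variants."""
    parts = urlsplit(url)
    if parts.path.startswith(LOW_VALUE_PATH_PREFIXES):
        return True
    params = parse_qs(parts.query, keep_blank_values=True)
    return any(name in LOW_VALUE_PARAMS for name in params)


# Invented sample URLs for the example.
candidates = [
    "https://www.example.com/shoes",
    "https://www.example.com/shoes?sort=price",
    "https://www.example.com/search?q=boots",
    "https://www.example.com/blog/sitemap-guide",
]

kept = [u for u in candidates if not is_low_value(u)]
print(kept)
```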

Also avoid submitting a static sitemap that is never updated. If you publish content regularly, automate sitemap generation and updates via your CMS or a cron script. Google re-crawls sitemaps periodically, so you might as well provide fresh data.

- Generate an XML sitemap conforming to the Sitemaps 0.9 protocol

- Exclude all URLs that are blocked by robots.txt, marked noindex, or redirected

- Segment large sitemaps (> 50,000 URLs) via a sitemap index

- Submit the sitemap in Search Console and verify no errors exist

- Monitor the Sitemaps report weekly to detect anomalies

- Automate sitemap updates if you publish frequently

- Cross-reference sitemap data with the Page indexing report (formerly Coverage)

Submitting a sitemap via Search Console remains a fundamental best practice, especially for large sites or those with frequent publication. But it doesn't replace good internal linking or solid technical architecture.

Regular monitoring of processing status allows you to quickly detect errors and adjust your indexation strategy. However, this optimization can quickly become complex on sites with high volume or scattered architecture. In those situations, partnering with a specialized SEO agency can help structure, diagnose, and correct the signals sent to Google.

❓ Frequently Asked Questions

Does a sitemap guarantee indexation of all the URLs it contains?

Should I submit a sitemap if my site has fewer than 100 pages?

Do the priority and changefreq tags in the sitemap have a real impact?

How long after submission does Google crawl the sitemap?

Should images and videos be included in the sitemap?

🎥 From the same video 9

Other SEO insights extracted from this same Google Search Central video · published on 06/02/2025
