Official statement

Other statements from this video 14 ▾

- □ Qu'est-ce qu'un crawler web et pourquoi Google insiste-t-il sur cette définition ?

- □ Googlebot ne fait-il vraiment que crawler sans décider de l'indexation ?

- □ Comment Googlebot crawle-t-il réellement vos pages web ?

- □ Le crawl budget dépend-il vraiment de la demande de Search ?

- □ Le crawl budget existe-t-il vraiment chez Google ?

- □ Faut-il bloquer certaines pages du crawl Google pour optimiser son budget ?

- □ Google manque-t-il vraiment d'espace de stockage pour indexer votre contenu ?

- □ Les liens naturels sont-ils vraiment plus importants que les sitemaps pour la découverte ?

- □ Faut-il vraiment lier depuis la page d'accueil pour accélérer le crawl de vos nouvelles pages ?

- □ Faut-il vraiment limiter l'usage de l'Indexing API aux seuls cas d'usage recommandés par Google ?

- □ Pourquoi Google limite-t-il l'usage de l'Indexing API à certains contenus ?

- □ L'Indexing API peut-elle faire retirer votre contenu aussi vite qu'elle l'indexe ?

- □ Faut-il supprimer vos pages de faible qualité pour améliorer votre crawl budget ?

- □ L'outil d'inspection d'URL peut-il vraiment accélérer l'indexation de vos améliorations ?

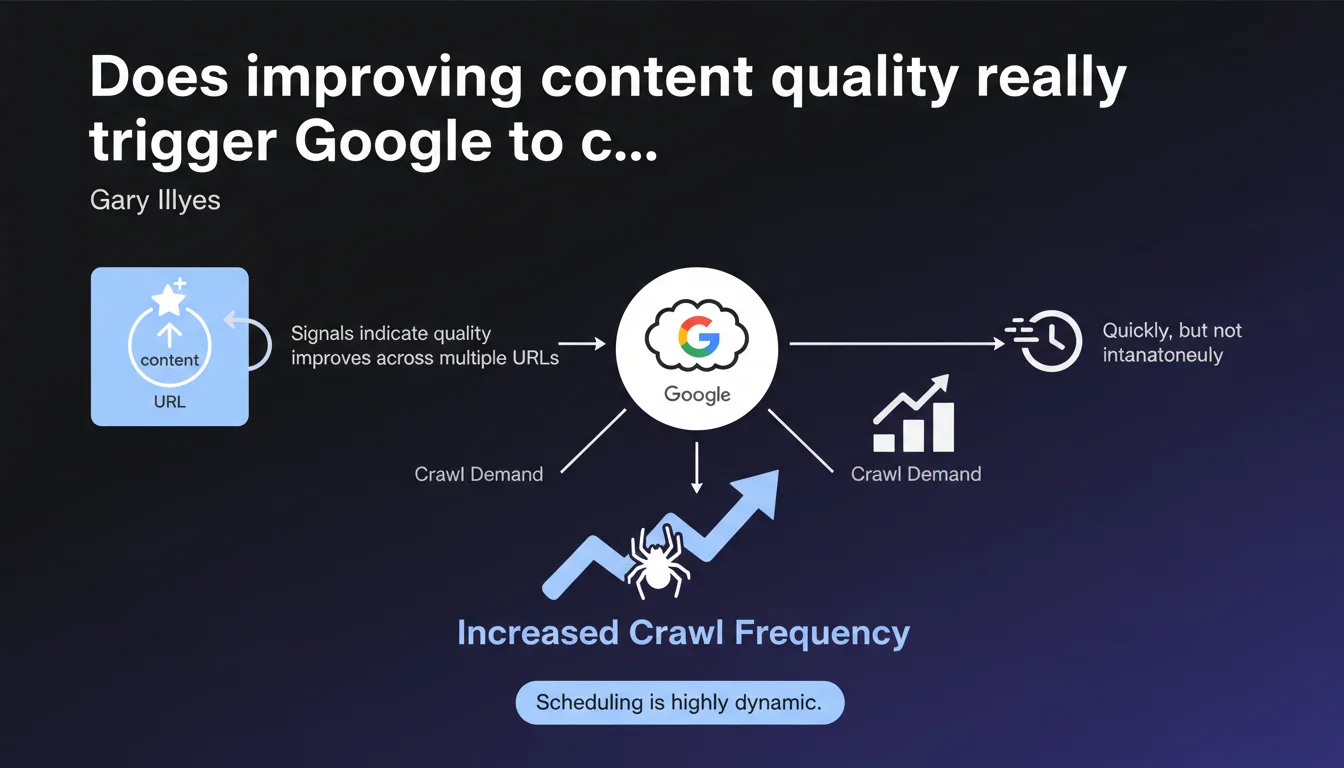

Google adjusts its crawl budget dynamically as soon as it detects quality improvements across multiple URLs. The reaction is fast but not instant — the system needs time to confirm that the improvement is genuine and sustainable before allocating more crawl resources.

What you need to understand

What is dynamic crawl according to Google?

Google doesn't set a static crawl budget for each website. Crawl scheduling varies based on multiple quality signals. When Google detects that several URLs on a site show quality improvements, it increases both the frequency and the depth of its crawl.

This statement confirms that crawling isn't a fixed constant: it's a reactive process that adapts to site changes. In practice, if you fix mediocre content at scale, Google won't make you wait weeks to recognize the effort.

What signals indicate quality improvement?

Gary Illyes mentions "signals" without detailing them, which is typical of Google. Plausible candidates, none of them confirmed by Google, include user engagement metrics (CTR, dwell time, bounce rate), content freshness, fewer 404 errors or duplicate pages, and better Core Web Vitals.

The fact that improvement must concern "multiple URLs" suggests Google is looking for a trend, not an isolated exception. A single signal isn't enough — the entire site must show a clear direction.

What does "quickly, but not instantly" mean?

Google deliberately avoids giving a precise timeline. "Quickly" could mean anywhere from a few days to a few weeks depending on site size and crawl history. The delay allows Google to validate the consistency of improvement before investing more resources.

This cautious phrasing protects Google against short-term manipulation attempts — if you artificially boost quality just to increase crawl, the system will eventually detect it and scale back.

- Crawl budget is not fixed but reactive to quality improvements

- Improvement must be visible across multiple URLs, not isolated

- Google's reaction takes days to weeks, depending on context

- The system looks for sustainable trends, not temporary spikes

SEO Expert opinion

Is this statement consistent with real-world observations?

Yes, largely. Cases of quality overhauls followed by increased crawl are documented regularly. When a site massively cleans up thin content, consolidates duplicate pages, or improves its architecture, we often observe a crawl spike within 7 to 21 days afterward.

But — and this is a crucial point — this responsiveness also depends on site size and authority. An established site with a stable history will see faster reaction than a new domain or a previously penalized site. [To verify]: Google never specifies exact thresholds or weightings applied based on site history.

What nuances should we add?

Let's be honest: "quality improvement" remains a fuzzy concept. Google probably blends on-page signals (HTML structure, semantic richness, freshness) and behavioral signals (engagement, return-to-SERP rate). But the statement doesn't say whether all these signals carry equal weight.

Another point: Gary talks about increased "crawl demand", not necessarily faster indexing. Nor does more crawling guarantee better rankings: it's a necessary but not sufficient condition. If the improved content still doesn't meet relevance or E-E-A-T criteria, extra crawling won't change rankings.

In what cases does this logic fail?

This promise of dynamic adjustment assumes Google actually detects improvements. If your site has a historically low crawl budget (large site with lots of unnecessary pages, slow server, low authority), Google may take weeks, or even months, to discover your changes.

The same applies if the improved pages are set to noindex, blocked by robots.txt, or buried 5 clicks from the homepage: the scheduler won't detect these improvements. Quality must be technically accessible to the crawler.

Finally, some sites migrate or change hosting at the same time they improve content. If server response time degrades in parallel, the positive crawl effect will be canceled, or even reversed. Crawl rate is limited by what the server can handle, so technical stability comes before editorial quality.
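The accessibility checks above can be sketched in a few lines of Python. This is a minimal illustration using only the standard library; the function names, the sample robots.txt, and the example.com URLs are assumptions made for the example. Python's robots.txt parser does not reproduce every detail of how Googlebot interprets rules, and the sketch ignores the X-Robots-Tag HTTP header, so treat the result as a first pass rather than a verdict.

```python
from html.parser import HTMLParser
from urllib.robotparser import RobotFileParser


class RobotsMetaParser(HTMLParser):
    """Collects the content of <meta name="robots"> and <meta name="googlebot"> tags."""

    def __init__(self):
        super().__init__()
        self.directives = []

    def handle_starttag(self, tag, attrs):
        if tag != "meta":
            return
        attr = dict(attrs)
        if (attr.get("name") or "").lower() in ("robots", "googlebot"):
            self.directives.append((attr.get("content") or "").lower())


def is_noindex(html):
    """True if any robots meta tag in the HTML contains a noindex directive."""
    parser = RobotsMetaParser()
    parser.feed(html)
    return any("noindex" in directive for directive in parser.directives)


def is_blocked(robots_txt, url, agent="Googlebot"):
    """True if the given robots.txt text disallows the URL for the agent."""
    rules = RobotFileParser()
    rules.parse(robots_txt.splitlines())
    return not rules.can_fetch(agent, url)


# Hypothetical sample data, for illustration only.
robots_txt = "User-agent: *\nDisallow: /drafts/\n"
page_html = '<html><head><meta name="robots" content="noindex, follow"></head></html>'

print(is_blocked(robots_txt, "https://example.com/drafts/new-guide"))
print(is_blocked(robots_txt, "https://example.com/guides/new-guide"))
print(is_noindex(page_html))
```

Running both checks on every URL in the improved section before publishing is a cheap way to catch a leftover noindex or an overly broad Disallow rule.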

Practical impact and recommendations

What should you concretely do to trigger this crawl increase?

First, focus on coherent and visible improvement. Don't randomly touch 5 pages — target an entire section or content type. Google looks for trends, not exceptions.

Next, ensure your improvements are technically detectable: update publication dates, add relevant structured markup (Article, FAQPage), improve load times. Make the crawler's job easier by keeping these pages easily accessible from the homepage and XML sitemap.
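To check how accessible the improved pages are from the homepage, click depth can be estimated with a breadth-first search over the internal link graph. The sketch below assumes you already have that graph as a dictionary (for instance exported from a site crawler); the `click_depths` function and the sample paths are hypothetical.

```python
from collections import deque


def click_depths(links, start):
    """Breadth-first search over an internal-link graph.

    Returns the minimum number of clicks from `start` to each reachable page.
    """
    depths = {start: 0}
    queue = deque([start])
    while queue:
        page = queue.popleft()
        for target in links.get(page, ()):
            if target not in depths:
                depths[target] = depths[page] + 1
                queue.append(target)
    return depths


# Hypothetical link graph: each key links to the pages in its list.
site = {
    "/": ["/guides", "/blog"],
    "/guides": ["/guides/crawl-budget"],
    "/blog": ["/blog/2024"],
    "/blog/2024": ["/blog/2024/old-post"],
}

depths = click_depths(site, "/")
for page, depth in sorted(depths.items(), key=lambda item: item[1]):
    print(depth, page)
```

Pages missing from the result are not reachable through internal links at all, which is worth fixing before anything else.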

- Identify a set of pages to improve (at least 20-30 URLs for a clear signal)

- Enrich editorial content: add depth, sources, media

- Fix technical errors: 404s, redirect chains, conflicting canonical tags

- Improve Core Web Vitals on these pages (LCP, CLS, INP)

- Update publication dates and lastmod tags in the sitemap

- Strengthen internal linking to these pages from well-crawled sections

- Monitor server logs to confirm crawl increase within 2-3 weeks
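For the sitemap item in the checklist above, here is a minimal sketch of generating `<lastmod>` entries with Python's standard library. The `build_sitemap` function, the URLs, and the dates are assumptions for illustration; in practice the dates should come from your CMS and reflect real content changes, since a lastmod that moves without any substantive edit is unlikely to be trusted.

```python
import xml.etree.ElementTree as ET

# Namespace defined by the sitemaps.org protocol.
SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_sitemap(pages):
    """Build a sitemap string from (url, last_modified) pairs.

    `last_modified` is expected as an ISO 8601 date string (YYYY-MM-DD).
    """
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for loc, lastmod in pages:
        url = ET.SubElement(urlset, "url")
        ET.SubElement(url, "loc").text = loc
        ET.SubElement(url, "lastmod").text = lastmod
    return ET.tostring(urlset, encoding="unicode")


# Hypothetical pages that were substantially rewritten.
improved_pages = [
    ("https://example.com/guides/crawl-budget", "2024-03-10"),
    ("https://example.com/guides/internal-linking", "2024-03-12"),
]

sitemap_xml = build_sitemap(improved_pages)
print(sitemap_xml)
```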

What mistakes should you avoid?

Don't confuse quantity with quality. Adding 500 words of generic filler will trigger nothing — worse, it can send a negative signal. Google detects artificially inflated content patterns.

Also avoid touching only metadata (title, meta description) hoping for a miracle. These elements play a role, but improvement must be substantive and editorial for Google to react. Finally, don't block the crawler during modifications: some CMSs automatically set pages to noindex during redesigns, which cancels all the effort.

How do you verify the strategy works?

Monitor your server logs: count Googlebot requests per day before and after modifications. A progressive increase confirms Google detects and values your efforts.
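The daily count described above can be sketched as follows, assuming access logs in the common "combined" format where the user agent is the last quoted field. The function name and the sample lines are made up for the example. Matching on the user-agent string alone also counts spoofed bots; Google documents a reverse DNS check for verifying genuine Googlebot traffic, which this sketch leaves out.

```python
import re
from collections import Counter
from datetime import datetime

# Captures the bracketed timestamp and the last quoted field (the user agent).
LOG_LINE = re.compile(r'\[(?P<ts>[^\]]+)\].*"(?P<ua>[^"]*)"\s*$')


def googlebot_hits_per_day(lines):
    """Return a Counter mapping ISO dates to requests whose user agent mentions Googlebot."""
    hits = Counter()
    for line in lines:
        match = LOG_LINE.search(line)
        if not match or "Googlebot" not in match.group("ua"):
            continue
        # Timestamps look like 10/Mar/2024:06:25:13 +0000 (English month names assumed).
        day = datetime.strptime(match.group("ts"), "%d/%b/%Y:%H:%M:%S %z").date()
        hits[day.isoformat()] += 1
    return hits


# Hypothetical log lines, for illustration only.
sample = [
    '66.249.66.1 - - [10/Mar/2024:06:25:13 +0000] "GET /guide HTTP/1.1" 200 5123 "-" "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"',
    '66.249.66.1 - - [10/Mar/2024:09:02:41 +0000] "GET /faq HTTP/1.1" 200 2210 "-" "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"',
    '203.0.113.7 - - [10/Mar/2024:09:05:00 +0000] "GET /faq HTTP/1.1" 200 2210 "-" "Mozilla/5.0 (Windows NT 10.0)"',
    '66.249.66.1 - - [11/Mar/2024:01:11:09 +0000] "GET /guide HTTP/1.1" 200 5123 "-" "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"',
]

daily = googlebot_hits_per_day(sample)
for day in sorted(daily):
    print(day, daily[day])
```

On a real server you would pass an open log file instead of the sample list, for example `googlebot_hits_per_day(open("access.log"))`, where the file path is whatever your server uses.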

In Search Console, check the "Crawl stats" report: observe how the number of crawl requests per day and the average response time evolve. A rising number of crawl requests over 2-3 weeks, with a stable response time, is a positive indicator.
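One simple way to judge whether the curve is really trending upward is to compare the average daily crawl volume before and after the date of your changes. The sketch below assumes you have daily counts keyed by ISO date, whether exported from Search Console or taken from your logs; the `crawl_change` function and the figures are hypothetical.

```python
from statistics import mean


def crawl_change(daily_counts, change_day):
    """Compare mean daily crawl requests before vs. on or after `change_day`.

    Keys of `daily_counts` and `change_day` are ISO date strings (YYYY-MM-DD),
    which compare correctly as plain strings.
    Returns (mean_before, mean_after, percent_change).
    """
    before = [n for day, n in daily_counts.items() if day < change_day]
    after = [n for day, n in daily_counts.items() if day >= change_day]
    if not before or not after:
        raise ValueError("need at least one day on each side of change_day")
    base, current = mean(before), mean(after)
    if base == 0:
        raise ValueError("no crawl activity before change_day, cannot compute a ratio")
    return base, current, (current - base) * 100 / base


# Hypothetical daily Googlebot request counts around a content overhaul.
counts = {
    "2024-03-01": 100,
    "2024-03-02": 120,
    "2024-03-03": 110,
    "2024-03-04": 132,
    "2024-03-05": 143,
    "2024-03-06": 154,
}

base, current, pct = crawl_change(counts, "2024-03-04")
print(f"before: {base:.0f}/day, after: {current:.0f}/day, change: {pct:+.1f}%")
```

A few days of data, as in this toy example, is too short to conclude anything; the 2-3 week window mentioned above gives a more reliable comparison.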

❓ Frequently Asked Questions

How long should you wait before seeing a crawl increase after improving content?

Do you need to improve every page on the site, or is it enough to target certain sections?

Does a crawl increase guarantee better rankings?

Which precise signals tell Google that quality has improved?

Can you force Google to crawl faster after an update?

Other SEO insights extracted from this same Google Search Central video · published on 14/03/2024
