Official statement

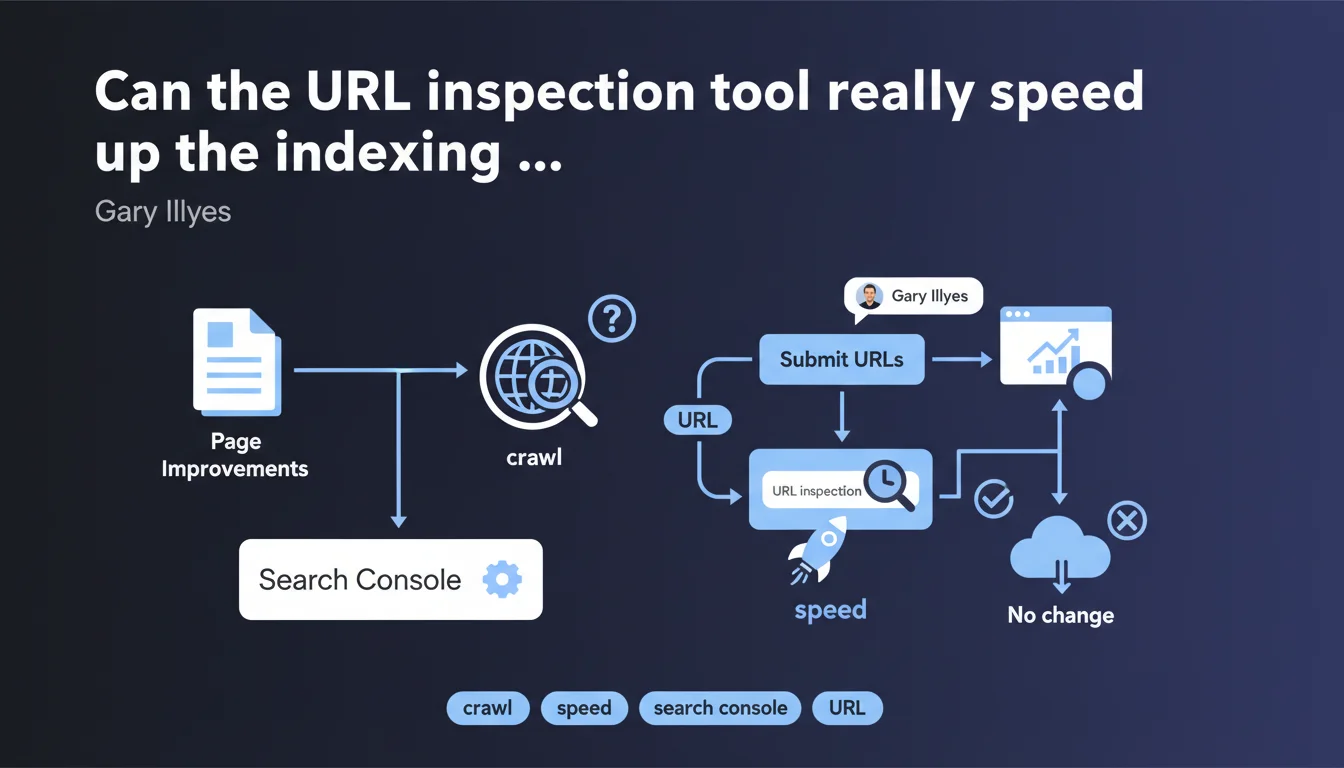

Google confirms that manually submitting improved URLs via the Search Console inspection tool can trigger a targeted recrawl. This method allows you to test whether your optimizations are producing a measurable effect, especially when natural crawling is taking longer than expected. Note: it doesn't replace a healthy crawl budget.

What you need to understand

Why is Gary Illyes suggesting this approach now?

The statement comes at a time when many SEOs are experiencing unpredictable recrawl delays, especially on medium-sized sites. Improving content doesn't guarantee that Googlebot will return immediately — sometimes changes remain invisible for weeks.

The inspection tool lets you request a one-time reevaluation without waiting for the natural cycle. Think of it as a technical validation: if the page meets the indexability criteria, it is usually (re)crawled within 24-48 hours, although Google guarantees no specific delay.

What does "see what happens" concretely mean?

Gary isn't promising an immediate boost in the SERPs. He's talking about diagnostic visibility: the tool reveals whether Google detects your improvement (tags, structure, content) and if it passes indexing filters.

In practice? You'll see if the crawled version reflects your modifications, if errors block indexation (blocked resources, contradictory canonicals), and if the rendering matches what you expected. Nothing magical — just accelerated feedback.

- The tool doesn't modify your overall crawl budget — it temporarily prioritizes a few URLs.

- Useful for validating critical fixes (e.g., hreflang tags, structured data fixes).

- Don't confuse it with a ranking lever: indexation is a prerequisite, not a direct ranking factor.

- Limited to a few URLs per day — impossible to scale across thousands of pages.
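As a rough illustration of this kind of diagnostic feedback, here is a minimal sketch that triages one inspection result. It assumes you have exported the data as a dictionary whose field names (`lastCrawlTime`, `googleCanonical`, `userCanonical`, `robotsTxtState`) are modeled on the index status result of the Search Console URL Inspection API; check them against the API documentation before relying on them. The `triage` function and the sample values are hypothetical.

```python
from datetime import datetime, timezone


def triage(index_status: dict, submitted_at: datetime) -> list[str]:
    """Return human-readable findings for one inspected URL.

    `index_status` is assumed to mimic the index status part of a
    URL Inspection API response (an assumption, not a verified schema).
    """
    findings = []

    last_crawl = index_status.get("lastCrawlTime")
    if last_crawl is None:
        findings.append("not crawled yet")
    else:
        # Timestamps are assumed to be RFC 3339 strings ending in "Z".
        crawled_at = datetime.fromisoformat(last_crawl.replace("Z", "+00:00"))
        if crawled_at < submitted_at:
            findings.append("last crawl predates the submission")

    google_canonical = index_status.get("googleCanonical")
    user_canonical = index_status.get("userCanonical")
    if google_canonical and user_canonical and google_canonical != user_canonical:
        findings.append("Google selected a different canonical")

    if index_status.get("robotsTxtState") == "DISALLOWED":
        findings.append("blocked by robots.txt")

    return findings


# Hypothetical sample: crawled before the submission, canonicals disagree.
sample = {
    "lastCrawlTime": "2024-03-10T08:00:00Z",
    "googleCanonical": "https://example.com/a",
    "userCanonical": "https://example.com/b",
    "robotsTxtState": "ALLOWED",
}
submitted = datetime(2024, 3, 14, tzinfo=timezone.utc)
for finding in triage(sample, submitted):
    print(finding)
```

An empty list means none of these three checks flagged a problem. It does not mean the page is indexed or will rank.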

In what cases does this method make sense?

Typically, after fixing blocking technical issues: 5xx errors, soft 404s, orphaned pages that have been relinked. It also makes sense when you're redesigning strategic pages (landing pages, high-traffic product pages) and want to confirm that Google sees the new version.

On the other hand, if your site generates 500 new pages per day and crawl budget is already tight, submitting 10 URLs manually won't solve anything. The problem is structural — internal linking, pagination, server speed — not tactical.

SEO Expert opinion

Is this recommendation consistent with observed practices in the field?

Yes and no. The inspection tool really does trigger prioritized recrawling — we've observed this for years. But the effect on ranking remains unclear. Gary says "see what happens," which is deliberately vague.

In practice, submitting a URL rarely improves ranking directly. What matters is that the improvement is substantial (enriched content, better answer to intent, optimized user experience). Fast indexation just shortens the delay before evaluation — it doesn't compensate for mediocre content.

What nuances should be added to this advice?

First nuance: it doesn't scale. Search Console limits the number of daily submissions (typically around 10-15 in practice). If you're optimizing 200 pages, submitting them all takes two to three weeks, so you might as well work on your overall crawl budget (targeted sitemaps, log analysis, crawl trap removal).
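The arithmetic behind that estimate can be sketched in a few lines. The quota values of 10 and 15 are the rough figures quoted above, not an official limit, and `days_to_submit` is a hypothetical helper.

```python
import math


def days_to_submit(total_urls: int, daily_quota: int) -> int:
    """Number of days needed to submit every URL at a fixed daily quota."""
    if daily_quota <= 0:
        raise ValueError("daily_quota must be positive")
    return math.ceil(total_urls / daily_quota)


# 200 optimized pages at the assumed quotas of 10 and 15 submissions per day.
for quota in (10, 15):
    print(f"{quota} per day -> {days_to_submit(200, quota)} days")
```

At 10 submissions per day the 200 pages take 20 days; at 15 per day, 14 days.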

Second nuance: the tool sometimes masks underlying problems. A manually submitted URL might be indexed when it would be ignored in natural crawling (lack of internal PageRank, excessive depth). Result: false sense of security. [To verify]: Do these URLs remain indexed long-term, or do they disappear after a few weeks?

In what cases is this method counterproductive?

When you use it to mask structural weaknesses. Classic example: an e-commerce site generates 10,000 product variants per week, crawl budget is saturated, and you manually submit 10 bestsellers. It solves nothing — the problem is poorly managed pagination, crawlable faceted filters stretching infinitely, or polluted sitemaps.

Another case: submitting pages before verifying their quality. If your "improvement" consists of adding 300 words of generic content, you simply speed up the indexing of a page that Google might judge less relevant than before. The tool accelerates feedback, but doesn't correct strategic errors.

Practical impact and recommendations

What should you concretely do to benefit from this statement?

Start by identifying strategic pages you've recently improved: enriched content, technical fixes, UX optimizations. Prioritize those already generating traffic or targeting high-potential keywords.

Submit these URLs via the inspection tool in Search Console. Wait 48-72 hours, then compare the version crawled by Google (accessible via "View crawled page") with your published version, which you can render on demand with "Test live URL". Verify that your modifications are detected: title/meta tags, structured data, text content.

- Select 5-10 priority URLs recently optimized

- Use the inspection tool to request indexation

- Check HTML rendering in "Test live URL"

- Monitor server logs to confirm Googlebot's visit

- Compare positions before and after over 2-3 weeks (the effect is not immediate)

- Document results to refine future strategy
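The log-monitoring step in this checklist could look like the sketch below. It assumes access logs in the common "combined" format and matches on the user-agent string only. A user agent can be spoofed, so treat a match as a hint rather than proof and confirm the IP separately if it matters. The `googlebot_hits` helper and the sample log lines are hypothetical.

```python
import re

# Combined log format: ip, identity, user, [time], "request", status, size,
# "referer", "user agent".
LOG_LINE = re.compile(
    r'^(?P<ip>\S+) \S+ \S+ \[(?P<time>[^\]]+)\] '
    r'"(?P<method>\S+) (?P<path>\S+) [^"]*" (?P<status>\d{3}) \S+ '
    r'"[^"]*" "(?P<agent>[^"]*)"'
)


def googlebot_hits(lines, paths):
    """Return (path, time, status) for requests to `paths` that claim to be Googlebot."""
    wanted = set(paths)
    hits = []
    for line in lines:
        match = LOG_LINE.match(line)
        if match is None:
            continue  # Skip lines that are not in the expected format.
        if "Googlebot" in match["agent"] and match["path"] in wanted:
            hits.append((match["path"], match["time"], int(match["status"])))
    return hits


# Hypothetical log excerpt: one Googlebot request, one regular visitor.
sample_log = [
    '66.249.66.1 - - [15/Mar/2024:09:12:44 +0000] "GET /landing-page HTTP/1.1" '
    '200 5123 "-" "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"',
    '203.0.113.7 - - [15/Mar/2024:09:13:02 +0000] "GET /landing-page HTTP/1.1" '
    '200 5123 "-" "Mozilla/5.0 (Windows NT 10.0)"',
]

for path, time, status in googlebot_hits(sample_log, ["/landing-page"]):
    print(path, time, status)
```

Feeding the function the paths of the 5-10 submitted URLs gives a quick list of which ones Googlebot has apparently revisited, and with which status code.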

What errors should you absolutely avoid?

Don't try to push dozens of URLs through the tool every day: the daily quota will stop you, and you dilute the effect by spending submissions on pages that matter less. Google prioritizes submitted URLs, but excessive volume may look like automated behavior (even when it is manual).

Also avoid submitting unfinalized pages: duplicate content, missing tags, excessive load times. Fast indexation will expose your weaknesses sooner. Better to polish first, then submit.

How do you verify this approach works for your site?

Implement precise tracking: note the submission date, monitor server logs (does Googlebot return?), and track ranking evolution on targeted keywords. Use tools like Oncrawl or Botify to cross-reference crawl data with organic performance.
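A minimal sketch of such tracking, assuming you record one average position per day for a target keyword (for example from a rank tracker export). The `Observation` record, the `position_shift` helper, and the sample numbers are hypothetical.

```python
from dataclasses import dataclass
from datetime import date
from statistics import mean


@dataclass
class Observation:
    day: date
    position: float  # Average ranking position; lower is better.


def position_shift(observations, submitted_on):
    """Mean position after the submission minus mean position before it.

    A negative value means the page moved up. Returns None when one side
    of the comparison has no data.
    """
    before = [o.position for o in observations if o.day < submitted_on]
    after = [o.position for o in observations if o.day >= submitted_on]
    if not before or not after:
        return None
    return mean(after) - mean(before)


# Hypothetical observations around a submission made on 14 March 2024.
history = [
    Observation(date(2024, 3, 1), 12.0),
    Observation(date(2024, 3, 8), 14.0),
    Observation(date(2024, 3, 21), 9.0),
    Observation(date(2024, 3, 28), 11.0),
]
print(position_shift(history, date(2024, 3, 14)))
```

A before/after difference like this shows correlation only. Other changes during the same weeks (competitors, seasonality, algorithm updates) can move positions too, which is why several cycles are worth comparing.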

If after several cycles (3-4 weeks) you see no measurable effect, the problem probably isn't indexation delay — it's either the quality of improvements, or a structural issue (lack of authority, too much competition, misunderstood intent).

❓ Frequently Asked Questions

How many URLs can you submit per day via the inspection tool?

Does submitting a URL guarantee its indexing?

Should you systematically submit every new page?

How long should you wait to see an effect on rankings?

Does this method work for penalized sites?

🎥 From the same video 14

Other SEO insights extracted from this same Google Search Central video · published on 14/03/2024

