Official statement

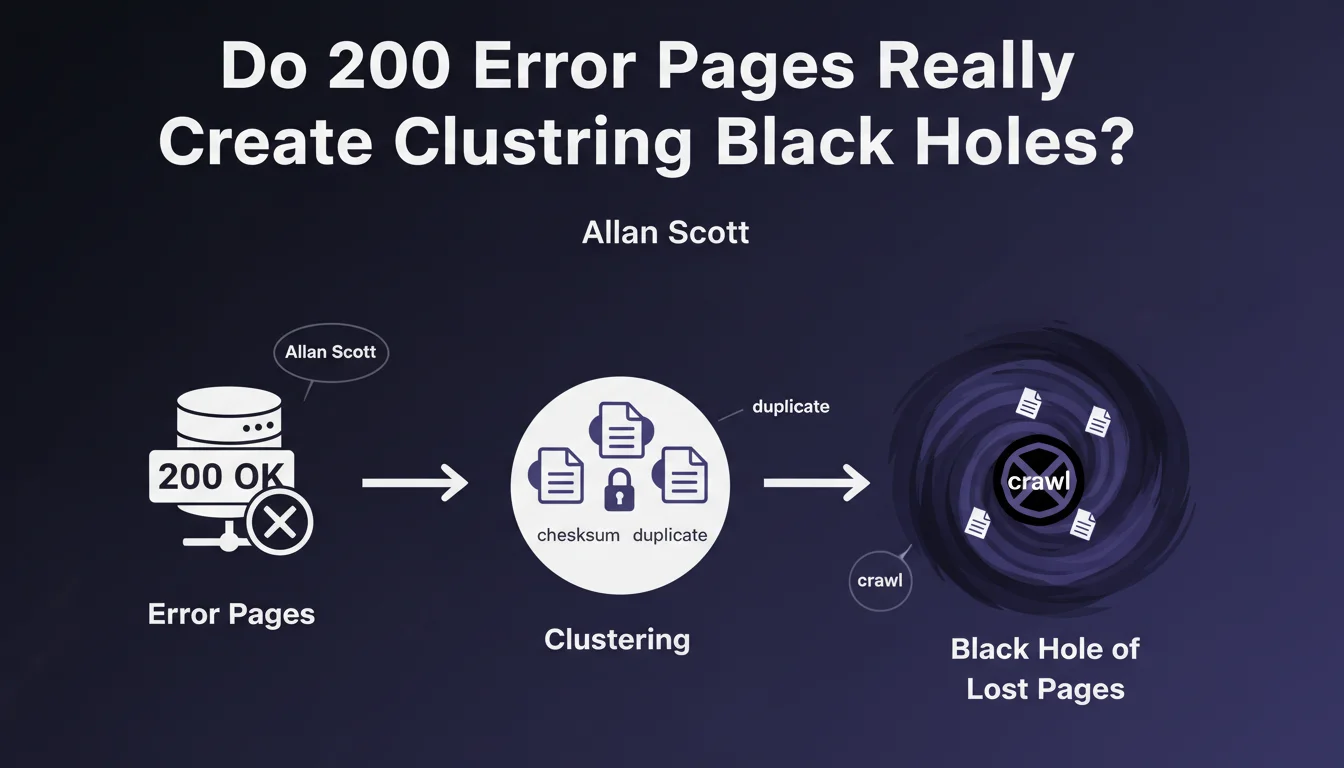

Error pages served with HTTP 200 status cluster together via a checksum system, creating duplication clusters. Once trapped in these clusters, pages become nearly invisible to crawling that avoids duplicate content, forming a 'black hole' from which they escape with difficulty.

What you need to understand

What exactly is a 200 error page?

A 200 error page is a technical aberration: a page that displays an error message (404, 500, etc.) but returns an HTTP 200 status code (success). Technically, you're telling Google 'everything is fine' while the page is broken.

This scenario occurs frequently on poorly configured e-commerce sites, CMSs with faulty redirects, or templates that display 'Product not found' without changing the status code. For the search engine, it's valid content to index.

How does checksum-based clustering work?

Google uses checksums (digital fingerprints) to identify similar or identical content. 200 error pages often share the same template — therefore the same structure, the same generic text.

Result? They end up clustered together. The engine detects massive duplication and treats the group as duplicates rather than as separate pages: a single URL represents the cluster as its canonical, and the others are de facto deindexed.
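To make the idea concrete, here is a minimal sketch of checksum-based grouping. It is an illustration only: Google does not publish its checksum algorithm, so the normalization step, the choice of SHA-256, and the function names `content_checksum` and `cluster_by_checksum` are all assumptions.

```python
import hashlib
import re
from collections import defaultdict


def content_checksum(html: str) -> str:
    """Hash the visible text of a page after crude normalization (assumed scheme)."""
    text = re.sub(r"<[^>]+>", " ", html)              # naive tag removal, illustration only
    text = re.sub(r"\s+", " ", text).strip().lower()  # collapse whitespace, ignore case
    return hashlib.sha256(text.encode("utf-8")).hexdigest()


def cluster_by_checksum(pages: dict[str, str]) -> list[list[str]]:
    """Group URLs whose normalized content hashes to the same value."""
    groups = defaultdict(list)
    for url, html in pages.items():
        groups[content_checksum(html)].append(url)
    # Only groups with more than one URL are duplicate clusters.
    return [sorted(urls) for urls in groups.values() if len(urls) > 1]


# Hypothetical pages: two "not found" templates served with 200, one real product.
pages = {
    "/product/1": "<h1>Product not found</h1><p>Sorry, this item is unavailable.</p>",
    "/product/2": "<h1>Product not found</h1>\n<p>Sorry, this item is unavailable.</p>",
    "/product/3": "<h1>Blue kettle</h1><p>1.7 litre, in stock.</p>",
}
clusters = cluster_by_checksum(pages)
print(clusters)
```

The two error pages land in the same group because their text is identical once markup and whitespace are ignored, while the real product page stays on its own.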

Why is it called a 'black hole'?

The term is brutal but accurate. Once a page falls into a duplication cluster, the crawl budget collapses for that group. Google actively avoids recrawling these URLs detected as duplicates.

Even if you fix the problem later, the page remains marked. The bot rarely comes back, if at all. You must request reindexing manually, and even then there is no guarantee if the page content still matches the clustered checksum.

- 200 error pages: technical errors served with a success HTTP status code

- Checksum-based clustering: automatic grouping of identical or very similar content

- Crawl black hole: URLs trapped in these clusters are no longer crawled regularly

- Indexation impact: progressive deindexation of affected pages, even if corrected

- Difficult detection: these problems often go unnoticed because the 200 code masks the error

SEO Expert opinion

Is this statement consistent with real-world observations?

Yes, completely. We've observed this phenomenon for years on poorly configured e-commerce sites. Hundreds of 'unavailable' product pages returning 200, and six months later, indexation collapses for no apparent reason.

What's new is the official confirmation of the mechanism: checksum-based clustering. Before, we assumed a generic quality filter. Now we know it's an automated process based on content fingerprints.

What nuances should be added?

The statement lacks details on triggering thresholds. How many 200 error pages does it take to create a toxic cluster? 10? 100? 1000? [To verify] — Google remains vague on this crucial point.

Another gray area: what proportion of duplicate content triggers the checksum? If two error pages share 80% common text but 20% different (Product A vs Product B in the title), are they clustered anyway? Probably, but again, no precise metrics.
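The 80/20 question can be illustrated with a small experiment. The sketch below assumes a near-duplicate measure (Jaccard similarity over three-word shingles); nothing in the statement says Google uses this measure, and the page texts and function names are hypothetical.

```python
def shingles(text: str, size: int = 3) -> set[tuple[str, ...]]:
    """Return the set of overlapping word n-grams in the text."""
    words = text.lower().split()
    return {tuple(words[i:i + size]) for i in range(len(words) - size + 1)}


def jaccard(a: str, b: str, size: int = 3) -> float:
    """Share of shingles the two texts have in common (0.0 to 1.0)."""
    sa, sb = shingles(a, size), shingles(b, size)
    if not sa and not sb:
        return 1.0  # two empty texts are treated as identical
    return len(sa & sb) / len(sa | sb)


# Hypothetical error pages that differ only by the product name in the title.
template = (
    "Product {name} not found. Sorry, the item you are looking for "
    "is unavailable. Browse our catalogue or contact support."
)
page_a = template.format(name="A")
page_b = template.format(name="B")

similarity = jaccard(page_a, page_b)
print(page_a == page_b, round(similarity, 2))
```

An exact checksum would treat these two pages as different because one word changed, yet a similarity measure still scores them as mostly identical. Which of the two behaviors applies in Google's clustering is exactly the open question raised above.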

In which cases does this problem really affect ranking?

Let's be clear: if you have 5 error pages returning 200 on a 500-page site, the impact will be negligible. A few isolated instances may still be grouped together, but they will not meaningfully change how the rest of the site is crawled.

The real danger concerns sites with hundreds or thousands of poorly managed error pages — marketplaces, seasonal e-commerce, classified ad sites. There, you create a massive cluster that pollutes your crawl profile and dilutes your budget. Healthy pages nearby suffer by ricochet.

Practical impact and recommendations

How do I detect 200 error pages on my site?

First step: complete technical audit. Crawl the whole site with Screaming Frog or Sitebulb and record the status code of every URL. Then filter URLs that return 200 but whose content contains expressions like 'not found', 'unavailable', 'error', '404'.
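If you export the crawl as (URL, status code, body) rows, the filter described above can be sketched in a few lines. This is a rough heuristic, not a crawler feature: the phrase list comes from the paragraph above, and the function name `looks_like_soft_404` and the sample rows are hypothetical.

```python
import re

# Phrases taken from the audit step above; extend them for your own templates.
ERROR_PHRASES = ("not found", "unavailable", "error", "404")


def looks_like_soft_404(status_code: int, body: str) -> bool:
    """Flag responses that report success but whose text reads like an error page."""
    if status_code != 200:
        return False  # a real 404/410/503 already signals the error correctly
    text = re.sub(r"<[^>]+>", " ", body).lower()  # naive tag removal
    return any(phrase in text for phrase in ERROR_PHRASES)


# Hypothetical crawl export rows.
crawl = [
    ("/product/1", 200, "<h1>Product not found</h1>"),
    ("/product/2", 404, "<h1>Product not found</h1>"),
    ("/product/3", 200, "<h1>Blue kettle</h1><p>In stock.</p>"),
]
suspects = [url for url, status, body in crawl if looks_like_soft_404(status, body)]
print(suspects)
```

Expect false positives: a healthy page that merely mentions the word 'error' will be flagged too, so treat the output as a list to review by hand.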

Second check: Search Console, Page indexing report (formerly Coverage). Review the URLs flagged as Soft 404, then use the Performance report to find indexed URLs with zero impressions for 6+ months. Often, these are 200 error pages clustered and abandoned by Google.

What if I already have pages trapped in these clusters?

Fixing the status code isn't enough. You must modify the content to break the checksum. Change the template, add unique text, restructure the HTML — basically, make the page unrecognizable compared to the old version.

Then force reindexing via Search Console (using the 'Request indexing' function). But watch out: with hundreds of URLs, you'll quickly hit daily limits. Prioritize high-potential pages and let natural recrawling handle the rest — hopefully.
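The prioritization step can be sketched as a simple batching routine. The daily limit and the scoring are assumptions: Google does not publish an exact quota, and the 'potential' values below are hypothetical numbers you would take from your own analytics.

```python
def plan_reindexing(pages: dict[str, int], daily_limit: int) -> list[list[str]]:
    """Split URLs into daily batches, highest-potential pages first."""
    if daily_limit < 1:
        raise ValueError("daily_limit must be at least 1")
    ranked = sorted(pages, key=lambda url: pages[url], reverse=True)
    return [ranked[i:i + daily_limit] for i in range(0, len(ranked), daily_limit)]


# Hypothetical scores, e.g. monthly clicks before the page dropped out of the index.
potential = {"/product/1": 120, "/product/2": 15, "/product/3": 900, "/product/4": 40}
batches = plan_reindexing(potential, daily_limit=2)
print(batches)
```

Each inner list is one day's worth of manual requests. Low-scoring URLs at the end of the plan are the ones you can reasonably leave to natural recrawling.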

What mistakes should be avoided at all costs?

- Never serve error content with a 200 code — properly configure your 404, 410, 503 responses

- Don't use the same generic template for all your error pages — vary the content if possible

- Avoid massive 301 redirects to a 200 error page: every redirected URL then resolves to the same error content and feeds the same cluster

- Don't ignore soft-404s flagged in Search Console — Google has already detected the problem

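- Never leave error pages indexed: return a real 404 or 410, use noindex, or remove the page completely. Do not rely on robots.txt, which blocks crawling but does not remove URLs that are already indexed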
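The first rule in the list above comes down to choosing the status code from the state of the resource, not from the template being rendered. A minimal sketch, assuming four hypothetical resource states and a helper named `status_for`; adapt the states to whatever your CMS or framework exposes:

```python
from http import HTTPStatus

# Hypothetical resource states mapped to the status code each should return.
STATUS_BY_STATE = {
    "available": HTTPStatus.OK,                     # real content
    "missing": HTTPStatus.NOT_FOUND,                # URL never existed or is unknown
    "removed": HTTPStatus.GONE,                     # deliberately and permanently removed
    "maintenance": HTTPStatus.SERVICE_UNAVAILABLE,  # temporary outage
}


def status_for(state: str) -> HTTPStatus:
    """Return the status code for a resource state, failing toward 404, never 200."""
    return STATUS_BY_STATE.get(state, HTTPStatus.NOT_FOUND)


for state in ("available", "missing", "removed", "maintenance", "unknown"):
    print(state, int(status_for(state)))
```

Defaulting unknown states to 404 is a design choice in this sketch: when the application cannot tell what a URL is, an honest error is safer than an accidental 200.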

200 error pages are slow poison for your crawl budget. They create duplication clusters from which you escape with difficulty. Prevention is simple: configure your HTTP codes correctly. Correction is tedious: content modification + forced reindexing.

If you manage a large site with thousands of URLs, auditing and correcting these errors can quickly become a complex technical project. In this context, the support of a specialized SEO agency can prove invaluable to identify the extent of the problem, prioritize corrections, and implement an effective reindexing strategy without burning your crawl budget.

❓ Frequently Asked Questions

Is a custom 404 page with a polished design affected by this problem?

Are the soft 404s detected by Search Console the same thing as 200 error pages?

How long does it take for a corrected page to leave the cluster?

Can 200 error pages affect the ranking of healthy pages?

Should the URLs of 200 error pages be removed from the XML sitemap?

🎥 From the same video 15

Other SEO insights extracted from this same Google Search Central video · published on 05/12/2024
