Official statement

Other statements from this video 15 ▾

- □ Comment Google jongle-t-il avec 40 signaux pour choisir l'URL canonique ?

- □ Clustering et canonicalisation : Google fait-il vraiment la différence entre ces deux processus ?

- □ Le rel canonical joue-t-il un double rôle dans l'algorithme de Google ?

- □ Que se passe-t-il quand vos signaux de canonicalisation se contredisent ?

- □ Comment Google choisit-il réellement entre HTTP et HTTPS dans ses résultats ?

- □ Pourquoi vos redirections multiples empêchent-elles Google de choisir la version HTTPS ?

- □ Google traite-t-il vraiment différemment les traductions de boilerplate et de contenu ?

- □ Hreflang fonctionne-t-il indépendamment du clustering de contenu dupliqué ?

- □ Google va-t-il vraiment faciliter le traitement du hreflang pour les sites fiables ?

- □ X-default est-il vraiment un signal canonique comme les autres ?

- □ Les pages d'erreur 200 créent-elles vraiment des trous noirs de clustering ?

- □ Les pages en soft 404 sont-elles vraiment les seules à créer des clusters problématiques ?

- □ Pourquoi un message d'erreur explicite peut-il sauver votre crawl budget ?

- □ Pourquoi un no-index supprime-t-il une page plus vite qu'une erreur 404 ou 410 ?

- □ Un rel canonical vide peut-il vraiment supprimer tout votre site de l'index Google ?

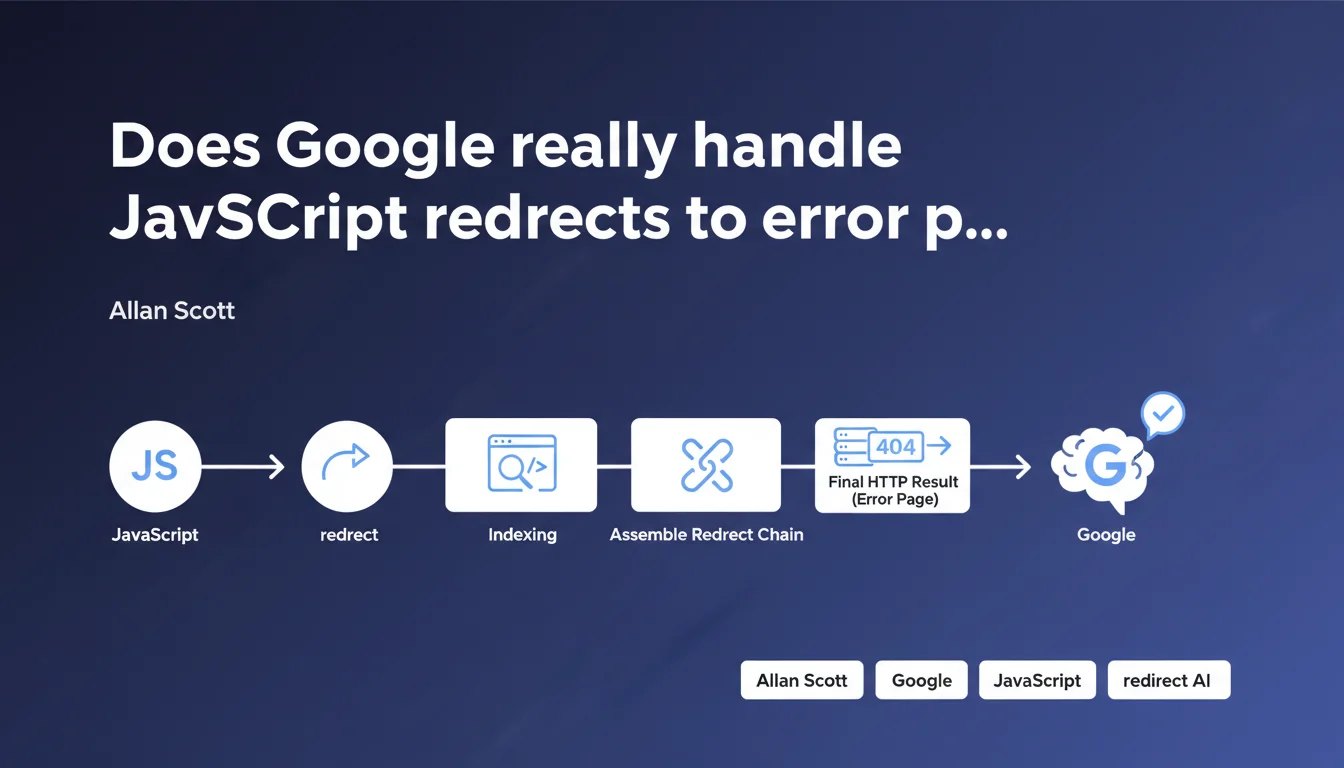

Google confirms that JavaScript redirects to static pages returning proper HTTP error codes work correctly. Indexing reconstructs the complete redirect chain and detects the final HTTP code, meaning a JS redirect to a 404 or 410 will be properly interpreted as such.

What you need to understand

What is redirect clustering that Google mentions?

Google uses what it calls clustering to reconstruct an entire redirect chain, even when it combines different techniques. Concretely, if a URL redirects via JavaScript to a static page that returns a 404 code, Googlebot assembles these two steps to understand the final result.

This capability is fundamental for managing modern architectures where JavaScript plays a central role. It allows Google to follow complex redirect paths without getting lost along the way.

Why this emphasis on HTTP error codes?

The important nuance here concerns HTTP status codes. A JavaScript redirect alone does not transmit an HTTP code — it's the destination page that must return the correct code (404, 410, etc.).

If you redirect via JS to a page that displays "error" but returns a 200, Google will interpret this as valid content. The final HTTP code is what matters, not the content displayed to the user.

In what cases is this approach used?

This configuration appears frequently in JavaScript applications (React, Vue, Angular) where client-side routing handles errors. Instead of configuring the server to return native 404s, some developers prefer to redirect to a dedicated error page.

You also find this pattern in site migrations or redesigns where JS serves as an intermediary layer before final server configuration.

- Clustering reconstructs the complete chain of redirects, even mixing JS and HTTP

- The final HTTP code of the destination page is what counts for Google

- A JS redirect to a page returning 200 will be indexed as valid content

- This approach works but remains less optimal than native server handling

- Particularly relevant for SPAs and client-side applications

SEO Expert opinion

Is this statement consistent with field observations?

Yes, and it's a welcome confirmation. Testing effectively shows that Google manages to follow complex redirect chains, including those involving JavaScript. But — and this is where it gets tricky — processing speed isn't the same.

A server-side 301 redirect will be understood almost instantly. A JS redirect to a 404 can take several crawls before complete consolidation in the index. The final result is identical, the timing differs dramatically.

What nuances should be added to this statement?

Google says "it works", which is true. They don't say it's optimal. There's a fundamental difference between "Google can handle it" and "this is best practice".

Clustering requires additional crawl budget — Googlebot must follow the chain, execute the JS, crawl the destination page, then assemble it all. For a small site, negligible impact. For a site with millions of URLs, it matters.

[To verify]: Google remains evasive about the exact consolidation timeline. Field feedback varies from a few days to several weeks depending on the site's crawl frequency. Impossible to get a numerical guarantee.

In what scenarios does this approach really cause problems?

Let's be honest: if you have 50 URLs involved, nobody will notice the difference. The problem emerges at scale — e-commerce sites with thousands of permanently out-of-stock products, content platforms with massive rotation.

Another pitfall: JS redirects that fail silently. A bug in your code, and Google crawls a blank page returning 200. You think you have a 404, Google indexes nothing. With a server redirect, this type of incident doesn't exist.

Practical impact and recommendations

What should you do if your site already uses this configuration?

Start by auditing the complete chain. Verify that the destination page returns the expected HTTP code, not just a visual error display. Use Chrome DevTools or a tool like Screaming Frog in JavaScript mode to trace the complete path.

Then, monitoring. Add these URLs to Search Console and track their evolution. If Google continues to crawl them heavily weeks after implementation, either clustering isn't working correctly — or you have an internal linking problem pointing to them.

What errors must you absolutely avoid?

Never redirect via JS to a page returning 200 with dummy content. This is the most dangerous configuration: you think you're signaling an error, Google indexes a legitimate page with poor or duplicate content.

Also avoid overly long chains. If you redirect via JS to a page that itself redirects with a 301, you multiply friction points. Google will likely follow, but you're wasting crawl budget unnecessarily.

What is the optimal configuration to aim for?

The ideal remains native server-side handling. Configure your server or CDN to directly return the correct HTTP codes without passing through JavaScript. It's faster, more reliable, and consumes fewer resources — both server-side and crawl budget.

If you're constrained to JS (complex SPA architecture, technical limitations), ensure at minimum that server-side rendering (SSR) or static pre-generation handles HTTP codes correctly. Next.js, Nuxt, and similar tools allow this natively.

- Verify that destination pages return correct HTTP codes (404, 410, etc.)

- Trace the complete chain with a JavaScript-enabled crawler

- Monitor evolution in Search Console over several weeks

- Eliminate JS redirects to dummy 200 pages

- Clean up internal linking to stop pointing to these URLs

- Progressively migrate to native server-side handling when possible

- Document technical choices to prevent regressions

❓ Frequently Asked Questions

Une redirection JavaScript est-elle aussi rapide qu'une redirection 301 côté serveur ?

Si ma page affiche visuellement 'Erreur 404' mais retourne un code 200, que voit Google ?

Dois-je supprimer toutes mes redirections JavaScript existantes ?

Le clustering fonctionne-t-il pour des chaînes de 3+ redirections ?

Comment vérifier que Google a bien consolidé ma chaîne de redirections ?

🎥 From the same video 15

Other SEO insights extracted from this same Google Search Central video · published on 05/12/2024

🎥 Watch the full video on YouTube →

💬 Comments (0)

Be the first to comment.