Official statement

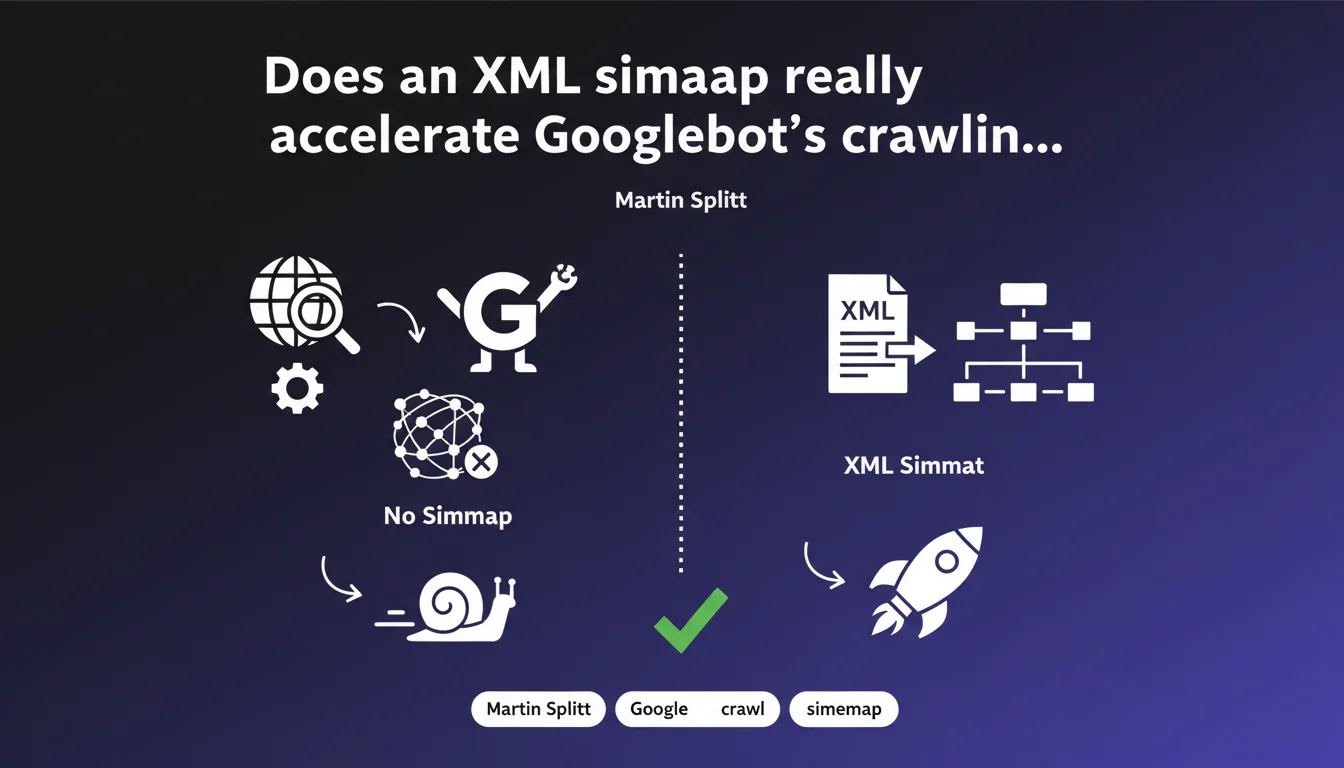

Martin Splitt confirms that XML sitemaps speed up page discovery by Googlebot and make crawling more efficient, especially on large websites. In practice, a sitemap is a signal that makes the crawler's work easier: not a guarantee of indexation, but a meaningful boost.

What you need to understand

Why does Google emphasize XML sitemaps so much?

The XML sitemap acts as a privileged access map for search engines. Instead of waiting for Googlebot to discover your URLs through internal linking or backlinks, you serve it the complete list of important pages directly.

For sites with thousands of pages — e-commerce, media outlets, directories — this function becomes critical. Without a sitemap, certain deep or poorly linked pages risk remaining invisible for weeks or even months.

What exactly does "more efficient exploration" mean?

Efficiency here is measured in discovery time and in how crawl resources are prioritized. The sitemap tells Google which pages deserve its attention and can carry metadata such as the last modification date (lastmod).

That said, a poorly designed sitemap — filled with useless URLs, redirects, or noindex pages — produces the opposite effect. It dilutes the signal and wastes crawl budget instead of saving it.

Does a sitemap guarantee indexation of all my pages?

No. Google repeats this constantly: submitting a URL via a sitemap does not guarantee indexation. The crawler visits, analyzes, then decides based on its own quality and relevance criteria.

The sitemap is a tool for discovery and suggestion, not a shortcut. If your content is duplicated, thin, or canonicalized elsewhere, it will remain out of the index even with a perfect sitemap.

- The XML sitemap accelerates discovery of new pages and important updates.

- It especially helps large or complex sites where internal linking alone isn't enough.

- Submitting a sitemap does not guarantee indexation; Google remains the sole judge of content quality.

- A poorly maintained sitemap (redirects, 404 errors, blocked pages) hurts more than it helps.

- Google ignores changefreq and priority, and uses lastmod only when it is consistently accurate, so don't overestimate the value of this metadata.

SEO Expert opinion

Does this statement really reflect real-world conditions?

Yes, overall. Observations show that sites submitting a clean sitemap see their new pages discovered much faster than those without one. On a site with several thousand pages, the difference is measured in hours or days, not weeks.

But be careful: Martin Splitt remains vague about "more efficient exploration." Efficient for whom? For the site, or for Google saving crawl on poorly prioritized pages? [To verify]: Google provides no precise metrics on the actual crawl budget gains linked to sitemaps.

In what cases does a sitemap become completely useless?

On a 10-page site with solid internal linking, a sitemap adds absolutely nothing. Googlebot will find everything on its own in two passes. Same for a 50-article blog with good internal linking.

More problematic: "catch-all" sitemaps that include admin pages, duplicate filters, paginated pages; in short, noise. In that case the sitemap not only serves no purpose, it also confuses the crawler and dilutes important signals.

What do we do with lastmod, changefreq, and priority metadata?

Google has confirmed multiple times that priority is completely ignored, and its sitemap documentation says the same about changefreq. Only lastmod has an impact, and even then only if it is consistently honest and reliable.

If you lie about lastmod to force recrawls, Google eventually detects the pattern and ignores the signal. Result: you lose overall credibility.
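
One way to keep lastmod honest is to change it only when the page content actually changes. The sketch below is a minimal illustration under that assumption; the function name `updated_lastmod` and the idea of storing a content hash next to each URL are hypothetical, not something described in the video.

```python
import hashlib
from datetime import date
from typing import Tuple


def updated_lastmod(content: str, stored_hash: str,
                    stored_lastmod: str, today: date) -> Tuple[str, str]:
    """Return (hash, lastmod); lastmod moves only when the content hash changes."""
    new_hash = hashlib.sha256(content.encode("utf-8")).hexdigest()
    if new_hash == stored_hash:
        # Content is unchanged: keep the previous date instead of bumping it.
        return stored_hash, stored_lastmod
    # Content changed: record the new hash and today's date (YYYY-MM-DD).
    return new_hash, today.isoformat()
```

Hashing the full HTML would also react to trivial template changes, so in practice you would probably hash only the main content of the page.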

Practical impact and recommendations

What should you do concretely to optimize your XML sitemap?

First, list only indexable pages: no noindex pages, no pages canonicalized to another URL, no redirects. Each entry in your sitemap must point to a 200 OK page you want indexed.
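
The filtering rule above can be sketched as follows. This is a minimal example, assuming you already have crawl data for each page; the `Page` fields and the function names are hypothetical and only serve the illustration.

```python
import xml.etree.ElementTree as ET
from dataclasses import dataclass
from typing import List, Optional

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


@dataclass
class Page:
    url: str
    status: int               # HTTP status returned by the page
    noindex: bool             # True if the page carries a noindex directive
    canonical: Optional[str]  # None means the page is self-canonical
    lastmod: str              # real modification date, YYYY-MM-DD


def is_indexable(page: Page) -> bool:
    """Keep only 200 OK pages without noindex that are canonical to themselves."""
    if page.status != 200 or page.noindex:
        return False
    return page.canonical is None or page.canonical == page.url


def build_sitemap(pages: List[Page]) -> str:
    """Serialize the indexable pages as a <urlset> document."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for page in pages:
        if not is_indexable(page):
            continue
        url = ET.SubElement(urlset, "url")
        ET.SubElement(url, "loc").text = page.url
        ET.SubElement(url, "lastmod").text = page.lastmod
    return ET.tostring(urlset, encoding="unicode")
```

Redirects, noindex pages, and pages canonicalized elsewhere never reach the output, so the sitemap stays limited to URLs you actually want indexed.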

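Second, segment your sitemaps once your site exceeds about 1,000 URLs. That threshold is a maintainability choice, not a technical limit: the sitemap protocol allows up to 50,000 URLs or 50 MB uncompressed per file. Create a sitemap index that points to multiple topic-specific sitemaps (products, categories, articles). This can help Google prioritize and makes maintenance easier.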
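
A sitemap index can be sketched like this. The file naming scheme (`sitemap-<section>-<n>.xml`) and the function names are assumptions made for the example; the 50,000-URL ceiling is the protocol limit per file, and you can pass a smaller `size` if you prefer smaller files.

```python
import xml.etree.ElementTree as ET
from typing import Dict, List

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"
MAX_URLS_PER_FILE = 50_000  # protocol limit per sitemap file


def chunk(urls: List[str], size: int = MAX_URLS_PER_FILE) -> List[List[str]]:
    """Split a URL list into consecutive parts of at most `size` entries."""
    return [urls[i:i + size] for i in range(0, len(urls), size)]


def plan_files(sections: Dict[str, List[str]], base_url: str,
               size: int = MAX_URLS_PER_FILE) -> Dict[str, List[str]]:
    """Map each sitemap file URL to the page URLs it should list."""
    files: Dict[str, List[str]] = {}
    for name, urls in sections.items():
        for n, part in enumerate(chunk(urls, size), start=1):
            files[f"{base_url}/sitemap-{name}-{n}.xml"] = part
    return files


def build_index(sitemap_urls: List[str]) -> str:
    """Serialize a <sitemapindex> pointing at each sitemap file."""
    index = ET.Element("sitemapindex", xmlns=SITEMAP_NS)
    for loc in sitemap_urls:
        sitemap = ET.SubElement(index, "sitemap")
        ET.SubElement(sitemap, "loc").text = loc
    return ET.tostring(index, encoding="unicode")
```

Splitting by section first and by size second keeps each file tied to one content type, which makes errors easier to trace back to a template or a data source.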

What mistakes should you absolutely avoid?

The classic mistake: submit a sitemap and never update it. Deleted pages remain listed, new pages are missing — Google loses trust in your signal.

Another trap: including URLs blocked by robots.txt or protected by a password. Google discovers them but cannot fetch the content, so these entries only generate errors and waste crawl resources.
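
A quick pre-publication check against robots.txt can be sketched with Python's standard `urllib.robotparser`. The function name is hypothetical, and the standard-library parser may not match Google's own parser in every case (for example with wildcard patterns), so treat the result as a first filter rather than a definitive answer.

```python
from typing import List
from urllib.robotparser import RobotFileParser


def allowed_for_googlebot(robots_txt: str, urls: List[str]) -> List[str]:
    """Return the URLs that the given robots.txt content lets Googlebot fetch."""
    parser = RobotFileParser()
    # parse() takes the file content as a list of lines, so no network call is made.
    parser.parse(robots_txt.splitlines())
    return [url for url in urls if parser.can_fetch("Googlebot", url)]
```

Running this on the candidate URL list before generating the sitemap keeps blocked paths out of the file in the first place.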

How do you verify that your sitemap is working correctly?

Use the Sitemaps section of Google Search Console. Google tells you how many URLs are discovered, how many are indexed, and most importantly, how many generate errors. As a rule of thumb, an error rate above 5% points to a structural problem.

Next, check your server logs: Is Googlebot visiting the URLs listed in your sitemap? If not, either they're blocked or Google judges them not worth the effort. Either way, investigation is needed.
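
The log check can be sketched as below, assuming access logs in the common "combined" format. The regular expression and the function name are assumptions for the example, and matching on the user-agent string alone is only a first pass, since that string can be spoofed.

```python
import re
from typing import Iterable, List, Set
from urllib.parse import urlsplit

# Request line, status, size, referrer, then user agent (combined log format).
LOG_LINE = re.compile(
    r'"(?:GET|HEAD) (?P<path>\S+) [^"]*" \d{3} \S+ "[^"]*" "(?P<ua>[^"]*)"'
)


def uncrawled_sitemap_paths(sitemap_urls: List[str],
                            log_lines: Iterable[str]) -> Set[str]:
    """Return sitemap paths that no Googlebot request in the logs has hit."""
    wanted = {urlsplit(url).path or "/" for url in sitemap_urls}
    seen: Set[str] = set()
    for line in log_lines:
        match = LOG_LINE.search(line)
        if match and "Googlebot" in match.group("ua"):
            # Query strings are dropped so /page?x=1 counts as /page.
            seen.add(urlsplit(match.group("path")).path)
    return wanted - seen
```

Paths that stay in the result over several weeks are the ones worth investigating first.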

- List only indexable pages (200 OK, no noindex, no canonical elsewhere).

- Segment sitemaps beyond 1,000 URLs via a sitemap index.

- Update the sitemap automatically with each content addition/removal.

- Exclude any URL blocked by robots.txt or protected by authentication.

- Regularly check errors in Search Console (Sitemaps section).

- Analyze server logs to confirm that Googlebot crawls the sitemap URLs.

- Don't lie about lastmod: indicate only actual modification dates.

❓ Frequently Asked Questions

Does an XML sitemap guarantee that my pages will be indexed?

Do I need a sitemap for a small 20-page site?

Should I include the lastmod, changefreq, and priority metadata?

How many URLs can an XML sitemap contain?

What should I do if Google detects many errors in my sitemap?

🎥 From the same video 7

Other SEO insights extracted from this same Google Search Central video · published on 16/11/2023
