Official statement

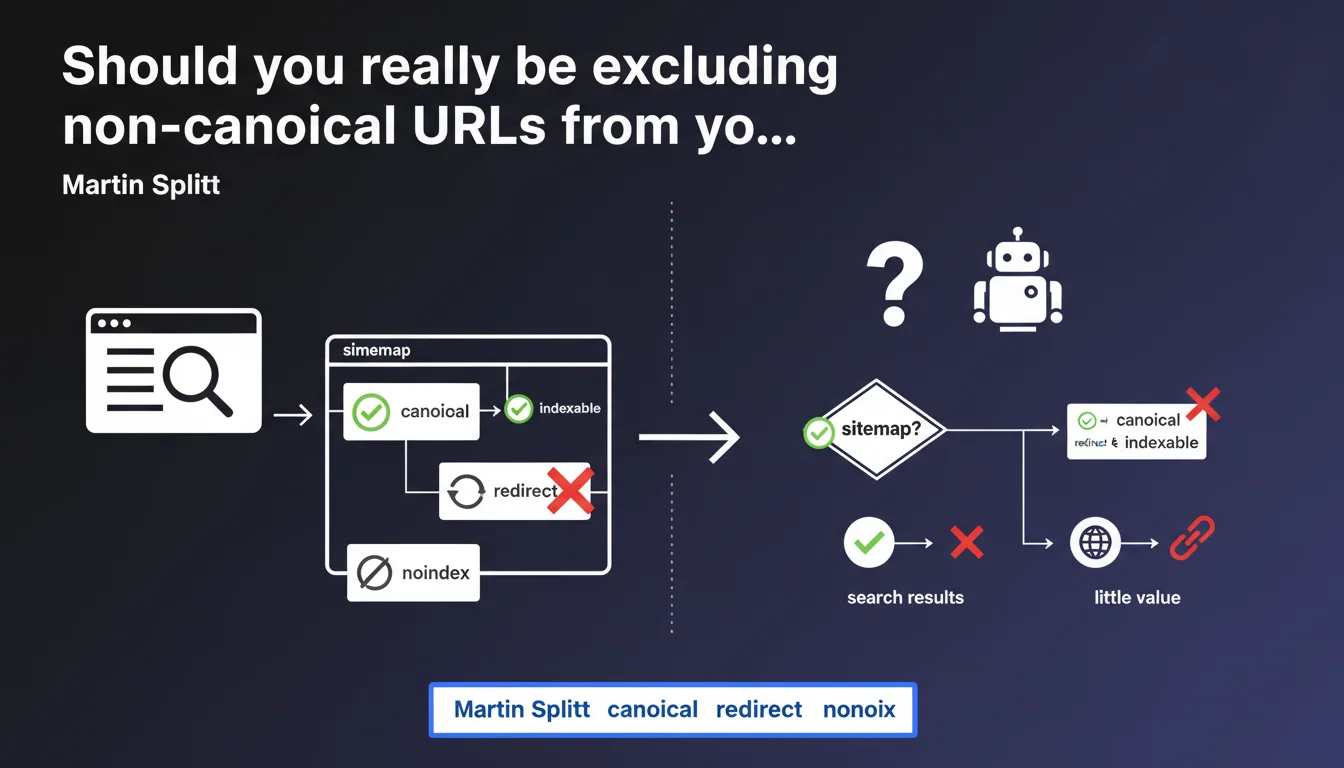

Google is crystal clear: sitemaps should contain only canonical and indexable URLs. Everything else — redirects, noindex pages, non-canonical variants — pollutes your sitemap and adds zero value. Most sites desperately need a cleanup.

What you need to understand

Why does Google keep hammering on what seems like basic stuff?

Because in reality, the majority of sitemaps are misconfigured. You'll find URLs that redirect, pages marked noindex, non-canonicalized parameter variants. Google has to sort through this mess, burning crawl budget for nothing.

The sitemap is supposed to make Googlebot's job easier, not harder. When you stuff it with URLs that shouldn't be indexed, you're sending mixed signals: "crawl this page" on one hand, "don't index it" on the other.

What exactly counts as an indexable URL in this context?

An indexable URL is one that returns a 200 status code, has no noindex tag, isn't blocked in robots.txt, and represents the canonical version (either self-referencing or without any canonical tag if it's the only version).

If your URL redirects to another one with a 301 or 302, it's not indexable. If it has a canonical pointing elsewhere, it's not the canonical version. Simple — and yet.
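Those four conditions translate directly into a checklist. Here's a minimal Python sketch, assuming you've already gathered the facts for each URL. The `PageFacts` structure and both function names are hypothetical, and the canonical comparison is a plain string match (no normalization of trailing slashes, case, or protocol).

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class PageFacts:
    """Facts collected about one sitemap URL (illustrative structure)."""
    url: str
    status_code: int
    noindex: bool = False
    blocked_by_robots: bool = False
    canonical: Optional[str] = None  # None means the page has no canonical tag


def indexability_issues(page: PageFacts) -> List[str]:
    """Return the reasons a URL does not belong in the sitemap (empty if none)."""
    issues = []
    if page.status_code != 200:
        issues.append(f"status {page.status_code}")
    if page.noindex:
        issues.append("noindex")
    if page.blocked_by_robots:
        issues.append("blocked by robots.txt")
    # A missing canonical tag or a self-referencing one are both acceptable.
    if page.canonical is not None and page.canonical != page.url:
        issues.append(f"canonical points to {page.canonical}")
    return issues


def is_indexable(page: PageFacts) -> bool:
    return not indexability_issues(page)


if __name__ == "__main__":
    redirected = PageFacts("https://example.com/old-page", 301)
    print(indexability_issues(redirected))  # ['status 301']
```

Returning the list of reasons, rather than a bare true/false, makes it easier to group the cleanup work by problem type afterwards.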

What are the real consequences of a polluted sitemap?

Googlebot wastes time crawling pointless pages. Your crawl budget gets diluted, especially on large sites. Result: strategic pages might get crawled less frequently.

Another nasty side effect: a sitemap full of errors can lead Google to view it as unreliable, or even partially ignore it. You lose the prioritization advantage it's supposed to provide.

- Put only canonical URLs in your sitemap

- Exclude any noindex URLs or ones that redirect

- Avoid non-canonicalized parameter variants

- Regularly verify consistency between sitemap and indexation directives

- Treat the sitemap as a prioritization signal, not a dumping ground

SEO Expert opinion

Is this rule actually followed by major web players?

Spoiler: nope. A quick audit of sitemaps from well-known sites reveals thousands of redirect or noindex URLs. Even big tech platforms send contradictory signals.

That said, and here's where it gets interesting, Google is capable of handling this pollution. It won't penalize your site because 10% of the URLs in your sitemap return a 301. But you lose the crawl optimization effect the sitemap should deliver.

Are there cases where including a non-canonical URL makes sense?

Honestly? No. Some SEOs argue that including variants helps Google discover the canonical version faster. That's flawed reasoning: if your internal linking is solid, Google will find the canonical without help.

Others deliberately include temporary noindex pages to get them crawled faster. Again, that's a crutch. If a page needs to be crawled quickly, it should be linked from an important page — not snuck into a sitemap.

Is Google transparent about the real impact of this recommendation?

As usual, the statement stays vague. Martin Splitt says non-indexable URLs are "of little value." Little value, or genuinely harmful? [Needs verification]

Hard data is missing. What percentage of problematic URLs starts to affect sitemap efficiency? Google won't say. We're flying blind, relying on field reports suggesting that beyond 15-20% useless URLs, the crawl impact becomes measurable.
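To see where your own sitemap sits relative to that range, the arithmetic is simple. A small sketch, assuming an audit result that maps each sitemap URL to the list of issues found for it (a hypothetical structure). The 15% default threshold is taken from the field reports mentioned above, not from anything Google has published.

```python
from typing import Dict, List


def pollution_rate(issues_by_url: Dict[str, List[str]]) -> float:
    """Share of sitemap URLs that have at least one issue, between 0.0 and 1.0."""
    if not issues_by_url:
        return 0.0
    flagged = sum(1 for issues in issues_by_url.values() if issues)
    return flagged / len(issues_by_url)


def needs_cleanup(issues_by_url: Dict[str, List[str]], threshold: float = 0.15) -> bool:
    """True when the share of problematic URLs reaches the (assumed) threshold."""
    return pollution_rate(issues_by_url) >= threshold


if __name__ == "__main__":
    audit = {
        "https://example.com/": [],
        "https://example.com/shoes": [],
        "https://example.com/old-shoes": ["status 301"],
        "https://example.com/cart": ["noindex"],
    }
    print(f"{pollution_rate(audit):.0%} of sitemap URLs are problematic")
```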

Practical impact and recommendations

How do you audit your current sitemap?

Start by extracting all URLs from your sitemap. Use Screaming Frog, Oncrawl, or a Python script built on common third-party libraries (requests, BeautifulSoup).
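For the extraction step, here's a minimal sketch that uses Python's built-in `xml.etree.ElementTree` instead of BeautifulSoup, so it runs without extra installs. It assumes a plain `<urlset>` sitemap (not a sitemap index) already downloaded into a string. `extract_sitemap_urls` is an illustrative name.

```python
import xml.etree.ElementTree as ET
from typing import List

# Namespace defined by the sitemaps.org protocol.
SITEMAP_NS = {"sm": "http://www.sitemaps.org/schemas/sitemap/0.9"}


def extract_sitemap_urls(xml_text: str) -> List[str]:
    """Return every <loc> value found under <url> entries of a urlset sitemap."""
    root = ET.fromstring(xml_text)
    return [
        loc.text.strip()
        for loc in root.findall("sm:url/sm:loc", SITEMAP_NS)
        if loc.text and loc.text.strip()
    ]


if __name__ == "__main__":
    sample = (
        '<urlset xmlns="http://www.sitemaps.org/schemas/sitemap/0.9">'
        "<url><loc>https://example.com/</loc></url>"
        "<url><loc>https://example.com/shoes</loc></url>"
        "</urlset>"
    )
    for url in extract_sitemap_urls(sample):
        print(url)
```

If your site uses a sitemap index, the same approach applies one level up: read the `<sitemap><loc>` entries first, then run this function on each child file.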

Then crawl those URLs and verify: HTTP status code, presence of canonical tag, indexation directive (noindex or not). Cross-reference with your server logs to see if Google actually crawls what you're telling it to.
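Two of those checks (canonical tag and noindex directive) can be read straight from the page source. The sketch below uses the standard library's `html.parser` and assumes you've already fetched the HTML and, optionally, the `X-Robots-Tag` response header. `read_signals` and `HeadSignals` are hypothetical names. It only sees tags present in the raw HTML, so directives injected by JavaScript would be missed.

```python
from html.parser import HTMLParser
from typing import Optional, Tuple


class HeadSignals(HTMLParser):
    """Collects the canonical URL and any meta robots noindex from a page."""

    def __init__(self):
        super().__init__()
        self.canonical: Optional[str] = None
        self.noindex = False

    def handle_starttag(self, tag, attrs):
        # HTMLParser lowercases tag and attribute names for us.
        attr = {name: (value or "") for name, value in attrs}
        if tag == "link" and "canonical" in attr.get("rel", "").lower().split():
            self.canonical = attr.get("href") or None
        elif tag == "meta" and attr.get("name", "").lower() in ("robots", "googlebot"):
            if "noindex" in attr.get("content", "").lower():
                self.noindex = True


def read_signals(html: str, x_robots_tag: str = "") -> Tuple[Optional[str], bool]:
    """Return (canonical_url_or_None, noindex_flag) for one page."""
    parser = HeadSignals()
    parser.feed(html)
    noindex = parser.noindex or "noindex" in x_robots_tag.lower()
    return parser.canonical, noindex


if __name__ == "__main__":
    page = (
        "<html><head>"
        '<link rel="canonical" href="https://example.com/shoes">'
        '<meta name="robots" content="noindex, follow">'
        "</head><body></body></html>"
    )
    print(read_signals(page))  # ('https://example.com/shoes', True)
```

The HTTP status code still has to come from the request itself, with redirects not followed, otherwise a 301 silently shows up as the 200 of its destination.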

What should you actually do to clean up a polluted sitemap?

Remove every URL returning something other than 200. Strip out pages with a canonical pointing elsewhere. Systematically exclude pages marked noindex.

If you have thousands of URLs, automate the process. Most CMS platforms let you set filtering rules. Shopify, for instance, includes filtered collections by default — you need to exclude them manually.

- Crawl your sitemap with an SEO tool (Screaming Frog, Sitebulb, Oncrawl)

- Identify URLs returning 3XX, 4XX, 5XX codes and remove them

- Check for noindex tags and exclude those pages

- Verify that each sitemap URL is truly the canonical version

- Configure your CMS to prevent auto-generation of non-indexable URLs

- Submit the cleaned sitemap via Google Search Console

- Monitor coverage rate and crawl trends in GSC
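Once the audit is done, regenerating the file is the easy part. A minimal sketch, assuming the audit produced a mapping from each URL to its list of issues (a hypothetical structure) and that you only need `<loc>` entries. `build_clean_sitemap` is an illustrative name, and this version ignores the protocol's per-file size limits.

```python
import xml.etree.ElementTree as ET
from typing import Dict, List

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_clean_sitemap(issues_by_url: Dict[str, List[str]]) -> str:
    """Serialize a urlset containing only the URLs that have no issues."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for url, issues in issues_by_url.items():
        if not issues:
            entry = ET.SubElement(urlset, "url")
            # ElementTree escapes characters such as & when serializing.
            ET.SubElement(entry, "loc").text = url
    body = ET.tostring(urlset, encoding="unicode")
    return '<?xml version="1.0" encoding="UTF-8"?>\n' + body


if __name__ == "__main__":
    audit = {
        "https://example.com/": [],
        "https://example.com/old-shoes": ["status 301"],
        "https://example.com/shoes?color=red&size=42": [],
    }
    print(build_clean_sitemap(audit))
```

Generating the sitemap from audit results, instead of hand-editing the existing file, means the same script can run on a schedule and keep the file clean as the site changes.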

❓ Frequently Asked Questions

Can you have multiple sitemaps for the same site?

What happens if you don't provide a sitemap at all?

Should images and videos go in the main sitemap?

Should paginated pages be included in the sitemap?

How often should the sitemap be updated?

🎥 From the same video 7

Other SEO insights extracted from this same Google Search Central video · published on 16/11/2023
