Official statement

Other statements from this video (7)

- Should you really exclude non-canonical URLs from your XML sitemap?

- Is an XML sitemap really essential for improving how your site is crawled?

- Do you really need a sitemap to get indexed by Google?

- Should you really limit lastmod updates in your XML sitemaps?

- What are the real technical limits of XML sitemap files?

- Should you really split large sitemaps into multiple files?

- What types of content should you really include in your sitemaps?

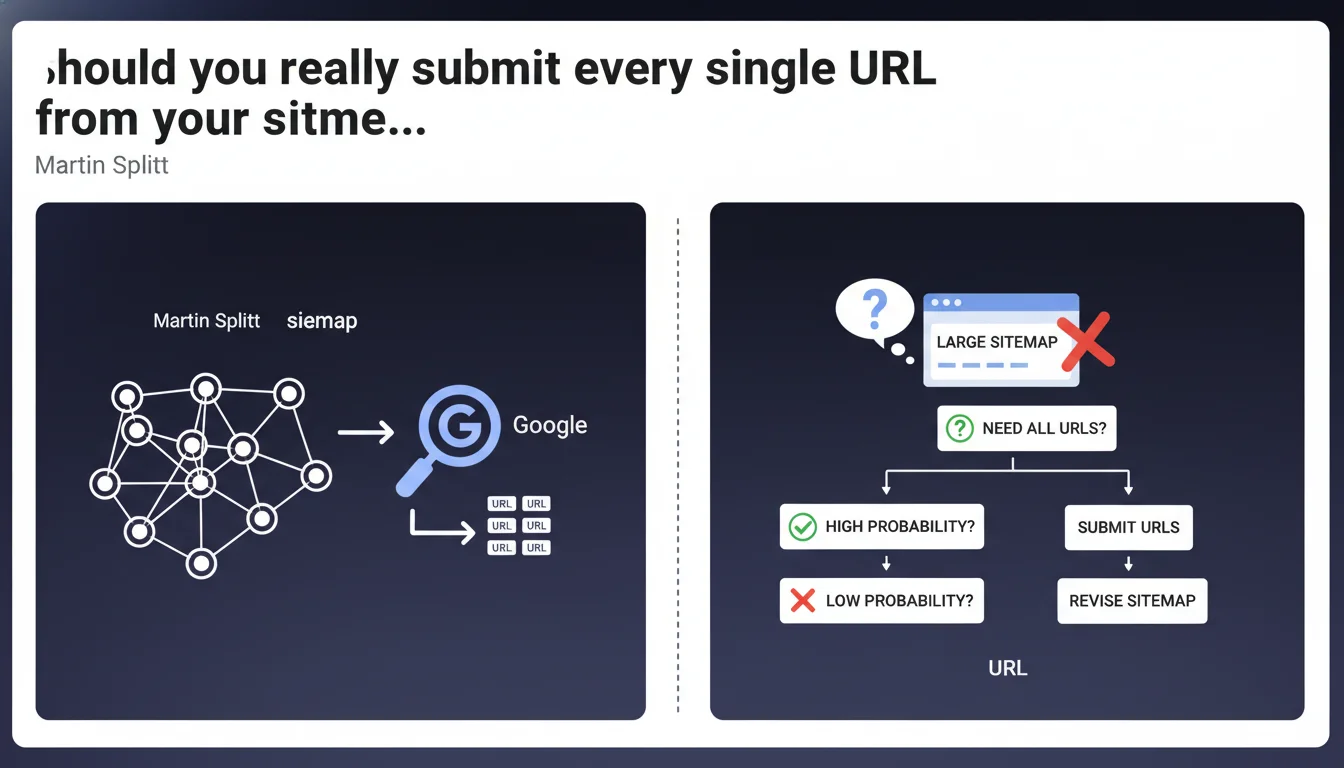

Martin Splitt restates something obvious yet often overlooked: before splitting a massive sitemap, ask yourself whether you actually need all those URLs indexed. Google doesn't guarantee indexation of every submitted URL, and an oversized sitemap filled with low-value pages dilutes the signal you send to the search engine. Quality beats quantity.

What you need to understand

Why does Google push so hard for careful URL selection in sitemaps?

An XML sitemap is meant to signal to Google the important pages you want indexed. It's not an exhaustive inventory of every URL on your site. Yet many sites auto-generate sitemaps containing thousands of pages, many of which don't deserve to be indexed at all.

Google allocates a finite crawl budget to each site, a constraint that matters most on large sites. Submitting thousands of low-relevance URLs (duplicate pages, parameter variations, thin content) wastes that budget and muddies the signal about what really matters.
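To see how parameter variations inflate a URL list, here is a minimal Python sketch that collapses variants by dropping query parameters assumed not to change page content. The `IGNORED_PARAMS` set and the function names are hypothetical; which parameters are actually safe to ignore depends entirely on your site.

```python
from urllib.parse import urlsplit, urlunsplit, parse_qsl, urlencode

# Hypothetical set of parameters assumed not to change page content.
IGNORED_PARAMS = {"utm_source", "utm_medium", "utm_campaign", "sessionid", "sort"}

def normalize(url: str) -> str:
    """Drop ignored parameters and the fragment, and sort the remaining
    parameters so that equivalent variants produce the same string."""
    parts = urlsplit(url)
    kept = sorted(
        (key, value)
        for key, value in parse_qsl(parts.query, keep_blank_values=True)
        if key not in IGNORED_PARAMS
    )
    return urlunsplit((parts.scheme, parts.netloc, parts.path, urlencode(kept), ""))

def distinct_pages(urls):
    """Return the set of normalized URLs, i.e. the pages that actually differ."""
    return {normalize(u) for u in urls}

urls = [
    "https://example.com/shoes?utm_source=mail",
    "https://example.com/shoes?sort=price",
    "https://example.com/shoes",
    "https://example.com/shoes?color=red",
]
print(len(urls), "->", len(distinct_pages(urls)))  # 4 -> 2
```

Here four submitted URLs reduce to two distinct pages.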

What causes a sitemap to balloon in size?

A sitemap can grow artificially for several reasons: unfiltered automated generation, inclusion of paginated or filtered pages, poorly managed language variants, or outdated archives with no real value. In e-commerce or media contexts, it's not unusual to see sitemap sets exceed 50,000 URLs (the per-file limit of the sitemap protocol) with no editorial selection at all.
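A rough way to see which of these causes dominates is to bucket sitemap URLs by pattern. This sketch assumes hypothetical URL conventions (`?page=`, `/page/N`, filter parameters, `/search`, `/archive/`); replace the regular expressions with your own site's patterns.

```python
import re
from collections import Counter

# Hypothetical URL patterns, checked in order; adapt them to your own site.
CATEGORY_PATTERNS = [
    ("pagination", re.compile(r"[?&]page=\d+|/page/\d+")),
    ("filter", re.compile(r"[?&](color|size|brand|price)=")),
    ("internal_search", re.compile(r"/search\b|[?&]q=")),
    ("archive", re.compile(r"/archive/")),
]

def categorize(url: str) -> str:
    """Return the first matching category, or 'content' if nothing matches."""
    for name, pattern in CATEGORY_PATTERNS:
        if pattern.search(url):
            return name
    return "content"

def breakdown(urls):
    """Count sitemap URLs per category to see what inflates the file."""
    return Counter(categorize(u) for u in urls)

urls = [
    "https://example.com/blog/page/3",
    "https://example.com/shoes?color=red",
    "https://example.com/search?q=boots",
    "https://example.com/archive/2009/",
    "https://example.com/guide",
]
print(breakdown(urls).most_common())
```

On a real sitemap, a large count outside the `content` bucket is a strong hint about where to cut.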

The trap? Believing that more URLs means more visibility. In reality, Google indexes what it deems useful for users, not what you blindly submit to it.

What are the actual odds they'll all get indexed?

This is the key question Splitt raises. Google never commits to indexing an entire sitemap. Indexation depends on content quality, page popularity, information freshness, and the engine's ability to allocate crawl budget to your site.

If you submit 100,000 URLs and only 20,000 actually get indexed, you have a structural problem, not a sitemap problem. Splitting that sitemap into 10 files of 10,000 URLs each won't solve anything if 80% of those pages don't deserve to be indexed.
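A quick worked check with the figures from the paragraph above: write $S$ for URLs submitted and $I$ for URLs indexed, with $S_i$ and $I_i$ the counts in file $i$ after an illustrative split into ten files. Splitting only redistributes the same URLs, so both sums, and therefore the rate, stay the same.

```latex
r = \frac{I}{S} = \frac{20\,000}{100\,000} = 0.20
\qquad\longrightarrow\qquad
r_{\text{split}} = \frac{\sum_{i=1}^{10} I_i}{\sum_{i=1}^{10} S_i}
                 = \frac{20\,000}{10 \times 10\,000} = 0.20
```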

- A sitemap doesn't guarantee indexation, it signals intent

- Google prioritizes high-value pages and content with strong user demand

- An oversized sitemap dilutes your signal and complicates crawler work

- Before splitting, do a ruthless audit of which URLs to submit

SEO Expert opinion

Does this advice align with real-world observations?

Absolutely. SEO audits regularly reveal sites where over 50% of sitemap-submitted URLs are never indexed. In extreme cases, sites submit 200,000 URLs for only 30,000 pages actually present in Google's index.

This massive gap usually reflects poorly configured automation — sitemaps generated by a CMS without filtering, inclusion of internal search pages, e-commerce filters, or syndicated content. Splitt's advice isn't coming out of nowhere; it answers a common and counterproductive practice.

What nuances should we add to this recommendation?

Splitt remains vague about what makes a URL necessary: he gives no quantitative criteria or precise methodology. [To verify]: at what share of non-indexed URLs does a sitemap become a problem? Google has never said so clearly.

The challenge for practitioners is evaluating this "probability of indexation." Without access to Google's internal metrics, we rely on proxies: the indexation rate in Search Console, how quickly new URLs are discovered, and whether a page appears in the index (site: queries give only a rough picture; the URL Inspection tool is more reliable for individual URLs). But no official signal says "this page has a 10% chance of being indexed."

When should you still submit large volumes?

For news sites or platforms with fresh content, submitting large volumes can be justified: freshness trumps crawl depth in these cases. Media outlets, aggregators, and event platforms need to signal thousands of new URLs daily.

But even then, curation matters: articles vs. ancillary pages, original content vs. syndicated republishing. If your site generates 5,000 URLs daily but 4,500 are variants or recycled content, Splitt's advice applies just as much.
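One way to apply that curation to a high-volume feed is to keep only entries that are their own canonical and are not syndicated copies. The `Entry` fields, including the `syndicated` flag, are hypothetical stand-ins for whatever your CMS exposes; this is a sketch, not a prescribed filter. If it caught all 4,500 variants and recycled items in the example above, 500 URLs (10%) would remain.

```python
from dataclasses import dataclass

@dataclass
class Entry:
    url: str
    canonical: str    # canonical URL declared by the page
    syndicated: bool  # hypothetical flag: republished from another outlet

def curate(entries):
    """Keep only self-canonical, original entries for the sitemap."""
    return [e.url for e in entries if e.canonical == e.url and not e.syndicated]

daily = [
    Entry("https://news.example/a1", "https://news.example/a1", False),
    Entry("https://news.example/a1?amp=1", "https://news.example/a1", False),
    Entry("https://news.example/wire-123", "https://news.example/wire-123", True),
    Entry("https://news.example/a2", "https://news.example/a2", False),
]
print(curate(daily))  # ['https://news.example/a1', 'https://news.example/a2']
```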

Practical impact and recommendations

What concrete steps should you take before splitting a sitemap?

First step: audit how many of your currently submitted URLs are actually indexed. In Google Search Console, compare submitted and indexed counts; the Page indexing report can be filtered to a specific sitemap. If you're submitting 50,000 URLs but only 15,000 are indexed, you have a quality problem, not a volume problem.
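A per-sitemap breakdown shows which file drags the overall rate down. The sketch below assumes you have copied the submitted and indexed counts for each sitemap into a list by hand; the file names and figures are illustrative, picked to add up to the 50,000 / 15,000 example, not real data.

```python
def per_sitemap_rates(rows):
    """rows: (sitemap_name, submitted, indexed) tuples.
    Returns (name, rate) pairs, worst rate first."""
    rates = [(name, indexed / submitted) for name, submitted, indexed in rows if submitted > 0]
    return sorted(rates, key=lambda pair: pair[1])

def overall_rate(rows):
    """Indexed URLs divided by submitted URLs across all sitemaps."""
    submitted = sum(s for _, s, _ in rows)
    indexed = sum(i for _, _, i in rows)
    return indexed / submitted

# Illustrative figures only (50,000 submitted / 15,000 indexed in total).
rows = [
    ("products.xml", 30_000, 11_000),
    ("filters.xml", 15_000, 500),
    ("blog.xml", 5_000, 3_500),
]
print(f"overall: {overall_rate(rows):.0%}")  # overall: 30%
for name, rate in per_sitemap_rates(rows):
    print(f"{name}: {rate:.0%}")
# filters.xml: 3%
# products.xml: 37%
# blog.xml: 70%
```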

Next, identify the URL categories artificially inflating your sitemap: filter pages, old archives, thin content, parameterized variants. Ask yourself: does this page deliver unique value? If the answer is no, remove it from your sitemap.
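For the "unique value" question, one cheap first pass is to detect pages whose main text is identical once case and whitespace are normalized. This Python sketch assumes you already have each URL's extracted main text in a dict; it only catches exact duplicates, so near-duplicates would need a fuzzier technique such as shingling.

```python
import hashlib
import re
from collections import defaultdict

def fingerprint(text: str) -> str:
    """SHA-256 of the text with whitespace collapsed and case folded."""
    normalized = re.sub(r"\s+", " ", text).strip().lower()
    return hashlib.sha256(normalized.encode("utf-8")).hexdigest()

def duplicate_groups(pages):
    """pages maps URL -> extracted main text.
    Returns sorted lists of URLs whose text is identical after normalization."""
    groups = defaultdict(list)
    for url, text in pages.items():
        groups[fingerprint(text)].append(url)
    return [sorted(urls) for urls in groups.values() if len(urls) > 1]

pages = {
    "/shoes/red-42": "Red shoes,  size 42",
    "/shoes/red-42?ref=home": "red shoes, size 42",
    "/boots/blue": "Blue boots",
}
print(duplicate_groups(pages))  # [['/shoes/red-42', '/shoes/red-42?ref=home']]
```

In each group, keep the canonical URL in the sitemap and drop the others.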

What mistakes should you avoid when cleaning up your sitemap?

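Don't confuse "removing from the sitemap" with "blocking crawl." A page absent from your sitemap can still be discovered and indexed via internal links. Conversely, listing a page in your sitemap does not override other directives: a page with a noindex directive will not be indexed, and a page blocked by robots.txt cannot be crawled, so at best Google indexes the bare URL without its content (and it cannot see a noindex placed on that page).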
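It helps to check that no sitemap URL contradicts your own directives. This sketch uses Python's standard `urllib.robotparser` to flag URLs disallowed by a robots.txt body, and `html.parser` to spot a robots meta tag containing noindex. The robots.txt content and URLs are hypothetical; `robotparser` does not implement Google's `*` and `$` path wildcards, and the meta check ignores the `X-Robots-Tag` HTTP header, so treat the result as a rough check.

```python
from html.parser import HTMLParser
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt body.
ROBOTS_TXT = """\
User-agent: *
Disallow: /search
Disallow: /cart/
"""

def blocked_sitemap_urls(robots_txt, urls, user_agent="Googlebot"):
    """Return the sitemap URLs that robots.txt forbids user_agent to fetch."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [u for u in urls if not parser.can_fetch(user_agent, u)]

class _RobotsMetaFinder(HTMLParser):
    """Sets .noindex when a robots or googlebot meta tag contains 'noindex'."""
    def __init__(self):
        super().__init__()
        self.noindex = False

    def handle_starttag(self, tag, attrs):
        attributes = dict(attrs)
        name = (attributes.get("name") or "").lower()
        content = (attributes.get("content") or "").lower()
        if tag == "meta" and name in ("robots", "googlebot") and "noindex" in content:
            self.noindex = True

def has_noindex_meta(html):
    finder = _RobotsMetaFinder()
    finder.feed(html)
    return finder.noindex

sitemap_urls = [
    "https://example.com/shoes",
    "https://example.com/search?q=boots",
    "https://example.com/cart/checkout",
]
print(blocked_sitemap_urls(ROBOTS_TXT, sitemap_urls))
print(has_noindex_meta('<head><meta name="robots" content="noindex, follow"></head>'))  # True
```

Any URL flagged by either check should either leave the sitemap or have its directive reconsidered.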

Also avoid removing pages that generate organic traffic, even marginal traffic. Cross-reference Search Console data with your analytics before making cuts. A URL might be indexed without your knowledge and attract a few strategic visits.
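The cross-check can be as simple as splitting removal candidates by organic clicks. This sketch assumes `clicks_by_url` was built from a performance export joined with analytics data; the function name and the `min_clicks` cutoff of 1 are hypothetical defaults, not recommendations.

```python
def split_candidates(candidates, clicks_by_url, min_clicks=1):
    """Separate removal candidates into (remove, keep).

    clicks_by_url maps URL -> organic clicks over the review period.
    URLs at or above min_clicks stay in the sitemap."""
    remove, keep = [], []
    for url in sorted(candidates):
        (keep if clicks_by_url.get(url, 0) >= min_clicks else remove).append(url)
    return remove, keep

candidates = {
    "https://example.com/tag/misc",
    "https://example.com/archive/2012",
    "https://example.com/old-guide",
}
clicks = {"https://example.com/old-guide": 14}  # illustrative figure
remove, keep = split_candidates(candidates, clicks)
print(remove)  # ['https://example.com/archive/2012', 'https://example.com/tag/misc']
print(keep)    # ['https://example.com/old-guide']
```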

How can you verify your site meets this recommendation?

Calculate your indexation rate: indexed URLs / submitted sitemap URLs. As a rule of thumb (these are practitioner thresholds, not figures published by Google), a ratio below 50% signals a problem and one below 30% is critical.
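The thresholds in the paragraph above translate directly into code. They mirror the 50% and 30% figures, which are practitioner heuristics rather than Google values; the function name is hypothetical.

```python
def indexation_status(indexed: int, submitted: int):
    """Return (rate, label) using the 50% / 30% heuristics from this section.
    These cutoffs are rules of thumb, not Google figures."""
    if submitted <= 0:
        raise ValueError("submitted must be a positive count")
    rate = indexed / submitted
    if rate < 0.30:
        return rate, "critical"
    if rate < 0.50:
        return rate, "problem"
    return rate, "ok"

print(indexation_status(15_000, 50_000))   # (0.3, 'problem')
print(indexation_status(20_000, 100_000))  # (0.2, 'critical')
```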

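Use the Page indexing report in Search Console (formerly the Coverage report) to find submitted URLs that are not indexed. Google lists a reason for each group, such as "Duplicate without user-selected canonical", "Crawled – currently not indexed", "Discovered – currently not indexed" or "Blocked by robots.txt". These statuses help you prioritize cleanup.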
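To prioritize, count non-indexed URLs per reason. This sketch assumes a CSV export with hypothetical `url` and `reason` columns (adapt the names to the file you actually download); the rows are invented examples that reuse status labels from the Page indexing report.

```python
import csv
import io
from collections import Counter

# Invented sample rows; the column names "url" and "reason" are assumptions.
SAMPLE_EXPORT = """url,reason
https://example.com/shoes?color=red,Duplicate without user-selected canonical
https://example.com/tag/misc,Crawled – currently not indexed
https://example.com/search?q=boots,Blocked by robots.txt
https://example.com/shoes?size=42,Duplicate without user-selected canonical
"""

def reasons_by_volume(csv_text):
    """Return (reason, count) pairs, most frequent first."""
    rows = csv.DictReader(io.StringIO(csv_text))
    return Counter(row["reason"] for row in rows).most_common()

for reason, count in reasons_by_volume(SAMPLE_EXPORT):
    print(f"{count:>5}  {reason}")
```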

- Audit current indexation rate via Search Console

- Identify recurring categories of non-indexed URLs

- Remove URLs with no unique added value from your sitemap

- Verify that removed pages remain accessible through internal linking if needed

- Monitor post-cleanup impact on crawl budget and indexation

- Set up automatic filters in your sitemap generation process
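The last checklist item, automatic filtering at generation time, could look like this: URLs pass through a `keep` predicate before being written, and only the survivors are split into files of at most 50,000 URLs, the per-file limit in the sitemap protocol (which also caps each file at 50 MB uncompressed; this sketch does not check size). `build_sitemaps` and the example predicate are hypothetical; `xml.etree.ElementTree` escapes characters such as `&` in `<loc>` values.

```python
import xml.etree.ElementTree as ET

NS = "http://www.sitemaps.org/schemas/sitemap/0.9"
MAX_URLS_PER_FILE = 50_000  # sitemap protocol limit per file

def build_sitemaps(urls, keep):
    """Filter URLs with `keep`, then split the survivors into <urlset>
    documents of at most MAX_URLS_PER_FILE entries. Returns XML strings."""
    selected = [u for u in urls if keep(u)]
    documents = []
    for start in range(0, len(selected), MAX_URLS_PER_FILE):
        urlset = ET.Element("urlset", xmlns=NS)
        for u in selected[start:start + MAX_URLS_PER_FILE]:
            ET.SubElement(ET.SubElement(urlset, "url"), "loc").text = u
        documents.append(ET.tostring(urlset, encoding="unicode"))
    return documents

docs = build_sitemaps(
    ["https://example.com/guide", "https://example.com/guide?sort=asc", "https://example.com/faq"],
    keep=lambda u: "?" not in u,  # hypothetical rule: skip parameterized URLs
)
print(len(docs), docs[0].count("<loc>"))  # 1 2
```

Filtering before chunking keeps the order Splitt suggests: decide what deserves indexing first, split only what remains.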

❓ Frequently Asked Questions

What is the maximum number of URLs an XML sitemap should contain?

Can removing URLs from the sitemap get them deindexed?

How do you know whether a URL deserves a place in the sitemap?

Should you create several sitemaps by category or a single large one?

What is the impact on crawl budget if I cut my sitemap in half?

🎥 From the same video 7

Other SEO insights extracted from this same Google Search Central video · published on 16/11/2023
