Official statement

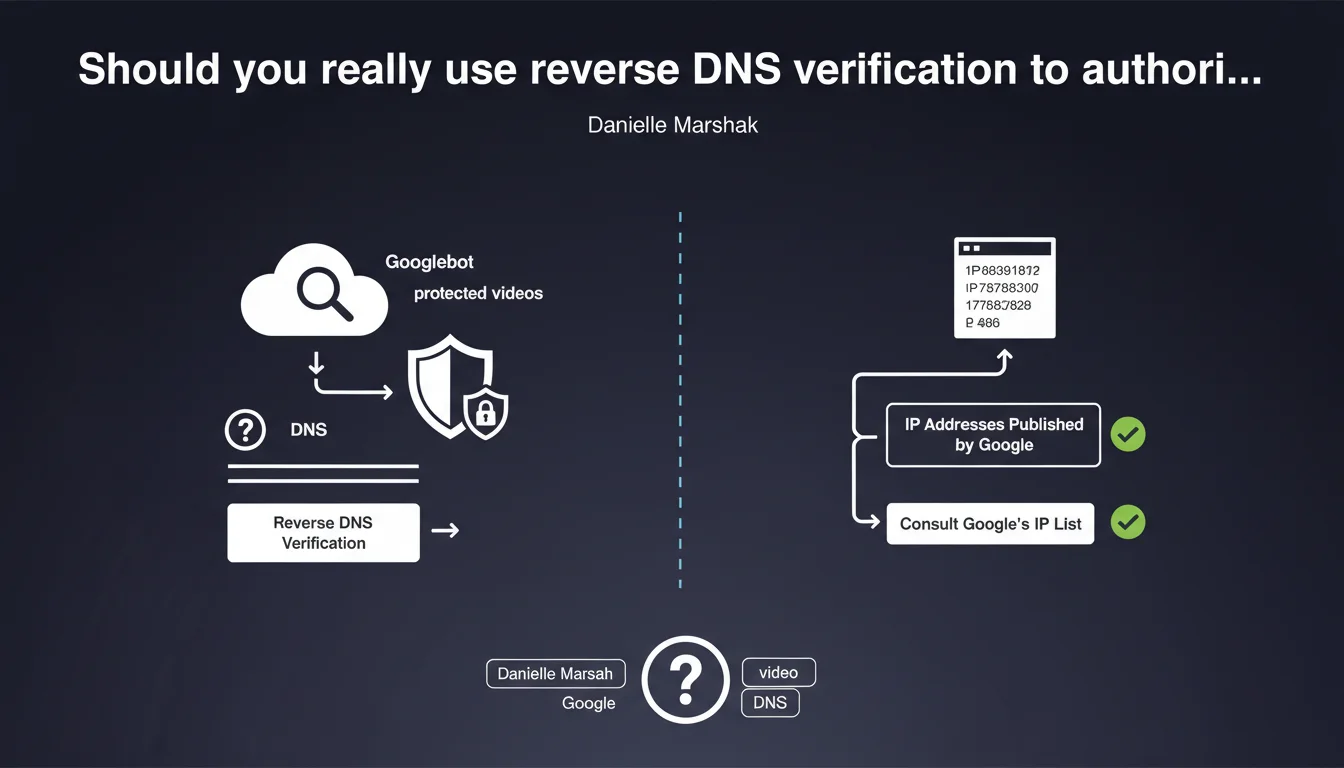

Google recommends using reverse DNS verification or its published IP ranges to authorize Googlebot specifically to access protected videos. Either method confirms that a bot claiming to be from Google is genuine. This is particularly relevant for sites that use authentication systems or access restrictions on video resources.

What you need to understand

Why is Google talking about reverse DNS verification now?

This recommendation targets a specific problem: video content protected by authentication that Googlebot cannot crawl properly. If your site requires a login or authorization to access videos, the bot cannot discover or index them.

Reverse DNS verification allows you to validate the crawler's true identity. In concrete terms, instead of simply reading the User-Agent (which is easily spoofed), you query DNS to confirm that the bot's IP actually belongs to Google. It's a double-verification system: IP → hostname → IP.

How does this differ from simple IP verification?

Checking the IP ranges published by Google is a more direct method but less flexible. Google can change its IP ranges without notice, which would force you to regularly update your whitelists.

Reverse DNS verification, on the other hand, adapts automatically. Even if Google adds new servers, as long as they respond correctly to the DNS verification process, your system will recognize them as legitimate.

Which sites are truly affected by this recommendation?

Let's be honest: the majority of sites don't need this technique. It's primarily aimed at platforms hosting premium videos, subscription-based content, or resources requiring authentication.

If your videos are publicly accessible without restrictions, this statement adds no practical value. It's a niche solution for a niche problem.

- Reverse DNS verification: recommended method, automatic, resistant to infrastructure changes

- Published IP ranges: simpler alternative but requires regular maintenance

- Primary use case: protected videos, authenticated content, premium resources

- Does not concern sites with freely accessible videos

SEO Expert opinion

Is this recommendation really new or just a reminder?

Google has documented reverse DNS verification for years. It's not a revelation — it's a reformulation of a known practice, likely triggered by field reports showing that some publishers still block Googlebot by mistake.

The interesting point here is the specific mention of protected videos. Google continues to push for better video content indexing, and this message fits into that logic. But in concrete terms, how many sites really have this problem? Hard to quantify.

Are published IP ranges really reliable?

In theory, yes. Google maintains public JSON files listing its IP ranges for Googlebot. But here's the catch: these ranges evolve, and Google guarantees no advance notice before modifications.

On client infrastructures, I've observed false positives (legitimate Googlebot requests flagged as fake) after undocumented IP range changes. Reverse DNS verification therefore remains the more robust option, even though it requires more advanced technical configuration. [To verify]: the actual frequency of these range updates on Google's end is not officially documented anywhere.
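To make the IP-range approach concrete, here is a minimal sketch of matching a requester's IP against a list of published prefixes. It assumes the JSON file has a top-level `prefixes` array whose entries carry either an `ipv4Prefix` or an `ipv6Prefix` key; check that shape against the file you actually download. The sample payload, `load_networks`, and `ip_in_ranges` are illustrative names, and the prefixes shown are documentation-only ranges, not real Googlebot ranges.

```python
import ipaddress
import json

# Placeholder payload in the ASSUMED shape of the published file.
# These prefixes are reserved documentation ranges, not Googlebot's.
SAMPLE_JSON = """
{
  "creationTime": "placeholder",
  "prefixes": [
    {"ipv4Prefix": "192.0.2.0/24"},
    {"ipv6Prefix": "2001:db8::/32"}
  ]
}
"""


def load_networks(json_text):
    """Parse the JSON text into a list of ip_network objects."""
    data = json.loads(json_text)
    networks = []
    for entry in data.get("prefixes", []):
        cidr = entry.get("ipv4Prefix") or entry.get("ipv6Prefix")
        if cidr:
            networks.append(ipaddress.ip_network(cidr))
    return networks


def ip_in_ranges(ip, networks):
    """Return True if the IP string falls inside any listed network."""
    try:
        addr = ipaddress.ip_address(ip)
    except ValueError:
        # Not a valid IP string at all: treat as not allowed.
        return False
    return any(addr in net for net in networks)


networks = load_networks(SAMPLE_JSON)
```

Because the ranges can change without notice, a setup like this would need to re-download and reload the file on a schedule rather than hard-coding the prefixes.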

What's the risk of getting the implementation wrong?

A poorly configured reverse DNS check can block Googlebot instead of authorizing it. And that's where it gets tricky: many technical teams have never handled this type of verification. Common errors include DNS timeouts that are too short, reverse lookups that fail silently, and conflicting firewall rules.

Practical impact and recommendations

What should I do if my videos are protected by authentication?

First step: precisely identify the resources involved. Do all your videos really need authentication to be crawled? Sometimes, a simple exception for bots is enough — no need to deploy a complex reverse DNS system.

If verification is essential, first document the complete process: retrieve the requester's IP, send a PTR query to get its hostname, verify that the hostname ends in googlebot.com or google.com, then send an A/AAAA query on that hostname and confirm that it resolves back to the original IP. It's a chain of verifications, not a single operation.
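The verification chain described above (PTR lookup, domain check, forward lookup) can be sketched with Python's standard library. This is an illustration under stated assumptions, not a production implementation: the function names are hypothetical, the accepted suffixes are limited to the two domains named in this article (confirm the current list in Google's documentation), and the resolvers are passed as parameters so the logic can be exercised without network access.

```python
import ipaddress
import socket

# ASSUMPTION: only the two domains named in the article are accepted.
GOOGLE_SUFFIXES = (".googlebot.com", ".google.com")


def reverse_lookup(ip):
    """PTR query: IP -> hostname. Raises OSError if there is no record."""
    return socket.gethostbyaddr(ip)[0]


def forward_lookup(hostname):
    """A/AAAA query: hostname -> set of IP strings."""
    return {info[4][0] for info in socket.getaddrinfo(hostname, None)}


def is_verified_googlebot(ip, reverse=reverse_lookup, forward=forward_lookup):
    """Return True only if IP -> hostname -> IP closes the loop."""
    try:
        original = ipaddress.ip_address(ip)
        hostname = reverse(ip).rstrip(".").lower()
        # The leading dot in each suffix rejects names like "fakegooglebot.com".
        if not hostname.endswith(GOOGLE_SUFFIXES):
            return False
        resolved = {ipaddress.ip_address(addr) for addr in forward(hostname)}
        return original in resolved
    except (OSError, ValueError):
        # DNS failure or malformed address: treat as unverified.
        return False
```

Comparing parsed `ipaddress` objects rather than raw strings avoids mismatches between different textual forms of the same IPv6 address.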

How do I verify that the implementation works correctly?

Use the URL inspection tool in Google Search Console to request a live crawl of a protected video. If Googlebot accesses it without a 403 error, your configuration is operational.

Next, monitor your server logs. Look for requests from Google's IP ranges and verify that they pass through your authorization system correctly. Active monitoring prevents surprises.
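As one way to start that log monitoring, the sketch below scans access-log lines for requests that announce a Googlebot User-Agent but were answered with 401 or 403. It assumes the common "combined" log format used by Apache and Nginx; the regular expression and the `blocked_googlebot_hits` helper are illustrative and would need adapting to your own log layout.

```python
import re

# ASSUMPTION: logs use the "combined" format:
# ip - user [date] "METHOD path protocol" status bytes "referer" "user-agent"
LOG_LINE = re.compile(
    r'^(?P<ip>\S+) \S+ \S+ \[[^\]]+\] '
    r'"(?P<method>\S+) (?P<path>\S+)[^"]*" '
    r'(?P<status>\d{3}) \S+ "[^"]*" "(?P<agent>[^"]*)"'
)


def blocked_googlebot_hits(lines, blocked_statuses=(401, 403)):
    """Return (ip, path, status) for Googlebot-labelled requests that were refused."""
    hits = []
    for line in lines:
        match = LOG_LINE.match(line)
        if not match:
            continue  # Skip lines that do not fit the assumed format.
        if "Googlebot" not in match.group("agent"):
            continue
        status = int(match.group("status"))
        if status in blocked_statuses:
            hits.append((match.group("ip"), match.group("path"), status))
    return hits
```

Since the User-Agent alone can be spoofed, each IP reported here should still be checked against reverse DNS or the published ranges before concluding that the real Googlebot was blocked.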

What errors should you absolutely avoid?

- Skipping tests in a staging environment before production deployment

- Configuring DNS timeouts that are too short (minimum 5 seconds recommended)

- Relying on an IP allowlist alone, without reverse DNS verification (spoofing risk)

- Forgetting to log access attempts, which makes anomalies harder to detect

- Failing to update technical documentation for DevOps teams
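Two of the pitfalls above, short timeouts and lookups that fail silently, can be addressed together. The sketch below wraps any lookup function in an explicit timeout and logs every failure. It is only one possible approach: `socket.gethostbyaddr` takes no timeout argument, so the call is run in a worker thread here, and the helper names, logger name, and pool size are assumptions made for illustration.

```python
import concurrent.futures
import logging
import socket

logger = logging.getLogger("googlebot_verification")

# Shared pool so a slow lookup does not block the request-handling thread.
_POOL = concurrent.futures.ThreadPoolExecutor(max_workers=4)


def ptr_lookup(ip):
    """PTR query: IP -> hostname."""
    return socket.gethostbyaddr(ip)[0]


def lookup_with_timeout(lookup, arg, timeout=5.0):
    """Run lookup(arg); return its result, or None on timeout or DNS error."""
    future = _POOL.submit(lookup, arg)
    try:
        return future.result(timeout=timeout)
    except concurrent.futures.TimeoutError:
        logger.warning("DNS lookup for %s timed out after %.1fs", arg, timeout)
    except OSError as exc:
        logger.warning("DNS lookup for %s failed: %s", arg, exc)
    return None
```

A `None` result means the check could not be completed, which is different from "this is not Googlebot". Deciding whether to retry, deny, or temporarily allow in that case is a policy choice worth documenting for the team. Note that a timed-out lookup keeps running in its worker thread until the resolver gives up.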

Reverse DNS verification is a robust but technical solution for authorizing Googlebot on protected video content. It requires a fine understanding of DNS mechanisms and precise server configuration.

If your infrastructure hosts premium or subscription-based videos, this method ensures that only the real Googlebot accesses the resources. But be careful: an implementation error can block crawling. These technical optimizations can quickly become time-consuming and often require specialized expertise — in this context, relying on a specialized SEO agency helps secure the deployment while keeping your focus on your core business.

❓ Frequently Asked Questions

Does reverse DNS verification slow down server response time?

Can I get by with checking only the User-Agent?

Where can I find the official Googlebot IP ranges?

What happens if I accidentally block Googlebot?

Does this method work for other bots (Bingbot, etc.)?

🎥 From the same video 11

Other SEO insights extracted from this same Google Search Central video · published on 10/03/2022
