Official statement

Other statements from this video 14 ▾

- □ Faut-il changer de domaine lors d'une réduction de catalogue ou conserver l'existant ?

- □ Les backlinks vers une page 404 sont-ils définitivement perdus ou récupérables ?

- □ Peut-on vraiment avoir des millions de redirections 301 sans impacter son SEO ?

- □ Faut-il vraiment ignorer les erreurs 404 dans Google Search Console ?

- □ Faut-il vraiment ajouter les pages paginées dans le sitemap XML ?

- □ Google crawle-t-il vraiment les liens dans les menus déroulants au survol ?

- □ Combien de redirections peut-on vraiment mettre sur un site sans pénalité SEO ?

- □ Faut-il privilégier une personne ou une organisation comme auteur d'un article pour le SEO ?

- □ Faut-il vraiment aligner URL, title et H1 pour ranker en SEO ?

- □ Bloquer une page de redirection par robots.txt peut-il vraiment empêcher le passage du PageRank ?

- □ Les tirets multiples dans un nom de domaine pénalisent-ils votre SEO ?

- □ Faut-il publier du contenu tous les jours pour bien ranker sur Google ?

- □ Faut-il vraiment abandonner le texte dans les images pour le SEO ?

- □ Les Core Web Vitals écrasent-ils vraiment la pertinence dans le classement Google ?

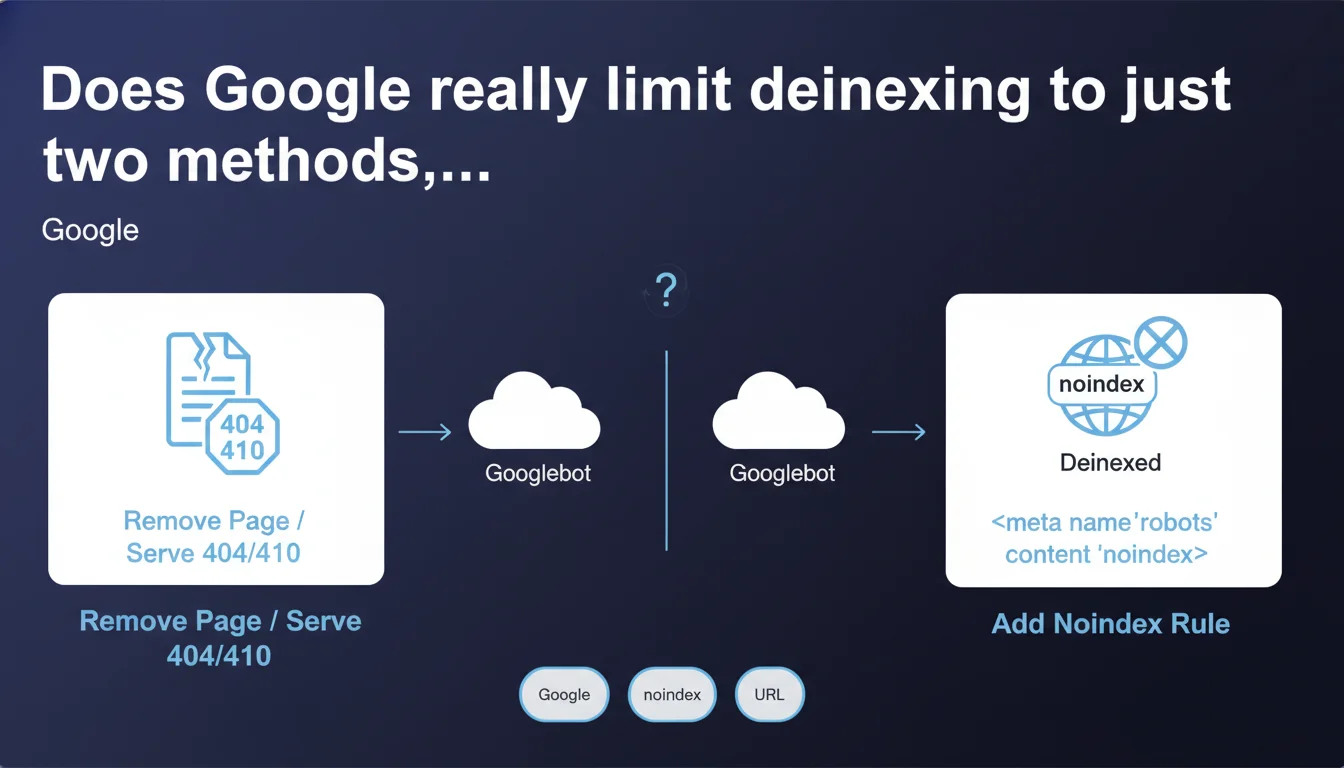

Google claims there are only two effective ways to deindex URLs: returning an HTTP 404/410 status code or using the noindex directive while allowing Googlebot to crawl the page. This deliberate simplification overlooks other technical methods and raises questions about their real effectiveness compared to the two officially recommended options.

What you need to understand

Why does Google insist on only two methods?

Google seeks to simplify communication with webmasters by narrowing the spectrum of possibilities to two clear and manageable options. This pedagogical approach avoids confusion and implementation errors that occur when too many alternatives are offered.

However, this simplification deliberately obscures other technical levers — robots.txt, cross-domain canonical tags, deindexing via Search Console, 301 redirects followed by deletion. Google considers them either ineffective, or as workarounds it prefers to discourage.

What is the fundamental difference between 404/410 and noindex?

The HTTP 404 or 410 status code signals permanent removal at the server level. Googlebot immediately understands the resource no longer exists and removes the URL from the index, usually within days depending on crawl frequency.

The noindex directive requires Googlebot to access the page (status 200) to read the meta tag or HTTP header. It's an explicit instruction requesting not to index the content, while still allowing the crawler to follow links on the page. This nuance changes everything for internal linking and PageRank distribution.

What happens if you block crawling of a noindex page?

Let's be honest: it's a classic mistake. Blocking via robots.txt a page containing a noindex directive prevents Googlebot from discovering that instruction. Result? The URL remains potentially indexed with a truncated snippet.

Google has been insisting on this point for years — and that's why this statement explicitly specifies "allow Googlebot to crawl these pages". Without access, no noindex directive can be read, so no clean deindexing occurs.

- 404/410: Immediate removal, no need to access content

- Noindex: Requires crawling to be applied, preserves crawling of internal links

- Robots.txt: Blocks crawling but doesn't prevent indexing if backlinks exist

- Frequent error: Combining robots.txt + noindex cancels the noindex effect

- Timing: Deindexing via noindex can take several weeks depending on crawl budget

SEO Expert opinion

Is this statement consistent with practices observed in the field?

Broadly yes. Observations align: 404/410 and noindex are indeed the two most reliable levers for deindexing content in a predictable manner. Other methods often produce erratic or incomplete results.

But this simplification sidesteps real use cases. Cross-domain canonicalization, for example, can serve to deindex in favor of another URL — even though Google only considers it a suggestion. 301 redirects followed by deletion on the destination side also create a form of gradual deindexing, albeit less controlled.

What nuances should be added to this claim?

Google says "really only a handful of ways" — which implies others exist, but aren't recommended or as effective. It's an editorial choice, not an absolute technical truth.

Deindexing timing varies dramatically by method. A 410 Gone is treated more aggressively than a standard 404. A noindex on a daily-crawled page will be applied within days; on an orphaned or deep page, it can drag on for weeks. [To verify]: Google publishes no official metrics on average deindexing delays by method.

In what cases is this rule insufficient?

Complex situations escape this binary framework. Imagine a site with millions of dynamically generated URLs — facets, filters, parameters — you want to deindex without physically removing them. Massive noindex can work, but it wastes crawl budget unnecessarily.

In this context, other strategies — aggressive canonicalization, URL parameters in Search Console, architectural redesign — become essential. Google doesn't mention them here, which can mislead practitioners facing large-scale indexation problems.

Practical impact and recommendations

What should you do concretely to deindex properly?

Strategic choice: If content is permanently deleted and will never return, opt for a 404 or 410. If the content still exists but shouldn't appear in search results — member areas, internal conversion pages, duplicate content — use noindex.

Verify that Googlebot has proper access to the pages in question. Check your robots.txt file and ensure no Disallow directive blocks the path. For noindex, crawling must be allowed — it's non-negotiable.

Monitor deindexing via Search Console: search site:yoursite.com/url-to-deindex in Google or track indexed pages in the coverage report. If the URL persists after several weeks, investigate: external backlinks maintaining indexation, crawl issue, mixed signal (contradictory canonical + noindex).

What mistakes should you absolutely avoid?

Never combine robots.txt + noindex on the same URL. It's the most frequent error and completely cancels the desired effect. Google cannot read a directive it doesn't have permission to crawl.

Avoid flipping an entire site to noindex "as a precaution" during migration or redesign. It happens — and it generates SEO disasters. Instead use a staging environment with HTTP authentication or a subdomain blocked via robots.txt.

Don't count on a 301 redirect alone to deindex. The redirect transfers PageRank and signals a move, but the source URL can remain indexed if the destination itself returns an error code or if the redirect chain is broken.

How do you verify your site follows these best practices?

- Audit your robots.txt file: no Disallow directive should block noindex URLs

- Verify HTTP headers of pages to deindex: 404/410 or 200 + noindex code, never incoherent mix

- Check the coverage report in Search Console: identify URLs "Excluded by noindex tag" vs "Not found (404)"

- Scan external backlinks pointing to deindex URLs — they can slow or prevent removal

- Test with a crawler (Screaming Frog, OnCrawl) to spot inconsistencies between directives

- Document your deindexing strategy: which method for which content type, to avoid team errors

❓ Frequently Asked Questions

Peut-on utiliser robots.txt pour désindexer des URLs ?

Quelle différence entre un code 404 et un code 410 ?

Combien de temps faut-il pour qu'une URL en noindex soit désindexée ?

Faut-il supprimer les backlinks vers une page désindexée ?

Peut-on combiner noindex et canonical sur la même page ?

🎥 From the same video 14

Other SEO insights extracted from this same Google Search Central video · published on 29/12/2022

🎥 Watch the full video on YouTube →

💬 Comments (0)

Be the first to comment.