Official statement

Other statements from this video 13 ▾

- □ Le SEO technique est-il vraiment encore indispensable pour le référencement ?

- □ Faut-il arrêter d'obseder sur les détails techniques obscurs en SEO ?

- □ Search Console est-elle vraiment efficace pour diagnostiquer vos problèmes SEO ?

- □ Pourquoi Google privilégie-t-il systématiquement la page d'accueil dans son processus d'indexation ?

- □ Faut-il vraiment sacrifier le volume de trafic au profit de la pertinence ?

- □ Les feedbacks utilisateurs sont-ils plus révélateurs que le trafic pour juger la qualité d'une page ?

- □ La qualité SEO se résume-t-elle vraiment à aider l'utilisateur à accomplir sa tâche ?

- □ Faut-il vraiment miser sur une perspective unique pour ranker dans une niche saturée ?

- □ Faut-il vraiment supprimer les pages à faible trafic de votre site ?

- □ Faut-il vraiment fusionner et rediriger du contenu régulièrement pour améliorer son SEO ?

- □ Faut-il vraiment traiter toutes les erreurs d'exploration de la même manière ?

- □ Faut-il vraiment aligner le title et le H1 pour performer en SEO ?

- □ Faut-il utiliser l'IA générative pour rédiger ses contenus SEO ?

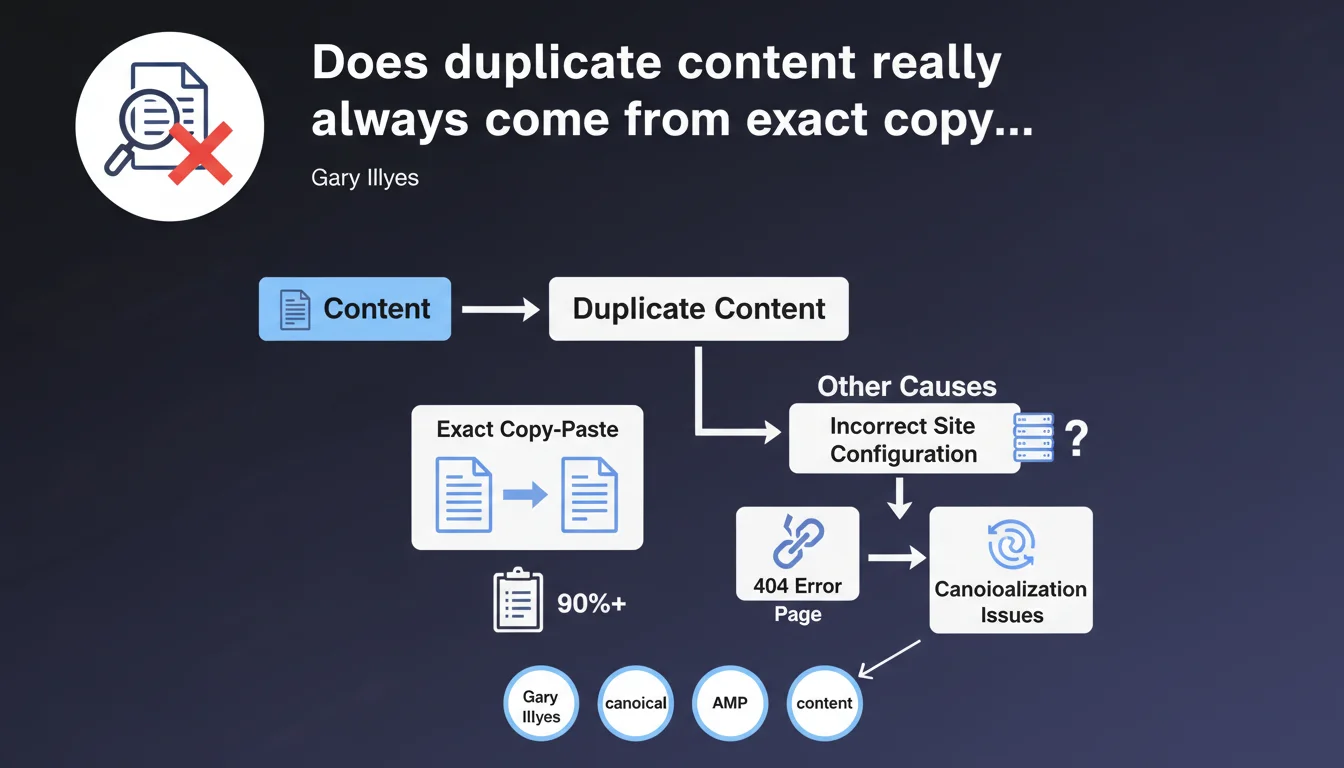

Google claims that the majority of canonicalization problems arise from content duplicated word-for-word, often caused by technical configuration errors. The example given: a site that doesn't return a 404 for random URLs, thereby generating thousands of identical pages. It's a brutal reminder that technical foundations take precedence over semantic subtleties.

What you need to understand

What does Google mean by "word-for-word duplication"?

Gary Illyes is talking here about strictly identical content — not semantic similarity, not paraphrasing, but pure and simple copy-paste. The search engine detects multiple URLs serving exactly the same HTML, word for word.

It's crucial to understand the nuance: Google isn't talking about "thin content" or pages that look similar. It's pointing the finger at technical duplications created by a poorly designed architecture. The example of the missing 404 is revealing — a site that generates a default page for any invented URL multiplies identical versions without even realizing it.

Why do incorrect configurations create so much duplication?

Modern CMSs, URL parameters, HTTP/HTTPS versions, trailing slashes, subdomains — each technical layer can generate URL variations pointing to the same content. If the server doesn't handle these cases with proper redirects or canonical tags, Google sees dozens of identical pages.

The absence of a 404 for random URLs is a particularly dangerous case. Some poorly configured CMSs display the homepage or a generic page instead of returning an error code. The result? Thousands of crawlable URLs with duplicate content.

What's the difference between exact duplication and partial or semantic duplication?

Google makes a clear distinction. Exact duplication triggers a canonicalization problem — the search engine must choose which URL to index among several identical ones. It's a pure algorithmic headache.

Semantic similarity — two pages covering the same topic with different words — falls under a different mechanism. Here, Google doesn't need to choose a canonical URL, but rather evaluate relevance and originality. The stakes and solutions differ radically.

- Exact duplication: Canonicalization problem, often of technical origin

- Server configuration: Missing 404s, missing redirects, faulty URL parameter handling

- Crawl impact: Wasting crawl budget on identical pages

- Key distinction: Word-for-word content ≠ similar or close content

SEO Expert opinion

Does this statement really reflect the majority of real-world cases?

From experience, yes — but with nuances. Most technical audits reveal straightforward duplications: www vs non-www, HTTP vs HTTPS, URLs with session IDs, poorly managed sorting or pagination parameters. These errors easily account for 70-80% of canonicalization issues observed.

Let's be honest: "intelligent" duplication problems (content spinning, automatic paraphrasing) exist, but they're in the minority. What Google is pointing out is that the majority of sites still have rotten foundations before even talking about editorial strategy.

Is the missing 404 example representative?

It's an extreme case, but not uncommon. We mostly see it on custom CMSs or hastily migrated sites. A developer who configures a "user-friendly" fallback without understanding SEO implications creates a abyss of phantom pages.

The problem gets worse with sites that generate dynamic URLs: filters, internal searches, user sessions. If every random combination generates a page instead of an error, Google's crawler gets bogged down in millions of identical variants. [To verify] on your own site: test random URLs and check the HTTP response code.

What are the limitations of this statement?

Gary Illyes talks about "majority," not "totality." There are still cases where partial duplication or semantic cannibalization poses a problem — even if Google doesn't classify them under "canonicalization."

Another point: this statement says nothing about actual impact. Having 10 duplicated URLs on a 100-page site is catastrophic. Having 1,000 on a 500,000-page site can be negligible if they're blocked in robots.txt. Context matters as much as the cause.

Practical impact and recommendations

How do you detect exact duplication on your site?

First step: crawl your site with Screaming Frog, Oncrawl, or Sitebulb. Enable duplicate content detection and analyze clusters of identical pages. Compare MD5 or SHA-1 hashes of HTML content — it's foolproof for spotting exact copies.

Then test random URLs. Invent paths that don't exist and check the HTTP response code. If you get a 200 instead of a 404, you have a structural problem. Also check URL variations: with/without trailing slash, with unnecessary GET parameters, www prefixes, HTTP/HTTPS protocols.

What priority should be given to technical solutions?

Configure HTTP response codes correctly. Any non-existent URL should return a 404 or 410. If you must offer a fallback page (internal search, suggestions), use a 404 code with useful HTML body — yes, it's possible and recommended.

Implement 301 redirects to unify URL variations: www to non-www (or vice versa), HTTP to HTTPS, standardized trailing slash. Consolidate canonical versions at the server layer, before Google even crawls.

Use the canonical tag for cases where redirect isn't possible (pagination, sorting parameters, print versions). But beware: canonical is a suggestion, not an absolute directive. Server configuration always takes precedence.

How do you verify that corrections are working?

Refresh your sitemap and submit it in Search Console. Monitor the coverage report: pages marked as "Discovered, not indexed" or "Excluded by canonical tag" should decrease progressively.

Also check crawl budget. If Google crawls fewer duplicate pages, it devotes more resources to unique content. Server logs give you precise visibility into Googlebot activity before/after your corrections.

- Crawl the site with an SEO tool and identify content duplicated word-for-word

- Test random URLs and verify they properly return a 404 code

- Unify URL variations (www, HTTPS, trailing slash) with 301 redirects

- Configure canonical tags for cases where redirect isn't suitable

- Block unnecessary dynamic URLs in robots.txt (filters, session parameters)

- Monitor Search Console: coverage report, excluded pages, canonicalization errors

- Analyze server logs to measure impact on Googlebot crawl

❓ Frequently Asked Questions

Toutes les duplications de contenu sont-elles pénalisées par Google ?

La balise canonical suffit-elle à régler tous les problèmes de duplication ?

Comment savoir si Google a bien pris en compte mes corrections de duplication ?

Un site qui affiche la page d'accueil pour toute URL invalide est-il vraiment pénalisé ?

Dois-je bloquer en robots.txt toutes les URL avec paramètres pour éviter la duplication ?

🎥 From the same video 13

Other SEO insights extracted from this same Google Search Central video · published on 21/11/2023

🎥 Watch the full video on YouTube →

💬 Comments (0)

Be the first to comment.