Official statement

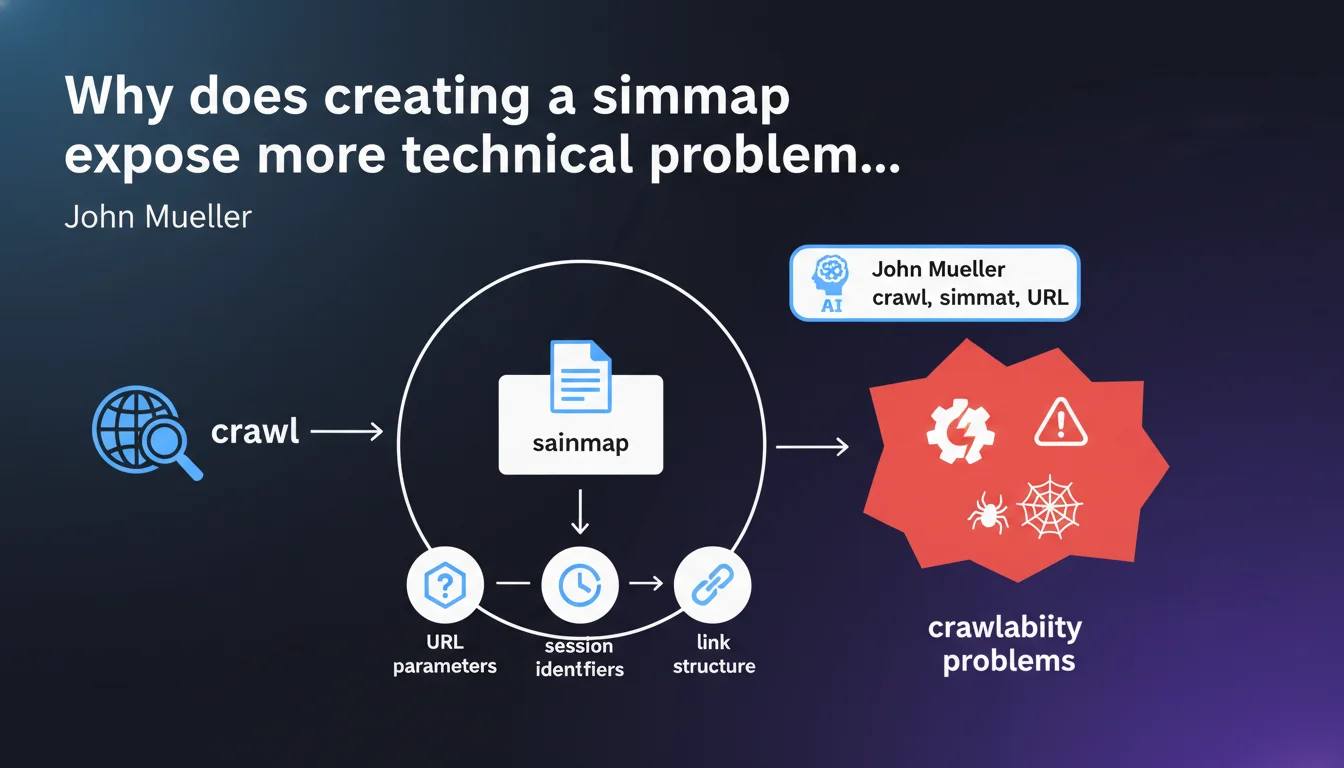

Google confirms that the sitemap creation process forces you to crawl your own site and exposes often-overlooked technical flaws: poorly managed URL parameters, session identifiers that pollute the index, weak internal linking. A sitemap isn't just an XML file; it's a brutal diagnosis of your architecture.

What you need to understand

Is a sitemap really just a simple submission file for Google?

No. Mueller's statement shifts the perspective: a sitemap is first and foremost an audit tool for the website owner. When you generate a sitemap, you're forced to simulate the behavior of a crawler — traversing URLs, detecting redirects, identifying dynamic parameters.
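Detecting redirects is one part of that crawler simulation. The sketch below assumes you already have a redirect map exported from your crawler, mapping each 3xx source URL to its `Location` target. The `redirects` dict, the example URLs, and the helper names are all hypothetical. For each candidate URL, the sketch resolves its final destination, flags chains longer than one hop, and sets aside loops, so that only final URLs end up in the sitemap.

```python
# Hypothetical redirect map exported from a crawl: source URL -> Location target.
redirects = {
    "http://example.com/a": "https://example.com/a",
    "https://example.com/a": "https://example.com/a/",
    "https://example.com/x": "https://example.com/y",
    "https://example.com/y": "https://example.com/x",
}


def resolve(url, redirects, max_hops=10):
    """Follow redirects until a non-redirecting URL; return (final_url, hops)."""
    seen = [url]
    while url in redirects:
        url = redirects[url]
        if url in seen:
            raise ValueError("redirect loop: " + " -> ".join(seen + [url]))
        seen.append(url)
        if len(seen) - 1 > max_hops:
            raise ValueError("too many redirects from " + seen[0])
    return url, len(seen) - 1


def clean_entries(urls, redirects):
    """Replace each URL by its final target; report chains and broken redirects."""
    final, chains, broken = [], [], []
    for u in urls:
        try:
            target, hops = resolve(u, redirects)
        except ValueError:
            broken.append(u)  # loop or excessive chain: fix before listing
            continue
        if hops > 1:
            chains.append(u)  # more than one hop: a redirect chain
        if target not in final:
            final.append(target)
    return final, chains, broken


final, chains, broken = clean_entries(
    ["http://example.com/a", "https://example.com/b", "https://example.com/x"],
    redirects,
)
print(final)   # ['https://example.com/a/', 'https://example.com/b']
print(chains)  # ['http://example.com/a']
print(broken)  # ['https://example.com/x']
```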

That's where things get tricky. Many sites discover at this stage that they have tens of thousands of parasitic URLs generated by filters, user sessions, or unnecessary parameters. The sitemap reveals what the human eye never sees in normal browsing.

What crawlability problems emerge concretely?

The same problems surface again and again: URL parameters that create duplicate content (sorting, filters, tracking), session identifiers that generate a unique URL for each visitor, and an internal link structure riddled with orphan pages or infinite loops.

Typical example: an e-commerce site discovers that every combination of product filters produces a distinct URL. In normal browsing, nobody notices these URLs. With a poorly configured sitemap, they're all submitted to Google: disaster guaranteed.

- URL parameters: sorting, pagination, filters, analytics tracking

- Session identifiers: PHPSESSID, JSESSIONID, temporary tokens

- Weak structure: orphan pages, excessive click depth, redirect chains

- Duplication: HTTP/HTTPS variants, www/non-www, trailing slash
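You can detect much of this list mechanically. Normalize every crawled URL, then group the variants that collapse onto the same canonical form. This minimal sketch assumes your canonical conventions are https, the non-www host, and no trailing slash. The parameter lists are hypothetical examples; replace them with the parameters your site actually emits.

```python
from collections import defaultdict
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit

# Hypothetical parameter lists: replace them with what your own crawl reveals.
SESSION_PARAMS = {"phpsessid", "jsessionid", "sid", "sessionid"}
TRACKING_PARAMS = {"utm_source", "utm_medium", "utm_campaign", "utm_term",
                   "utm_content", "gclid", "fbclid"}
SORT_PARAMS = {"sort", "order"}  # filters and pagination are left alone here
IGNORED = SESSION_PARAMS | TRACKING_PARAMS | SORT_PARAMS


def normalize(url: str) -> str:
    """Map a crawled URL onto one canonical form (assumed conventions below)."""
    parts = urlsplit(url)
    host = parts.netloc.lower()
    if host.startswith("www."):
        host = host[4:]                # assumption: non-www host is canonical
    path = parts.path.split(";")[0]    # drop ;jsessionid=... path parameters
    path = path.rstrip("/") or "/"     # assumption: no trailing slash
    query = sorted((k, v) for k, v in parse_qsl(parts.query, keep_blank_values=True)
                   if k.lower() not in IGNORED)
    return urlunsplit(("https", host, path, urlencode(query), ""))  # https only


def group_duplicates(urls):
    """Return {canonical URL: raw variants} for canonicals with 2+ variants."""
    groups = defaultdict(list)
    for u in urls:
        groups[normalize(u)].append(u)
    return {canon: raw for canon, raw in groups.items() if len(raw) > 1}


crawled = [
    "http://www.example.com/shoes/",
    "https://example.com/shoes?utm_source=newsletter",
    "https://example.com/shoes;jsessionid=ABC123",
    "https://example.com/shoes?PHPSESSID=xyz&sort=price",
    "https://example.com/bags",
]
for canon, variants in group_duplicates(crawled).items():
    print(canon, len(variants))  # https://example.com/shoes 4
```

Each group with more than one variant points to a duplication source. Fix it at the site level, not only in the sitemap.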

Does Google use the sitemap only for crawling?

No, and that's the crucial point. Google analyzes the consistency between your sitemap and your actual link structure. If your sitemap contains 50,000 URLs but only 5,000 are accessible through internal linking, that's an inconsistency signal.
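On the site owner's side, this comparison is a simple set operation. The sketch below takes two hypothetical inputs: the URLs listed in your sitemap, and the URLs your crawler reached by following internal links from the homepage. It reproduces the 50,000 vs 5,000 example from the paragraph above. It is a diagnostic for the site owner, not a description of how Google scores consistency.

```python
def linking_consistency(sitemap_urls, linked_urls):
    """Compare sitemap entries with URLs reachable through internal links."""
    sitemap, linked = set(sitemap_urls), set(linked_urls)
    only_in_sitemap = sitemap - linked   # submitted but never linked internally
    only_linked = linked - sitemap       # linked but missing from the sitemap
    coverage = len(sitemap & linked) / len(sitemap) if sitemap else 1.0
    return only_in_sitemap, only_linked, coverage


# The paragraph's example: 50,000 sitemap URLs, only 5,000 reachable via links.
sitemap_urls = [f"https://example.com/p/{i}" for i in range(50_000)]
linked_urls = sitemap_urls[:5_000]
orphans, missing, coverage = linking_consistency(sitemap_urls, linked_urls)
print(len(orphans), len(missing), coverage)  # 45000 0 0.1
```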

The sitemap then becomes a technical quality indicator. A clean sitemap, aligned with internal structure and free of parasitic parameters, reassures Google about your architectural control.

SEO Expert opinion

Is this statement consistent with field observations?

Absolutely. Audits consistently show that 80% of sites submit sitemaps polluted by technical URLs with no SEO value. E-commerce with thousands of filter combinations, multilingual sites with parameter variants, CMSs that generate preview pages — the list is endless.

Let's be honest: many SEO professionals treat the sitemap as a formality, a box to check. This statement resets expectations — the sitemap is a brutal technical mirror. If you don't like what you see, it's your architecture that's at fault, not the XML file.

Where does this logic find its limits?

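On one major point: not all sitemap generation tools are equal. Sitemaps generated automatically by a CMS are often packed with unnecessary URLs, because the generator can't tell an indexable page from a technical URL. Size is a separate issue. The sitemaps protocol caps each sitemap file at 50,000 URLs and 50 MB uncompressed, so larger URL sets must be split across several files referenced by a sitemap index. [To verify]: Google doesn't specify whether a very large total URL count, properly split across files, is penalizing in itself or simply ignored beyond a certain threshold.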
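The sitemaps protocol caps each sitemap file at 50,000 URLs and 50 MB uncompressed. Larger URL sets are therefore split across several files listed in a sitemap index. The sketch below shows that split using only the standard library. The file names under `https://example.com/` are hypothetical, and the sketch does not check the 50 MB limit, which can matter when URLs are long.

```python
import xml.etree.ElementTree as ET

NS = "http://www.sitemaps.org/schemas/sitemap/0.9"
MAX_URLS_PER_FILE = 50_000  # protocol cap; each file must also stay under 50 MB


def chunk(urls, size=MAX_URLS_PER_FILE):
    """Split the URL list into consecutive slices of at most `size` entries."""
    return [urls[i:i + size] for i in range(0, len(urls), size)]


def urlset_xml(urls):
    """Build one <urlset> sitemap file containing only <loc> entries."""
    root = ET.Element("urlset", xmlns=NS)
    for u in urls:
        ET.SubElement(ET.SubElement(root, "url"), "loc").text = u
    return ET.tostring(root, encoding="unicode")


def index_xml(sitemap_locs):
    """Build a <sitemapindex> pointing at each child sitemap file."""
    root = ET.Element("sitemapindex", xmlns=NS)
    for loc in sitemap_locs:
        ET.SubElement(ET.SubElement(root, "sitemap"), "loc").text = loc
    return ET.tostring(root, encoding="unicode")


urls = [f"https://example.com/p/{i}" for i in range(120_000)]
parts = chunk(urls)
files = {f"https://example.com/sitemap-{n}.xml": urlset_xml(p)  # hypothetical names
         for n, p in enumerate(parts, start=1)}
index = index_xml(files)
print([len(p) for p in parts])  # [50000, 50000, 20000]
```

`ElementTree` escapes characters such as `&` in URLs, which the protocol requires.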

Second limit: this logic assumes you control your own crawl. Yet many sites have never audited their structure with Screaming Frog or an equivalent. Result: the sitemap reveals problems, but nobody has the skills to interpret them.

What are common misinterpretations?

First mistake: believing that a sitemap guarantees indexation. It doesn't. Google crawls what it wants, when it wants. The sitemap is a suggestion, not a command. If your URLs have zero authority or zero unique content, they'll never be indexed, sitemap or not.

Second mistake: including URLs in the sitemap that are blocked by robots.txt or carry a noindex directive. That's contradictory and signals technical confusion. Google tolerates it, but it doesn't work in your favor. Concretely? Clean your sitemap before submitting it: it's not a dumping ground.
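You can automate this cleanup step with Python's standard `urllib.robotparser`. It is an approximation: the module matches rules by simple prefix and applies the first matching rule. Google instead supports `*` and `$` wildcards and applies the most specific rule. The `noindexed` set is a hypothetical input, for example the noindex pages reported by your crawler.

```python
from urllib.robotparser import RobotFileParser


def filter_candidates(urls, robots_txt, noindexed, user_agent="Googlebot"):
    """Keep only URLs that robots.txt allows and that are not marked noindex."""
    rp = RobotFileParser()
    rp.parse(robots_txt.splitlines())
    keep, dropped = [], []
    for u in urls:
        if not rp.can_fetch(user_agent, u):
            dropped.append((u, "blocked by robots.txt"))
        elif u in noindexed:
            dropped.append((u, "noindex"))
        else:
            keep.append(u)
    return keep, dropped


robots_txt = """User-agent: *
Disallow: /cart/
Disallow: /search
"""
# Hypothetical set of URLs your crawler saw with a noindex directive.
noindexed = {"https://example.com/old-promo"}
keep, dropped = filter_candidates(
    ["https://example.com/shoes", "https://example.com/cart/checkout",
     "https://example.com/search?q=boots", "https://example.com/old-promo"],
    robots_txt, noindexed)
print(keep)  # ['https://example.com/shoes']
```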

Practical impact and recommendations

What should you concretely do before generating a sitemap?

Crawl your own site with a professional tool (Screaming Frog, OnCrawl, Botify). Identify URLs generated by unnecessary parameters, sessions, filters. Configure your tool to exclude these patterns before even generating the XML file.
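The exclusion rules you configure in a crawler can also be applied to the exported URL list before the XML is generated. This sketch uses hypothetical regular expressions; derive the real patterns from what your own crawl reveals. It reports which rule excluded each URL, so you can review the exclusions rather than trust them blindly.

```python
import re

# Hypothetical exclusion rules; derive the real list from your own crawl.
EXCLUDE_PATTERNS = {
    "sorting": re.compile(r"[?&](sort|order)="),
    "tracking": re.compile(r"[?&](utm_[a-z]+|gclid|fbclid)="),
    "session": re.compile(r"(?i)[?&;](phpsessid|jsessionid)="),
    "preview": re.compile(r"/preview/"),
}


def split_by_rules(urls):
    """Return (kept URLs, {rule name: excluded URLs})."""
    kept, excluded = [], {name: [] for name in EXCLUDE_PATTERNS}
    for u in urls:
        rule = next((n for n, p in EXCLUDE_PATTERNS.items() if p.search(u)), None)
        if rule is None:
            kept.append(u)
        else:
            excluded[rule].append(u)  # first matching rule wins
    return kept, excluded


kept, excluded = split_by_rules([
    "https://example.com/shoes",
    "https://example.com/shoes?sort=price",
    "https://example.com/shoes?utm_source=mail",
    "https://example.com/cart;JSESSIONID=ABC",
    "https://example.com/preview/draft-42",
])
print(kept)  # ['https://example.com/shoes']
```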

Second critical step: compare your sitemap with your actual internal linking. If a URL is in the sitemap but unreachable within 3 clicks from the homepage, that's a structural problem. Either improve your internal linking or remove the URL from the sitemap.
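The "3 clicks from the homepage" check is a breadth-first search over the internal link graph. BFS visits pages in order of click distance, so the first time it reaches a page gives that page's minimum depth. In this sketch, `links` is a hypothetical adjacency dict (page to outgoing internal links) that you would build from your crawler's link export. The 3-click threshold comes from the paragraph above.

```python
from collections import deque


def click_depths(links, home):
    """Breadth-first search from the homepage: depth = minimum number of clicks."""
    depth = {home: 0}
    queue = deque([home])
    while queue:
        page = queue.popleft()
        for target in links.get(page, ()):
            if target not in depth:
                depth[target] = depth[page] + 1
                queue.append(target)
    return depth


def audit_sitemap(sitemap_urls, links, home, max_depth=3):
    """Split sitemap URLs into unreachable ones and ones deeper than max_depth."""
    depth = click_depths(links, home)
    unreachable = [u for u in sitemap_urls if u not in depth]
    too_deep = [u for u in sitemap_urls if u in depth and depth[u] > max_depth]
    return unreachable, too_deep


links = {
    "/": ["/shoes", "/bags"],
    "/shoes": ["/shoes/running"],
    "/shoes/running": ["/shoes/running/trail"],
    "/shoes/running/trail": ["/shoes/running/trail/model-x"],
}
sitemap = ["/shoes", "/shoes/running/trail/model-x", "/orphan-landing"]
unreachable, too_deep = audit_sitemap(sitemap, links, "/")
print(unreachable)  # ['/orphan-landing']
print(too_deep)     # ['/shoes/running/trail/model-x']
```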

- Crawl the site with a professional tool to map all URLs

- Identify and exclude unnecessary dynamic parameters (sorting, filters, tracking)

- Remove session identifiers from indexable URLs

- Verify consistency between sitemap and internal link structure

- Exclude URLs blocked by robots.txt or noindexed

- Limit the sitemap to truly strategic URLs (conversion pages, editorial content)

- Test the sitemap on a sample before global submission

What critical mistakes should you absolutely avoid?

Never submit a sitemap auto-generated by your CMS without auditing it first. Generic CMSs (WordPress, Shopify, Magento) include technical pages with no SEO value by default: archives, tags, empty author pages, poorly managed pagination variants.
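A quick audit of a CMS sitemap can count how many entries look like archive or technical pages. The path patterns below are hypothetical examples of common archive URL schemes; adjust them to your CMS. If your CMS serves a sitemap index, run the audit on each child sitemap, because this function only reads `<url>` entries.

```python
import re
import xml.etree.ElementTree as ET
from collections import Counter

NS = {"sm": "http://www.sitemaps.org/schemas/sitemap/0.9"}

# Hypothetical URL patterns for typical CMS archive pages; adapt to your CMS.
SUSPECT = {
    "tag archive": re.compile(r"/tag/"),
    "author page": re.compile(r"/author/"),
    "paginated archive": re.compile(r"/page/\d+/?$"),
    "date archive": re.compile(r"/\d{4}/\d{2}/?$"),
}


def audit_cms_sitemap(xml_text):
    """Count <loc> entries and how many match each suspect pattern."""
    root = ET.fromstring(xml_text)
    locs = [(el.text or "").strip() for el in root.findall("sm:url/sm:loc", NS)]
    counts = Counter()
    for loc in locs:
        for label, pattern in SUSPECT.items():
            if pattern.search(loc):
                counts[label] += 1
                break  # count each URL once, under its first matching label
    return len(locs), counts


sample = """<urlset xmlns="http://www.sitemaps.org/schemas/sitemap/0.9">
  <url><loc>https://example.com/guide-to-sitemaps/</loc></url>
  <url><loc>https://example.com/tag/seo/</loc></url>
  <url><loc>https://example.com/author/admin/</loc></url>
  <url><loc>https://example.com/blog/page/3/</loc></url>
</urlset>"""
total, counts = audit_cms_sitemap(sample)
print(total, sum(counts.values()))  # 4 3
```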

Fatal mistake: including URLs with session or tracking parameters. Google crawls them, detects duplicate content, and you blow through your crawl budget for nothing. Result: your real strategic pages get under-crawled.

How do you verify that your sitemap is truly clean?

Submit it to Google Search Console and analyze the coverage errors. If more than 10% of the submitted URLs return errors (pages not found, redirects, blocked by robots.txt), your sitemap is polluted. Fix the issues before resubmitting.

Second indicator: compare the number of URLs in your sitemap with the number of URLs actually indexed. A gap >30% signals a quality or crawlability problem. Google is indirectly telling you that part of your sitemap doesn't deserve indexation.
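Both indicators are simple ratios. The sketch applies the article's rules of thumb: more than 10% errors, or a gap above 30% between submitted and indexed URLs. These are heuristics, not limits defined by Google. The counts are hypothetical figures you would read from Search Console for one sitemap. Here the gap is assumed to be measured as a share of submitted URLs.

```python
def sitemap_health(submitted, errors, indexed, max_error_rate=0.10, max_gap=0.30):
    """Apply the article's rules of thumb (not Google-defined limits)."""
    if submitted <= 0:
        raise ValueError("submitted must be positive")
    error_rate = errors / submitted
    index_gap = (submitted - indexed) / submitted  # share of submitted URLs not indexed
    return {
        "error_rate": error_rate,
        "index_gap": index_gap,
        "polluted": error_rate > max_error_rate,
        "quality_issue": index_gap > max_gap,
    }


# Hypothetical counts read from Search Console for one sitemap.
report = sitemap_health(submitted=20_000, errors=2_600, indexed=12_000)
print(report["polluted"], report["quality_issue"])  # True True
```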

Final recommendation: The sitemap is not a file to generate once and for all. It's a continuous diagnostic tool that reflects the technical health of your site. Every structural change (new category, redesign, filter addition) should trigger a sitemap review.

❓ Frequently Asked Questions

Is a large sitemap (>100,000 URLs) bad for SEO?

Should noindex pages be included in the sitemap?

How can I tell whether my CMS is adding parasitic URLs to the sitemap?

Does a sitemap directly improve rankings in Google?

How often should you update your sitemap?

Source: Google Search Central video, published on 05/05/2022.