Official statement

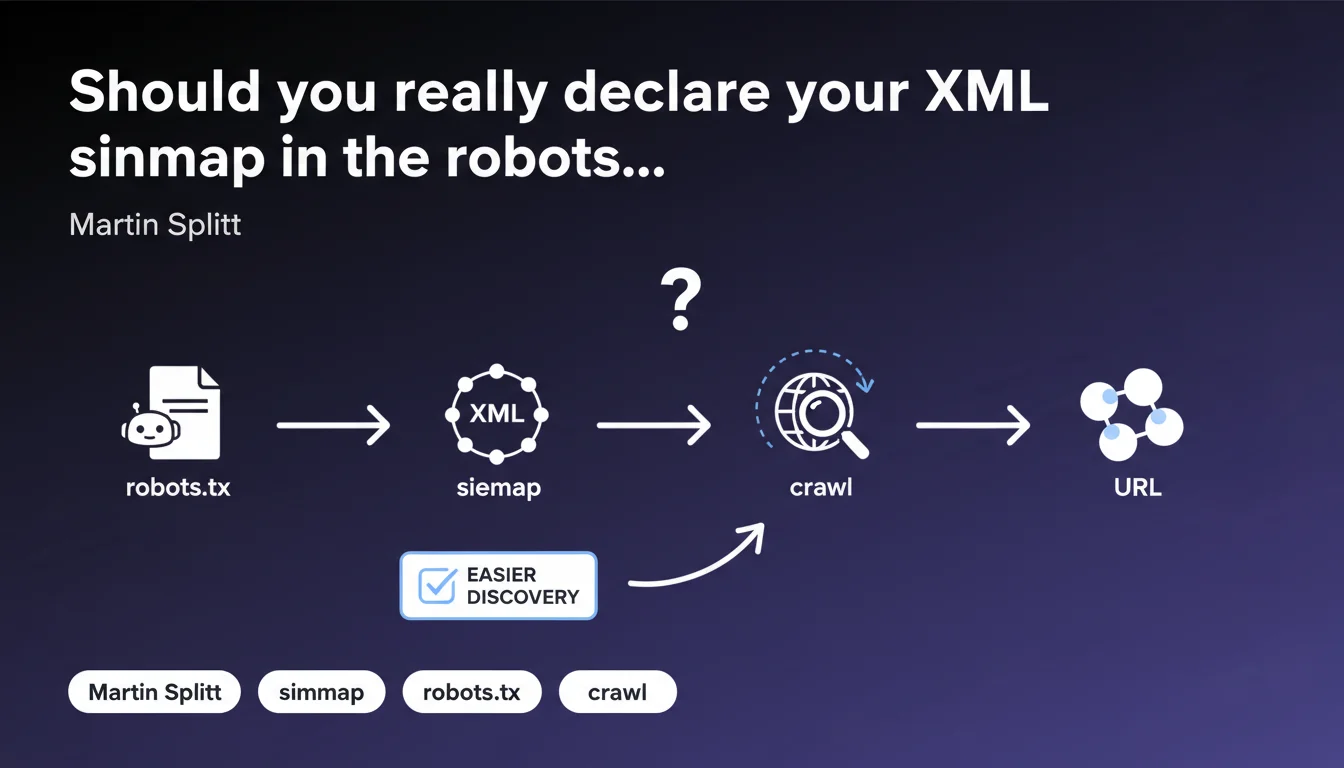

Google confirms that the 'sitemap' directive in robots.txt lets you tell crawlers where your XML sitemap lives. This method facilitates URL discovery, but it is just one option among others (Search Console, HTML tag, auto-discovery). The key question is whether this practice delivers real value or remains marginal.

What you need to understand

What is the exact function of the sitemap directive in robots.txt?

This directive tells crawlers, Googlebot chief among them, where to find your XML sitemap. Concretely, you add the line Sitemap: https://example.com/sitemap.xml to your robots.txt file.

The idea is to put the information in the file that well-behaved crawlers fetch before anything else. It is technically straightforward but not mandatory, since Google discovers sitemaps through multiple channels.
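To make this concrete, here is a minimal sketch that reads the Sitemap lines from a robots.txt body with Python's standard urllib.robotparser module. The example.com domain, the /admin/ rule, and the helper name declared_sitemaps are illustrative assumptions, not taken from a real site.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt body; the domain and paths are placeholders.
ROBOTS_TXT = """\
User-agent: *
Disallow: /admin/

Sitemap: https://example.com/sitemap.xml
"""


def declared_sitemaps(robots_body: str) -> list[str]:
    """Return the sitemap URLs declared in a robots.txt body."""
    parser = RobotFileParser()
    parser.parse(robots_body.splitlines())
    # site_maps() returns None when no Sitemap line is present (Python 3.8+).
    return parser.site_maps() or []


print(declared_sitemaps(ROBOTS_TXT))
```

The Sitemap line is independent of any User-agent group, which is why the parser collects it regardless of where it appears in the file.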

Does this directive replace submitting your sitemap via Search Console?

No. The robots.txt directive is complementary, not exclusive. Google still recommends submitting the sitemap via Search Console for fine-grained tracking (URLs discovered, errors, indexation statuses).

The difference: Search Console provides visibility and control, while robots.txt facilitates initial discovery without any manual step. Both methods coexist without conflict.

What are the other ways to declare a sitemap?

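Beyond robots.txt and Search Console, the following channels are often cited:

- A <link rel="sitemap"> tag in the HTML <head> (Google's sitemap documentation does not list this as a submission method, so treat it as unconfirmed)

- Auto-discovery: sitemap placed at your domain root (e.g., /sitemap.xml); this is a convention rather than a documented Google guarantee

- HTTP ping via http://www.google.com/ping?sitemap=URL (Google announced the deprecation of this endpoint in 2023, so it should no longer be relied on)

- Reference in a sitemap index file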

In short, you have options. The robots.txt directive is just one option among several, not a mandatory step.

SEO Expert opinion

Is this statement consistent with real-world observations?

Yes, but with a significant caveat: in practice, Search Console remains the preferred channel for most SEO professionals. The robots.txt directive works, but Google has never clearly quantified its real impact on crawl speed or efficiency.

We observe that sites declaring their sitemap only via robots.txt are crawled just as well, provided the sitemap is clean and accessible. But it's impossible to measure a tangible gain compared to Search Console alone. It remains to be verified whether this method actually accelerates discovery on large sites or is simply a technical convenience.

What pitfalls should you avoid with this directive?

First pitfall: declaring multiple conflicting sitemaps. If your robots.txt points to sitemap A and Search Console references sitemap B, you create confusion — even though Google will ultimately process both.

Second pitfall: forgetting to update the directive when you change your sitemap structure. A static robots.txt pointing to an obsolete or inaccessible sitemap serves no purpose, and you won't know without active monitoring.
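Both pitfalls come down to two lists drifting apart. As a rough sketch, assuming you keep a hand-maintained list of the sitemaps submitted in Search Console (the function name sitemap_mismatch and the sample URLs are hypothetical), a simple set comparison flags the differences:

```python
def sitemap_mismatch(robots_sitemaps, console_sitemaps):
    """Return the sitemap URLs that appear in only one of the two lists."""
    robots_set = {url.strip() for url in robots_sitemaps}
    console_set = {url.strip() for url in console_sitemaps}
    return {
        "only_in_robots": sorted(robots_set - console_set),
        "only_in_console": sorted(console_set - robots_set),
    }


# Hypothetical inputs: one list read from robots.txt, one kept by hand.
print(
    sitemap_mismatch(
        ["https://example.com/sitemap-a.xml"],
        ["https://example.com/sitemap-b.xml"],
    )
)
```

An empty result on both sides means the two declarations agree; anything else is worth a manual look.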

In what cases is this approach truly useful?

It makes real sense on multi-domain or multilingual sites where each version sits on its own host or subdomain and therefore has its own robots.txt. Declaring the sitemap locally avoids juggling multiple Search Console properties.

Another case: sites generating dynamic or split sitemaps (by category, by date). Centralizing references in robots.txt simplifies maintenance, especially if you automate file generation.
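If you automate file generation, the Sitemap lines can be produced from the same list of sections that drives the split. The sketch below assumes a sitemap-<section>.xml naming pattern and a helper called sitemap_lines; both are hypothetical, so adapt them to your own URL scheme.

```python
def sitemap_lines(base_url: str, sections: list[str]) -> str:
    """Build one 'Sitemap:' line per section, using absolute URLs."""
    base = base_url.rstrip("/")
    # The sitemap-<section>.xml pattern is an assumption for illustration.
    return "\n".join(f"Sitemap: {base}/sitemap-{name}.xml" for name in sections)


print(sitemap_lines("https://example.com/", ["products", "blog"]))
```

The returned block can be appended to the rest of the generated robots.txt, so the declarations stay in sync with the sitemaps that actually exist.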

Practical impact and recommendations

What do you need to do concretely to declare your sitemap in robots.txt?

Simply add a line Sitemap: https://yourdomain.com/sitemap.xml to your robots.txt file, ideally at the end of the file for clarity. You can declare multiple sitemaps by repeating the directive.

Then verify that your robots.txt is accessible from your domain root and that the sitemap URLs are absolute (no relative paths). Check the result in the robots.txt report in Search Console.
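The absolute-URL requirement is easy to check automatically. Here is a minimal sketch, using only the standard library, that lists the Sitemap values lacking an http(s) scheme or a host; the function name non_absolute_sitemaps is an assumption for this example.

```python
from urllib.parse import urlsplit


def non_absolute_sitemaps(robots_body: str) -> list[str]:
    """Return the Sitemap values that are not absolute http(s) URLs."""
    bad = []
    for raw in robots_body.splitlines():
        line = raw.split("#", 1)[0].strip()  # drop trailing comments
        key, sep, value = line.partition(":")
        if not sep or key.strip().lower() != "sitemap":
            continue
        url = value.strip()
        parts = urlsplit(url)
        if parts.scheme not in ("http", "https") or not parts.netloc:
            bad.append(url)
    return bad


sample = "Sitemap: /sitemap.xml\nSitemap: https://yourdomain.com/sitemap.xml\n"
print(non_absolute_sitemaps(sample))
```

Any value returned here should be rewritten as a full URL, including the protocol and the host.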

Should you abandon Search Console in favor of robots.txt?

Absolutely not. Use both methods to maximize your chances of fast crawling and keep an eye on errors. Search Console provides irreplaceable diagnostics (blocked URLs, server errors, index coverage).

The robots.txt directive is a safety net — it ensures that even a third-party bot or crawler that doesn't go through Search Console will find your sitemap. But it doesn't replace the fine control provided by the Google interface.

How do you verify that the directive is working correctly?

- Test access to your robots.txt: https://yourdomain.com/robots.txt

- Verify that the URLs of declared sitemaps return a 200 HTTP status

- Check the XML format of the sitemap (online validator or Google Search Console)

- Monitor server logs: Googlebot should access the sitemap regularly

- Compare index coverage rates before/after declaration (if possible)
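The first three checks in this list can be scripted. The following is a sketch under stated assumptions, not a definitive tool: it uses only the Python standard library, the names http_get and check_sitemaps are hypothetical, and it does not handle gzip-compressed sitemaps. The fetch parameter is injectable so the logic can be tried without touching the network.

```python
import urllib.error
import urllib.request
import xml.etree.ElementTree as ET
from urllib.robotparser import RobotFileParser


def http_get(url: str) -> tuple[int, bytes]:
    """Fetch a URL and return (status, body); HTTP errors become a status."""
    try:
        with urllib.request.urlopen(url, timeout=10) as resp:
            return resp.status, resp.read()
    except urllib.error.HTTPError as err:
        return err.code, b""


def check_sitemaps(site_root: str, fetch=http_get) -> dict[str, str]:
    """Report 'ok' or a problem for each sitemap declared in robots.txt."""
    status, body = fetch(site_root.rstrip("/") + "/robots.txt")
    if status != 200:
        return {"robots.txt": f"HTTP {status}"}

    parser = RobotFileParser()
    parser.parse(body.decode("utf-8", errors="replace").splitlines())

    report = {}
    for url in parser.site_maps() or []:
        code, payload = fetch(url)
        if code != 200:
            report[url] = f"HTTP {code}"
            continue
        try:
            root = ET.fromstring(payload)
        except ET.ParseError:
            report[url] = "invalid XML"
            continue
        # Sitemap roots are <urlset> or <sitemapindex>, usually namespaced.
        tag = root.tag.rsplit("}", 1)[-1]
        known = tag in ("urlset", "sitemapindex")
        report[url] = "ok" if known else f"unexpected root <{tag}>"
    return report


# Usage (performs real HTTP requests):
#   print(check_sitemaps("https://yourdomain.com"))
```

Log monitoring and index coverage comparisons still need your server logs and Search Console, so they stay outside this sketch.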

The sitemap directive in robots.txt is a useful but non-critical complement. It simplifies URL discovery, especially on complex architectures, but doesn't replace a well-designed sitemap or Search Console monitoring.

If your site contains thousands of URLs, dynamic sections, or multiple language versions, orchestrating robots.txt, sitemaps, and Search Console can quickly become complex. In that case, guidance from a specialized SEO agency helps you avoid configuration errors and fine-tune your crawl strategy.

❓ Frequently Asked Questions

Can you declare multiple sitemaps in a single robots.txt file?

Does the sitemap directive speed up indexing compared to Search Console?

What happens if the sitemap declared in robots.txt is inaccessible?

Should you declare the sitemap in robots.txt if you already use a sitemap index?

Do crawlers other than Google honor the sitemap directive in robots.txt?

🎥 From the same video 12

Other SEO insights extracted from this same Google Search Central video · published on 04/12/2024
