Official statement

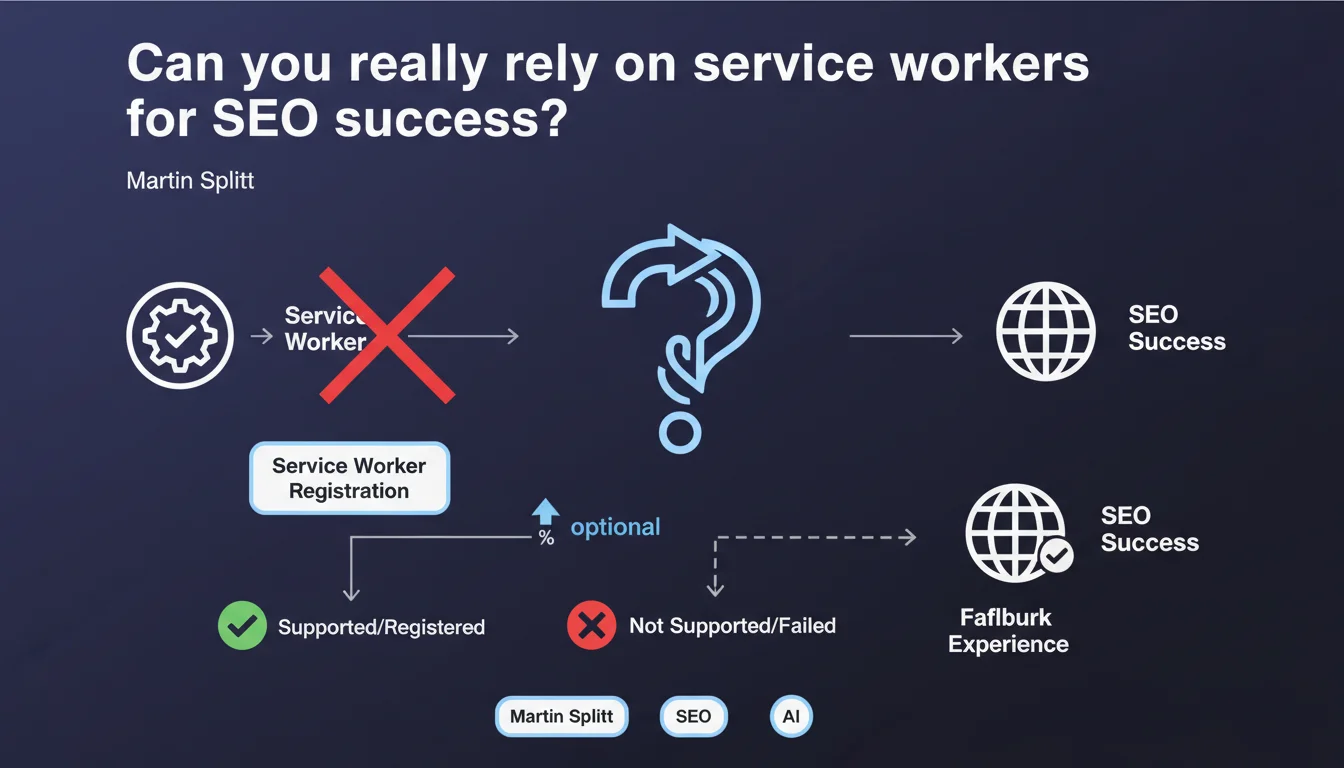

Google reminds us that service workers are optional and can fail during registration — browsers may refuse them. A site must remain functional and crawlable even if the service worker doesn't activate. For SEO, this means you can never rely solely on a service worker to serve critical content.

What you need to understand

Why does Google emphasize that service workers are optional?

Service workers are scripts that run in the background of the browser and intercept network requests. They allow you to cache resources, serve content offline, and improve performance.

The problem: their registration is never guaranteed. A browser may refuse to register them for security reasons (service workers require a secure context, typically HTTPS), because of privacy settings, or simply because the user has disabled JavaScript. Googlebot itself can encounter registration failures during crawling.
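A minimal sketch of a defensive registration, assuming the page already works from plain server responses. The function name `registerOptionalWorker`, the `NavigatorLike` interface, and the `/sw.js` path are illustrative assumptions, not something taken from Google's statement. The point is only that a rejected or unavailable registration is treated as a normal outcome rather than an error that breaks the page.

```typescript
// Minimal shape of the parts of `navigator` this sketch touches, declared
// locally so the example also type-checks outside a browser.
interface ServiceWorkerContainerLike {
  register(scriptUrl: string): Promise<unknown>;
}
interface NavigatorLike {
  serviceWorker?: ServiceWorkerContainerLike;
}

type RegistrationOutcome = "registered" | "unsupported" | "failed";

// Try to register the worker, but never let a failure break the page.
async function registerOptionalWorker(
  nav: NavigatorLike,
  scriptUrl: string, // hypothetical path, e.g. "/sw.js"
): Promise<RegistrationOutcome> {
  if (!nav.serviceWorker) {
    // API not exposed (for example in an insecure context):
    // the page simply keeps using ordinary network requests.
    return "unsupported";
  }
  try {
    await nav.serviceWorker.register(scriptUrl);
    return "registered";
  } catch {
    // Registration was refused: the content must already be
    // present in the server response, so nothing else to do.
    return "failed";
  }
}
```

In a browser this could be called as `registerOptionalWorker(navigator, "/sw.js")`. Whatever the outcome, the rest of the page should not wait on it.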

What does this change for crawling and indexing?

If your site relies on a service worker to serve main content or manage navigation, you're taking a major risk. Googlebot must be able to access content even if the service worker fails.

Concretely, this mainly affects Progressive Web Apps (PWAs) that rely heavily on service workers. If content is only accessible through the service worker, and that worker fails to register, Googlebot will see an empty or broken page.

What are the concrete technical implications?

- A site must always have a classic HTML version accessible without a service worker

- Critical content must never depend solely on a script intercepting requests

- Caching strategies must be designed as progressive enhancement, not as a dependency

- Crawlability tests must simulate scenarios where the service worker fails

- Internal navigation must remain functional even if the service worker doesn't load

SEO Expert opinion

Is this statement consistent with real-world observations?

Yes, completely. I've seen several PWAs lose rankings because their content was only accessible through a poorly implemented service worker. Googlebot is finicky with these scripts — sometimes it executes them, sometimes it doesn't.

The real issue is that many developers think that if their service worker works locally, it will work everywhere. Wrong. Crawling conditions are different: shorter timeouts, stricter JavaScript environment, different cache management.

What nuances should be added to this advice?

Google isn't saying service workers are bad for SEO. It's saying they must never be a single point of failure. Used correctly, they improve Core Web Vitals and user experience.

The important nuance: service workers can serve cached content to speed up navigation, but that content must also be directly accessible from the server. This is the principle of progressive enhancement applied at the network level.

[To verify]: Google remains vague about how often Googlebot fails to register a service worker. No official metrics are available, making it difficult to assess the real risk.

In what cases does this rule become critical?

Three scenarios to watch closely:

First, Single Page Applications (SPAs) that route everything through a service worker. If the worker crashes, internal navigation becomes invisible to Googlebot. Next, sites that serve dynamic content only through intercepted requests — if the interception fails, the content disappears.

Finally, poorly configured cache-first strategies can serve outdated content to Googlebot for weeks. If the service worker doesn't update, the bot crawls a stale version of the site.
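One way to limit the stale-cache risk is to give cached entries an expiry. The sketch below assumes each cached entry carries the timestamp at which it was stored; `CachedEntry`, `MAX_AGE_MS` (one hour here), and `cacheFirstWithExpiry` are hypothetical names chosen for illustration, and the right freshness budget depends on how often your content changes.

```typescript
interface CachedEntry {
  body: string;
  storedAtMs: number; // timestamp recorded when the entry was cached
}

// Assumed freshness budget for this sketch: one hour.
const MAX_AGE_MS = 60 * 60 * 1000;

function isFresh(
  entry: CachedEntry,
  nowMs: number,
  maxAgeMs: number = MAX_AGE_MS,
): boolean {
  return nowMs - entry.storedAtMs <= maxAgeMs;
}

// Serve from cache only while the entry is fresh. Otherwise ask the server,
// and fall back to the stale copy only if the network request fails.
async function cacheFirstWithExpiry(
  entry: CachedEntry | undefined,
  nowMs: number,
  fetchFromServer: () => Promise<string>,
): Promise<string> {
  if (entry && isFresh(entry, nowMs)) {
    return entry.body;
  }
  try {
    return await fetchFromServer();
  } catch (error) {
    if (entry) {
      return entry.body; // a stale copy is still better than an error page
    }
    throw error;
  }
}
```

With this shape, an entry older than the budget is never served while the server is reachable, so a crawler cannot be pinned to an old version indefinitely.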

Practical impact and recommendations

What should you check first on your site?

Start by completely disabling service workers in your browser and navigate your site. Everything must work normally: navigation, content loading, forms. If something breaks, you have an SEO problem.
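For a quick check, existing registrations can also be removed programmatically. This is a sketch that relies on the standard `getRegistrations()` and `unregister()` methods of the Service Worker API; the helper name `unregisterAllWorkers` and the two local interfaces are assumptions made so the example type-checks outside a browser.

```typescript
// Local stand-ins for the browser types used by this sketch.
interface RegistrationLike {
  unregister(): Promise<boolean>;
}
interface WorkerContainerLike {
  getRegistrations(): Promise<ReadonlyArray<RegistrationLike>>;
}

// Unregister every service worker on the current origin and
// return how many registrations were actually removed.
async function unregisterAllWorkers(
  container: WorkerContainerLike,
): Promise<number> {
  const registrations = await container.getRegistrations();
  const results = await Promise.all(
    registrations.map((registration) => registration.unregister()),
  );
  return results.filter((removed) => removed).length;
}
```

In a page context this would be called as `unregisterAllWorkers(navigator.serviceWorker)`. Browser consoles run JavaScript, so the type annotations need to be stripped or the file compiled first. Reload the page afterwards and check that everything still works.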

Next, use the URL Inspection tool in Search Console to test your URLs live. Look at the rendered HTML: if main content is missing, a service worker failure on Googlebot's side is a likely cause.

What errors should you avoid during implementation?

The most common mistake: routing all requests through the service worker without a server fallback. If the worker fails, the user (or Googlebot) ends up facing a network error.
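A possible way to avoid this is to let the worker handle only a narrow set of requests and leave everything else to the browser. The sketch below is one such policy, not a recommendation from Google: the `shouldIntercept` helper, the `RequestLike` interface, and the list of cacheable destinations are assumptions. It relies on the fact that a `fetch` handler which returns without calling `event.respondWith()` lets the request go to the network as usual.

```typescript
// Subset of the standard Request fields this policy looks at.
interface RequestLike {
  method: string;
  mode: string;        // "navigate" for top-level page loads
  destination: string; // e.g. "image", "style", "script", "document", or ""
}

// Assumed policy: only static assets are ever served by the worker.
const CACHEABLE_DESTINATIONS = ["image", "style", "script", "font"];

function shouldIntercept(request: RequestLike): boolean {
  if (request.method !== "GET") {
    return false; // never intercept form posts or other writes
  }
  if (request.mode === "navigate") {
    return false; // page loads always go to the server
  }
  // Data requests made with fetch() have an empty destination and are
  // therefore left alone as well.
  return CACHEABLE_DESTINATIONS.indexOf(request.destination) !== -1;
}

// Inside a real worker, the handler would start with something like:
//   if (!shouldIntercept(event.request)) return; // browser handles it
//   event.respondWith(/* cache lookup with network fallback */);
```

With this policy, HTML documents and API calls never depend on the worker, so a failed registration changes performance but not content.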

Another frequent trap: delivering SEO-critical elements (title tags, meta descriptions, main content) only through cached responses, without also serving them directly in the initial HTML. The service worker should improve performance, not replace server rendering.

Finally, never configure a cache-only strategy for indexable content. Always plan a degraded mode that fetches data from the server if the cache is empty or invalid.
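The degraded mode described above can be sketched as a cache lookup that falls through to the server. `CacheLike` is a simplified stand-in for a real cache (it stores plain strings rather than `Response` objects), and treating an empty body as invalid is an assumption of this example; your own validity check may differ.

```typescript
// Simplified cache abstraction used only for this sketch.
interface CacheLike {
  get(url: string): Promise<string | undefined>;
  put(url: string, body: string): Promise<void>;
}

// Return the cached body when it exists and is non-empty; otherwise fetch
// from the server, store the result, and return it.
async function cacheWithNetworkFallback(
  url: string,
  cache: CacheLike,
  fetchFromServer: (url: string) => Promise<string>,
): Promise<string> {
  const cached = await cache.get(url);
  if (cached !== undefined && cached !== "") {
    return cached;
  }
  const fresh = await fetchFromServer(url); // degraded mode: go to the server
  await cache.put(url, fresh);
  return fresh;
}
```

Unlike a cache-only strategy, a miss here costs one extra network request instead of producing an empty page.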

How do you test the resilience of your architecture?

- Disable JavaScript and verify that main content displays

- Block service worker registration in DevTools and test navigation

- Simulate a network failure to see how the site behaves without cache

- Inspect URLs via Search Console in live mode to verify Googlebot rendering

- Verify that the title, meta description, and canonical tags are present in the source HTML, not just injected by JavaScript

- Test redirects and HTTP status codes — they must be handled server-side, never through the service worker

- Audit caching strategies to avoid serving stale content to Googlebot
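Part of this checklist can be automated. As a rough sketch, the function below scans the raw HTML returned by the server (before any JavaScript runs) for a non-empty title, a meta description, and a canonical link. The name `auditSourceHtml` and the regular expressions are assumptions for illustration; regex matching is only a heuristic and not a full HTML parser.

```typescript
interface SeoTagReport {
  hasTitle: boolean;
  hasMetaDescription: boolean;
  hasCanonical: boolean;
}

// Heuristic check of the server-delivered HTML, with no JavaScript executed.
function auditSourceHtml(html: string): SeoTagReport {
  return {
    // A <title> element containing at least one non-whitespace character.
    hasTitle: /<title[^>]*>\s*[^<\s][^<]*<\/title>/i.test(html),
    // A <meta name="description"> tag is present (its content is not checked).
    hasMetaDescription:
      /<meta\b[^>]*\bname\s*=\s*["']description["'][^>]*>/i.test(html),
    // A <link rel="canonical"> tag is present.
    hasCanonical:
      /<link\b[^>]*\brel\s*=\s*["']canonical["'][^>]*>/i.test(html),
  };
}
```

Feed it the response body of a plain HTTP request to the page. If any field comes back `false`, that element is probably being injected client-side and is worth checking in the rendered HTML shown by Search Console.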

Source: Google Search Central video, published on 01/11/2022.
