Official statement

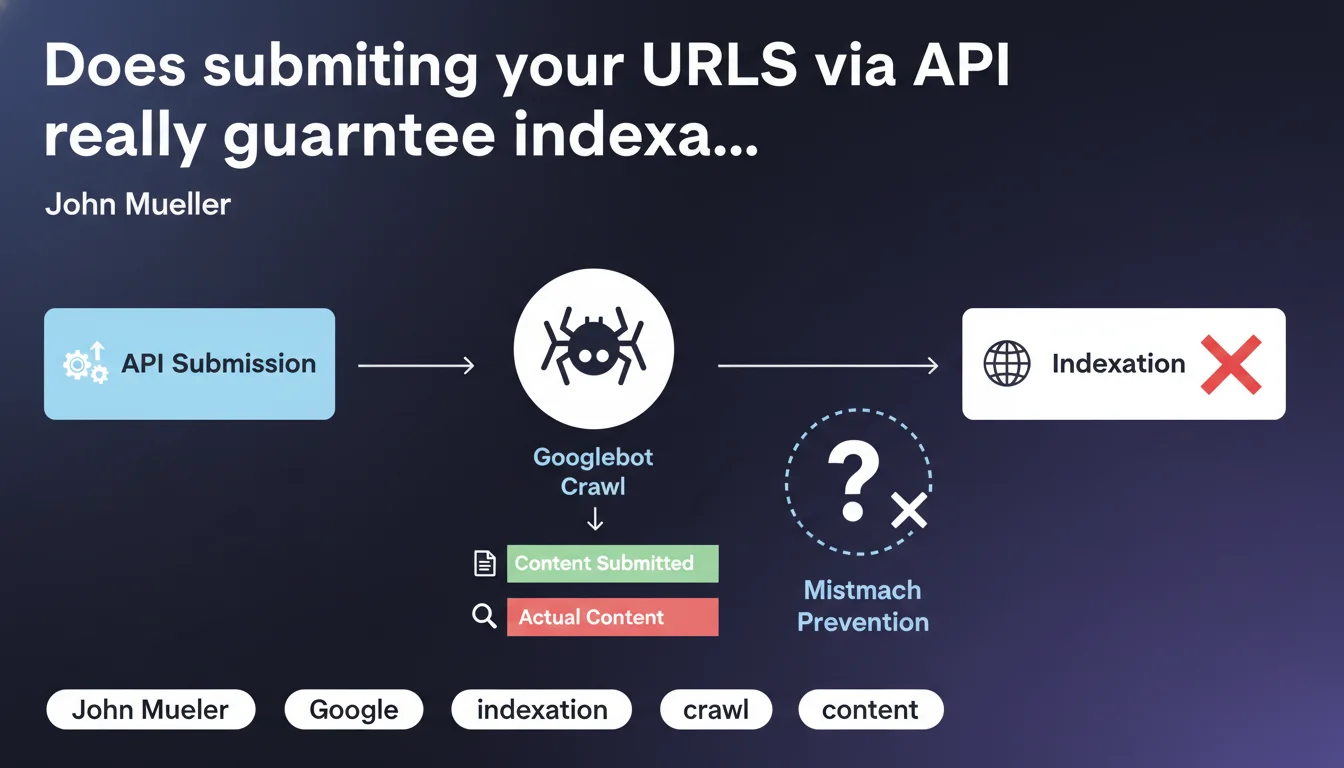

Google confirms that submitting a URL via the Indexing API or IndexNow is not enough: the search engine must crawl the page to verify that the actual content matches what was declared. This systematic verification prevents mismatches between the signals sent and the real content on the ground.

What you need to understand

Why does Google insist on crawling after submission?

The issue here is straightforward: preventing manipulation. If Google blindly indexed all submitted URLs without verifying their content, any site could declare premium content while actually serving spam.

Crawling allows Google to validate consistency between what is announced (via API) and what is actually served to users. It's an essential security layer to maintain index quality.

What exactly happens when you submit a URL?

API submission acts as a priority signal. It tells Google "this page deserves your attention now," but it doesn't guarantee immediate indexation or bypass the crawl requirement.

Google adds the URL to its crawl queue, potentially with higher priority, then sends Googlebot to verify. Indexation can only happen after this verification — or not at all, depending on what the bot finds.
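To make the "priority signal" idea concrete, here is a minimal sketch of what a submission actually contains. The endpoints and field names follow the public Indexing API and IndexNow documentation; the helper names, example URLs and key value are hypothetical. Nothing is sent over the network here, and a real Indexing API call also needs an OAuth 2.0 token from a service account.

```python
import json

# Endpoint and body shape as described in Google's public Indexing API documentation.
INDEXING_API_ENDPOINT = "https://indexing.googleapis.com/v3/urlNotifications:publish"
# Shared IndexNow endpoint; participating engines also expose their own.
INDEXNOW_ENDPOINT = "https://api.indexnow.org/indexnow"


def indexing_api_body(url: str, deleted: bool = False) -> str:
    """Build the JSON body for one Indexing API notification."""
    notification_type = "URL_DELETED" if deleted else "URL_UPDATED"
    return json.dumps({"url": url, "type": notification_type})


def indexnow_body(host: str, key: str, urls: list[str]) -> str:
    """Build the JSON body for an IndexNow batch.

    Assumes the key file is hosted at the site root as <key>.txt.
    """
    return json.dumps({
        "host": host,
        "key": key,
        "keyLocation": f"https://{host}/{key}.txt",
        "urlList": urls,
    })


if __name__ == "__main__":
    # Hypothetical URL and key, for illustration only.
    print(indexing_api_body("https://www.example.com/jobs/1234"))
    print(indexnow_body("www.example.com", "hypothetical-key",
                        ["https://www.example.com/jobs/1234"]))
```

Notice that neither body carries any page content, only the URL. The engine has nothing to index until it fetches the page itself, which is exactly why the crawl step can't be skipped.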

Does this change anything for high-velocity sites?

Yes and no. For news or e-commerce sites publishing massive amounts of content, the Indexing API accelerates discovery but doesn't eliminate the crawl step. The time savings come from prioritization, not validation.

Sites with limited crawl budget can optimize their submissions to concentrate Googlebot resources on strategic content — but the bot will still pass through.

- API submission is a priority signal, not a shortcut to indexation

- Crawling remains mandatory to validate content/declaration matching

- This dual verification protects the index against manipulation

- The real advantage: accelerating discovery, not bypassing the process

SEO Expert opinion

Is this statement consistent with real-world observations?

Absolutely. Testing has long shown that submitting a URL guarantees nothing. We regularly observe URLs submitted via API that take several days to be crawled — or never get indexed if the content is problematic.

What John Mueller confirms here is that crawling remains the mandatory step. APIs aren't magic levers but prioritization tools. Those hoping to bypass the standard process can abandon that idea.

What nuances should we add to this rule?

First point: Google doesn't specify the delay between submission and crawl. An authoritative site with good crawl budget will likely see its submitted URLs crawled quickly. A weaker site will wait. One question remains open and unverified: does Google apply an implicit turnaround time that depends on the site's profile?

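Second nuance, and it's significant: this statement applies to standard indexation. Google officially documents the Indexing API only for pages carrying JobPosting or BroadcastEvent (livestream) structured data, and this kind of real-time content sometimes benefits from differentiated treatment, where API submission carries more immediate weight.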

In what cases can this verification cause problems?

Let's be honest: if your content changes frequently between submission and actual crawl, you risk desynchronization. Imagine a price changing every hour or stock fluctuating. The bot crawls with a delay and indexes outdated info.

Another problematic case: sites that serve different content based on user-agent. If what's shown to Googlebot doesn't match what was promised in the submission, you risk a cloaking penalty — even if unintentional.

Practical impact and recommendations

What should you actually do with this information?

Stop believing the Indexing API is a magic wand. Use it to signal priority content (new products, news articles, strategic pages), but continue optimizing your overall crawl budget.

Ensure your site is technically crawlable: clean robots.txt, updated XML sitemap, correct response times, no unnecessary redirect chains. If Googlebot can't crawl efficiently, API submission is useless.
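One of these checks is easy to automate before any submission: is the URL even allowed by robots.txt? The sketch below uses Python's standard `urllib.robotparser`; the function name and the sample rules are hypothetical. Keep in mind that this parser applies rules in file order, while Google uses the most specific matching rule, so treat the result as a first filter rather than an exact replica of Googlebot's behavior.

```python
from urllib.robotparser import RobotFileParser


def googlebot_can_fetch(robots_txt: str, url: str, user_agent: str = "Googlebot") -> bool:
    """Return True if the given robots.txt text allows user_agent to fetch url."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(user_agent, url)


# Hypothetical rules for illustration.
SAMPLE_ROBOTS = """\
User-agent: *
Disallow: /cart/
Disallow: /search
"""

if __name__ == "__main__":
    for candidate in (
        "https://www.example.com/products/blue-widget",
        "https://www.example.com/cart/checkout",
    ):
        print(candidate, googlebot_can_fetch(SAMPLE_ROBOTS, candidate))
```

A URL that fails this check should be fixed or dropped from the submission list: the API call would succeed, but the verification crawl could not happen.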

What mistakes must you absolutely avoid?

Don't massively submit low-quality URLs hoping to force indexation. You'll waste your API quota and, worse, send a negative signal about your site's quality.
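In practice, this means filtering and ranking URLs before they reach the API. Here is a minimal sketch, assuming you already hold a priority score and an indexability flag for each URL; the `Candidate` fields, the scores and the quota value are all hypothetical, and the real quota for your project should be checked in your Google Cloud Console.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Candidate:
    url: str
    priority: float     # hypothetical business score, higher means more important
    is_indexable: bool  # outcome of your own quality and technical checks


def select_for_submission(candidates: list[Candidate], daily_quota: int) -> list[str]:
    """Pick the highest-priority indexable URLs that fit within the daily quota."""
    eligible = [c for c in candidates if c.is_indexable]
    eligible.sort(key=lambda c: c.priority, reverse=True)
    return [c.url for c in eligible[:daily_quota]]


if __name__ == "__main__":
    pool = [
        Candidate("https://www.example.com/jobs/1", 0.9, True),
        Candidate("https://www.example.com/jobs/2", 0.5, True),
        Candidate("https://www.example.com/thin-page", 1.0, False),
    ]
    print(select_for_submission(pool, daily_quota=2))
```

Low-quality pages never enter the queue, regardless of how they score, so the quota is spent only on URLs that can survive the verification crawl.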

Avoid mismatches between what you submit and what's actually served. If you announce a page via API, it must be accessible and compliant at crawl time — not under construction, not 404, not redirected.
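A simple pre-submission gate can catch these cases. The sketch below works on a snapshot of a fetch you performed yourself (with whatever HTTP client you use); `FetchResult` and `submission_problems` are hypothetical names, and the `noindex` check is an extra assumption on top of the three cases listed above.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class FetchResult:
    requested_url: str
    final_url: str               # URL reached after following redirects
    status_code: int
    robots_directives: str = ""  # X-Robots-Tag or meta robots value, if any


def submission_problems(result: FetchResult) -> list[str]:
    """Return the reasons a URL should not be submitted yet (empty list means ready)."""
    problems = []
    if result.status_code != 200:
        problems.append(f"status {result.status_code}")
    if result.final_url != result.requested_url:
        problems.append(f"redirects to {result.final_url}")
    if "noindex" in result.robots_directives.lower():
        problems.append("noindex directive")
    return problems


if __name__ == "__main__":
    snapshot = FetchResult(
        requested_url="https://www.example.com/old-page",
        final_url="https://www.example.com/new-page",
        status_code=200,
    )
    print(submission_problems(snapshot))
```

Submit only when the list comes back empty, and run the check as close to submission time as possible so the page Googlebot finds is the one you announced.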

How do you verify everything is working correctly?

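Track your API submissions on your side. Search Console has no dedicated Indexing API report, so monitor request volume and quota in the Google Cloud Console, and check crawl and index status with Search Console's URL Inspection tool and Crawl Stats report. Cross-reference with server logs to identify the average delay between submission and actual crawl. If this delay is abnormally long, look for technical bottlenecks.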
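The log cross-check can be sketched in a few lines. This example assumes access logs in the common "combined" format and a record of your own submission timestamps keyed by path; the regular expression, function names and sample data are hypothetical. It matches on the user-agent string only, which can be spoofed, so a production version should also verify Googlebot by reverse DNS.

```python
import re
from datetime import datetime, timezone

# Matches the timestamp, request path and user agent of a combined-format log line.
LOG_LINE = re.compile(
    r'\[(?P<ts>[^\]]+)\] "(?:GET|HEAD) (?P<path>\S+) [^"]*" \d{3} .*"(?P<ua>[^"]*)"$'
)


def googlebot_hits(log_lines):
    """Map each path to the timestamps at which a Googlebot user agent requested it."""
    hits = {}
    for line in log_lines:
        match = LOG_LINE.search(line.strip())
        if match and "Googlebot" in match["ua"]:
            ts = datetime.strptime(match["ts"], "%d/%b/%Y:%H:%M:%S %z")
            hits.setdefault(match["path"], []).append(ts)
    return hits


def crawl_delays(submissions, log_lines):
    """Delay between each submission and the first Googlebot hit at or after it.

    Paths with no hit after submission map to None.
    """
    hits = googlebot_hits(log_lines)
    delays = {}
    for path, submitted_at in submissions.items():
        later = [ts for ts in hits.get(path, []) if ts >= submitted_at]
        delays[path] = min(later) - submitted_at if later else None
    return delays


# Hypothetical sample data for illustration.
SAMPLE_LOG = [
    '66.249.66.1 - - [05/May/2022:10:15:32 +0000] "GET /jobs/1234 HTTP/1.1" 200 5123 "-" '
    '"Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"',
    '203.0.113.7 - - [05/May/2022:10:16:00 +0000] "GET /jobs/1234 HTTP/1.1" 200 5123 "-" '
    '"Mozilla/5.0 (X11; Linux x86_64)"',
]

if __name__ == "__main__":
    submitted = {
        "/jobs/1234": datetime(2022, 5, 5, 8, 15, 32, tzinfo=timezone.utc),
        "/jobs/5678": datetime(2022, 5, 5, 9, 0, 0, tzinfo=timezone.utc),
    }
    for path, delay in crawl_delays(submitted, SAMPLE_LOG).items():
        print(path, delay)
```

Paths that come back as `None` are the ones to investigate first: they were announced but, as far as your logs show, never verified.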

Also verify that submitted pages are the ones generating traffic or conversions. A well-targeted API submission optimizes your crawl budget and concentrates Google resources where it matters.

- Reserve the Indexing API for truly priority and real-time content

- Maintain infrastructure that allows fast and efficient crawling

- Synchronize submissions with actual content availability

- Monitor submission/crawl delay to detect anomalies

- Avoid massive submissions of weak or duplicate content

- Cross-reference API data with server logs for complete visibility

❓ Frequently Asked Questions

Does the Indexing API guarantee faster indexation?

Can you use IndexNow and the Indexing API in parallel?

What happens if the content changes between submission and crawl?

Are pages submitted via API always crawled within 24 hours?

Should you submit every new page via API?

Source: Google Search Central video, published on 05/05/2022
