Official statement

Other statements from this video (11)

- Should you remove the 'priority' tag from your sitemaps?

- Should you really remove the 'changefreq' tag from your sitemaps?

- Why does Google ignore the 'lastmod' tag in your sitemaps?

- Should you still fill in the lastmod tag in your XML sitemaps?

- Should sitemap extensions be replaced with structured data?

- Should you abandon video and image tags in your XML sitemaps?

- Should you update lastmod when you add structured data?

- Why does creating a sitemap reveal more technical problems than it solves?

- Why do session IDs in URL parameters still threaten your site's crawl?

- Does a crawlable site really guarantee better user navigation?

- Should you really wait for the crawl even after submitting your URLs via API?

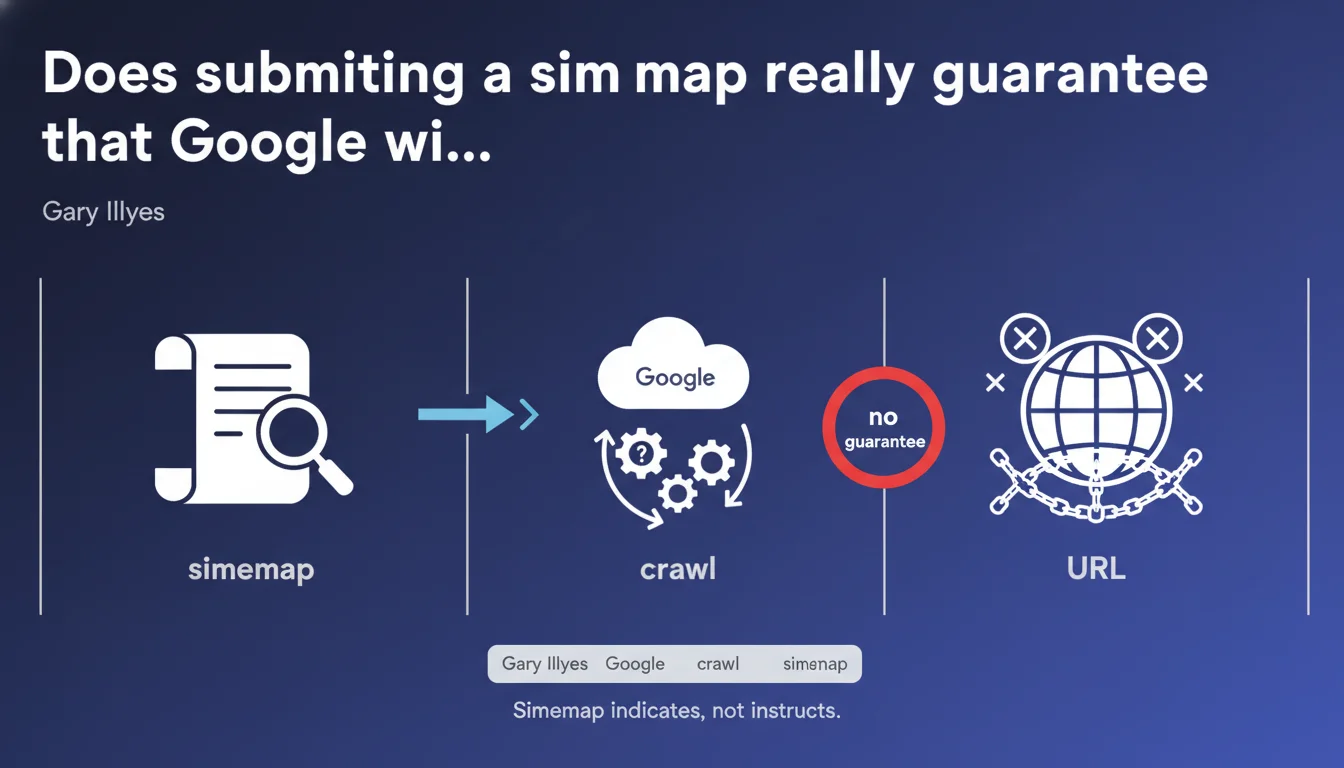

Submitting a sitemap to Google does not guarantee that your URLs will be crawled. The sitemap only declares that URLs exist; it is not a crawl instruction. The mechanism that decides what enters the crawl queue is far more complex than the mere presence of a URL in an XML file.

What you need to understand

What does this statement really mean for crawling?

Many SEO practitioners still view the sitemap as an automatic "entry ticket" into Google's index. This is a fundamental misunderstanding. The sitemap is a discovery tool, nothing more. It signals potentially interesting URLs, but imposes no priority or obligation on Googlebot.

The process that decides what will be crawled relies on dozens of signals: domain popularity, perceived content freshness, historical update frequency, the number of internal and external links pointing to the URL, the crawl budget allocated to the site, and a multitude of other opaque factors. The sitemap is just one piece of data among many.

Why does Google emphasize this point so strongly?

Because millions of webmasters submit sitemaps thinking that it's enough to solve their indexation problems. Spoiler: it isn't. Google wants to prevent site owners from attributing magical powers to the sitemap, when it only suggests URLs — without any guarantee of processing.

This statement is meant to temper expectations. Too many sites complain that their pages are not indexed "even though they're in the sitemap." Gary Illyes is reminding us here that being listed in a sitemap is neither sufficient nor always necessary for a URL to be crawled.

What factors really take precedence over the sitemap?

The sitemap is a weak signal compared to other levers. Internal links, for example, have a far more decisive impact on the probability of crawl. A URL buried deep in the site structure, even if present in the sitemap, risks being ignored if no relevant internal link reinforces it.
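To spot sitemap URLs that are buried deep or not reachable through internal links at all, you can compute click depth from the homepage with a breadth-first search over your internal link graph. The sketch below is a minimal illustration, assuming you already have that graph as a dictionary (for example, exported from your own crawler); the URLs, the `click_depths` and `audit_sitemap` helpers, and the `max_depth=3` threshold are all hypothetical, not Google values.

```python
from collections import deque


def click_depths(links: dict[str, list[str]], home: str) -> dict[str, int]:
    """Breadth-first search from the homepage: depth = minimum number of clicks."""
    depths = {home: 0}
    queue = deque([home])
    while queue:
        page = queue.popleft()
        for target in links.get(page, []):
            if target not in depths:  # in BFS, the first visit is the shortest path
                depths[target] = depths[page] + 1
                queue.append(target)
    return depths


def audit_sitemap(sitemap_urls, links, home, max_depth=3):
    """Split sitemap URLs into orphans (unreachable) and pages deeper than max_depth."""
    depths = click_depths(links, home)
    orphans = sorted(u for u in sitemap_urls if u not in depths)
    deep = sorted(u for u in sitemap_urls if depths.get(u, -1) > max_depth)
    return orphans, deep


# Hypothetical internal link graph: page -> pages it links to.
links = {
    "/": ["/category", "/blog"],
    "/category": ["/category/page-2"],
    "/category/page-2": ["/category/page-3"],
    "/category/page-3": ["/product-42"],
}
sitemap = ["/", "/blog", "/product-42", "/product-99"]
orphans, deep = audit_sitemap(sitemap, links, "/")
print("orphans:", orphans)  # orphans: ['/product-99']
print("deep:", deep)        # deep: ['/product-42']
```

Here `/product-42` is listed in the sitemap but sits four clicks from the homepage, and `/product-99` has no internal path at all: the kind of URLs the paragraph above describes as likely to be ignored.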

Likewise, crawl budget is a major filter. A site with low authority or technical issues (slow response times, frequent server errors) will have its URLs crawled sparingly, sitemap or not. Google adjusts its crawling to the perceived value of the site and to how much load the site's server can handle.

- The sitemap does not guarantee crawl, it only signals the existence of URLs

- Crawling depends on multiple factors: popularity, freshness, links, crawl budget

- A poorly designed or oversized sitemap can even dilute Googlebot's attention

- Internal links remain the priority lever for directing crawl

- Quality always takes precedence over quantity: a clean sitemap is better than an exhaustive one

SEO Expert opinion

Is this statement consistent with observations in the field?

Absolutely. In practice, we regularly see URLs listed in a sitemap that are never crawled, or only crawled months later. Conversely, URLs missing from the sitemap but well linked internally can be discovered and indexed within days. The sitemap is one signal among others, not a decisive lever.

What's troubling is that Google maintains some ambiguity about the true usefulness of the sitemap. In its official documentation, it recommends submitting a sitemap "to help with discovery." But in practice, we observe that its impact is often marginal on well-structured sites with good internal linking. [Needs verification]: Google could clarify the specific contexts where the sitemap provides measurable added value.

What nuances should be added to this statement?

There are cases where the sitemap remains essential. On very large sites (e-commerce with millions of references, content aggregators), the sitemap allows signaling isolated URLs that don't benefit from optimal internal linking. In these configurations, the sitemap serves as a safety net to prevent strategic pages from flying under the radar.

Similarly, for content published very frequently (media, active blogs), the sitemap allows Google to detect new URLs more quickly — although, once again, this does not guarantee immediate crawling. The sitemap accelerates discovery, but forces no priority in crawl queues.

In what cases does this rule not really apply?

For very small sites (fewer than 100 pages), the sitemap is often superfluous. If internal linking is clear and coherent, Googlebot will naturally discover all pages by following links. The sitemap becomes merely an administrative formality with no real impact on crawling.

Conversely, on technically complex sites (heavy JavaScript, AJAX pagination, dynamic facets), the sitemap can serve as a backup signal to ensure that certain critical URLs are at least known to Google — although, again, nothing guarantees that they will be crawled with priority.

Practical impact and recommendations

What should you concretely do with your sitemap?

First rule: clean ruthlessly. Keep in your sitemap only the URLs you actually want indexed. Remove noindexed pages, redirected URLs, pages returning 404 errors, and pages whose canonical tag points to a different URL. A pared-down sitemap sends a quality signal to Googlebot.
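The cleaning rule above can be expressed as a simple filter over crawl data. This is a minimal sketch, assuming you already have, for each URL, the HTTP status it returns (without following redirects), whether it carries a noindex directive, and its declared canonical, for example from your own crawler export. The `PageRecord` type, its field names and the example URLs are hypothetical.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class PageRecord:
    url: str
    status: int                      # HTTP status returned for this URL (redirects not followed)
    noindex: bool                    # meta robots or X-Robots-Tag noindex present
    canonical: Optional[str] = None  # rel=canonical target, None if absent


def is_sitemap_eligible(page: PageRecord) -> bool:
    """Keep only URLs that answer 200, are indexable and are canonical to themselves."""
    if page.status != 200:  # excludes redirects (3xx) and errors (4xx/5xx)
        return False
    if page.noindex:
        return False
    # Exact string comparison; normalize URLs (scheme, host, trailing slash) first in practice.
    if page.canonical is not None and page.canonical != page.url:
        return False  # canonical points to another URL
    return True


pages = [
    PageRecord("https://example.com/a", 200, False, "https://example.com/a"),
    PageRecord("https://example.com/b", 301, False),
    PageRecord("https://example.com/c", 404, False),
    PageRecord("https://example.com/d", 200, True),
    PageRecord("https://example.com/e", 200, False, "https://example.com/a"),
    PageRecord("https://example.com/f", 200, False),
]
clean = [p.url for p in pages if is_sitemap_eligible(p)]
print(clean)  # ['https://example.com/a', 'https://example.com/f']
```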

Next, segment your sitemaps by content type or site section. Rather than one monolithic file of 50,000 URLs (the per-file maximum in the sitemap protocol), create several thematic sitemaps (products, categories, editorial content, etc.) referenced from a sitemap index file. This lets you track Google's crawl behavior more closely and identify under-crawled areas.
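Segmentation is typically done with several sitemap files listed in one sitemap index. The sketch below uses Python's standard `xml.etree.ElementTree` and assumes your URLs are already grouped by section; the section names, file names and base URL are hypothetical. It also splits any section larger than 50,000 URLs, the per-file limit of the sitemaps.org protocol (the 50 MB uncompressed size limit is not checked here).

```python
import xml.etree.ElementTree as ET

NS = "http://www.sitemaps.org/schemas/sitemap/0.9"
MAX_URLS = 50_000  # per-file URL limit in the sitemaps.org protocol


def build_urlset(urls: list[str]) -> bytes:
    """Serialize one <urlset> sitemap file."""
    urlset = ET.Element("urlset", xmlns=NS)
    for url in urls:
        ET.SubElement(ET.SubElement(urlset, "url"), "loc").text = url
    return ET.tostring(urlset, encoding="utf-8", xml_declaration=True)


def build_sitemaps(sections: dict[str, list[str]], base_url: str) -> dict[str, bytes]:
    """Return {filename: xml_bytes}: one or more files per section, plus an index."""
    files: dict[str, bytes] = {}
    for name, urls in sections.items():
        for start in range(0, len(urls), MAX_URLS):
            chunk = urls[start:start + MAX_URLS]
            filename = f"sitemap-{name}-{start // MAX_URLS + 1}.xml"
            files[filename] = build_urlset(chunk)
    index = ET.Element("sitemapindex", xmlns=NS)
    for filename in files:
        ET.SubElement(ET.SubElement(index, "sitemap"), "loc").text = f"{base_url}/{filename}"
    files["sitemap-index.xml"] = ET.tostring(index, encoding="utf-8", xml_declaration=True)
    return files


# Hypothetical sections for illustration.
sections = {
    "products": [f"https://example.com/p/{i}" for i in range(3)],
    "editorial": ["https://example.com/blog/a"],
}
for name, content in build_sitemaps(sections, "https://example.com").items():
    print(name, len(content))
```

With one file per section, the Sitemaps report in Search Console can then be read section by section, which is what makes under-crawled areas visible.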

What mistakes should you absolutely avoid?

Don't overload your sitemap with unnecessary URLs. Including all filter variants, all pagination pages, all URL parameters dilutes Googlebot's attention and can even slow the crawling of strategic pages. Selectivity is crucial.
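Selectivity can be enforced when the sitemap is generated, by rejecting URLs whose query string carries parameters you have not explicitly approved (filters, sorting, pagination, session IDs). This is a minimal sketch with the standard `urllib.parse` module; the allowlist approach and the `ALLOWED_PARAMS` contents are hypothetical choices to adapt to your own URL scheme. An allowlist is used rather than a blocklist so that a newly introduced filter parameter stays out of the sitemap by default.

```python
from urllib.parse import parse_qs, urlsplit

# Hypothetical allowlist: parameters that genuinely identify distinct, indexable content.
ALLOWED_PARAMS = {"id"}


def keep_for_sitemap(url: str) -> bool:
    """Keep a URL only if every query parameter it carries is allowlisted."""
    params = parse_qs(urlsplit(url).query, keep_blank_values=True)
    return set(params) <= ALLOWED_PARAMS


urls = [
    "https://example.com/shoes",
    "https://example.com/shoes?color=red",          # filter variant
    "https://example.com/shoes?sort=price&page=2",  # sorting + pagination
    "https://example.com/product?id=42",
    "https://example.com/product?id=42&sessionid=abc",
]
print([u for u in urls if keep_for_sitemap(u)])
# ['https://example.com/shoes', 'https://example.com/product?id=42']
```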

Also avoid modifying your sitemap constantly without a strategic reason. Google fetches sitemaps on its own schedule, so resubmitting them every hour won't speed anything up. Focus on the stability and consistency of the file rather than on update frequency.
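One way to keep the file stable is to render it deterministically (deduplicated, sorted URLs) and rewrite it only when its content actually changes. This is a minimal sketch under that assumption; the `render_sitemap` and `write_if_changed` helpers and the output file name are hypothetical, and the escaping follows the sitemap protocol's requirement to entity-escape `&`, `<`, `>`, `'` and `"`.

```python
import tempfile
from pathlib import Path
from xml.sax.saxutils import escape

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"
EXTRA_ENTITIES = {"'": "&apos;", '"': "&quot;"}  # escape() already handles &, < and >


def render_sitemap(urls) -> str:
    """Deterministic rendering: the same set of URLs always yields identical text."""
    lines = ['<?xml version="1.0" encoding="UTF-8"?>', f'<urlset xmlns="{SITEMAP_NS}">']
    for url in sorted(set(urls)):  # dedupe and sort so input order does not matter
        loc = escape(url, EXTRA_ENTITIES)
        lines.append(f"  <url><loc>{loc}</loc></url>")
    lines.append("</urlset>")
    return "\n".join(lines) + "\n"


def write_if_changed(path: Path, content: str) -> bool:
    """Write the sitemap only when its bytes differ from the file on disk."""
    data = content.encode("utf-8")
    if path.exists() and path.read_bytes() == data:
        return False  # unchanged: leave the file and its modification time alone
    path.write_bytes(data)
    return True


with tempfile.TemporaryDirectory() as tmp:
    target = Path(tmp) / "sitemap.xml"
    print(write_if_changed(target, render_sitemap(["https://example.com/b", "https://example.com/a"])))  # True
    print(write_if_changed(target, render_sitemap(["https://example.com/a", "https://example.com/b"])))  # False
```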

- Clean your sitemap: remove all non-indexable URLs (noindex, 404, redirects)

- Segment your sitemaps by content type for granular monitoring

- Limit yourself to strategic URLs — quality takes precedence over quantity

- Regularly check the Page indexing (formerly Coverage) and Sitemaps reports in Search Console

- Strengthen internal linking of priority URLs — the sitemap doesn't replace links

- Monitor crawl budget: identify pages crawled unnecessarily and block them via robots.txt

- Never rely on the sitemap alone to solve an indexing problem
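When you block URLs through robots.txt, it is worth checking that none of your sitemap URLs are caught by those rules, since a URL that is both listed and blocked sends contradictory signals. This is a minimal sketch using Python's standard `urllib.robotparser`; the robots.txt rules and URLs are hypothetical. Python's parser uses simple prefix matching and first-match ordering, which does not reproduce every detail of Google's own matching (wildcards, longest-match precedence), so treat the result as an approximation.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt content.
ROBOTS_TXT = """\
User-agent: *
Disallow: /search
Disallow: /cart
"""


def blocked_sitemap_urls(robots_txt: str, sitemap_urls, agent: str = "Googlebot") -> list[str]:
    """Return the sitemap URLs that the given robots.txt rules disallow for `agent`."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [u for u in sitemap_urls if not parser.can_fetch(agent, u)]


urls = [
    "https://example.com/shoes",
    "https://example.com/search?q=boots",
    "https://example.com/cart",
]
print(blocked_sitemap_urls(ROBOTS_TXT, urls))
```

Any URL this returns should either be removed from the sitemap or prompt a second look at the robots.txt rule.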

How can you ensure Google effectively crawls your strategic pages?

The sitemap is one tool among many. To maximize crawling of priority pages, focus on solid internal linking, fast server response times, and a clear architecture. Use Search Console (the Crawl stats report in particular) to see which URLs are crawled and how often, then adjust your strategy accordingly.

If you find that critical pages are never crawled despite being in the sitemap, it's a sign of a deeper problem: lack of internal links, content perceived as low value, or insufficient crawl budget. In this case, reviewing the overall site architecture and strengthening relevance signals becomes the priority.
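Server access logs complement Search Console by showing exactly which sitemap URLs Googlebot has requested. This is a minimal sketch, assuming logs in the common Apache/Nginx "combined" format and sitemap entries already reduced to paths; the regex, sample lines and helper names are illustrative. A user-agent string can be spoofed, so in production you would also verify Googlebot through a reverse DNS lookup, as Google documents.

```python
import re
from collections import Counter

# Captures the request path and the user agent from a "combined" format log line.
LOG_PATTERN = re.compile(
    r'"(?:GET|HEAD) (?P<path>\S+) HTTP/[\d.]+" \d{3} \S+ "[^"]*" "(?P<agent>[^"]*)"'
)


def googlebot_hits(log_lines) -> Counter:
    """Count requests per path whose user agent claims to be Googlebot."""
    hits = Counter()
    for line in log_lines:
        match = LOG_PATTERN.search(line)
        if match and "Googlebot" in match.group("agent"):
            hits[match.group("path")] += 1
    return hits


def never_crawled(sitemap_paths, log_lines) -> list[str]:
    """Sitemap paths with zero Googlebot requests in the given log lines."""
    hits = googlebot_hits(log_lines)
    return sorted(p for p in sitemap_paths if hits[p] == 0)


sample_log = [
    '66.249.66.1 - - [05/May/2022:10:00:00 +0000] "GET /products/a HTTP/1.1" 200 5120 "-" '
    '"Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"',
    '203.0.113.5 - - [05/May/2022:10:01:00 +0000] "GET /products/b HTTP/1.1" 200 4096 "-" '
    '"Mozilla/5.0 (Windows NT 10.0; Win64; x64)"',
]
print(never_crawled(["/products/a", "/products/b", "/products/c"], sample_log))
# ['/products/b', '/products/c']
```

Paths are compared exactly, so a logged request with a query string will not match its bare sitemap path.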

❓ Frequently Asked Questions

Is a sitemap required for Google to index my site?

How long after a sitemap is submitted does Google crawl its URLs?

Should I include all my pages in the sitemap?

Does the sitemap influence rankings in search results?

What should I do if Google doesn't crawl my URLs even though they're in the sitemap?

🎥 From the same video 11

Other SEO insights extracted from this same Google Search Central video · published on 05/05/2022
