Official statement


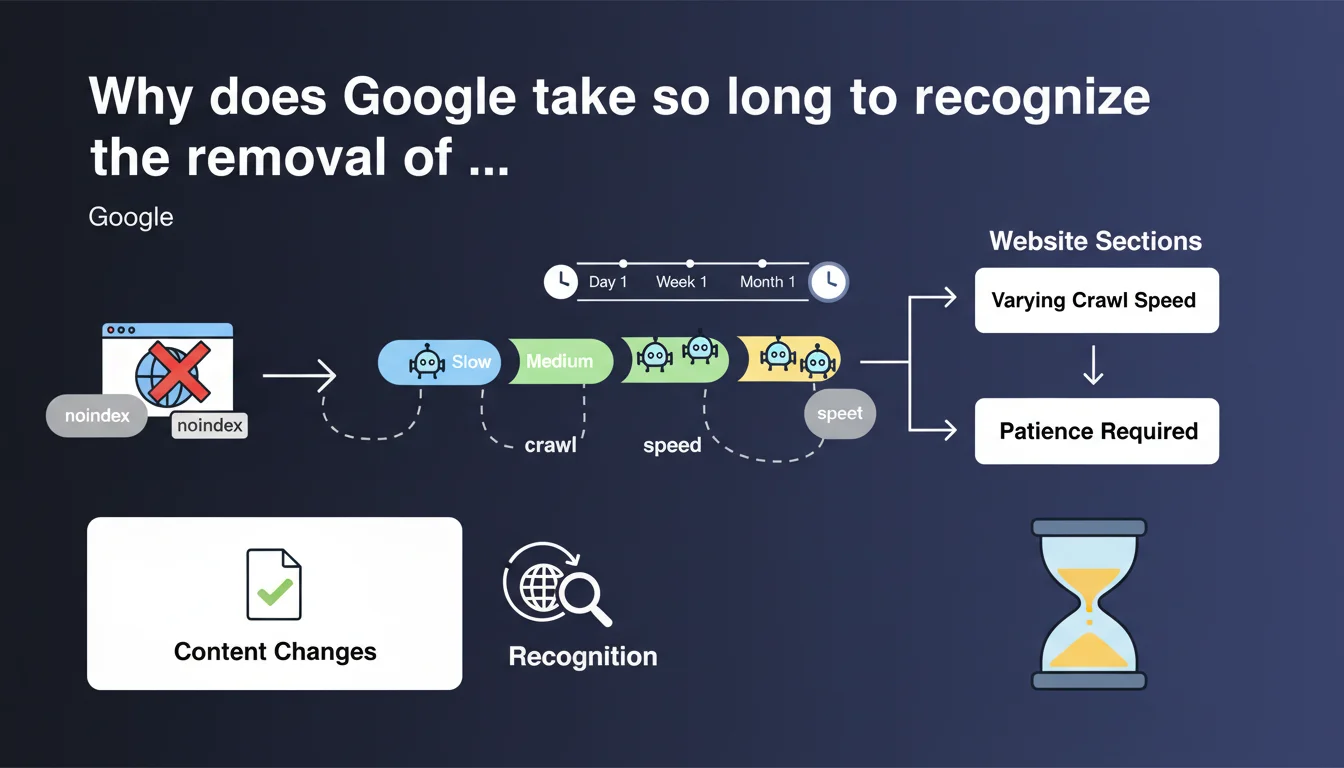

Google does not instantly detect changes to a noindex tag: the delay depends on how often each section of your site is crawled. After fixing a large-scale noindex error, you have to accept a variable waiting period before the affected pages are fully reindexed. Patience is necessary, but that does not mean doing nothing.

What you need to understand

What actually happens when you remove a noindex tag?

Removing a noindex tag triggers no automatic notification to Google. The search engine must recrawl the page to detect the change, then process this information through its indexing pipeline. This process is part of the regular crawl cycle, which varies considerably from one URL to another.

In practice, a page that is crawled daily may be reindexed within a few days, while a peripheral URL may wait weeks. Crawl speed is not uniform across a site: Google allocates its crawl budget differently from one section to another.
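Before waiting on Google, it is worth confirming that the fix is actually live on the pages themselves. Here is a minimal sketch in Python, assuming you already have the HTML and the response headers of a page in hand (fetching is left to you). The function name `has_noindex` is hypothetical. It checks the two usual places a noindex can hide: the robots meta tag and the `X-Robots-Tag` HTTP header.

```python
from html.parser import HTMLParser

# "none" is shorthand for "noindex, nofollow", so it blocks indexing too.
BLOCKING = {"noindex", "none"}


class _RobotsMetaParser(HTMLParser):
    """Collects directives from <meta name="robots"> and <meta name="googlebot">."""

    def __init__(self):
        super().__init__()
        self.directives = []

    def handle_starttag(self, tag, attrs):
        if tag != "meta":
            return
        attr = {name: (value or "") for name, value in attrs}
        if attr.get("name", "").lower() in ("robots", "googlebot"):
            content = attr.get("content", "")
            self.directives.extend(d.strip().lower() for d in content.split(","))


def has_noindex(html, headers=None):
    """Return True if the page still carries a noindex directive.

    Limitation of this sketch: X-Robots-Tag values scoped to a user agent
    (for example "googlebot: noindex") are not parsed.
    """
    parser = _RobotsMetaParser()
    parser.feed(html)
    found = set(parser.directives)
    for name, value in (headers or {}).items():
        if name.lower() == "x-robots-tag":
            found.update(d.strip().lower() for d in value.split(","))
    return bool(found & BLOCKING)
```

Run it over a sample of the affected templates after deployment: if it still returns True anywhere, Google will keep seeing the noindex no matter how often it recrawls.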

Why does this slowness pose a problem for SEO professionals?

Because a large-scale noindex error (a faulty template, a configuration mistake) can keep hundreds or thousands of pages out of the index for weeks after the fix. The damage outlasts the initial bug by a wide margin.

Google recommends patience, but offers no lever to speed up processing. The tools for requesting a recrawl (URL Inspection, XML sitemaps) have only a marginal effect on large volumes. That is frustrating when organic traffic is collapsing.

What factors influence this recognition delay?

- Usual crawl frequency of the affected section (history, depth, popularity)

- Server load and crawl budget: Google won't push harder if the site shows signs of slowing down

- Perceived content quality: pages historically deemed uninteresting are recrawled less frequently

- Link and popularity signals: internal and external links, mentions, and traffic can accelerate recrawl

- Technical architecture: response time, stability, presence of an up-to-date sitemap

SEO Expert opinion

Is this statement consistent with real-world observations?

Yes, completely. We regularly observe delays of several weeks between fixing a noindex and complete reindexing, especially on sites with a medium or low crawl budget. This is not an SEO urban legend: it's documented in dozens of client cases.

The problem is that Google provides no concrete metrics. How long will it take? Impossible to predict: reports range from 3 days to 2 months. [To verify] It is unclear whether internal thresholds at Google trigger priority recrawling once it detects a large structural change.

What nuances should be added to this recommendation?

"Being patient" is not a strategy. You can take action to reduce the waiting time, though with no guarantee of immediate success. Submitting URLs via Google Search Console (URL Inspection) sometimes speeds up processing of individual pages, but it's ineffective beyond a few dozen pages.

Updating the XML sitemap and resubmitting it can signal a structural change. Temporarily increasing internal linking to the affected pages improves their crawl priority. Let's be honest: none of these tactics force Google to instantly recrawl thousands of URLs.

In what cases does this delay become critical?

For an e-commerce site in peak season, waiting 3 weeks for Google to reindex 10,000 accidentally noindexed product pages can mean massive revenue losses. The same goes for a news outlet: recent articles that stay out of the index lose all their value.

Practical impact and recommendations

What should you do immediately after fixing an accidental noindex?

Document the incident: list the affected URLs, the date of the fix, and before/after metrics (impressions and clicks in Google Search Console). This will serve as a reference for tracking the gradual recovery.
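To make that tracking concrete, here is a small sketch in Python. It assumes you export clicks and impressions from Search Console yourself; the `Snapshot` structure and the two helper functions are hypothetical names, not part of any Google tool.

```python
from dataclasses import dataclass
from datetime import date


@dataclass
class Snapshot:
    """Metrics for the affected URLs on a given day (exported by hand)."""
    day: date
    clicks: int
    impressions: int


def recovery_ratio(baseline, current):
    """Fraction of pre-incident clicks recovered (1.0 means fully back)."""
    if baseline.clicks == 0:
        return 0.0
    return current.clicks / baseline.clicks


def days_since_fix(fix_date, today):
    """Number of days elapsed since the noindex was removed."""
    return (today - fix_date).days
```

Logging one snapshot per week next to the days elapsed since the fix gives you a recovery curve you can show to a client or a manager instead of a vague "it's coming back".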

Resubmit the updated XML sitemap via Search Console. Run the critical URLs (high historical traffic, high conversion) through URL Inspection and request indexing to flag them as a priority. Don't expect miracles, but it costs nothing.
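If you need to regenerate the sitemap for the repaired URLs, the following Python sketch shows one way to do it with the standard library. The function name `build_sitemap` and the example URL are assumptions for illustration. The `lastmod` value should reflect the real date of the fix, since an inaccurate date is unlikely to help.

```python
import xml.etree.ElementTree as ET

# Namespace defined by the sitemaps.org protocol.
SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_sitemap(entries):
    """Build a sitemap from (url, lastmod) pairs, lastmod as YYYY-MM-DD."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for url, lastmod in entries:
        node = ET.SubElement(urlset, "url")
        ET.SubElement(node, "loc").text = url
        ET.SubElement(node, "lastmod").text = lastmod
    # Serialize as UTF-8 bytes with an XML declaration, then return text.
    data = ET.tostring(urlset, encoding="utf-8", xml_declaration=True)
    return data.decode("utf-8")


if __name__ == "__main__":
    # Hypothetical URL and date, for illustration only.
    print(build_sitemap([("https://example.com/products/shoe-1", "2025-03-27")]))
```

A dedicated sitemap containing only the affected URLs also makes it easier to follow their indexing status separately in Search Console.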

How to accelerate recrawl without violating guidelines?

- Strengthen internal linking to repaired pages from the homepage or important hubs

- Publish fresh content on these pages (date updates, added sections) to signal activity

- Verify that crawl budget isn't wasted elsewhere (facets, unnecessary URL parameters, redirect chains)

- Monitor server logs to observe Googlebot visits and identify neglected sections

- Use IndexNow (Bing/Yandex) in parallel if your traffic doesn't depend exclusively on Google
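For the log monitoring point, here is a rough sketch in Python that counts Googlebot requests per top-level section. It assumes your server writes the common "combined" access log format; the function name `googlebot_hits_by_section` is hypothetical, and the regular expression will need adjusting if your log format differs.

```python
import re
from collections import Counter

# Combined log format:
# ip - - [time] "METHOD path HTTP/x" status size "referer" "user-agent"
LOG_LINE = re.compile(
    r'^\S+ \S+ \S+ \[[^\]]+\] "(?:GET|HEAD|POST) (?P<path>\S+) [^"]*" '
    r'\d{3} \S+ "[^"]*" "(?P<agent>[^"]*)"'
)


def googlebot_hits_by_section(lines):
    """Count requests whose user agent mentions Googlebot, per first path segment."""
    hits = Counter()
    for line in lines:
        match = LOG_LINE.match(line)
        if not match or "Googlebot" not in match.group("agent"):
            continue
        path = match.group("path").split("?", 1)[0]  # drop the query string
        segment = path.strip("/").split("/", 1)[0]   # "" for the homepage
        hits["/" + segment] += 1
    return hits
```

Comparing these counts week over week shows which repaired sections Googlebot has come back to and which ones it is still ignoring. Keep in mind that the user agent string can be spoofed, so this sketch only gives an approximation unless you also verify the requesting IPs.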

What mistakes should you avoid during the waiting phase?

Don't make large changes to other technical parameters at the same time (URL structure, canonicals, redirects). Doing so would make it harder to diagnose what is working and what is not. Stay focused on the noindex and its consequences.

Avoid frantically resubmitting the same URLs via URL Inspection every hour. Repeated requests for the same URL won't get it crawled any faster, and they eat into the submission quota. One submission per URL is sufficient.

❓ Frequently Asked Questions

How long does Google take on average to recognize the removal of a noindex?

Does submitting a URL via Google Search Console really speed up the process?

Should you resubmit the XML sitemap after fixing a noindex?

Why are some pages reindexed quickly and others not?

Is there a way to force Google to immediately recrawl thousands of pages?

🎥 From the same video 17

Other SEO insights extracted from this same Google Search Central video · published on 27/03/2025
