Official statement

Other statements from this video 13 ▾

- □ Le SEO technique est-il vraiment encore indispensable pour le référencement ?

- □ Search Console est-elle vraiment efficace pour diagnostiquer vos problèmes SEO ?

- □ Pourquoi Google privilégie-t-il systématiquement la page d'accueil dans son processus d'indexation ?

- □ La duplication de contenu provient-elle vraiment toujours de copié-collé exact ?

- □ Faut-il vraiment sacrifier le volume de trafic au profit de la pertinence ?

- □ Les feedbacks utilisateurs sont-ils plus révélateurs que le trafic pour juger la qualité d'une page ?

- □ La qualité SEO se résume-t-elle vraiment à aider l'utilisateur à accomplir sa tâche ?

- □ Faut-il vraiment miser sur une perspective unique pour ranker dans une niche saturée ?

- □ Faut-il vraiment supprimer les pages à faible trafic de votre site ?

- □ Faut-il vraiment fusionner et rediriger du contenu régulièrement pour améliorer son SEO ?

- □ Faut-il vraiment traiter toutes les erreurs d'exploration de la même manière ?

- □ Faut-il vraiment aligner le title et le H1 pour performer en SEO ?

- □ Faut-il utiliser l'IA générative pour rédiger ses contenus SEO ?

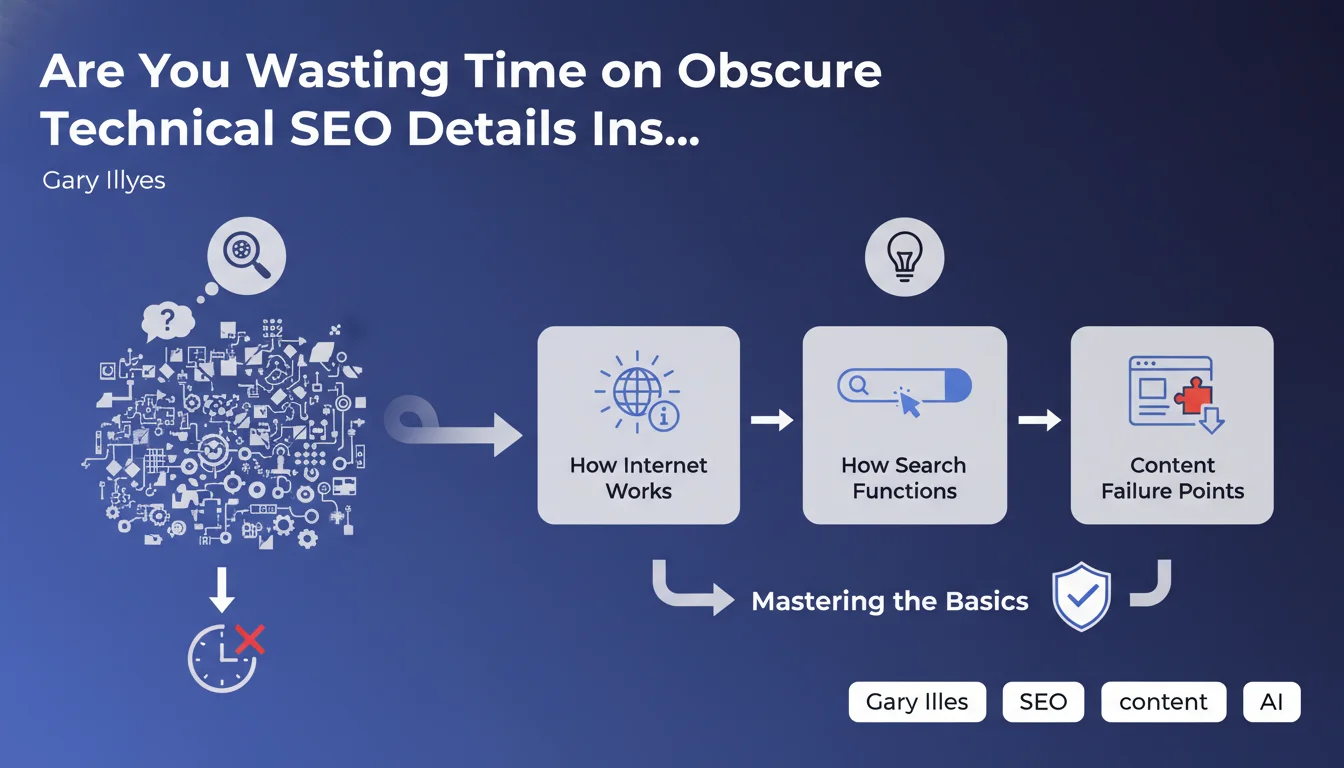

Gary Illyes reminds us that technical fundamentals (how the internet works, how crawling functions, how indexation happens) take priority over optimizing esoteric details. The recommended approach is to methodically identify where the process fails rather than pile up micro-optimizations. It is a return to basics that calls the relevance of certain advanced practices into question.

What you need to understand

What Does Google Mean by "Strange Technical Details"?

Google is targeting here the marginal optimizations that some SEOs spend disproportionate time on: micro-adjustments of obscure tags, over-optimization of exotic server parameters, endless debates about secondary HTML attributes.

The message is clear. Before fine-tuning details, you must master the complete journey (DNS, server, crawling, rendering, indexation, ranking). If a page isn't indexed, optimizing its schema markup will do nothing for its visibility in search.

Why This Insistence on the "Basics"?

Because the majority of SEO problems stem from fundamental errors: misconfigured robots.txt, catastrophic server response times, JavaScript content not being rendered, broken canonicalization.
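As an illustration of the first error in that list, here is a minimal sketch that tests a set of URLs against a robots.txt file using Python's standard `urllib.robotparser`. The robots.txt content and the example.com URLs are invented for the example. Note also that Python's parser applies the first matching rule, whereas Google documents a most-specific-rule approach, so treat the result as an approximation rather than as Googlebot's exact behavior.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt: the Disallow prefix is broader than intended,
# because "/products" also matches "/products-guide".
ROBOTS_TXT = """\
User-agent: *
Disallow: /products
"""


def blocked_urls(robots_txt: str, urls: list[str], user_agent: str = "Googlebot") -> list[str]:
    """Return the URLs that this robots.txt forbids for the given user agent."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [url for url in urls if not parser.can_fetch(user_agent, url)]


# Invented URLs, for illustration only.
URLS = [
    "https://example.com/blog/post",
    "https://example.com/products/shoes",
    "https://example.com/products-guide",
]

if __name__ == "__main__":
    for url in blocked_urls(ROBOTS_TXT, URLS):
        print("blocked:", url)
```

Running a check like this over the list of strategic URLs is a quick way to catch a prefix rule that blocks more than it was meant to.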

Google likely observes that too many sites neglect these foundations while obsessing over second-order optimizations. The gap between effort invested and impact achieved is often disproportionate.

How Do You Identify Where the Process Fails?

The methodical approach consists of working through the chain in order: Can Googlebot access the page? Is the content visible in the rendered HTML? Is the page indexable? Does the content address a search intent?

Each step must be validated sequentially. If crawling fails, there's no point analyzing content. If indexation is blocked, on-page optimizations are superfluous.

- Systematically verify the complete chain: DNS → server → crawling → rendering → indexation → ranking

- Prioritize structural blockers before marginal optimizations

- Understand how the internet works (HTTP requests, DNS, CDN, caching) to diagnose effectively

- Use Search Console and server logs to identify breaking points

- Accept that detailed optimization will have zero impact if fundamentals are failing
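The sequential validation described above can be sketched as a small function that runs one check per stage, in pipeline order, and stops at the first failure. The stage names come from the chain listed above. The check functions are hypothetical stubs standing in for real probes (a DNS lookup, an HTTP request, a URL inspection), which are not shown here.

```python
from typing import Callable, Optional

# Stage order taken from the chain described in the article.
STAGES = ["dns", "server", "crawling", "rendering", "indexation", "ranking"]


def first_failing_stage(checks: dict[str, Callable[[], bool]]) -> Optional[str]:
    """Run the supplied checks in pipeline order and return the first failing stage."""
    for stage in STAGES:
        check = checks.get(stage)
        if check is None:
            continue  # no check supplied for this stage, skip it
        if not check():
            return stage  # later stages are not evaluated
    return None


# Hypothetical stubs: replace each lambda with a real probe.
example_checks = {
    "dns": lambda: True,
    "server": lambda: True,
    "crawling": lambda: True,
    "rendering": lambda: False,
    "indexation": lambda: False,
}

if __name__ == "__main__":
    print(first_failing_stage(example_checks))  # rendering
```

Stopping at the first failure mirrors the reasoning in the text: the indexation check never runs here, because the rendering problem has to be fixed first.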

SEO Expert opinion

Is This Statement Consistent with Real-World Observations?

Yes, but with a major caveat — Google is simplifying. In reality, the "basics" aren't always sufficient in ultra-competitive markets. When all players master the fundamentals, it's precisely those details that make the difference.

The problem is that many SEOs skip steps. They optimize microdata while their site has a 3-second response time. They debate the "decoding" attribute on images while 40% of their pages aren't indexed. This imbalance is what Google is pointing out.

In Which Cases Do Technical Details Become Decisive?

On technically solid sites, advanced optimizations can indeed generate measurable gains: crawl budget fine-tuning, Critical Rendering Path optimization, sophisticated prerendering strategies.

But these cases concern a minority of sites. For most, the ROI lies elsewhere: accessibility, raw performance, logical architecture, relevant content. One point to verify: Google provides no metric for where to draw the line between "basics" and "strange details".

What Risks Come With Following This Advice Too Literally?

Limiting yourself to the "basics" can lead to underestimating the importance of certain signals that Google now considers fundamental: Core Web Vitals, HTTPS, mobile-first indexing, structured data for certain search features.

Google's discourse remains deliberately vague about what qualifies as "basic" versus "strange detail". Result: everyone interprets it according to their level or interests. What's "weird" for Google might not be for an e-commerce site managing 100,000 products.

Practical impact and recommendations

What Should You Do Concretely After This Statement?

Start with a sequential technical audit: document each step of Googlebot's journey on your strategic pages. Use server logs to verify that crawling proceeds normally, and Search Console to detect indexation errors.
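For the server log part of the audit, a minimal sketch might look like the following. It assumes the common "combined" access log format, so the pattern needs adjusting for other formats, and the sample lines are invented. It also trusts the user-agent string, which can be spoofed. A stricter audit would confirm the requester with a reverse DNS lookup.

```python
import re
from collections import Counter

# Assumption: "combined" access log format. Adjust for your server's format.
LOG_PATTERN = re.compile(
    r'\S+ \S+ \S+ \[[^\]]+\] "(?P<method>\S+) (?P<path>\S+) [^"]*" '
    r'(?P<status>\d{3}) \S+ "[^"]*" "(?P<agent>[^"]*)"'
)


def googlebot_status_counts(lines):
    """Count HTTP status codes for requests whose user agent mentions Googlebot."""
    counts = Counter()
    for line in lines:
        match = LOG_PATTERN.match(line)
        if match and "Googlebot" in match.group("agent"):
            counts[match.group("status")] += 1
    return counts


# Invented sample lines, for illustration only.
SAMPLE_LOG = [
    '192.0.2.10 - - [21/Nov/2023:10:00:00 +0000] "GET /products/shoes HTTP/1.1" 200 5120 "-" '
    '"Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"',
    '192.0.2.10 - - [21/Nov/2023:10:00:05 +0000] "GET /old-page HTTP/1.1" 404 312 "-" '
    '"Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"',
    '203.0.113.7 - - [21/Nov/2023:10:00:09 +0000] "GET / HTTP/1.1" 200 2048 "-" '
    '"Mozilla/5.0 (Windows NT 10.0)"',
]

if __name__ == "__main__":
    print(dict(googlebot_status_counts(SAMPLE_LOG)))
```

A high share of 4xx or 5xx responses served to Googlebot on strategic pages points to a crawling problem that should be fixed before any on-page work.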

Next, prioritize ruthlessly. List all technical projects under consideration and rank them by their impact on the basic process (crawling, rendering, indexation). Everything that doesn't directly block one of these steps takes a back seat.
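One possible way to formalize that ranking is sketched below. The `Project` structure and the rule "earlier blocked stage first, non-blocking projects last" are assumptions made for illustration, not a method stated by Google, and the project names are invented.

```python
from dataclasses import dataclass
from typing import Optional

# Stages from the article, in pipeline order.
STAGE_ORDER = {"crawling": 0, "rendering": 1, "indexation": 2}


@dataclass
class Project:
    name: str
    blocks_stage: Optional[str]  # stage this issue blocks, or None if it blocks nothing


def prioritize(projects: list[Project]) -> list[Project]:
    """Sort projects so blockers of earlier stages come first and non-blockers last."""
    def sort_key(project: Project) -> int:
        return STAGE_ORDER.get(project.blocks_stage, len(STAGE_ORDER))
    return sorted(projects, key=sort_key)  # sorted() is stable: ties keep input order


# Invented backlog, for illustration only.
BACKLOG = [
    Project("Tune image decoding attribute", None),
    Project("Remove stray noindex on category pages", "indexation"),
    Project("Fix robots.txt blocking /products", "crawling"),
    Project("Render product descriptions without client-side JS", "rendering"),
]

if __name__ == "__main__":
    for project in prioritize(BACKLOG):
        print(project.name)
```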

What Mistakes Should You Avoid With This Advice?

Don't confuse "focusing on the basics" with "doing the bare minimum". The basics include complex subjects: crawl budget management on large sites, JavaScript rendering strategy, scalable information architecture.

Another trap is ignoring advanced optimizations entirely on the pretext that they're "obscure". If your site already masters the fundamentals, that's precisely when you should go further. Google's advice is mainly aimed at sites putting the cart before the horse.

How Can You Verify Your Site Respects These Priorities?

- Analyze server logs to confirm that Googlebot accesses important pages normally

- Verify in Search Console that the indexation rate of strategic pages is close to 100%

- Test actual rendering via the URL inspection tool (not just source code)

- Audit server response times and Core Web Vitals — if they're red, everything else waits

- Check the consistency of directives: robots.txt, meta robots, canonicals, XML sitemap

- Identify orphaned or poorly linked content — a page invisible to crawlers doesn't exist

- Ensure that critical resources (CSS, JS, fonts) aren't blocked from crawling
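The directive consistency check from the list above can be partly automated. The sketch below uses Python's standard `html.parser` to extract the meta robots directive and the canonical link from a page's HTML and reports anything that would keep the requested URL out of the index. It is a simplified illustration: it reads the HTML it is given (raw source unless you pass in rendered HTML), it ignores the `X-Robots-Tag` HTTP header, and the sample page is invented.

```python
from html.parser import HTMLParser


class DirectiveParser(HTMLParser):
    """Collect the meta robots content and the canonical URL from an HTML document."""

    def __init__(self):
        super().__init__()
        self.robots = None
        self.canonical = None

    def handle_starttag(self, tag, attrs):
        attributes = dict(attrs)
        if tag == "meta" and (attributes.get("name") or "").lower() == "robots":
            self.robots = (attributes.get("content") or "").lower()
        elif tag == "link" and (attributes.get("rel") or "").lower() == "canonical":
            self.canonical = attributes.get("href")


def indexability_notes(html: str, url: str) -> list[str]:
    """Return observations suggesting that `url` itself may not be indexed."""
    parser = DirectiveParser()
    parser.feed(html)
    notes = []
    if parser.robots and "noindex" in parser.robots:
        notes.append("meta robots contains noindex")
    if parser.canonical and parser.canonical != url:
        notes.append(f"canonical points to {parser.canonical}")
    return notes


# Invented page, for illustration only.
PAGE = """<html><head>
<meta name="robots" content="noindex, follow">
<link rel="canonical" href="https://example.com/products/shoes">
</head><body></body></html>"""

if __name__ == "__main__":
    for note in indexability_notes(PAGE, "https://example.com/products/shoes?color=red"):
        print(note)
```

A canonical pointing elsewhere is not necessarily an error, but combined with a sitemap entry or internal links targeting the same URL it signals contradictory directives worth resolving.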

❓ Frequently Asked Questions

What is a "strange technical detail" according to Google?

Are advanced optimizations (schema markup, prerendering) useless?

How do I know whether to focus on the basics or go further?

Will Google penalize sites that over-optimize details?

Does this statement change the SEO strategy to adopt?

Other SEO insights extracted from this same Google Search Central video · published on 21/11/2023
